Put simply, usability evaluation assesses the extent to which an interactive system is easy and pleasant to use. Things are not actually this simple, but let’s start by considering the following propositions about usability evaluation:

Usability is an inherent measurable property of all interactive digital technologies.

Human-Computer Interaction researchers and Interaction Design professionals have developed evaluation methods that determine whether or not an interactive system or device is usable.

Where a system or device is usable, usability evaluation methods also determine the extent of its usability, through the use of robust, objective and reliable metrics

Evaluation methods and metrics are thoroughly documented in the Human-Computer Interaction research and practitioner literature. People wishing to develop expertise in usability measurement and evaluation can read about these methods, learn how to apply them, and become proficient in determining whether or not an interactive system or device is usable, and if so, to what extent.

The above propositions represent an ideal. We need to understand where current research and practice fall short of this ideal, and to what extent. Where there are still gaps between ideals and realities, we need to understand how methods and metrics can be improved to close this gap. As with any intellectual endeavour, we should proceed with an open mind, and acknowledge that not only are some or all of the above propositions not true, but that they can never be so. We may have to close some doors here, but in doing so, we will be better equipped to open new ones, and even go through them.

15.1 From First World Oppression to Third World Empowerment

Usability has been a fundamental concept for Interaction Design research and practice, since the dawn of Human-Computer Interaction (HCI) as an inter-disciplinary endeavour. For some, it was and remains HCI’s core concept. For others, it remains important, but only as one of several key concerns for interaction design.

It would be good to start with a definition of usability, but we are in contested territory here. Definitions will be presented in relation to specific positions on usability. You must choose one that fits your design philosophy. Three alternative definitions are offered below.

It would also be good to describe how usability is evaluated, but alternative understandings of usability result in different practices. Professional practice is very varied, and much does not generalise from one project to the next. Evaluators must choose how to evaluate. Evaluations have to be designed, and designing requires making choices.

15.1.1 The Origins of HCI and Usability

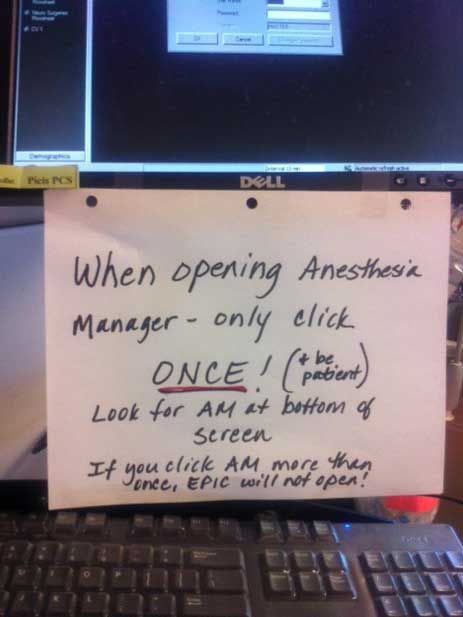

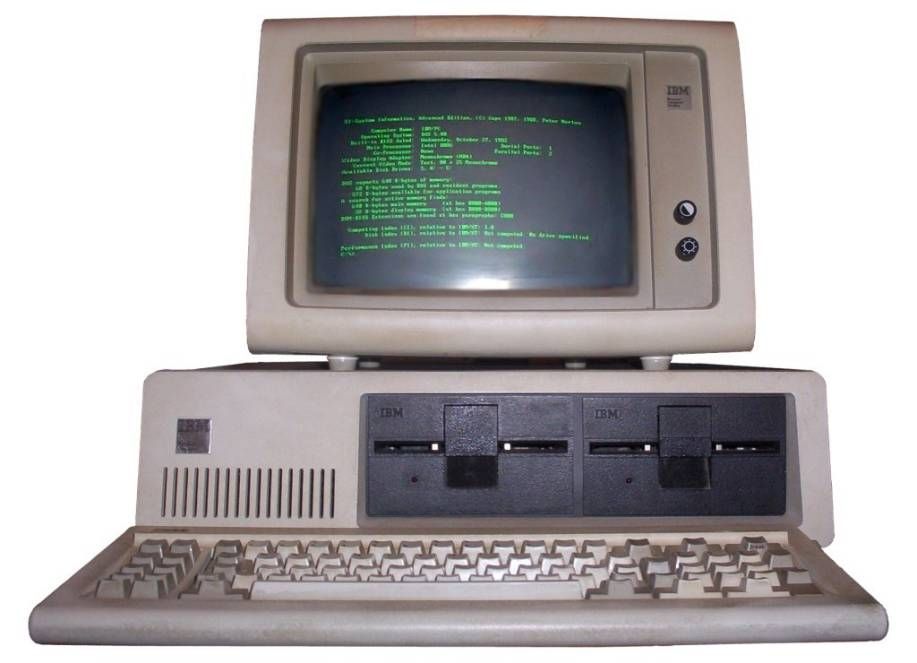

HCI and usability have their origins in the falling prices of computers in the 1980s, when for the first time, it was feasible for many employees to have their own personal computer (a.k.a. PC). For the first three decades of computing, almost all users were highly trained specialists of expensive centralised equipment. A trend towards less well trained users began in the 1960s with the introduction of timesharing and minicomputers. With the use of PCs in the 1980s, computer users increasingly had no, or only basic, training on operating systems and applications software. However, software design practices continued to implicitly assume knowledgeable and competent users, who would be familiar with technical vocabularies and systems architectures, and also possess an aptitude for solving problems arising from computer usage. Such implicit assumptions rapidly became unacceptable. For the typical user, interactive computing became associated with constant frustrations and consequent anxieties. Computers were obviously too hard to use for most users, and often absolutely unusable. Usability thus became a key goal for the design of any interactive software that would not be used by trained technical computer specialists. Popular terms such as “user-friendly” entered everyday use. Both usability and user-friendliness were initially understood to be a property of interactive software. Software either was usable or not. Unusable software could be made usable through re-design.

Author/Copyright holder: Courtesy of Boffy b. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Jeremy Banks. Copyright terms and licence: CC-Att-2 (Creative Commons Attribution 2.0 Unported).

Author/Copyright holder: Courtesy of Berkeley Lab. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Figure 15.1 A-B-C: The Home Personal Computer (PC) and Associated Peripherals is Now an Everyday Sight in Homes Worldwide. Usability became a critical issue with the PC’s introduction.

15.1.2 From Usability to User Experience via Quality in Use

During the 1990s, more sophisticated understandings of usability shifted from an all-or-nothing binary property to a continuum spanning different extents of usability. At the same time, the focus of HCI shifted to contexts of use (Cockton 2004). Usability ceased to be HCI’s dominant concept, with research increasingly focused on the fit between interactive software and its surrounding usage contexts. Quality in use no longer appeared to be a simple issue of how inherently usable an interactive system was, but how well it fitted its context of use. Quality in use became a preferred alternative term to usability in international standards work, since it avoided implications of usability being an absolute context-free invariant property of an interactive system. Around the turn of the century, the rise of networked digital media (e.g., web, mobile, interactive TV, public installations) added novel emotional concerns for HCI, giving rise to yet another more attractive term than usability: user experience.

Current understandings of usability are thus different from those from the early days of HCI in the 1980s. Since then, ease of use has improved through both attention to interaction design and improved levels of IT literacy across much of the population in advanced economies. Familiarity with basic computer operations is now widespread, as evidenced by terms such as “digital natives” and “digital exclusion”, which would have had little traction in the 1980s. Usability is no longer automatically the dominant concern in interaction design. It remains important, with frustrating experiences of difficult-to-use digital technologies still commonplace. Poor usability is still with us, but we have moved on from Thomas Landauer’s 1996 Trouble with Computers (Landauer 1996). When PCs, mobile phones and the internet are instrumental in major international upheavals such as the Arab Spring of 2011, the value of digital technologies can massively eclipse their shortcomings.

15.1.3 From Trouble with Computers to Trouble from Digital Technologies

Readers from developing countries can today experience Landauer’s Trouble with Computers as the moans of oversensitive poorly motivated western users. On 26th January 1999, a "hole in the wall" was carved at the NIIT premises in New Delhi. Through this hole, a freely accessible computer was made available for people in the adjoining slum of Kalkaji. It became an instant hit, especially with children who, with no prior experience, learnt to use the computer on their own. This prompted NIIT’s Dr. Mitra to propose the following hypothesis:

The acquisition of basic computing skills by any set of children can be achieved through incidental learning provided the learners are given access to a suitable computing facility, with entertaining and motivating content and some minimal (human) guidance

-- http://www.hole-in-the-wall.com/Beginnings.html

There is a strong contrast here with the usability crisis of the 1980s. Computers in 1999 were easier to use than those from the 1980s, but they still presented usage challenges. Nevertheless, residual usability irritations have limited relevance for this century’s slum children in Kalkaji.

The world is complex, what matters to people is complex, and digital technologies are diverse. In the midst of this diverse complexity, there can't be a simple day of judgement when digital technologies are sent to usability heaven or unusable hell.

The story of usability is a perverse journey from simplicity to complexity. Digital technologies have evolved so rapidly that intellectual understandings of usability have never kept pace with the realities of computer usage. The pain of old and new world corporations struggling to secure returns on investment in IT in the 1980s has no rendezvous with the use of social media in the struggles for democracy in third world dictatorships. Yet we cannot simply discard the concept of usability and move on. Usage can still be frustrating, annoying, unnecessarily difficult and even impossible, even for the most skilled and experienced of users.

Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 15.2: NIIT’s "hole in the wall" Computer in New Delhi

Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 15.3: NIIT’s "hole in the wall" Computer in New Delhi

15.1.4 From HCI's sole concern to an enduring important factor in user experience

This encyclopaedia entry is not a requiem for usability. Although now buried under broader layers of quality in use and user experience, usability is not dead. For example, I provide some occasional IT support to my daughter via SMS. Once, I had to explain how to force the restart of a recalcitrant stalled laptop. Her last message to me on her problem was:

It's fixed now! I didn't know holding down the power button did something different to just pressing it.

Given the hidden nature of this functionality (a short press hibernates many laptops), it is no wonder that my daughter was unaware of the existence of a longer ‘holding down’ action. Also, given the rare occurrences of a frozen laptop, my daughter would have had few chances to learn. She had to rely on my knowledge here. There is little she could have known herself without prior experience (e.g., of iPhone power down).

Author/Copyright holder: Courtesy of Rico Shen. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 15.4: Holding or Pressing? Who’s to Know?

The enduring realities of computer use that usability seeks to encompass remain real and no less potentially damaging to the success of designs today than over thirty years ago. As with all disciplinary histories, the new has not erased the old, but instead, like geological strata, the new overlies the old, with outcrops of usability still exposed within the wider evolving landscape of user experience. As in geology, we need to understand the present intellectual landscape in terms of its underlying historical processes and upheavals.

What follows is thus not a journey through a landscape, but a series of excavations that reveal what usability has been at different points in different places over the last three decades. With this in place, attention is refocused on current changes in the interaction design landscape that should give usability a stable place within a broader understanding of designing for human values (Harper et al. 2008). But for now, let us begin at the beginning, and from there take a whistle-stop tour of HCI history to reveal unresolved tensions over the nature of usability and its relation to interaction design.

15.2 From Usability to User Experience - Tensions and Methods

The need to design interactive software that could be used with a basic understanding of computer hardware and operating systems was first recognised in the 1970s, with pioneering work within software design by Fred Hansen from Carnegie Mellon University (CMU), Tony Wasserman from University of California, San Francisco (UCSF), Alan Kay from Xerox Palo Alto Research Center (PARC), Engel and Granda from IBM, and Pew and Rollins from BBN Technologies (for a review of early HCI work, see Pew 2002). This work took several approaches, from detailed design guidelines to high level principles for both software designs and their development processes. It brought together knowledge and capabilities from psychology and computer science. The pioneering group of individuals here was known as the Software Psychology Society, beginning in 1976 and based in the Washington DC area (Shneiderman 1986). This collaboration between academics and practitioners from cognitive psychology and computer science forged approaches to research and practice that remained the dominant paradigm in Interaction Design research for almost 20 years, and retained a strong hold for a further decade. However, this collaboration contained a tension on the nature of usability.

The initial focus was largely cognitive, focusing on causal relationships between user interface features and human performance, but with different views on how user interface features and human attributes would interact. If human cognitive attributes are fixed and universal, then user interface features can be inherently usable or unusable, making usability an inherent binary property of interactive software, i.e., an interactive system simply is or is not usable. Software could be inherently usable by conformance to guidelines and principles that could be discovered, formulated and validated by psychological experiments. However, if human cognitive attributes vary not only between individuals, but across different settings, then usability becomes an emergent property that depends not only on features and qualities of an interactive system, but also on who is using it, and on what they are trying to do with it. The latter position was greatly strengthened in the 1990s by the “turn to the social” (Rogers et al. 1994). However, much of the intellectual tension here was defused as HCI research spread out across a range of specialist communities focused on the Association for Computing Machinery’s conferences such as the ACM Conference on Computer Supported Cooperative Work (CSCW) from 1986 or the ACM Symposium on User Interface Software and Technology (UIST) from 1988. Social understandings of usability became associated with CSCW, and technological ones with UIST.

Psychologically-based research on usability methods in major conferences remained strong into the early 1990s. However, usability practitioners became dissatisfied with academic research venues, and the first UPA (Usability Professionals Association) conference was organised in 1992. This practitioner schism happened only 10 years after the Software Psychology Society had co-ordinated a conference in Gaithersburg, from which the ACM CHI conference series emerged. This steadily removed much applied usability research from the view of mainstream HCI researchers. This separation has been overcome to some extent by the UPA’s open access Journal of Usability Studies, which was inaugurated in 2005.

Author/Copyright holder: Ben Shneiderman and Addison-Wesley. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 15.5: Ben Shneiderman, Software Psychology Pioneer, Authored the First HCI Textbook

15.2.1 New Methods, Damaged Merchandise and a Chilling Fact

There is thus a dilemma at the heart of the concept of usability: is it a property of systems or a property of usage? A consequence of 1990s fragmentation within HCI research was that such important conceptual issues were brushed aside in favour of pragmatism amongst those researchers and practitioners who retained a specialist interest in usability. By the early 1990s, a range of methods had been developed for evaluating usability. User Testing (Dumas and Redish 1993) was well established by the late 1980s, as essentially a variant of psychology experiments with only dependent variables (the interactive system being tested became the independent constant). Discount methods included rapid low cost user testing, as well as inspection methods such as Heuristic Evaluation (Nielsen 1994). Research on model-based methods such as the GOMS model (Goals, Operators, Methods, and Selection rules - John and Kieras 1996) continued, but with mainstream publications becoming rarer by 2000.

With a choice of inspection, model-based and empirical (e.g., user testing) evaluation methods, questions arose as to which evaluation method was best and when and why. Experimental studies attempted to answer these questions by treating evaluation methods as independent variables in comparison studies that typically used problem counts and/or problem classifications as dependent variables. However, usability methods are too incompletely specified to be consistently applied, letting Wayne Gray and Marilyn Salzman invalidate several key studies in their Damaged Merchandise paper of 1998. Commentaries on their paper failed to undo the damage of the Damaged Merchandise charge, with further papers in the first decade of this century adding more concerns over not only method comparison, but the validity of usability methods themselves. Thus in 2001, Morten Hertzum and Niels Jacobsen published their “chilling fact” about use of usability methods: there are substantial evaluator effects. This should not have surprised anyone with a strong grasp of Gray and Salzman’s critique, since inconsistencies in usability method use make valid comparisons close to impossible in formal studies, and they are even more extensive in studies that attempt no control.
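To make the idea of an evaluator effect concrete, the sketch below computes one common style of agreement measure: the average overlap between the sets of problems reported by each pair of evaluators. This is an illustrative assumption, not a reproduction of Hertzum and Jacobsen's analysis; the function name `any_two_agreement` and the problem identifiers are hypothetical.

```python
from itertools import combinations


def any_two_agreement(problem_sets):
    """Average overlap (intersection / union) across all pairs of evaluators."""
    pairs = list(combinations(problem_sets, 2))
    if not pairs:
        raise ValueError("need at least two evaluators")
    overlaps = []
    for a, b in pairs:
        union = a | b
        # Two evaluators who both report nothing are treated as agreeing fully.
        overlaps.append(len(a & b) / len(union) if union else 1.0)
    return sum(overlaps) / len(overlaps)


# Hypothetical data: problem identifiers reported by three evaluators
# who inspected the same system with the same method.
evaluators = [
    {"P1", "P2", "P3"},
    {"P2", "P3", "P4"},
    {"P3", "P5"},
]

agreement = any_two_agreement(evaluators)
print(f"any-two agreement: {agreement:.2f}")  # prints "any-two agreement: 0.33"
```

In this made-up example, three evaluators using the same method agree on only about a third of the problems, which is the kind of result that makes problem counts an unreliable dependent variable in method comparison studies.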

Critical analyses by Gray and Salzman, and by Hertzum and Jacobsen, made pragmatic research on usability even less attractive for leading HCI journals and conferences. The method focus of usability research shrank, with critiques exposing not only the consequences of ambivalence over the causes of poor usability (system, user or both?), but also the lack of agreement over what was covered by the term usability.

Author/Copyright holder: Courtesy of kinnigurl. Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Figure 15.6: 2020 Usability Evaluation Method Medal Winners

15.2.2 We Can Work it Out: Putting Evaluation Methods in their (Work) Place

Research on usability and methods has since the late 2000s been superseded by research on user experience and usability work. User experience is a broader concept than usability, and moves beyond efficiency, task quality and vague user satisfaction to a wide consideration of cognitive, affective, social and physical aspects of interaction.

Usability work is the work carried out by usability specialists. Methods contribute to this work. Methods are not used in isolation, and should not be assessed in isolation. Assessing methods in isolation ignores the fact that usability work combines, configures and adapts multiple methods in specific project or organisational contexts. Recognition of this fact is reflected in an expansion of research focus from usability methods to usability work, e.g., in PhDs (Dominic Furniss, Tobias Uldall-Espersen, Mie Nørgaard) associated with the European MAUSE project (COST Action 294, 2004-2009). It is also demonstrated in the collaborative research of MAUSE Working Group 2 (Cockton and Woolrych 2009).

A focus on actual work allows realism about design and evaluation methods. Methods are only one aspect of usability work. They are not a separate component of usability work that has deterministic effects, i.e., effects that are guaranteed to occur and be identical across all project and organisational contexts. Instead, broad evaluator effects are to be expected, due to the varying extent and quality of design and evaluation resources in different development settings. This means that we cannot and should not assess usability evaluation methods in artificial isolated research settings. Instead, research should start with the concrete realities of usability work, and within that, research should explore the true nature of evaluation methods and their impact.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 15.7: Usability Expert at Work: Alan Woolrych at the University of Sunderland using a minimal Mobile Usability Lab setup of webcam with audio recording plus recording of PC screen and sound, complemented by an eye tracker to his right

15.2.3 The Long and Winding Road: Usability's Journey from Then to Now

Usability is now one aspect of user experience, and usability methods are now one loosely pre-configured area of user experience work. Even so, usability remains important. The value of the recent widening focus to user experience is that it places usability work in context. Usability work is no longer expected to establish its value in isolation, but is instead one of several complementary contributors to design quality.

Usability as a core focus within HCI has thus passed through phases of psychological theory, methodological pragmatism and intellectual disillusionment. More recent foci on quality in use and user experience make it clear that Interaction Design cannot just focus on features and attributes of interactive software. Instead, we must focus on the interaction of users and software in specific settings. We cannot reason solely in terms of whether software is inherently usable or not, but instead have to consider what does or will happen when software is used, whether successfully, unsuccessfully, or some mix of both. Once we focus on interaction, a wider view is inevitable, favouring a broad range of concerns over a narrow focus on software and hardware features.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 15.8 A-B: What’s Sailable: A Boat Alone or a Crewed Boat in Specific Sea Conditions? A Similar Question Arises for Usable Systems

Many of the original concerns of 1980s usability work are as valid today as they were 30 years ago. What has changed is that we no longer expect usability to be the only, or even the dominant, human factor in the success of interactive systems. What has not changed is the potential confusion over what usability is, which has existed from the first days of HCI, i.e., whether software or usage is usable. While this may feel like some irrelevant philosophical hair-splitting, it has major consequences for usability evaluation. If software can be inherently usable, then usability can be evaluated solely through direct inspection. If usability can only be established by considering usage, then indirect inspection methods (walkthroughs) or empirical user testing methods must be used to evaluate.

15.2.4 Usability Futures: From Understanding Tensions to Resolving Them

The form of the word ‘usability’ implies a property that requires an essentialist position, i.e., one that sees properties and attributes as being inherent in objects, both natural and artificial (in Philosophy, this is called an essentialist or substantivist ontology). A literal understanding of usability requires interactive software to be inherently usable or unusable. Although a more realistic understanding sees usability as a property of interactive use and not of software alone, it makes no sense to talk of use as being usable, just as it makes no sense to talk of eating being edible. This is why the term quality in use is preferred for some international standards, because this opens up a space of possible qualities of interactive performance, both in terms of what is experienced, and in terms of what is achieved. For example, an interaction can be ‘successful’, ‘worthwhile’, ‘frustrating’, ‘unpleasant’, ‘challenging’ or ‘ineffective’.

Much of the story of usability reflects a tension between the tight software view and the broader sociotechnical view of system boundaries. More abstractly, this is a tension between substance (essence) and relation, i.e., between inherent qualities of interactive software and emergent qualities of interaction. In philosophy, the position that relations are more fundamental than things in themselves characterises a relational ontology.

Ontologies are theories of being, existence and reality. They lead to very different understandings of the world. Technical specialists and many psychologists within HCI are drawn to essentialist ontologies, and seek to achieve usability predominantly through consideration of user interface features. Specialists with a broader human-focus are mostly drawn to relational ontologies, and seek to understand how contextual factors interact with user interface features to shape experience and performance. Each ontology occupies ground within the HCI landscape. Both are now reviewed in turn. Usability evaluation methods are then briefly reviewed. While tensions between these two positions have dominated the evolution of usability in principle and practice, we can escape the impasse. A strategy for escaping longstanding tensions within usability will be presented, and future directions for usability within user experience frameworks will be indicated in the closing section.

15.3 Locating Usability within Software: Guidelines, Heuristics, Patterns and ISO 9126

15.3.1 Guidelines for Usable User Interfaces

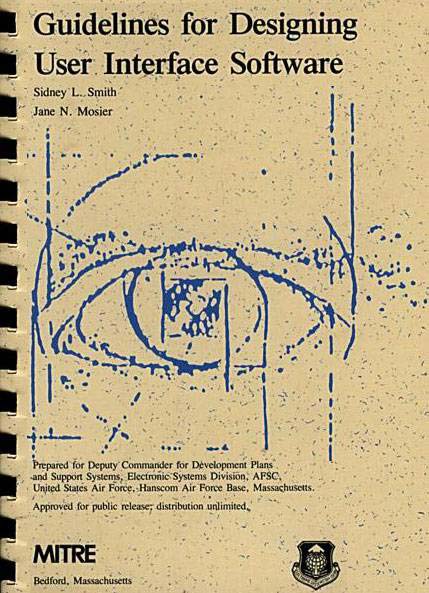

Much early guidance on usability came from computer scientists such as Fred Hansen from Carnegie Mellon University (CMU) and Tony Wasserman, then at University of California, San Francisco (UCSF). Computer science has been strongly influenced by mathematics, where entities such as similar or equilateral triangles have eternal absolute intrinsic properties. Computer scientists seek to establish similar inherent properties for computer programs, including ones that ensure usability for interactive software. Thus initial guidelines on user interface design incorporated a technocentric belief that usability could be ensured via software and hardware features alone. A user interface would be inherently usable if it conformed to guidelines on, for example, naming, ordering and grouping of menu options, prompting for input types, input formats and value ranges for data entry fields, error message structure, response time, and undoing capabilities. The following four example guidelines are taken from Smith and Mosier’s 1986 collection commissioned by the US Air Force (Smith and Mosier 1986):

1.0/4 + Fast Response

Ensure that the computer will acknowledge data entry actions rapidly, so that users are not slowed or paced by delays in computer response; for normal operation, delays in displayed feedback should not exceed 0.2 seconds.

1.0/15 Keeping Data Items Short

For coded data, numbers, etc., keep data entries short, so that the length of an individual item will not exceed 5-7 characters.

1.0/16 + Partitioning Long Data Items

When a long data item must be entered, it should be partitioned into shorter symbol groups for both entry and display.

Example

A 10-digit telephone number can be entered as three groups, NNN-NNN-NNNN.

1.4/12 + Marking Required and Optional Data Fields

In designing form displays, distinguish clearly and consistently between required and optional entry fields.

Figure 15.9: Four example guidelines taken from Smith and Mosier’s 1986 collection

25 years after the publication of the above guidance, there are still many contemporary web site data entry forms whose users would benefit from adherence to these guidelines. Even so, while following guidelines can greatly improve software usability, it cannot guarantee it.
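Guidelines of this kind are specific enough to be checked mechanically against a description of a form. The following is a minimal sketch under stated assumptions: the `Field` record, the function names and the violation labels are all hypothetical, and the thresholds are simply read off the four example guidelines in Figure 15.9 (taking 7 as the upper end of "5-7 characters").

```python
from dataclasses import dataclass
from typing import List, Optional

MAX_FEEDBACK_DELAY_S = 0.2  # guideline 1.0/4
MAX_ITEM_LENGTH = 7         # guideline 1.0/15, upper end of "5-7 characters"


@dataclass
class Field:
    """Hypothetical description of one data entry field."""
    name: str
    max_length: int
    group_sizes: Optional[List[int]] = None  # e.g. [3, 3, 4] for NNN-NNN-NNNN
    required_marked: bool = False            # is required/optional status shown?


def check_field(field: Field) -> List[str]:
    """Return the numbers of the guidelines this field appears to violate."""
    violations = []
    if field.max_length > MAX_ITEM_LENGTH:
        # A long item should be partitioned into shorter groups (1.0/16).
        groups = field.group_sizes or []
        partitioned = (
            sum(groups) == field.max_length
            and all(0 < size <= MAX_ITEM_LENGTH for size in groups)
        )
        if not partitioned:
            violations.append("1.0/16")
    if not field.required_marked:
        violations.append("1.4/12")
    return violations


def check_delay(delay_s: float) -> List[str]:
    """Flag displayed feedback that is slower than guideline 1.0/4 allows."""
    return ["1.0/4"] if delay_s > MAX_FEEDBACK_DELAY_S else []


def partition(item: str, group_sizes: List[int], sep: str = "-") -> str:
    """Display a long data item as shorter symbol groups."""
    if sum(group_sizes) != len(item):
        raise ValueError("group sizes must add up to the item length")
    parts, start = [], 0
    for size in group_sizes:
        parts.append(item[start:start + size])
        start += size
    return sep.join(parts)


phone = Field("phone", max_length=10, group_sizes=[3, 3, 4], required_marked=True)
account = Field("account", max_length=12)

print(check_field(phone))                    # []
print(check_field(account))                  # ['1.0/16', '1.4/12']
print(partition("5551234567", [3, 3, 4]))    # 555-123-4567
print(check_delay(0.35))                     # ['1.0/4']
```

A form that passes every such check conforms to the guidelines, but that is all the check establishes. It says nothing about who is filling in the form or why, which is the limit of any purely feature-based view of usability.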

Author/Copyright holder: Sidney L. Smith and Jane N. Mosier and The MITRE Corporation. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 15.10: This Book Contains More Guidelines Than Anyone Could Imagine

15.3.2 Manageable Guidance: Design Heuristics for Usable User Interfaces

My original paper copy of Smith and Mosier’s guidelines occupies 10cm of valuable shelf space. It is over 25 years old and I have never read all of it. I most probably never will. There are simply too many guidelines there to make this worthwhile (in contrast, I have read complete style guides for Windows and Apple user interfaces in the past).

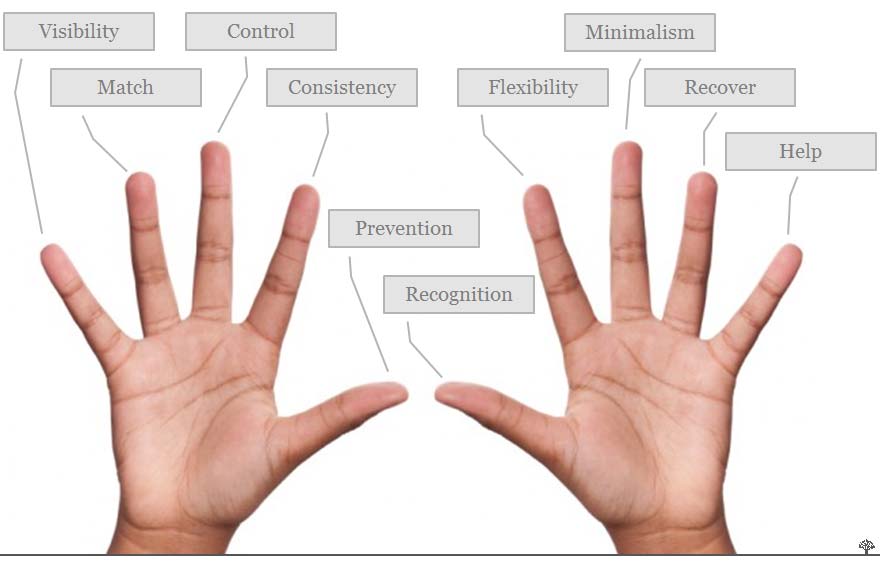

The bloat of guidelines collections did not remove the appeal of technocentric views of usability. Instead, hundreds of guidelines were distilled into ten heuristics by Rolf Molich and Jakob Nielsen. These were further assessed and refined into the final version used in Heuristic Evaluation (Nielsen 1994), an inspection method that examines software features for potential causes of poor usability. Heuristics generalise more detailed guidelines from collections such as Smith and Mosier. Many have a technocentric focus, e.g.:

Visibility of system status

The system should always keep users informed about what is going on, through appropriate feedback within reasonable time.

User control and freedom

Users often choose system functions by mistake and will need a clearly marked "emergency exit" to leave the unwanted state without having to go through an extended dialogue. Support undo and redo.

Error prevention

Even better than good error messages is a careful design which prevents a problem from occurring in the first place. Either eliminate error-prone conditions or check for them and present users with a confirmation option before they commit to the action.

Recognition rather than recall

Minimize the user's memory load by making objects, actions, and options visible. The user should not have to remember information from one part of the dialogue to another. Instructions for use of the system should be visible or easily retrievable whenever appropriate.

Flexibility and efficiency of use

Accelerators -- unseen by the novice user -- may often speed up the interaction for the expert user such that the system can cater to both inexperienced and experienced users. Allow users to tailor frequent actions.

Figure 15.11: Example heuristics originally developed in Molich and Nielsen 1990 and Nielsen and Molich 1990

Heuristic Evaluation became the most popular user-centred design approach in the 1990s, but has become less prominent with the move away from desktop applications. Quick and dirty user testing soon overtook Heuristic Evaluation (compare the survey of Venturi et al. 2006 with Rosenbaum et al. 2000).

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 15.12: One Heuristic for Each Digit from Nielsen

15.3.3 Invincible Intrinsics: Patterns and Standards Keep Usability Essential

Moves away from system-centric approaches within user-centred design have not signalled the end of usability methods that focus solely on software artefacts, with little or no attention to usage. This may be due to the separation of the usability communities (now user experience) from the software engineering profession. System-centred usability remains common in user interface pattern languages. For example, a pattern from Jenifer Tidwell updates Smith and Mosier style guidance for contemporary web designers (designinginterfaces.com/Input_Prompt).

Pattern: Input Prompt

Prefill a text field or dropdown with a prompt that tells the user what to do or type.

Figure 15.13: An example pattern from Jenifer Tidwell's Designing Interfaces

The 1991 ISO 9126 standard on Software Engineering - Product Quality was strongly influenced by the essentialist preferences of computer science, with usability defined as:

a set of [product] attributes that bear on the effort needed for use, and on the individual assessment of such use, by a stated or implied set of users.

This is the first of three definitions presented in this encyclopaedia entry. The attributes here are assumed to be software product attributes, rather than user interaction ones. However, the relational (contextual) view of usage favoured in HCI has gradually come to prevail. By 2001, ISO 9126 had been revised to define usability as:

(1’) the capability of the software product to be understood, learned, used and attractive to the user, when used under specified conditions

This revision remains product focused (essentialist), but the ‘when’ clause moved ISO 9126 away from a wholly essentialist position on usability by implicitly acknowledging the influence of a context of use (“specified conditions”) that extends beyond “a stated or implied set of users”.

In an attempt to align the technical standard ISO 9126 with the human factors standard ISO 9241 (see below), ISO 9126 was extended in 2004 by a fourth section on quality in use, resulting in an uneasy compromise between software engineers and human factors experts. This uneasy compromise persists, with the 2011 replacement standard for ISO 9126, ISO 25010, maintaining an essentialist view of usability. In ISO 25010, usability is both an intrinsic product quality characteristic and a subset of quality in use (comprising effectiveness, efficiency and satisfaction). As a product characteristic in ISO 25010, usability has the intrinsic subcharacteristics of:

Appropriateness recognisability

Learnability

Operability (degree to which a product or system has attributes that make it easy to operate and control - emphasis added)

User error protection

User interface aesthetics

ISO 25010 thus had to include a note that exposed the internal conflict between software engineering and human factors world views:

Usability can either be specified or measured as a product quality characteristic in terms of its subcharacteristics, or specified or measured directly by measures that are a subset of quality in use.

A similar note appears for learnability and accessibility. Within the world of software engineering standards, a mathematical world view clings hard to an essentialist position on usability. In HCI, where context has reigned for decades, this could feel incredibly perverse. However, despite HCI’s multi-factorial understanding of usability, which follows automatically from a contextual position, HCI evangelists’ anger over poor usability always focuses on software products. Even though users, tasks and contexts are all known to influence usability, only hardware or software should be changed to improve usability, endorsing the software engineers’ position within ISO 25010 (attributes make software easy to operate and control). Although HCI’s world view typically rejects essentialist monocausal explanations of usability, when getting angry on the user’s behalf, the software always gets the blame.

It should be clear that issues here are easy to state but harder to unravel. The stalemate in ISO 25010 indicates a need within HCI to give more weight to the influence of software design on usability. If users, tasks and contexts must not be changed, then the only thing that we can change is hardware and/or software. Despite the psychological marginalisation of designers’ experience and expertise when expressed in guidelines, patterns and heuristics, these can be our most critical resource for achieving usability best practice. We should bear this in mind as we move to consider HCI’s dominant contextual position on usability.

15.4 Locating Usability within Interaction: Contexts of Use and ISO Standards

The tensions within international standards could be seen within Nielsen’s Heuristics, over a decade before the 2004 ISO 9126 compromise. While the five sample heuristics in the previous section focus on software attributes, one heuristic focuses on the relationship between a design and its context of use (Nielsen 1994):

Match between system and the real world

The system should speak the users' language, with words, phrases and concepts familiar to the user, rather than system-oriented terms. Follow real-world conventions, making information appear in a natural and logical order.

This relating of usability to the ‘real world’ was given more structure in the ISO 9241-11 Human Factors standard, which related usability to the usage context as the:

Extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency and satisfaction in a specified context of use

This is the second of three definitions presented in this encyclopaedia entry. Unlike the initial and revised ISO 9126 definitions, it was not written by software engineers, but by human factors experts with backgrounds in ergonomics, psychology and similar.

ISO 9241-11 distinguishes three component factors of usability: effectiveness, efficiency, satisfaction. These result from multi-factorial interactions between users, goals, contexts and a software product. Usability is not a characteristic, property or quality, but an extent within a multi-dimensional space. This extent is evidenced by what people can actually achieve with a software product and the costs of these achievements. In practical terms, any judgement of usability is a holistic assessment that combines multi-faceted qualities into a single judgement.

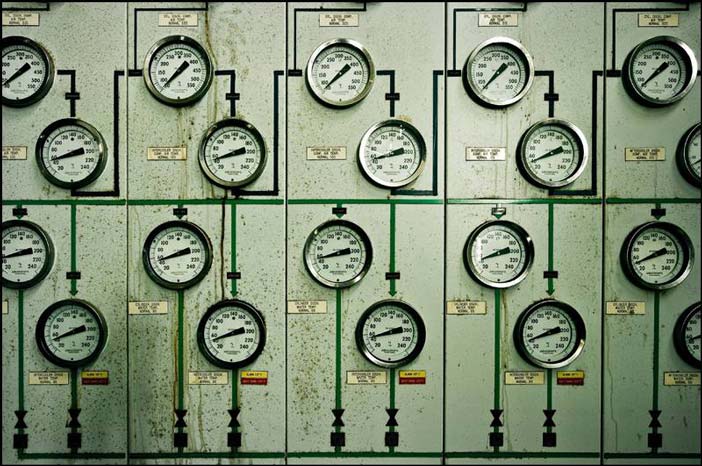

Such single judgements have limited use. For practical purposes, it is more useful to focus on separate specific qualities of user experience, i.e., the extent to which thresholds are met for different qualities. For example, a software product may not be deemed usable if key tasks cannot be performed in normal operating contexts within an acceptable time. Here, the focus would be on efficiency criteria. There are many usage contexts where time is limited. The bases for time limits vary considerably, and include physics (ballistics in military combat), physiology (medical trauma), chemistry (process control) or social contracts (newsroom print/broadcast deadlines).

Effectiveness criteria add to the complexity of quality thresholds. A military system may be efficient, but it is not effective if its use results in what is euphemistically called ‘collateral damage’, including ‘friendly fire’ errors. We can imagine trauma resuscitation software that enables timely responses, but leads to avoidable ‘complications’ (another domain euphemism) after a patient has been stabilised. A process control system may support timely interventions, but may result in waste or environmental damage that limits the effectiveness of operators’ responses. Similarly, a newsroom system may support rapid preparation of content, but could obstruct the delivery of high quality copy.

For satisfaction, usage could be both objectively efficient and effective, but cause uncomfortable user experiences that give rise to high rates of staff turnover (as common in call centres). Similarly, employees may thoroughly enjoy a fancy multimedia fire safety training title, but it could be far less effective (and thus potentially deadly) compared to the effectiveness of a boring instructional text-with-pictures version.
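As a rough illustration of keeping the three ISO 9241-11 factors separate rather than collapsing them into a single judgement, the following sketch summarises some hypothetical user test sessions and checks each factor against its own threshold. The record fields, the 1 to 7 satisfaction scale and every threshold value are assumptions made purely for illustration; none of them comes from the standard, and a real project would have to set its own.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One participant's attempt at one task (hypothetical record format)."""
    completed: bool      # did the participant achieve the goal?
    seconds: float       # time on task
    satisfaction: int    # self-reported rating, assumed 1 (low) to 7 (high)


def summarise(sessions):
    """Return one separate measure per ISO 9241-11 factor."""
    done = [s for s in sessions if s.completed]
    return {
        # Effectiveness: proportion of attempts that achieved the goal.
        "effectiveness": len(done) / len(sessions),
        # Efficiency: mean time of successful attempts (None if nobody succeeded).
        "efficiency": mean(s.seconds for s in done) if done else None,
        # Satisfaction: mean rating over all attempts.
        "satisfaction": mean(s.satisfaction for s in sessions),
    }


def check(summary, targets):
    """Compare each factor with its own project-specific target."""
    return {
        "effectiveness": summary["effectiveness"] >= targets["min_completion_rate"],
        "efficiency": summary["efficiency"] is not None
        and summary["efficiency"] <= targets["max_mean_seconds"],
        "satisfaction": summary["satisfaction"] >= targets["min_mean_satisfaction"],
    }


# Illustrative data and targets only.
sessions = [
    Session(True, 95.0, 3),
    Session(True, 110.0, 2),
    Session(True, 88.0, 3),
    Session(False, 240.0, 2),
]
targets = {
    "min_completion_rate": 0.75,
    "max_mean_seconds": 120.0,
    "min_mean_satisfaction": 4.0,
}

summary = summarise(sessions)
result = check(summary, targets)
print(summary)
print(result)
```

With this made-up data the effectiveness and efficiency targets are met while the satisfaction target is not, which is the kind of outcome a single combined score would hide.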

ISO 9241-11’s three factors of usability have recently become five in ISO 25010’s quality in use factors:

Effectiveness

Efficiency

Satisfaction

Freedom from risk

Context coverage

The two additional factors are interesting. Context coverage is a broader concept than the contextual fit in Nielsen’s Match between System and Real World heuristic (Nielsen 1994). It extends specified users and specified goals to potentially any aspect of a context of use. This should include all factors relevant to freedom from risk, so it is interesting to see this given special attention, rather than trusting effectiveness and satisfaction to do the work here. However, such piecemeal extensions within ISO 25010 open up the question of comprehensiveness and emphasis. For example, why are factors such as ease of learning either overlooked or hidden inside efficiency or effectiveness?

Author/Copyright holder: ISO and Lionel Egger. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 15.14: ISO Accessibility Standard Discussion

15.4.1 Contextual Coverage Brings Complex Design Agendas

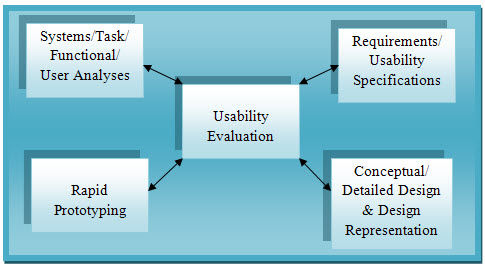

Relational positions on usability are inherently more complex than essentialist ones. The latter let interactive systems be inspected to assess their usability potential on the basis of their design features. Essentialist approaches remain attractive because evaluations can be fully resourced through guidelines, patterns and similar expressions of best practice for interaction design. Relational approaches require a more complex set of co-ordinated methods. As relational positions become more complex, as in the move from ISO 9241-11 to ISO 25010, a broader range of evaluation methods is required. Within the relational view, usability is the result of a set of complex interactions that manifests itself in a range of usage factors. It is very difficult to see how a single evaluation method could address all these factors. Whether or not this is possible, no such method currently exists.

Relational approaches to usability require a range of evaluation methods to establish its extent. Extent introduces further complexities, since all identified usability factors must be measured, then judgements must be made as to whether achieved extents are adequate. Here, usability evaluation is not a simple matter of inspection, but instead it becomes a complex logistical operation focused on implementing a design agenda.

An agenda is a list of things to be done. A design agenda is therefore a list of design tasks, which need to be managed within an embracing development process. There is an implicit design agenda in ISO 9241-11, which requires interaction designers to identify target beneficiaries, usage goals, and levels of efficiency, effectiveness and satisfaction for a specific project. Only then is detailed robust usability evaluation possible. Note that this holds for ISO 9241-11 and similar evaluation philosophies. It does not hold for some other design philosophies (e.g., Sengers and Gaver 2006) that give rise to different design agendas.

A key task on the ISO 9241-11 evaluation agenda is thus measuring the extent of usability through a co-ordinated set of metrics, which will typically mix quantitative and qualitative measures, often with a strong bias towards one or the other. However, measures only enable evaluation. To evaluate, measures need to be accompanied by targets. Setting such targets is another key task from the ISO 9241-11 evaluation agenda. This is often achieved through the use of generic severity scales. To use such resources, evaluators need to interpret them in specific project contexts. This indicates that re-usable evaluation resources are not complete re-usable solutions. Work is required to turn these resources into actionable evaluation tasks.

For example, the two most serious levels of Chauncey Wilson’s problem severity scale (Wilson 1999) are:

Level 1 - Catastrophic error causing irrevocable loss of data or damage to the hardware or software. The problem could result in large-scale failures that prevent many people from doing their work. Performance is so bad that the system cannot accomplish business goals.

Level 2 - Severe problem causing possible loss of data. User has no workaround to the problem. Performance is so poor that ... universally regarded as 'pitiful'.

Each severity level requires answers to questions about specific measures and contextual information, i.e., how should the following be interpreted in a specific project context: ‘many prevented from doing work’; ‘cannot accomplish business goals’; ‘performance regarded as pitiful’. These top two levels also require information about the software product: ‘loss of data’; ‘damage to hardware or software’; ‘no workaround’.

Wilson’s three further, lower levels add considerations such as: ‘wasted time’, ‘increased error or learning rates’, and ‘important feature not working as expected’. These all set a design agenda of questions that must be answered. Thus to know that performance is regarded as pitiful, we would need to choose to measure relevant subjective judgments. Other criteria are more challenging, e.g., how would we know whether time is wasted, or whether business goals cannot be accomplished? The first depends on values. The idea of ‘wasting’ time (like money) is specific to some cultural contexts, and also depends on how long tasks are expected to take with a new system, and how much time can be spent on learning and exploring. As for business goals, a business may seek, for example, to be seen as socially and environmentally responsible, but may not expect every feature of every corporate system to support these goals.

Once thresholds for severity criteria have been specified, it is not clear how designers can or should trade off factors such as efficiency, effectiveness and satisfaction against each other. For example, users may not be satisfied even when they exceed target efficiency and effectiveness, or conversely they could be satisfied even when their performance should not warrant that relative to design targets. Target levels thus guide rather than dictate the interpretation of results and how to respond to them.

Method requirements thus differ significantly between essentialist and relational approaches to usability. For high quality evaluation based on any relational position, not just ISO 9241-11’s, evaluators must be able to modify and combine existing re-usable resources for specific project contexts. Ideally, the re-usable resources would do most of the work here, resulting in efficient, effective and satisfying usability evaluation. If this is not the case, then high quality usability evaluation will present complex logistical challenges that require extensive evaluator expertise and project specific resources.

Author/Copyright holder: Simon Christen - iseemooi. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 15.15: Relational Approaches to Usability Require Multiple Measures

15.5 The Development of Usability Evaluation: Testing, Modelling and Inspection

Usability is a contested historical term that is difficult to replace. User experience specialists have to refer to usability, since it is a strongly established concept within the IT landscape. However, we need to exercise caution in our use of what is essentially a flawed concept. Software is not usable. Instead, software gets used, and the resulting user experiences are a composite of several qualities that are shaped by product attributes, user attributes and the wider context of use.

Now, squabbles over definitions will not necessarily impact practice in the ‘real world’. It is possible for common sense to prevail and find workarounds for what could well be semantic distractions with no practical import. However, when we examine usability evaluation methods, we do see that different conceptualisations of usability result in differences over the causes of good and poor usability.

Essentialist usability is causally homogeneous. This means that all causes of user performance are of the same type, i.e., due to technology. System-centred inspection methods can identify such causes.

Contextual usability is causally heterogeneous. This means that causes of user performance are of different types, some due to technologies, others due to some aspect(s) of usage contexts, but most due to interactions between both. Several evaluation and other methods may be needed to identify and relate a nexus of causes.

Neither usability paradigm (i.e., essentialist or relational) has resolved the question of relevant effects, i.e., what counts as evidence of good or poor usability, and thus there are few adequate methods here. Essentialist usability can pay scant attention to effects (Lavery et al. 1997): who cares what poor design will do to users, it’s bad enough that it’s poor design! Contextual usability has more focus on effects, but there is limited consensus on the sort of effects that should count as evidence of poor usability. There are many examples of what could count as evidence, but what actually should is left to a design team’s judgement.

Some methods can predict effects. The GOMS model (Goals, Operators, Methods, and Selection rules) predicts effects on expert error free task completion time, which is useful in some project contexts (Card et al. 1980, John and Kieras 1996). For example, external processes may require a task to be completed within a maximum time period. If predicted expert error free task completion time exceeds this, then it is highly probable that non-expert error prone task completion will take even longer. Where interactive devices such as in-car systems distract attention from the main task (e.g., driving), then time predictions are vital. Recent developments such as CogTool (Bellamy et al. 2011) have given a new lease of life to practical model-based evaluation in HCI. More powerful models than GOMS are now being integrated into evaluation tools (e.g., Salvucci 2009).
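A minimal sketch of the simplest member of the GOMS family, the Keystroke-Level Model, shows how such a prediction can be compared with an externally imposed time limit. The operator times are the commonly quoted averages associated with Card et al. (1980). The operator sequence and the 5 second limit are hypothetical, chosen only to illustrate the comparison.

```python
# Commonly quoted Keystroke-Level Model operator times, in seconds.
# K assumes an average skilled typist; projects may need to recalibrate these.
OPERATOR_SECONDS = {
    "K": 0.20,  # press a key or button
    "P": 1.10,  # point at a target with a mouse
    "H": 0.40,  # move hands between keyboard and mouse
    "M": 1.35,  # mental preparation
}


def predict_seconds(operators):
    """Sum operator times for an expert, error-free execution of one method."""
    return sum(OPERATOR_SECONDS[op] for op in operators)


# Hypothetical method: think, reach for the mouse, point at a field, click,
# return to the keyboard, think, then type a four-character code.
method = ["M", "H", "P", "K", "H", "M", "K", "K", "K", "K"]
predicted = predict_seconds(method)

MAX_SECONDS = 5.0  # hypothetical limit imposed by an external process

print(f"predicted expert time: {predicted:.2f} s")
if predicted <= MAX_SECONDS:
    print("within limit for error-free expert use")
else:
    print("exceeds limit even for error-free expert use")
```

Because the prediction covers only expert, error-free performance, exceeding the limit suggests that non-expert use would exceed it too, whereas staying within the limit would say much less about non-experts.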

Author/Copyright holder: Courtesy of Ed Brown. Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Figure 15.16: Model-Based methods can predict how long drivers could be distracted, and much more.

Usability work can thus be expected to involve a mix of methods. The mix can be guided by high level distinctions between methods. Evaluation methods can be analytical (based on examination of an interactive system and/or potential interactions with it) or empirical (based on actual usage data). Some analytical methods require the construction of one or more models. For example, GOMS models the relationships between software and human performance. Software attributes in GOMS all relate to user input methods at increasing levels of abstraction from the keystroke level up to abstract command constructs. System and user actions are interleaved in task models to predict users’ methods (and execution times at a keystroke level of analysis).

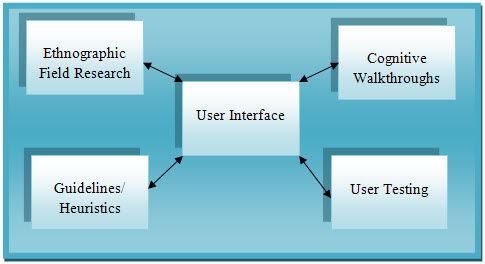

15.5.1 Analytical and Empirical Evaluation Methods, and How to Mix Them

Analytical evaluation methods may be system-centred (e.g., Heuristic Evaluation) or interaction-centred (e.g., Cognitive Walkthrough). Design teams use the resources provided by a method (e.g., heuristics) to identify strong and weak elements of a design from a usability perspective. Inspection methods tend to focus on the causes of good or poor usability. System-centred inspection methods focus solely on software and hardware features for attributes that will promote or obstruct usability. Interaction-centred methods focus on two or more causal factors (i.e., software features, user characteristics, task demands, other contextual factors).

Empirical evaluation methods focus on evidence of good or poor usability, i.e., the positive or negative effects of attributes of software, hardware, user capabilities and usage environments. User testing is the main project-focused method. It uses project-specific resources such as test tasks, users, and also measuring instruments to expose usability problems that can arise in use. Also, essentialist usability can use empirical experiments to demonstrate superior usability arising from user interface components (e.g., text entry on mobile phones) or to optimise tuning parameters (e.g., timings of animations for windows opening and closing). Such experiments assume that the test tasks, test users and test contexts allow generalisation to other users, tasks and contexts. Such assumptions are readily broken, e.g., when users are very young or elderly, or have impaired movement or perception.

Analytical and empirical methods emerged in rapid succession, with empirical methods emerging first in the 1970s as simplified psychology experiments (for examples, see early volumes of International Journal of Man-Machine Studies 1969-79). Model-based approaches followed in the 1980s, but the most practical ones are all variants of the initial GOMS method (John and Kieras 1996). Model-free inspection methods appeared at the end of the 1980s, with rapid evolution in the early 1990s. Such methods sought to reduce the cost of usability evaluation by discounting across a range of resources, especially users (none required, unlike user testing), expertise (transferred by heuristics/models to novices) or extensive models (none required, unlike GOMS).

Author/Copyright holder: Old El Paso. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 15.17: Chicken Fajitas Kit: everything you need except chicken, onion, peppers, oil, knives, chopping board, frying pan, stove etc. Usability Evaluation Methods are very similar - everything is fine once you’ve worked to provide everything that’s missing

Achieving balance in a mix of evaluation methods is not straightforward, and requires more than simply combining analytical and empirical methods. This is because there is more to usability work than simply choosing and using methods. Evaluation methods are as complete as a Chicken Fajita Kit, which contains very little of what is actually needed to make Chicken Fajitas: no chicken, no onion, no peppers, no cooking oil, no knives for peeling/coring and slicing, no chopping board, no frying pan, no stoves etc. Similarly, user testing ‘methods’ as published miss out equally vital ingredients and project specific resources such as participant recruitment criteria, screening questionnaires, consent forms, test task selection criteria, test (de)briefing scripts, target thresholds, and even data collection instruments, evaluation measures, data collation formats, data analysis methods, or reporting formats. There is no complete published user testing method that novices can pick up and use ‘as is’. All user testing requires extensive project-specific planning and implementation. Instead, much usability work is about configuring and combining methods for project-specific use.

15.5.2 The Only Methods are the Ones that You Complete Yourselves

When planning usability work, it is important to recognise that so-called ‘methods’ are more strictly loose collections of resources better understood as ‘approaches’. There is much work in getting usability work to work, and as with all knowledge-based work, methods cannot be copied from books and applied without a strong understanding of fundamental underlying concepts. One key consequence here is that only specific instances of methods can be compared in empirical studies, and thus credible research studies cannot be designed to collect evidence of systematic reliable differences between different usability evaluation methods. All methods have unique usage settings that require project-specific resources, e.g., for user testing, these include participant recruitment, test procedures and (de-)briefings. More generic resources such as problem extraction methods (Cockton and Lavery 1999) may also vary across user testing contexts. These inevitably obstruct reliable comparisons.

Author/Copyright holder: George Eastman House Collection. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Author/Copyright holder: George Eastman House Collection. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 15.18 A-B: Dogs or Cats: which is the better pet? It all depends on what sorts of cats and dogs you compare, and how you compare them. The same is true of evaluation methods.

Consider a simple comparison of heuristic evaluation against user testing. Significant effort would be required to allow a fair comparison. For example, if the user testing asked test users to carry out fixed tasks, then heuristic evaluators would need to explore the same system using the same tasks. Any differences and similarities between evaluation results for the two methods would not generalise beyond these fixed tasks, and there are also likely to be extensive evaluation effects arising from individual differences in evaluator expertise and performance. If tasks are not specified for the evaluations, then it will not be clear whether differences and similarities between results are due to the approaches used or to the unrecorded tasks within the evaluations. Given the range of resources that need to be configured for a specific user test, it is simply not possible to control all known potential confounds (still less all currently unknown ones). Without such controls, the main sources of differences between methods may be factors with no bearing on actual usability.

The tasks carried out by users (in user testing) or used by evaluators (in inspections or model specifications) are thus one possible confound when comparing evaluation approaches. So too are evaluation measures and target thresholds. Time on task is a convenient measure for usability, and for some usage contexts it is possible to specify worthwhile targets, e.g., for supermarket checkouts the target time to check out representative trolleys of purchases could be 30 minutes for 10 typical trolley loads of shopping. However, in many settings, there are no time thresholds for efficient use that can be used reliably (e.g., time to draft and print a one page business letter, as opposed to typing one in from a paper draft or a dictation).
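The supermarket checkout target can be worked through as a small calculation. The 30 minute and 10 trolley figures come from the example above; the observed times are invented for illustration.

```python
TARGET_MINUTES = 30.0   # target from the text: 10 typical trolley loads in 30 minutes
TARGET_TROLLEYS = 10

# Hypothetical observed checkout times (minutes) for 10 representative trolleys.
observed = [2.5, 3.0, 3.5, 2.0, 4.0, 3.0, 2.5, 3.5, 3.0, 2.5]

per_trolley_target = TARGET_MINUTES / TARGET_TROLLEYS   # average allowed per trolley
total = sum(observed)
meets_target = len(observed) == TARGET_TROLLEYS and total <= TARGET_MINUTES

print(f"average allowed per trolley: {per_trolley_target:.1f} min")
print(f"observed total: {total:.1f} min, target met: {meets_target}")
```

Note that the target is stated over the whole batch: in this made-up data one trolley takes 4 minutes, longer than the 3 minute average, yet the batch target is still met. Whether a per-trolley ceiling is also needed is a project decision that the target alone does not settle.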

Problems associated with setting thresholds are compounded by problems associated with choosing the measures for which thresholds are required. A wide range of potential measures can be chosen for user testing. For example, in 1988, usability specialists from Digital Equipment Corporation and IBM (Whiteside et al. 1988) published a long list of possible evaluation measures, including:

Measure without measure: there’s so much scope for scoring

Counts of: commands used; repetitions of failed commands; runs of successes and of failures; good and bad features recalled by users; available commands not invoked/regressive behaviours; users preferring your system

Percentage of tasks completed in time period

Counts or percentages of: errors; superior competitor products on a measure

Ratios of: successes to failures; favourable to unfavourable comments

Times: to complete a task; spent in errors; spent using help or documentation

Frequencies: of help and documentation use; of interfaces misleading users; of users needing to work around a problem; of users disrupted from a work task; of users losing control of the system; of users expressing frustration or satisfaction

No claims were made for the comprehensiveness of the full list of measures that were known to have been used up to the point of publication within Digital Equipment Corporation or IBM. What was clear was a position that project teams must choose their own metrics and thresholds. No methods yet exist to reliably support such choices.
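To make a few of these measures concrete, the sketch below derives some of them from a small coded observation log. The log format, task names, event tags and per-task time limit are all hypothetical. "Time on tasks with errors" is only a coarse stand-in for time spent in errors, which would need finer-grained timing than this log records.

```python
from collections import Counter

# Hypothetical coded observations from one test session:
# (task, outcome, seconds on task, event tags noted by the observer).
log = [
    ("create order", "success", 140, []),
    ("edit order", "failure", 300, ["error", "help"]),
    ("print order", "success", 90, ["help"]),
    ("cancel order", "success", 60, []),
    ("refund order", "failure", 280, ["error", "frustration"]),
]
TIME_LIMIT = 150  # hypothetical per-task time period, in seconds

outcomes = Counter(outcome for _, outcome, _, _ in log)
tags = Counter(tag for _, _, _, task_tags in log for tag in task_tags)

measures = {
    # Undefined when there are no failures; this sample has two.
    "ratio of successes to failures": outcomes["success"] / outcomes["failure"],
    "percentage of tasks completed in time period": 100
    * sum(1 for _, o, secs, _ in log if o == "success" and secs <= TIME_LIMIT)
    / len(log),
    "time on tasks with errors (s)": sum(
        secs for _, _, secs, task_tags in log if "error" in task_tags
    ),
    "frequency of help use": tags["help"],
    "expressions of frustration": tags["frustration"],
}

for name, value in measures.items():
    print(f"{name}: {value}")
```

Computing such numbers is the easy part. Deciding which of them matter for a given project, and what values would be acceptable, remains a judgement for the project team.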

There are no universal measures of usability that are relevant to every software development project. Interestingly, Whiteside et al. (1988) was the publication that first introduced contextual design to the HCI community. Its main message was that existing user testing practices were delivering far less value for design than contextual research. A hope was expressed that once contexts of use were better understood, and contextual insights could be shown to inform successful design across a diverse range of projects, then new contextual measures would be found for more appropriate evaluation of user experiences. Two decades elapsed before bases for realising this hope emerged within HCI research and professional practice. The final sections of this encyclopaedia entry explore possible ways forward.

15.5.3 Sorry to Disappoint You But ...

To sum up the position so far:

There are fundamental differences on the nature of usability, i.e., it is either an inherent property of interactive systems, or an emergent property of usage. There is no single definitive answer to what usability ‘is’. Usability is only an inherent measurable property of all interactive digital technologies for those who refuse to think of it in any other way.

There are no universal measures of usability, and no fixed thresholds above or below which all interactive systems are or are not usable. There are no universal, robust, objective and reliable metrics. There are no evaluation methods that unequivocally determine whether or not an interactive system or device is usable, or to what extent. All positions here involve hard won expertise, judgement calls, and project-specific resources beyond what all documented evaluation methods provide.

Usability work is too complex and project-specific to admit generalisable methods. What are called ‘methods’ are more realistically ‘approaches’ that provide loose sets of resources that need to be adapted and configured on a project by project basis. There are no reliable pre-formed methods for assessing usability. Each method in use is unique, and relies heavily on the skills and knowledge of evaluators, as well as on project-specific resources. There are no off-the-shelf evaluation methods. Evaluation methods and metrics are not completely documented in any literature. Developing expertise in usability measurement and evaluation requires far more than reading about methods, learning how to apply them, and through this alone, becoming proficient in determining whether or not an interactive system or device is usable, and if so, to what extent. Even system-centred essentialist methods leave gaps for evaluators to fill (Cockton et al. 2004, Cockton et al. 2012).

The above should be compared with the four opening propositions, which together constitute an attractive ideology that promises certainties regardless of evaluator experience and competence. Each proposition is not wholly true, and can be mostly false. Evaluation can never be an add-on to software development projects. Instead, the scope of usability work, and the methods used, need to be planned with other design and development activities. Usability evaluation requires supporting resources that are an integral part of every project, and must be developed there.

The tension between essentialist and relational conceptualisations of usability is only the tip of the iceberg of challenges for usability work. Not only is it not clear what usability is (although competing definitions are available), but it is also not clear specifically how usability should be assessed outside of the contexts of specific projects. What matters in one context may not matter in another. Project teams must decide what matters. The usability literature can indicate possible measures of usability, but none are universally applicable. The realities of usability work are that each project brings unique challenges that require experience and expertise to meet them. Novice evaluators cannot simply research, select and apply usability evaluation methods. Instead, actual methods in use are the critical achievement of all usability work.

Methods are made on the ground on a project by project basis. They are not archived ‘to go’ in the academic or professional literature. Instead there are two starting points. Firstly, there are literatures on a range of approaches that provide some re-usable resources for evaluators, but require additional information and judgement within project contexts before practical methods can be completed. Secondly, there are detailed case studies of usability work within specific projects. Here the challenge for evaluators is to identify resources and practices within the case study that would have a good fit with other project contexts, e.g., a participant recruitment procedure from a user testing case study may be re-usable in other projects, perhaps with some modifications.

Readers could reasonably draw the conclusion from the above that usability is an attractive idea in principle that has limited substance in reality. However, the reality is that we all continue to experience frustrations when using interactive digital technologies, and often we would say that we do find them difficult to use. Even so, frustrating user experiences may not be due to some single abstract construct called ‘usability’, but instead be the result of unique complex interactions between people, technology and usage contexts. Interacting factors here must be considered together. It is not possible to form judgements on the severity of isolated usage difficulties, user discomfort or dissatisfaction. Overall judgements on the quality of interactive software must balance what can be achieved through using it against the costs of this use. There are no successful digital technologies without what could be usability flaws to some HCI experts (I can always find some!). Some technologies appear to have severe flaws, and are yet highly successful for many users. Understanding why this is the case provides insights that move us away from a primary focus on usability in interaction design.

15.6 Worthwhile Usability: When and Why Usability Matters, and How Much

While writing the previous section, I sought advice via Facebook on transferring contacts from my vintage Nokia N96 mobile phone to my new iPhone. One piece of advice turned out to be specific to Apple computers, but was still half-correct for a Wintel PC. Eventually, I identified a possible data path that required installing the Nokia PC Suite on my current laptop, backing up contacts from my old phone to my laptop, downloading a freeware program that would convert contacts from Nokia’s proprietary backup format into a text format for spreadsheets/databases (comma separated values - .csv), failing to import it into a cloud service, importing it instead into the Windows Address Book on my laptop (after spreadsheet editing), and then finally synchronising the contacts via iTunes with my new iPhone.

15.6.1 A Very Low Frequency Multi-device Everyday Usability Story

From start to finish, my contact transfer task took two and a half hours. Fewer than half of my contacts were successfully transferred, and due to problems in the spreadsheet editing, I had to transfer contacts in a form that required further editing on my iPhone or in the Windows contacts folder.

Focusing on the ISO 9241-11 definition of usability, what can we say here about the usability of a complex ad hoc overarching product-service system involving social networks, cloud computing resources, web searches, two component product-service systems (Nokia N96 + Nokia PC Suite, iPhone + iTunes) and Windows laptop utilities?

Figure 15.19: A Tale of Two Mobiles and Several Software Utilities

Was it efficient taking 2.5 hours over this? Around 30 minutes each were spent on:

web searches, reading about possible solutions, and a freeware download

installing mobile phone software (new laptop since I bought the Nokia), attempts to connect Nokia to laptop, laptop restart, successful backup, extraction to .csv format with freeware

exploring a cloud email contacts service, failing to upload to it

test upload to Windows address book, edits to improve imports, failed edits of phone numbers, successful import

synchronisation of iPhone and iTunes

To reach a judgement on efficiency, we need to first bear in mind that during periods of waiting (uploads, downloads, synchronisations, installations), I proofread my current draft of this entry and corrected it. This would have taken 30 minutes anyway. Secondly, I found useful information from the web searches that led me to the final solution. Thirdly, I had to learn how to use the iTunes synchronisation capabilities for iPhones, which took around 10 minutes and was an essential investment for the future. However, I wasted at least 30 minutes on a cloud computing option suggested on Facebook (I had to add email to the cloud service before failing to upload from the .csv file). There were clear usability issues here, as the email service gave no feedback as to why it was failing to upload the contacts. There is no excuse for such poor interaction design in 2011, which forced me to try a few times before I realised that it would not work, at least with the data that I had. Also, the extracted phone numbers had text prefixes, but global search and replace in the spreadsheet resulted in data format problems that I could not overcome. I regard both of these as usability problems, one due to the format of the telephone numbers as extracted, and one due to the bizarre behaviour of a well known spreadsheet program.
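The time figures above can be set out as a rough budget. The following Python sketch is illustrative only: it takes the "around 30 minutes each" estimate at face value for all five activities, and it assumes that the interleaved proofreading and the iTunes learning can simply be subtracted from the total, which is one possible interpretation and not a measurement. All names in the code are hypothetical.

```python
# Rough time budget for the contact transfer, in minutes.
# Durations use the text's "around 30 minutes each" estimate; they are approximations.
phases = {
    "web searches, reading, freeware download": 30,
    "Nokia software install, backup, .csv extraction": 30,
    "cloud email contacts service (failed upload)": 30,
    "Windows address book import and spreadsheet edits": 30,
    "iPhone and iTunes synchronisation": 30,
}

total = sum(phases.values())  # the full two and a half hours

# Assumption: these two items are treated as offsets against the total.
interleaved_proofreading = 30  # work that "would have taken 30 minutes anyway"
itunes_learning = 10           # described as an essential investment for the future

net = total - interleaved_proofreading - itunes_learning
wasted_on_cloud = phases["cloud email contacts service (failed upload)"]


def as_hours(minutes: int) -> str:
    """Format a whole number of minutes as hours and minutes."""
    return f"{minutes // 60}h {minutes % 60:02d}m"


print("total:", as_hours(total))
print("net of interleaved work and learning:", as_hours(net))
print("of which spent on the abandoned cloud path:", as_hours(wasted_on_cloud))
```

The sketch only restates the arithmetic. It cannot say how long the task should have taken, so it does not settle the efficiency question by itself.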

I have still not answered the question of whether this was efficient in ISO 9241-11 terms. I have to conclude that it was not, but this was partly due to my lack of knowledge on co-ordinating a complex combination of devices and software utilities. However, back when contacts were only held on mobile phone SIMs, the transfer would have taken a few minutes in a phone store. So, current usability problems here are due to the move to storing contacts in more comprehensive formats separately from a mobile phone’s SIM. However, while there used to be a more efficient option, most of us now make use of more comprehensive phone memory contacts, and thus the previous fast option was at the cost of the most primitive contact format imaginable. So while the activity was relatively inefficient, there are potentially compensating reasons for this.

The only genuine usability problems relate to the lack of feedback in the cloud-based email facility, the extracted phone number formats, and bizarre spreadsheet behaviour. However, I only explored the cloud email option following advice via Facebook. My experience of problems here was highly contextual. For the other two problems, if the second problem had not existed, then I would never have experienced the third.

There are clear issues of efficiency. At best, this took twice as long as it should have, once interleaved work and much valuable re-usable learning are discounted. However, the causes of this inefficiency are hard to pinpoint within the complex socially shaped context within which I was working.

Effectiveness is easy to evaluate. I transferred just under 50% of the contacts. Note how straightforward the analysis is here when compared to efficiency in relation to a complex product-service system.

On balance, you may be surprised to read that I was fairly satisfied. Almost 50% is better than nothing. I learned how to synchronise my iPhone via iTunes for the first time. I made good use of the waits in editing this encyclopaedia entry. I was not in any way happy though, and I remain dissatisfied over the phone number formats, inscrutable spreadsheet behaviour and mute import facility on a top three free email facility.

15.6.2 And the Moral of My Story Is: It was Worth It, on Balance

What overall judgement can we come to here? On a binary basis, the final data path that I chose was usable. An abandoned path was not, so I did encounter one unusable component during my attempt to transfer phone numbers. As regards a more realistic extent of usability (as opposed to binary usable vs. unusable), we must trade off factors such as efficiency, effectiveness and satisfaction against each other. I could rate the final data path as 60% usable, with effective valuable learning counteracting the ineffective loss of over half of my contacts, which I had to subsequently enter manually. I could raise this substantially to 150% by adding the value of the resulting example for this encyclopaedia entry! It reveals the complexity of evaluating usability of interactions involving multiple devices and utilities. Describing usage is straightforward: judging its quality is not.

So, poor usability is still with us, but it tends to arise most often when we attempt to co-ordinate multiple digital devices across a composite ad-hoc product-service system. Forlizzi (2008) and others refer to these now typical usage contexts as product ecologies, although some (e.g., Harper et al. 2008) prefer the term product ecosystems, or product-service ecosystems (ecology is the discipline of ecosystems, not the systems themselves).

Components that are usable enough in isolation are less usable in combination. Essentialist positions on usability become totally untenable here, as the phone formats can blame the bizarre spreadsheet and vice-versa. The effects of poor usability are clear, but the causes are not. Ultimately, the extent of usability, and its causes in such settings, is a matter of interpretation based on judgements of the value achieved and the costs incurred.

Far from being an impasse, regarding usability as a matter of interpretation actually opens up a way forward for evaluating user experiences. It is possible to have robust interpretations of efficiency, effectiveness and satisfaction, and robust bases for overall assessments of how these trade off against each other. To many, these bases will appear to be subjective, but this is not a problem, or at least it is far less of a problem than acting erroneously as if we have generic universal objective criteria for the existence or extent of usability in any interactive system. To continue any quest for such criteria is most definitely inefficient and ineffective, even if the associated loyalties to seventeenth century scientific values bring some measure of personal (subjective) satisfaction.

It was poor usability that focused HCI attention in the 1980s. There was no positive conception of good usability. Poor usability could degrade or even destroy the intended value of an interactive system. However, good usability cannot donate value beyond that intended by a design team. Usability evaluation methods are focused on finding problems, not on finding successes (with the exception of Cognitive Walkthrough). Experienced usability practitioners know that an evaluation report should begin by commending the strong points of a design, but these are not what usability methods are optimised to detect.

Realistic relevant evaluations must assess incurred costs relative to achieved benefits. When transferring my contacts between phones, I experienced the following problems and associated costs:

Could not upload contacts into cloud email system, despite several attempts (cost: wasted 30 minutes)

Could not understand why I could not upload contacts into cloud email system (costs: prolonged frustration, annoyance, mild anger, abusing colleagues’ company #1)

Could not initiate data transfer from Nokia phone first time, requiring experiments and laptop restart as advised by Nokia diagnostics (cost: wasted 15 minutes)

Over half of my contacts did not transfer (future cost: 30-60 further minutes entering numbers, depending on use of laptop or iPhone, in addition to 15 minutes already spent finding and noting missing contacts)

Deleting type prefixes (e.g., TEL CELL) from phone numbers in a spreadsheet resulted in an irreversible conversion to a scientific format number (cost: 10 wasted minutes, plus future cost of 30-60 further minutes editing numbers in my phone, bewilderment, annoyance, mild anger, abusing colleagues’ company #2)

Had to set a wide range of synchronisation settings to restrict synchronisation to contacts (cost: extra 10 minutes, initial disappointment and anxiety)

Being unable to blame Windows for anything (this time)!

By forming the list above, I have taken a position on what, in part, would count as poor usability. To form a judgement as to whether these costs were worthwhile, I also need to take a position on positive outcomes and experiences:

an opportunity to ask for, and receive, help from Facebook friends (realising some value of existing social capital)

a new email address gilbertcockton@... via an existing cloud computing account (future value unknown at time, but has since proved useful)

Discovered a semi-effective data path that transferred almost half of my contacts to my iPhone (saved: 30-60 minutes of manual entry, potential re-usable knowledge for future problem solving)

Learned about a nasty spreadsheet behaviour that could cause problems in the future unless I find out how to avoid it (future value potentially zero)

Learned about the Windows address book and how to upload new contacts as .csv files (very high future value - at the very least PC edits/updates are faster than iPhone, with very easy copy/paste from web and email)

Learned how to synchronise my new iPhone with my laptop via iTunes (extensive indubitable future value, repeatedly realised during the editing of this entry, including effortless extension to my recent new iPad)

Time to proof the previous draft of this entry and edit the next version (30 minutes of effective work during installs, restarts and uploads)

Sourced the main detailed example for this encyclopaedia entry (hopefully as valuable to you as a reader as to me as a writer: I’ve found it really helpful)
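The two lists above mix quantified time with unquantified feelings and future value. As a hedged illustration, the Python sketch below tallies only the time figures that the lists state, keeping the 30-60 minute estimates as ranges. It assumes that the two "30-60 further minutes" costs are separate items, as they are listed separately. The `Entry` class and the labels are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entry:
    label: str
    low: int   # lower bound, in minutes
    high: int  # upper bound, in minutes


# Time costs as stated in the list of problems (affective costs are omitted).
costs = [
    Entry("failed cloud email upload", 30, 30),
    Entry("Nokia connection experiments and laptop restart", 15, 15),
    Entry("noting missing contacts (15) plus manual entry (30-60)", 45, 75),
    Entry("spreadsheet edits (10) plus later number editing (30-60)", 40, 70),
    Entry("restricting synchronisation settings", 10, 10),
]

# Only the benefits that the list expresses in minutes.
benefits = [
    Entry("manual entry avoided by the partial transfer", 30, 60),
    Entry("proofreading done during installs, restarts and uploads", 30, 30),
]


def total(entries: list[Entry]) -> tuple[int, int]:
    """Sum the lower and upper bounds of a list of entries."""
    return (sum(e.low for e in entries), sum(e.high for e in entries))


cost_range = total(costs)
benefit_range = total(benefits)

print(f"time costs: {cost_range[0]}-{cost_range[1]} minutes")
print(f"time benefits: {benefit_range[0]}-{benefit_range[1]} minutes")
```

On time alone the costs exceed the benefits, yet most of the value listed (social capital, re-usable knowledge, the example for this entry) has no minute figure at all. A tally like this can support an overall judgement of worth, but it cannot replace it.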