40.1 What is Design for All?

Contemporary interactive technologies and environments are used by a multitude of users with diverse characteristics, needs and requirements, including able-bodied and disabled people, people of all ages, people with different skills and levels of expertise, and people from all over the world with different languages, cultures and education. Additionally, interactive technologies are penetrating all aspects of everyday life: communication, work and collaboration, health and well-being, home control and automation, public services, learning and education, culture, travel, tourism and leisure, and many others. New technologies targeted at satisfying human needs in these contexts proliferate, whether stationary or mobile, centralized or distributed, visible or encapsulated in the environment. A wide variety of devices is already available, and new ones appear frequently.

In this context, interaction design acquires a new dimension, and the question arises of how it is possible to design systems that permit systematic and cost-effective approaches to accommodating all users. Design for All is an umbrella term for a wide range of design approaches, methods, techniques and tools to help address this huge diversity of needs and requirements in the design of interactive technologies. Design for All entails an effort to build access features into a product, starting from its conception and throughout the entire development life-cycle.

This chapter:

introduces Design for All through a brief excursus into its roots and origin

provides an overview of the dimensions of diversity which make Design for All a necessity in today’s technological landscape

presents the main perspectives on Design for All and the related technical approaches

discusses some commonly used design methods and techniques in the Design for All context

presents both consolidated and emerging interaction technologies and techniques

identifies future directions in the context of the emerging paradigm of Ambient Intelligence.

40.1.1 Brief history

The concept of Design for All was introduced in the Human-Computer Interaction (HCI) literature at the end of the nineties, following a series of research efforts mainly funded by the European Commission (Stephanidis and Emiliani, 1999; Stephanidis et al., 1998; Stephanidis et al., 1999). Design for All in HCI is rooted in the fusion of three traditions:

user-centered design placing the user at the center of the interaction design process

accessibility and assistive technologies for disabled people

Universal Design for physical products and the built environment.

40.1.1.1 From User-centered design to Design for All

User-centered design (Vrendenburg et al., 2001; ISO 1999; ISO 2010) is an approach to interactive system design and development that focuses specifically on making systems usable. It is an iterative process whose goal is the development of usable systems, achieved through the involvement of potential users during the design of the system.

Definition

User-centered design

An approach to designing ease of use into the total user experience with products and systems. It involves two fundamental elements—multidisciplinary teamwork and a set of specialized methods of acquiring user input and converting it into design.

Vrendenburg et al., 2001

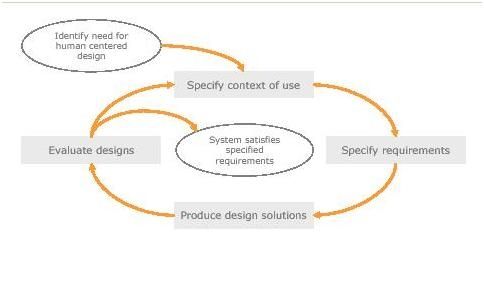

User-centered design includes four iterative design activities, all involving direct user participation:

understand and specify the context of use, the nature of the users, their goals and tasks, and the environment in which the product will be used;

specify the user and organizational requirements in terms of effectiveness, efficiency and satisfaction; and the allocation of function between users and the system;

produce designs and prototypes of plausible solutions; and

carry out user-based assessment.

Author/Copyright holder: UXPA. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.1: User-centered design cycle.

User-centered design requires:

Active involvement of users and clear understanding of user and task requirements. The active involvement of end-users is one of the key strengths, as it conveys to designers the context of use in which the system will be used, potentially enhancing the acceptance of the final outcome.

The appropriate allocation of functions between the user and the system. It is important to determine which aspects of a job or a task will be handled by users and which can be handled by the system itself. This division of labor should be based on an appreciation of human capabilities and their limitations, and on a thorough grasp of the particular demands of the task.

Iteration of design solutions. Iterative design entails receiving feedback from end-users following their use of early design solutions. The users attempt to accomplish “real world” tasks using the prototype. The feedback from this exercise is used to further develop the design.

Multi-disciplinary design teams. User-centered system development is a collaborative process that benefits from the active involvement of various parties, each of whom has insights and expertise to share. Therefore, the development team should be made up of experts with technical skills in various phases of the design life cycle. The team might thus include managers, usability specialists, end-users, software engineers, graphic designers, interaction designers, training and support staff, and task experts.

User-centered design claims that the quality of use of a system, including usability and user health and safety, depends on the characteristics of the users, tasks, and the organizational and physical environment in which the system is used. It also stresses the importance of understanding and identifying the details of this context in order to guide early design decisions, and provides a basis for specifying the context in which usability should be evaluated. However, it is limited in its capability of addressing the diversity in user requirements, as it fosters the traditional perspective of “typical” or “average” users interacting with desktop computers in working environments (Stephanidis, 2001). While user-centered design focuses on maintaining a multidisciplinary and user-involving perspective into systems development, it does not specify how designers can cope with radically different user groups.

40.1.1.2 From Accessibility and Assistive Technologies to Design for All

Accessibility in the context of HCI refers to the access by people with disabilities to Information and Communication Technologies (ICT). Interaction with ICT may be affected in various ways by the user’s individual abilities or functional limitations that may be permanent, temporary, situational or contextual. For example, someone with limited visual functions will not be able to use an interactive system which only provides graphical output, while someone with limited bone or joint mobility or movement functions, affecting the upper limbs, will encounter difficulties in using an interactive system that only accepts input through the standard keyboard and mouse.

Accessibility in the context of HCI aims to overcome such barriers by making the interaction experience of people with diverse functional or contextual limitations as near as possible to that of people without such limitations.

The interaction process can be roughly analyzed as follows:

the user provides input to the system through an action using an input device (e.g., the user pushes a mouse button to enter a command);

the input is interpreted by the system (e.g., the system recognizes and executes the command);

the system generates a response (e.g., the system generates a response message for the user notifying the execution of the command);

the response is presented to the user through a system action using an output device (e.g., the message is displayed on the screen through a message window);

the user perceives and interprets the response (e.g., the user sees the message in the message window on the screen and reads it).

A physical device is an artifact of the system that acquires (input device) or delivers (output device) information. Examples include keyboard, mouse, screen, and loudspeakers. An interaction technique involves the use of one or more physical devices to allow end-users to provide input or receive output during the operation of a system.

An interaction style is a set of perceivable interaction elements, sharing common aesthetic and behavioral characteristics, that the user (through an interaction technique) or the system employs to exchange information. In graphical user interfaces the term interaction style is used to denote a common look and feel among interaction elements. Typical examples are menu selection and direct manipulation. Interaction elements compose the user interface of a system, with user interaction resulting from physical actions. A physical action is an action performed either by the system or the user on a physical device. Typically, system actions concern feedback and output, while user actions provide input. Examples of input actions include pushing a mouse button or typing on a keyboard. Different interaction techniques and styles exploit different sensory modalities. In practice, the modalities related to seeing and hearing are the most commonly employed for output, whereas haptics is less used. Interestingly, however, haptics remains the primary modality for input (e.g., typing, pointing, touching, sliding, grabbing). Taste and smell have only recently started being investigated for output purposes.

In summary, the actual human functions involved in interaction are motion, perception and cognition. In this context, accessibility implies that:

the input devices and related interaction techniques are such that their manipulation by users is feasible (i.e., they are compatible with the user’s functions related to motion);

the adopted interaction styles (and the resulting user interfaces) can be perceived by the users (i.e., they are compatible with the user’s sensory functions);

the adopted interaction styles (and the resulting user interfaces) can be understood by the users (i.e., they are compatible with the user’s cognitive functioning).

Given the degree of human diversity as regards the involved functions, accessibility requires the availability of alternative devices and interaction styles to accommodate different needs.
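The three conditions above, and the need for alternatives, can be illustrated with a minimal sketch. It assumes that a user's available functions and the functions an interaction option relies on can each be described as small sets of labels. The class names, function labels and example options are hypothetical and chosen only for illustration; they are not taken from any standard or from the cited literature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserProfile:
    """Functions available to a user in a given context (hypothetical labels)."""
    motion: frozenset
    perception: frozenset
    cognition: frozenset


@dataclass(frozen=True)
class InteractionOption:
    """An input/output alternative and the human functions it relies on."""
    name: str
    needs_motion: frozenset = frozenset()
    needs_perception: frozenset = frozenset()
    needs_cognition: frozenset = frozenset()


def barriers(option, user):
    """Return, per category, the functions the option needs but the user lacks."""
    missing = {
        "motion": option.needs_motion - user.motion,
        "perception": option.needs_perception - user.perception,
        "cognition": option.needs_cognition - user.cognition,
    }
    # Keep only the categories where something is actually missing.
    return {category: lacking for category, lacking in missing.items() if lacking}


def accessible_options(options, user):
    """Names of the options that raise no barrier for this user."""
    return [o.name for o in options if not barriers(o, user)]


# Illustrative data only: a blind user who types and reads Braille.
blind_user = UserProfile(
    motion=frozenset({"fine_hand_control"}),
    perception=frozenset({"hearing", "touch"}),
    cognition=frozenset({"braille"}),
)

options = [
    InteractionOption(
        "mouse + graphical output",
        needs_motion=frozenset({"fine_hand_control"}),
        needs_perception=frozenset({"vision"}),
    ),
    InteractionOption(
        "keyboard + speech output",
        needs_motion=frozenset({"fine_hand_control"}),
        needs_perception=frozenset({"hearing"}),
    ),
    InteractionOption(
        "keyboard + Braille display",
        needs_motion=frozenset({"fine_hand_control"}),
        needs_perception=frozenset({"touch"}),
        needs_cognition=frozenset({"braille"}),
    ),
]

print(accessible_options(options, blind_user))
print(barriers(options[0], blind_user))
```

With a single option in the list, the user in this example would be excluded; it is the presence of alternatives that makes the system accessible to this profile.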

In traditional efforts to improve accessibility, the main direction followed has been to enable disabled users to access interactive applications originally developed for able-bodied users through appropriate assistive technologies.

Assistive Technology (AT) is a generic term denoting a wide range of accessibility plug-ins including special-purpose input and output devices and the process used in selecting, locating, and using them. AT promotes greater independence for people with disabilities by enabling them to perform tasks that they were originally unable to accomplish, or had great difficulty accomplishing. In this context, it provides enhanced or alternative methods to interact with the technology involved in accomplishing such tasks.

Definition

Assistive or Adaptive Technology commonly refers to "...products, devices or equipment, whether acquired commercially, modified or customized, that are used to maintain, increase or improve the functional capabilities of individuals with disabilities..."

Assistive Technology Act of 1998

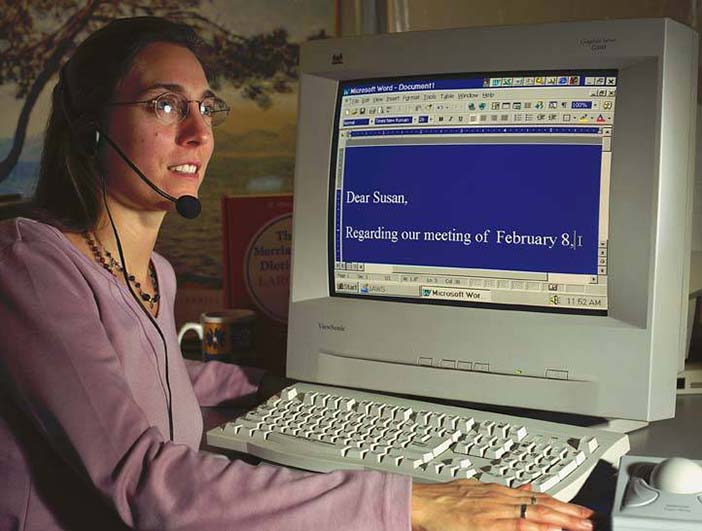

Popular Assistive Technologies include screen readers and Braille displays for blind users, screen magnifiers for users with low vision, alternative input and output devices for motor impaired users (e.g., adapted keyboards, mouse emulators, joysticks, binary switches), specialized browsers, and text prediction systems.

Author/Copyright holder: Clevy Keyboard. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 40.2: Adapted keyboard

Author/Copyright holder: gizmodo.com. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 40.3: Footmouse

Assistive Technologies are essentially reactive in nature. They provide product-level and platform-level adaptation of interactive applications and services originally designed and developed for able-bodied users. The need for more systematic and proactive approaches to the provision of accessibility has led to the concept of Design for All. This shift from accessibility, as traditionally defined in the assistive technology sector, to a Design for All perspective is due to: (i) the rapid pace of technological developments, with many new systems, devices, applications, and users, making accessibility add-ons very difficult to develop; and (ii) an increased interest in people at risk of technological exclusion, not limited to people with disabilities. Under this perspective, accessibility has to be designed as a primary system feature, rather than decided upon and implemented a posteriori, thus integrating accessibility into the design process of all applications and services.

40.1.1.3 From Universal Design to Design for All in HCI

Proactive approaches toward addressing people’s diversity first emerged in engineering disciplines, such as civil engineering and architecture, with many applications in interior design, building and road construction.

The term Universal Design was coined by the architect Ronald L. Mace to describe the concept of designing all products and the built environment to be both aesthetically pleasing and usable to the greatest extent possible by everyone, regardless of their age, ability, or status in life (Mace et al., 1991). Although the scope of the concept has always been broader, its focus has tended to be on the built environment.

Definition

Universal Design is the design of products and environments to be usable by all people, to the greatest extent possible, without the need for adaptation or specialized design. The intent of Universal Design is to simplify life for everyone by making products, communications, and the built environment more usable by as many people as possible at little or no extra cost. Universal Design benefits people of all ages and abilities.

(Mace et al., 1991)

A classic example of Universal Design is the kerb cut (or sidewalk ramp), initially designed for wheelchair users to navigate from street to sidewalk, and today widely used in many buildings. Other examples are low-floor buses, cabinets with pull-out shelves, as well as kitchen counters at several heights to accommodate different tasks and postures.

Author/Copyright holder: www.thelittlehousecompany.co.uk. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 40.4: Accessible home

Author/Copyright holder: Mohammed Yousuf and Mark Fitzgerald. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.5: Accessible traffic light

Perhaps the most common approach in Universal Design is to make information about an object or a building available through several modalities, such as Braille on elevator buttons, and acoustic feedback for traffic lights. People without disabilities can often benefit too. For example, subtitles on TV or multimedia content intended for the deaf can also be useful to non-native speakers of a language, to children for improving literacy skills, or to people watching TV in noisy environments.

The seven Principles of Universal Design were developed in 1997 by a working group of architects, product designers, engineers, and environmental design researchers, led by Ronald Mace at North Carolina State University. The Principles "may be applied to evaluate existing designs, guide the design process and educate both designers and consumers about the characteristics of more usable products and environments."

Equitable use: The design is useful and marketable to people with diverse abilities

Flexibility in use: The design accommodates a wide range of individual preferences and abilities

Simple and intuitive: Use of the design is easy to understand, regardless of the user's experience, knowledge, language skills, or current concentration level

Perceptible information: The design communicates necessary information effectively to the user, regardless of ambient conditions or the user's sensory abilities

Tolerance for error: The design minimizes hazards and the adverse consequences of accidental or unintended actions

Low physical effort: The design can be used efficiently and comfortably and with a minimum of fatigue

Size and space for approach and use: Appropriate size and space is provided for approach, reach, manipulation, and use regardless of user's body size, posture, or mobility

Copyright © 1997 NC State University, The Center for Universal Design. (http://www.ncsu.edu/ncsu/design/cud/about_ud/udprinciples.htm)

In the context of HCI, the above concepts were used by the end of the nineties to denote design for access by anyone, anywhere and at any time to interactive products and services.

The term Design for All either subsumes, or is a synonym of, terms such as accessible design, inclusive design, barrier-free design, universal design, each highlighting different aspects of the concept.

(Stephanidis et al., 1998)

Definition

Design for All in HCI is the conscious and systematic effort to proactively apply principles and methods, and employ appropriate tools, in order to develop Information Technology & Telecommunications (IT&T) products and services which are accessible and usable by all citizens, thus avoiding the need for a posteriori adaptations, or specialized design. This entails an effort to build access features into a product starting from its conception, throughout the entire development life-cycle.

(Stephanidis et al., 1998)

Design for All in HCI implies a reconsideration of traditional design qualities such as accessibility and usability.

Definitions

Accessibility: for each task a user has to accomplish through an interactive system, and taking into account specific functional limitations and abilities, as well as other relevant contextual factors, there is a sequence of input and output actions which leads to successful task accomplishment (Savidis and Stephanidis, 2004).

Usability: capability of all supported paths towards task accomplishment to “maximally fit” individual users’ needs and requirements in the particular context and situation of use

(Savidis and Stephanidis, 2004).

It follows that accessibility is a fundamental prerequisite of usability, since there may not be optimal interaction if there is no possibility of interaction in the first place.
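The relationship between the two definitions can be sketched in code. The sketch below is one possible reading, not the formalization used by Savidis and Stephanidis: it assumes that a task is supported by a list of alternative paths, that each path is a sequence of actions, and that each action lists the abilities it requires. The `fit` scoring function, the ability labels and the example paths are hypothetical.

```python
def feasible(path, abilities):
    """A path is feasible if the user can perform every action it contains."""
    return all(action["requires"] <= abilities for action in path["actions"])


def is_accessible(task_paths, abilities):
    """Accessibility: at least one supported path leads to task accomplishment."""
    return any(feasible(path, abilities) for path in task_paths)


def best_fit(task_paths, abilities, fit):
    """Usability: among the feasible paths, pick the one that fits the user best.

    `fit` is an assumed scoring function (higher is better). Returns None when
    no path is feasible, i.e. when the task is not accessible at all.
    """
    candidates = [path for path in task_paths if feasible(path, abilities)]
    return max(candidates, key=fit, default=None)


# Illustrative task: confirming a dialog, with three alternative paths.
confirm_dialog = [
    {"name": "mouse", "actions": [
        {"do": "read dialog on screen", "requires": {"vision"}},
        {"do": "click OK", "requires": {"vision", "pointing"}},
    ]},
    {"name": "keyboard", "actions": [
        {"do": "read dialog on screen", "requires": {"vision"}},
        {"do": "press Enter", "requires": {"key_press"}},
    ]},
    {"name": "speech + keyboard", "actions": [
        {"do": "listen to dialog via speech output", "requires": {"hearing"}},
        {"do": "press Enter", "requires": {"key_press"}},
    ]},
]

# Hypothetical per-user preference scores standing in for "maximal fit".
preferences = {"mouse": 1, "keyboard": 2, "speech + keyboard": 3}


def fit(path):
    return preferences.get(path["name"], 0)


blind_user = {"hearing", "key_press"}
print(is_accessible(confirm_dialog, blind_user))
print(best_fit(confirm_dialog, blind_user, fit)["name"])
```

In this reading, `best_fit` can only return a path when `is_accessible` holds, which mirrors the statement that accessibility is a prerequisite of usability.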

40.2 Dimensions of diversity

40.2.1 User diversity

The term “user diversity” refers to the various differences among users in their perception, manipulation, and utilization of technology. Understanding the various dimensions of user diversity helps design and develop user interfaces that maximize benefits for different types of users. There are several dimensions of diversity that differentiate people’s interactions with technology.

40.2.1.1 Disability

At the heart of accessibility lies a focus on human diversity in relation to access and use of ICTs. Main efforts in this direction are concerned with the identification and study of diverse target user groups (e.g., people with various types of disabilities and elderly people), as well as of their requirements for interaction, and of appropriate modalities, interactive devices and techniques to address their needs. Much experimental work has been conducted in recent years in order to collect and elaborate knowledge of how various disabilities affect interaction with technology. Such understanding can be facilitated by the functional approach of the “International Classification of Functioning, Disability and Health (ICF)” (World Health Organization 2001), where the term disability is used to denote a multidimensional phenomenon resulting from the interaction between people and their physical and social environment. This allows grouping and analysis of limitations that are not only due to impairments, but also, for example, due to environmental reasons.

40.2.1.1.1 Perception

Visual and auditory impairments significantly affect human-computer interaction.

Blindness means anatomic and functional disturbances of the sense of vision of sufficient magnitude to cause total loss of light perception, while visual impairment refers to any deviation from the generally accepted norm. Blind users benefit from using the auditory and the haptic modality for output and input purposes. In practice, blind users’ interaction is supported through screen readers (i.e., specialized software which reads aloud a graphical interface to the user (Asakawa and Leporini, 2009)), or speech output, and Braille displays (see section Haptics). The latter are haptic devices, but require knowledge of the Braille code. Non-speech audio can also be used to improve the blind user’s interactive experience (Nees and Walker, 2009). Blind users can use keyboards and joysticks, though not the mouse. Therefore, all actions in a user interface must be available without the use of the mouse. It is also important for both output and input that the provided user interface is structured in such a way as to minimize the time required to access specific important elements (e.g., menus or links) when they are made available in a sequential fashion (e.g., through speech).

Less severe visual limitations are usually addressed by increasing the size of interactive artifacts, as well as color contrast between the background and foreground elements of a user interface. Specific combinations of colors may be necessary for users with various types of color-blindness.
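The text does not prescribe a way to measure color contrast. One widely used measure, brought in here as an outside reference rather than as this chapter's method, is the contrast ratio defined in the W3C Web Content Accessibility Guidelines (WCAG) 2.x, which is computed from the relative luminance of the two colors. The sketch below follows that published definition; the function names are illustrative, and the 4.5:1 threshold is the WCAG level AA minimum for normal-size text.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, ranging from 1 (no contrast) to 21 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(foreground, background):
    """True if the pair reaches the 4.5:1 minimum for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


black, white = (0, 0, 0), (255, 255, 255)
print(round(contrast_ratio(black, white), 2))  # 21.0
print(meets_aa_normal_text((118, 118, 118), white))
```

A luminance-based ratio addresses contrast only. It does not by itself tell whether two hues are distinguishable for users with a particular type of color-blindness, which still calls for the specific color combinations mentioned above.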

Hearing impairments include any degree and type of auditory disorder, on a scale from slight to extreme. Hearing limitations can significantly affect interaction with technology. Familiar coping strategies for hearing-impaired people include the use of hearing aids, lip-reading and telecommunication devices for the deaf. Deaf users may not be comfortable with written language in user interfaces, and may benefit from sign-language translations of the information provided (see section Sign Language).

40.2.1.1.2 Motion

The nature and causes of physical impairments vary; however, the most common problems faced by individuals with physical impairments include poor muscle control, weakness and fatigue, difficulty in walking, talking, seeing, speaking, sensing or grasping (due to pain or weakness), difficulty reaching things, and difficulty doing complex or compound manipulations (push and turn). Individuals with severe physical impairments usually have to rely on assistive devices such as mobility aids, manipulation aids, communication aids, and computer interface aids (Keates, 2009).

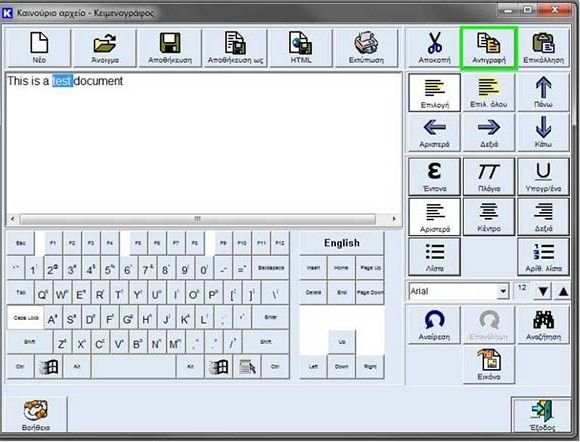

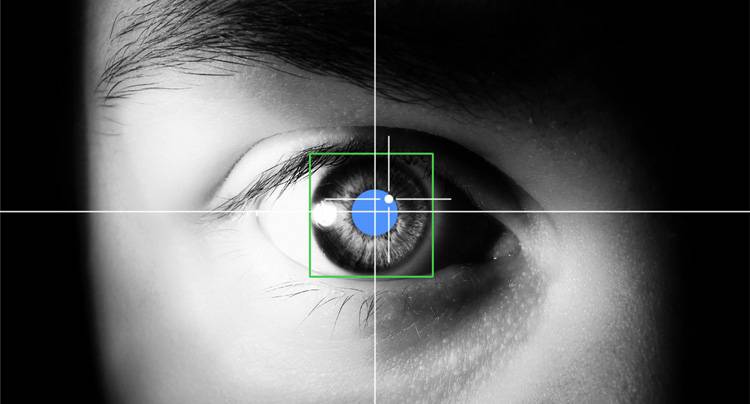

Motion impairments interfere with the functions that are necessary for interacting with technology. For example, using a mouse and a keyboard can be challenging or painful. Therefore, motor-impaired users may benefit from specialized input devices minimizing movement and physical effort required for input, including adapted keyboards, mouse emulators, joystick and binary switches, often used in conjunction with an interaction technique called scanning (see section Scanning-based Interaction), as well as virtual on-screen keyboards for text input, sometimes supported by text prediction systems. Other interaction techniques which have been investigated for use by people with motion functions limitations are voice input of spoken commands (see section Speech), keyboard and mouse simulation through head movements (see section Gestures and Head Tracking), and eye-tracking (see section Eye-tracking). Brain-computer interfaces which allow control of an application simply by thought are also being investigated for supporting the communication of people with severe motor impairments (see section Brain Interfaces).

40.2.1.1.3 Cognition

Cognition is the ability of the human mind to process information, think, remember, reason, and make decisions. The extent of cognitive abilities varies greatly from person to person. Cognitive disability entails a substantial limitation in one's capacity to think, including: conceptualizing, planning, and sequencing thoughts and actions; remembering; interpreting subtle social cues; and understanding numbers and symbols. Cognitive disabilities can stem from brain injury, Alzheimer's disease and dementia, severe and persistent mental illness, and stroke. Cognitive disabilities are many and diverse, and individual differences are often very pronounced for these user groups, so it is particularly difficult to abstract and generalize the issues involved in researching user requirements for this part of the population.

Various cognitive limitations and learning difficulties may affect the interaction process. General principles which facilitate access for users with some types of cognitive difficulties, but also for other user groups, such as, for example, older users, are to keep the user interface as simple and minimalistic as possible, provide syntactically and lexically simple text, reduce the need to rely on memory, allow sufficient time for interaction, and support user attention (Lewis, 2009). Specific developmental learning conditions such as dyslexia also require particular care in the use of text, fonts, colors, contrast and images in order to facilitate comprehension.

40.2.1.2 Age

Age plays a significant role in how a person perceives and processes information. Knowing the age of the target population of a technology product can provide vital clues about how to present information, feedback, video, audio, etc. Two user groups have particular requirements dependent on age: children (defined as users below the age of 18, with particular focus on children under the age of 12) and older persons (usually defined as users over the age of 65).

40.2.1.2.1 Children

In the United States, nearly half (48%) of all children aged six and under have used a computer, and more than one in four (30%) have played video games. By the time they are in the four- to six-year-old range, seven out of ten have used a computer (Wartella et al., 2005).

The emergence of children as an important new user group of technology dictates the importance of supporting them in a way that is useful, effective, and meaningful for their needs.

The physical and cognitive abilities of children develop over a period of years from infancy to adulthood. Children, particularly those who are very young, do not have a wide repertoire of experiences that guide their responses to cues. In addition to this lack of experience, children perceive the world differently from adults, and have their own likes, dislikes, curiosities and needs that are different from adults. Therefore, children should be regarded as a different user population with its own culture and norms (Bruckman and Bandlow, 2002).

The design of applications for children poses a special challenge, as designers must learn how to perceive systems through the eyes of a child. For example, audio feedback may alarm very young children and extremely bright colors and video could easily distract them from the task.

40.2.1.2.2 Older users

There is overwhelming evidence that the population of the developed world is ageing. In addition to the growing population of elders in the United States (20% of the entire population by 2030), these numbers are increasing on the global scale as well. It is estimated that, for the first time in history, the population of older adults will exceed the population of children (age 0-14) in the year 2050. Almost 2 billion people will be considered older adults by 2050 (US Department of Health and Human Services Administration on Ageing 2009).

This large and diverse user group, with a variety of different physical, sensory, and cognitive capabilities, can benefit from technological applications which can enable them to retain their health, well being and independent living.

Older users may experience a decrease in motor, sensory and cognitive functioning, which may lead to combined impairments and highly affect interaction (Kurniawan, 2009). Principles for providing accessibility to older users include improved contrast, enlargement of information presented on the screen, careful organization of information, choice of appropriate input devices, avoiding relying on memory, and design simplicity.

Older people have a vast set of memories from experiences in the past that compose a large repertoire. This naturally influences their feelings towards technology. Older users may feel a sense of resistance to certain technologies, especially when dealing with applications for tasks that people are used to completing without technology, such as online banking systems. The feeling of being “forced” to adapt to technology during the later years of life can add to these feelings of resistance.

40.2.1.3 Computer use expertise

The wide use of technology by a large group of the population has resulted in increased comfort with basic technological tools. However, the level of comfort and the ease of use of technology vary significantly depending on the skill levels of users (Ashok and Jacko, 2009).

Some groups of users are unfamiliar with technology, particularly older users and those with minimal or no education, but are nevertheless required to use computing tools in order to keep up with the current evolution of society. The result is a mix of users with great diversity in technology skill level.

The challenge of designing systems for users who fall within a wide and uneven spectrum of skills can be daunting. This is especially so because designers are typically experts in their respective domains and find it difficult to understand and incorporate the needs of novices. Judging the skill levels of users can be more difficult than assessing impairments or difficulties because users who are experts on a particular tool may find a new replacement tool hard to use and understand. This results in a situation where a person who apparently is an expert actually behaves like a novice. Feedback from users with different skill levels can provide fresh perspectives and new insights.

Useful help options, explanations that can be expanded and viewed in more detail, consistent naming conventions, and uncluttered user interfaces are just a few ways in which technology can be made accessible to users with less knowledge of the domain and system without reducing efficiency for expert users. In fact, these suggestions are guidelines of good design, which will benefit all users, irrespective of skill level.

40.2.1.4 Culture and Language

In today’s world, due to globalized technology, there is a significant shift in the perception, understanding, and experience of culture. The inclusion of this knowledge in technology will lead to more inclusiveness and tolerance.

Language is an integral part of culture and much can be lost in translation due to language barriers. For example, many technological applications use English and this in itself could be a restricting factor for people who do not speak or write the language. Abbreviations, spelling, and punctuation are all linguistic variables. The connection between language and the layout of text on technical applications is a factor to be considered, since certain languages such as English and French lend themselves to shorter representations, while other languages may require longer formats.

The term “localization” refers to customizing products for specific markets to enable effective use. Included in localization are language translations, changes to graphics, icons, content, etc.

Other differences in culture include interpretations of symbols, colors, gestures, etc. For example, there are differences in the use of colors (green is a sacred color in Islam, yellow in Buddhism) and in the reading direction (e.g. left to right in N. America and Europe, right to left in the Middle East). Ideas on clothing, food, and aesthetic appeal also vary from culture to culture.

These numerous differences make it imperative that designers remain sensitive to them during the creation of technology and avoid treating all cultures as the same. Rather than neutralizing cultural and linguistic differences, Design for All acknowledges, recognizes, appreciates, and integrates them (Marcus and Rau, 2009).
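As a minimal sketch of what localization involves at the interface level, the following Python fragment looks up a translated label together with the reading direction of the target locale, and falls back to English when a translation is missing. The `Locale` class, the `LOCALES` table, the `localize` function, and the sample strings are all hypothetical and serve only as an illustration; a real product would rely on an established internationalization library and on reviewed translations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Locale:
    code: str
    direction: str  # "ltr" (left to right) or "rtl" (right to left)
    labels: dict    # maps a label key to its translated text


# Hypothetical locale data, for illustration only.
LOCALES = {
    "en": Locale("en", "ltr", {"save": "Save", "cancel": "Cancel"}),
    "de": Locale("de", "ltr", {"save": "Speichern", "cancel": "Abbrechen"}),
    "ar": Locale("ar", "rtl", {"save": "حفظ"}),
}


def localize(key, locale_code, fallback="en"):
    """Return (text, direction) for a label key in the requested locale.

    Unknown locales fall back to the fallback locale entirely; a missing
    label falls back to the fallback text but keeps the locale's direction.
    """
    locale = LOCALES.get(locale_code, LOCALES[fallback])
    text = locale.labels.get(key, LOCALES[fallback].labels[key])
    return text, locale.direction


for code in ("en", "de", "ar"):
    print(code, localize("save", code))
```

Even in this tiny table the German label is more than twice as long as the English one, which is why layouts should not be sized around the text of a single language.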

40.2.6.3 Social Issues

Globalization has created an environment of rich information and easy communication. Social issues such as economic and social status pose a serious challenge with respect to access to technology. In many parts of the world, only the wealthier segments of society have the opportunity to use technology and benefit from it.

Poverty, social status and limited educational opportunities create barriers to technology access. Designing applications that are equally accessible and equally easy to use for every single socio-economic group in the world is virtually impossible, but there are lessons to be learned from considering the needs of various social groups.

Studies have revealed that a certain level of education, technical education to be precise, is required to receive optimal productivity from the use of technology (Castells, 1999). The realization that technological benefits are available more readily to the educated conveys a simple message regarding the responsibility of designers, developers, engineers, and all those involved in the creation of technology. This team of people creates and distributes technology, and it is critically important for them to be educated in matters of universal access and issues related to the diversity of users, including the need to consider designing for the undereducated. Designing for technological literacy becomes therefore a top priority.

40.2.7 Diversity in the technological environment

Diversity does not only concern users, but also interaction environments and technologies, which are continuously developing and diversifying.

Temporary states of impairment may be created by the particular contexts in which users interact with technology. For instance, a working environment in which noise level and visual distractions of the environment are high can interfere with the efficient use of computer-based applications. Impairments caused by contextual factors are known as situationally-induced impairments (Sears et al., 2003). Technology itself can also cause situationally-induced impairments. For example, when screens are too small, the user may become vision-impaired in this particular situation.

The diffusion of the Internet and the proliferation of advanced interaction technologies (e.g., mobile devices, network attachable equipment, virtual reality, agents, etc.) signify that many applications and services are no longer limited to the visual desktop, but span over new realities and interaction environments. Overall, a wide variety of technological paradigms plays a significant role for Design for All, either by providing new interaction platforms, or by contributing at various levels to ensure and widen access.

The World Wide Web and its technologies are certainly a fundamental component of this landscape. Various challenges exist and solutions have been elaborated to make the Web accessible to all. The World Wide Web offers much for those who are able to access its content, but at the same time access is limited by serious barriers due to limitations of visual, motor, language, or cognitive abilities.

Another very important and rapidly progressing technological advance is that of mobile computing. Mobile devices acquire an increasingly important role in everyday life, both as dedicated tools, such as media players, and multi-purpose devices, such as smart phones. The device needs to be easy to use, even on the move. Mobile interaction often brings forward contradictory design goals and requirements. The environments of mobile contexts are demanding due to characteristics such as noise or poor lighting. The user may need to multitask and that leaves only part of his/her attention for using the device. Also cultural differences and user expectations have a major impact on the use of the devices. These characteristics of mobile devices and usage situations set high demands for design. Similar to any other application field, mobile user groups can be defined by age, abilities and familiarity with the environment. However, the requirements that each user group sets for mobile devices and services vary in different contexts of use.

Mobility brings about the challenge that contexts of use vary greatly and may change, even in the middle of usage situations. The variable usage contexts need to be taken into account when designing mobile devices and services. The initial assumption of mobile devices and services is that they can be used “anywhere”. This assumption may not always be correct; the environment and the context create challenges in use. Using mobile phones is prohibited in some environments and in some places there may not be network coverage.

The user can be physically or temporarily disabled. In dark or bright environments it may be hard to see the user interface elements. In a crowded place it may be difficult to carry on a voice conversation over the phone, even more difficult than in a face-to-face situation, where non-verbal cues help to work out what the other person is saying when not every word can be heard. In social communication the context plays an important role; people often start telephone conversations by asking for the other person’s physical location (“Where are you?”) to figure out whether the other person’s context allows the phone call.

40.3 Perspectives and Approaches

In the context of Design for All, user interface design methodologies, techniques and tools acquire increased importance. Various methods, techniques, and codes of practice have been proposed to enable authors proactively to take into account and appropriately address diversity in the design of interactive artifacts. Three fundamental approaches are outlined below.

40.3.1 Guidelines and Standards

Guidelines and standards have been formulated in the context of international collaborative initiatives towards supporting the design of interactive products, services and applications, which are accessible to most potential users without any modifications. This approach has been mainly applied to the accessibility of the World Wide Web.

40.3.1.1 Web accessibility

Web accessibility means that people with disabilities can use the Web. More specifically, Web accessibility means that people with disabilities can perceive, understand, navigate, and interact with the Web, and that they can contribute to the Web. Web accessibility also benefits others, including older people with changing abilities due to aging.

http://www.w3.org/WAI/intro/accessibility.php

A number of accessibility guidelines collections have been developed (Pernice and Nielsen, 2001; Vanderheiden et al., 1996). In particular, the Web Content Accessibility Guidelines (WCAG) explain how to make Web content accessible to people with disabilities (W3C, 1999). Web "content" generally refers to the information in a Web page or Web application, including text, images, forms, sounds, etc. WCAG 1.0 provides 14 guidelines that are general principles of accessible design. Each guideline has one or more checkpoints that explain how the guideline applies in a specific area. WCAG foresees 3 levels of compliance, A, AA and AAA. Each level requires a stricter set of conformance guidelines, such as different versions of HTML (Transitional vs. Strict) and other techniques that need to be incorporated into code before accomplishing validation. Further to WCAG 1.0, in December 2008, the W3C published a new version of the guidelines, targeted to help Web designers and developers to create sites that better meet the needs of older users and users with disabilities. Drawing on extensive experience and community feedback, WCAG 2.0 (W3C, 2008) improves upon WCAG 1.0 and applies to more advanced technologies. Guidelines are also available for the usability of web interfaces on mobile devices (W3C, 2005). WCAG 2.0 guidelines are organized around four principles that provide the foundation for Web accessibility, namely perceivable, operable, understandable, and robust. The 12 guidelines provide the basic goals that authors should work toward in order to make content more accessible to users with different disabilities. The guidelines are not testable, but provide the framework and overall objectives to help authors understand the success criteria and better implement the techniques. 
For each guideline, testable success criteria are provided to allow WCAG 2.0 to be used where requirements and conformance testing are necessary such as in design specification, purchasing, regulation, and contractual agreements. In order to meet the needs of different groups and different situations, three levels of conformance are defined: A (lowest), AA, and AAA (highest). WCAG guidelines also address content used on mobile devices.
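The cumulative nature of the three conformance levels can be illustrated with a short Python sketch: level AA is reached only if all level A and level AA success criteria are satisfied, and level AAA only if all criteria at the three levels are satisfied. The function name `conformance_level` and the pass/fail results below are hypothetical, and the sketch deliberately ignores the other WCAG 2.0 conformance requirements (for example, that conformance applies to full pages and complete processes).

```python
LEVELS = ("A", "AA", "AAA")  # ordered from lowest to highest


def conformance_level(results):
    """Return the highest level whose criteria, and all lower ones, pass.

    results maps a success criterion id to a (level, passed) pair.
    Returns None when at least one level A criterion fails.
    A level with no listed criteria counts as satisfied.
    """
    achieved = None
    for level in LEVELS:
        outcomes = [passed for lvl, passed in results.values() if lvl == level]
        if not all(outcomes):
            break
        achieved = level
    return achieved


# Hypothetical evaluation results for four WCAG 2.0 success criteria.
sample_results = {
    "1.1.1": ("A", True),     # Non-text Content
    "2.1.1": ("A", True),     # Keyboard
    "1.4.3": ("AA", True),    # Contrast (Minimum)
    "1.4.6": ("AAA", False),  # Contrast (Enhanced)
}
print(conformance_level(sample_results))
```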

The use of guidelines is today the most widely adopted process by web authors for creating accessible web content. This approach has proven valuable for bridging a number of barriers faced today by people with disabilities. In addition, guidelines serve those with low levels of experience with computers, and facilitate interoperability with new and emerging technology solutions (e.g., navigator with voice recognition for car drivers). Additionally, guidelines constitute de facto standards, as well as the basis for legislation and regulation related to accessibility in many countries (Kemppainen, 2009). For example, Section 508 of the US Rehabilitation Act (Rehabilitation Act of 1973, Amendment of 1998) provides a comprehensive set of rules designed to help web designers make their sites accessible.

However, many limitations arise in the use of guidelines for a number of reasons. These include the difficulty in interpreting and applying guidelines, which require extensive training. Additionally, the process of using, or testing conformance to, widely accepted accessibility guidelines is complex and time consuming. To address this issue, several tools have been developed enabling the semi-automatic checking of html documents. Such tools make the development of accessible web content easier, particularly since the checking of conformance does not rely solely on the expertise of developers. Developers with limited experience in web accessibility can use such tools for evaluating web content and without the need to go through a large number of checklists.
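To give a flavor of what such semi-automatic checking involves, the sketch below uses Python's standard `html.parser` module to flag images that lack an `alt` attribute. The class name `AltTextChecker` and the function `check_html` are hypothetical, and the single rule shown is only one of the many checks that actual evaluation tools perform.

```python
from html.parser import HTMLParser


class AltTextChecker(HTMLParser):
    """Collects a message for every <img> tag without an alt attribute."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; names are lowercased.
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            line, _column = self.getpos()
            self.issues.append(f"line {line}: <img> has no alt attribute")


def check_html(source):
    """Return the list of issues found in an HTML string."""
    checker = AltTextChecker()
    checker.feed(source)
    checker.close()
    return checker.issues


sample = '<p>\n<img src="a.png" alt="A chart">\n<img src="b.png">\n</p>'
for issue in check_html(sample):
    print(issue)
```

The check is semi-automatic in the sense that it can detect a missing attribute, but deciding whether an existing text alternative is actually meaningful still requires human judgment.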

Despite the proven usefulness of WCAG for web accessibility, it is common for web content producers to ignore or overlook the guidelines, thus limiting the ability of disabled users to navigate through the information and services offered by a website. The guideline principles are therefore far from being well integrated, even in public Web sites where legislation mandates them. Recent studies reveal that web accessibility metrics are worsening worldwide (Basdekis et al., 2010).

As a final consideration, guidelines are usually applied following a “one-size-fits-all” approach to accessibility, which, while ensuring a basic level of accessibility for users with various types of disabilities, does not support personalization and improved interaction experience.

40.3.1.2 Other Accessibility Guidelines and Standards

Besides web accessibility, guidelines and standards are available also for other types of applications. For example, major software companies have launched accessibility initiatives and provide accessibility guidelines for developers using their tools and development environments. Examples are (Microsoft, 2013), (Adobe, 2013), and (IBM, 2013).

Accessibility of multimedia content is also addressed by international consortia, especially in the domain of education, e.g., (IMS, 2013), but also by content providers, e.g., (BBC, 2013).

Other accessibility-related activities by international, European and national standardization bodies are discussed in detail in (Engelen, 2009).

40.3.2 User Interface Adaptation

In the light of the above, it appears that design approaches focusing on the delivery of single interaction elements to be used by everybody offer limited possibilities of addressing the diverse requirements reflected in all users. Therefore, a critical property of interactive elements becomes their capability for some form of automatic adaptation and personalization (Stephanidis, 2001).

Adaptation-based approaches promote the design of products which are easily adaptable to different users by incorporating adaptable or customizable user interfaces. This entails an effort to build access features into a product, starting from its conception and continuing throughout the entire development life-cycle.

Methods and techniques for user interface adaptation meet significant success in modern interfaces. Some popular examples include the desktop adaptations in various versions of Microsoft Windows, offering, for example, the ability to hide or delete unused desktop items, and personalization features of the desktop based on the preferences of the user, by adding helpful animations, transparent glass menu bars, live thumbnail previews of open programs and desktop gadgets (like clocks, calendars, weather forecast, etc.). Similarly, Microsoft Office applications offer several customizations, such as toolbars positioning and showing/hiding recently used options. However, adaptations integrated into commercial systems need to be set manually, and mainly focus on aesthetic preferences. In terms of accessibility and usability, only a limited number of adaptations are available, such as keyboard shortcuts, size and zoom options, changing color and sound settings, automated tasks, etc.

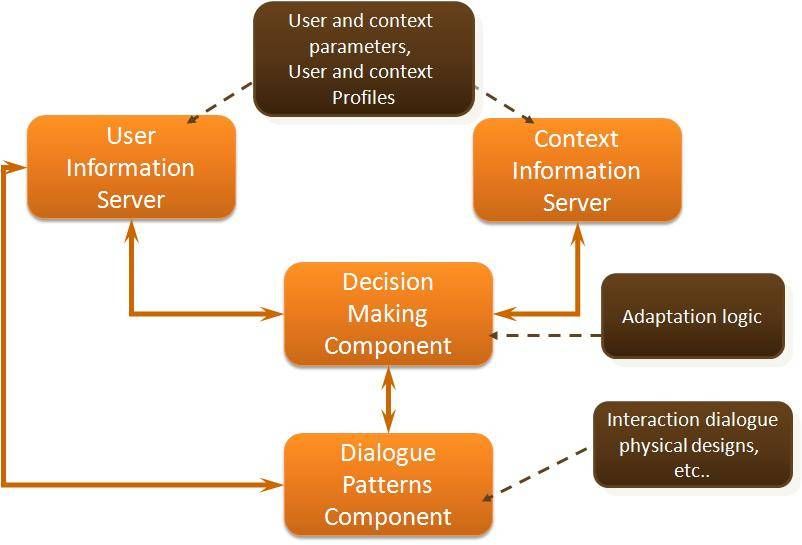

Research efforts in the past decades have elaborated more comprehensive and systematic approaches to user interface adaptations in the context of Design for All. The Unified User Interfaces methodology was conceived and applied (Savidis and Stephanidis, 2009) as a vehicle to ensure, efficiently and effectively through automatic adaptation, the accessibility and usability of UIs to users with diverse characteristics, also supporting technological platform independence, metaphor independence, and user-profile independence. Automatic UI adaptation seeks to minimize the need for a posteriori adaptations and deliver products that can be adapted for use by the widest possible end user population (adaptable user interfaces). This implies the provision of alternative interface instances depending on the abilities, requirements, and preferences of the target user groups, as well as the characteristics of the context of use (e.g., technological platform, physical environment). The main objective is to ensure that each end-user is provided with the most appropriate interactive experience at run-time.
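The idea of selecting an interface instance from user and context characteristics can be made concrete with a small rule-based sketch in Python. It is not the decision-making mechanism of the Unified User Interfaces methodology; the classes `UserProfile` and `Context`, the function `decide_interface`, and every attribute value and rule are assumptions introduced purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    vision: str = "full"   # hypothetical values: "full", "low", "none"
    motor: str = "full"    # hypothetical values: "full", "limited"


@dataclass
class Context:
    screen: str = "desktop"  # hypothetical values: "desktop", "mobile"
    noisy: bool = False


def decide_interface(user, context):
    """Map a user profile and a context of use to interface settings."""
    ui = {"output": "visual", "font_scale": 1.0,
          "input": "pointer", "audio_cues": False}

    # Rules driven by user abilities.
    if user.vision == "none":
        ui.update(output="speech", input="keyboard")
    elif user.vision == "low":
        ui.update(font_scale=2.0, audio_cues=True)
    if user.motor == "limited":
        ui["input"] = "switch_scanning"

    # Rules driven by the context of use.
    if context.screen == "mobile" and ui["output"] == "visual":
        ui["font_scale"] = max(ui["font_scale"], 1.25)
    if context.noisy:
        ui["audio_cues"] = False  # audio cues would be masked by noise

    return ui


print(decide_interface(UserProfile(vision="low"), Context(noisy=True)))
```

Keeping the rules separate from the interface components is what allows new user groups or contexts to be accommodated by adding rules rather than by redesigning the interface after the fact.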

Designing for automatic adaptation is a complex process. Designers should be prepared to cope with large design spaces to accommodate design constraints posed by diversity in the target user population and the emerging contexts of use. Therefore, designers need accessibility knowledge and expertise. Moreover, user adaptation must be carefully planned, designed and accommodated into the life-cycle of an interactive system, from the early exploratory phases of design, through to evaluation, implementation, deployment, and maintenance.

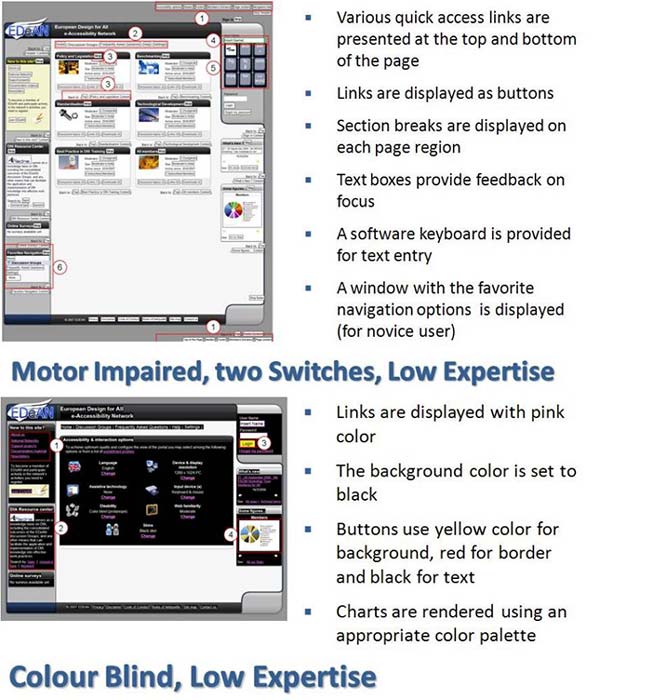

Author/Copyright holder: Stephanidis. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.6: The architecture of an adaptable user interface

A series of tools and components have been developed to support Unified User Interface design, including toolkits of adaptable interaction objects, languages for user profiling and adaptation decision making, and prototyping tools (Stephanidis et al., 2012). These tools are targeted to support the design and development of user interfaces capable of adaptation behavior, and more particularly the conduct and application of the Unified User Interface development approach. Over the years, these tools have demonstrated the technical feasibility of the approach and have contributed to reducing the practice gap between traditional user interface design and design for adaptation. They have been applied in a number of pilot applications and case studies.

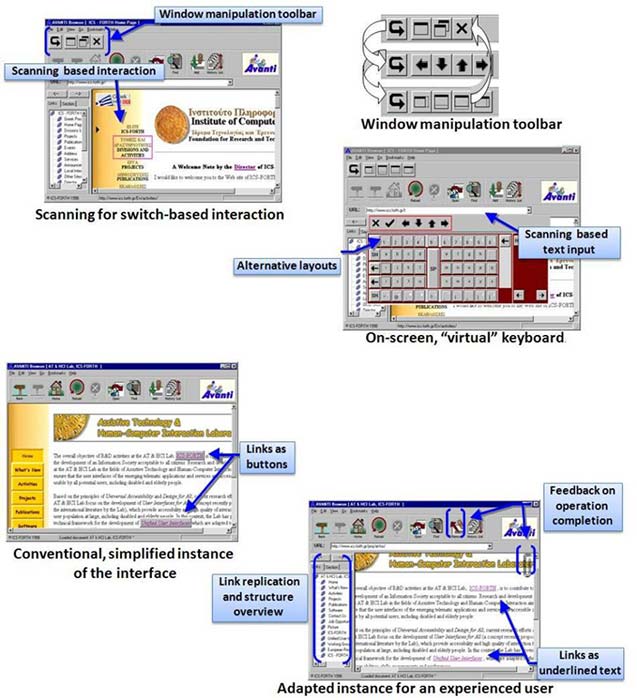

Author/Copyright holder: Stephanidis et al., 2001. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.7: AVANTI browser: a universally accessible web browser with a unified user interface. The AVANTI browser provides an interface to web-based information systems for a range of user categories, including: (i) “able-bodied” people; (ii) blind people; and (iii) motor-impaired people with different degrees of difficulty in employing traditional input devices. Stephanidis et al., 2001

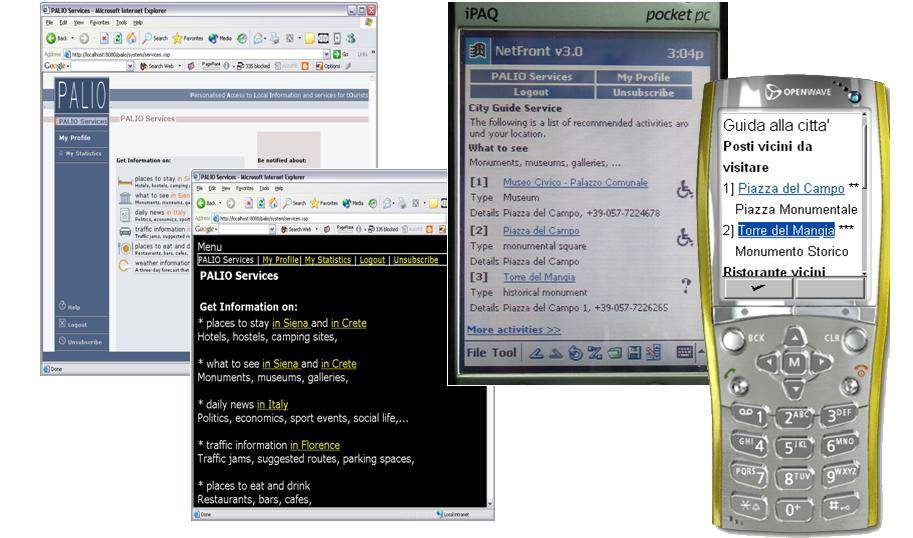

Author/Copyright holder: Stephanidis et al., 2005. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.8: PALIO: a system that supports the provision of web-based services exhibiting automatic adaptation behavior based on user and context characteristics, as well as the user current location. Stephanidis et al., 2005

Author/Copyright holder: Grammenos et al., 2005. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.9: UA-Chess: a universally accessible multi-modal chess game, which can be played between two players, including people with disabilities (low-vision, blind and hand-motor impaired), either locally on the same computer, or remotely over the Internet. Grammenos et al., 2005

Author/Copyright holder: Doulgeraki et al., 2009. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 40.10: EDeAN Portal: an adaptable web portal for the support of the activities of the EDeAN Network. Adaptations are performed server-side using a toolkit of adaptable interaction objects. Doulgeraki et al., 2009

40.3.3 Accessibility in the cloud

The emergence of cloud computing in recent years is opening new opportunities for the provision of accessibility. Accessibility in the cloud is another approach targeted to move away from the concept of special "assistive technologies" and "disability access features" and towards providing more mainstream interface options for everyone, i.e., interfaces appropriate for people facing barriers in the use of interactive technologies due to disability, literacy or age-related issues, but also for people who just want a simpler interface, have a temporary disability, want access when their eyes are busy doing something else, want to rest their hands or eyes, or want to access information in noisy environments (Vanderheiden et al., 2013). Thus, the approach is targeted to everybody, including people with specific disabilities, such as (indicatively) blind and low-vision users, motor-impaired users, users with cognitive impairments, hearing-impaired users, and speech-impaired users.

The main objective is to create an infrastructure that will support the creation of solutions that correspond to and respect the full range of human diversity. New systems need to allow prospective users to access and use solutions not just on a single computer, but on all of the different computers and ICT that they must use (at home, at work, when travelling, etc).

The infrastructure will enable users to declare requirements in functional terms (whether or not they fall into traditional disability categories) and allow service providers, crowd sourcing mechanisms, and commercial entities to respond to these requirements. This will mean that users with disabilities do not need to be constrained by their diagnostic categories, thus avoiding stereotyping and recognizing that everyone’s requirements are different and that each individual’s requirements may change according to the context.

Technically the approach is based on the creation of an explicit and implicit user profile (stored either locally or in the cloud), that automatically matches mainstream products and services with necessary access features and configures them according to the user’s preferences and context of use. The infrastructure would consist of enhancements to platform and network technologies to simplify the development, delivery and support of access technologies and provide users with a way to instantly apply the access techniques and technologies they need, automatically, on any computers or other ICT.
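A minimal Python sketch of the matching step might look as follows. It assumes, purely for illustration, that explicitly declared preferences take precedence over implicitly inferred ones and that each device advertises the set of settings it supports; the function `resolve_settings` and all setting names are hypothetical and do not describe the actual infrastructure.

```python
def resolve_settings(explicit, implicit, device_features):
    """Combine a user's preferences and match them to one device.

    explicit: preferences the user declared (take precedence).
    implicit: preferences inferred from observed use.
    device_features: names of the settings the device can apply.
    Returns (applied settings, sorted names of unsupported settings).
    """
    merged = {**implicit, **explicit}  # explicit values override implicit
    applied = {name: value for name, value in merged.items()
               if name in device_features}
    unsupported = sorted(set(merged) - set(device_features))
    return applied, unsupported


# Hypothetical profile, stored locally or in the cloud.
explicit = {"font_scale": 1.5, "screen_reader": True}
implicit = {"font_scale": 1.2, "high_contrast": True}

# Hypothetical public kiosk that offers only two of the settings.
kiosk_features = {"font_scale", "high_contrast"}

applied, unsupported = resolve_settings(explicit, implicit, kiosk_features)
print(applied)
print(unsupported)
```

Reporting the settings a device cannot honor, instead of silently dropping them, gives the infrastructure a chance to supply the missing access feature from elsewhere.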

Currently, the infrastructure is in its conceptualization and development phases. Although its basic concepts are based on past work and implementations, current efforts attempt to apply them together across the different platforms and technologies, and in a manner that can scale to meet the need. A wide range of delivery options is currently under development and testing, including auto-personalization of different OSs, browsers, phones, web apps, kiosks, ITMs, DTVs, smart homes and assistive technologies (cloud and installed).

40.4 Design methods and techniques

The emergence of user-centered design (see section Brief History) has led to the development and practice of a wide variety of design methods and techniques, mostly originating from the social sciences, psychology, organizational theory, creativity and arts, as well as from practical experience (Maguire and Bevan, 2002). Many of these techniques are based on the direct participation of users or user representatives in the design process. However, the vast majority of available techniques have been developed with the “average” able-bodied user and the working environment in mind.

In Design for All, this precondition no longer holds, and the basic design principle of “knowing the user” becomes “knowing the diversity of users”. Therefore, the issue arises of which methods and techniques can be fruitfully employed while addressing diversity in design, and how such techniques need to be used, revised and modified to optimally achieve this purpose. This is further complicated by the fact that, in Design for All, more than one group of users with diverse characteristics and requirements need to be taken into account (Antona et al., 2009).

Practical and organizational aspects of the involvement process play an important role when non-traditional user groups are addressed, and are critical to the success of the entire effort. Their importance should not be underestimated.

Very few design methods can be used as they stand when addressing diverse user groups. One of the main issues is therefore how to appropriately adapt and fine-tune methods to the characteristics of the people involved. Methods are mostly based on communication between users and other stakeholders in the design process. Therefore, the communication abilities of the involved users should be a primary concern.

40.4.1 Observation

Popular methods of exploring the user experience come from field-research in anthropology, ethnography and ethnomethodology (Beyer and Holtzblatt, 1997).

Direct observation is one of the hallmark methods of ethnographic approaches. It involves an investigator viewing users as they conduct some activity. The goal of field observation is to gain insight into the user experience as experienced and understood within the context(s) of use.

Examining the users in context is claimed to produce a richer understanding of the relationships between preference, behavior, problems and values.

Observation sessions are usually video-recorded, and the videos are subsequently analyzed. The effectiveness of observation and other ethnographic techniques can vary, as users have a tendency to adjust the way they perform tasks when knowingly being watched. The observer needs to be unobtrusive during the session and only pose questions when clarification is necessary.

Field studies and direct observation can be used when designing for users with disabilities and older users. This method does not specifically rely on the participants’ communication abilities, and is therefore useful when the users have cognitive disabilities. Observational studies have been conducted with blind users in order to develop design insights for enhancing interactions between a blind person and everyday technological artifacts found in their home such as wristwatches, cell phones or software applications (Shinohara, 2006). Analyzing situations where work-arounds compensate for task failures reveals important insights for future artifact design for the blind, such as tactile and audio feedback, and facilitation of user independence.

A difficulty with direct observation studies is that they may in some cases be perceived as a form of “invasion” of the user’s space and privacy, and therefore may not be well accepted, for example, by disabled or older people who are not keen to reveal their problems in everyday activities.

40.4.2 Surveys and Questionnaires

User surveys, originating from social science research, involve administering a set of written questions to a sample population of users, and are usually targeted to obtaining statistically relevant results. Questionnaires are widely used in HCI, especially in the early design phases but also for evaluation. Questionnaires need to be carefully designed in order to obtain meaningful results (Oppenheim, 2000).

Research shows that there are age differences in the way older and younger people respond to questionnaires. For example, older people tend to use the “Don't know” response more often than younger people. They also seem to use this answer when faced with questions that are complex in syntax. Their responses also seem to avoid the extreme ends of ranges. Having the researcher administer the questionnaire directly to the user may help to retrieve more useful and insightful information (Eisma et al., 2004). In-home interviews are effective in producing a wealth of information from the user that could not have been obtained by answering a questionnaire alone (Dickinson et al., 2002).

Since questionnaires and surveys address a wide public, and it is not always possible to be aware of the exact user characteristics (i.e., if they use Braille or if they are familiar with computers and assistive hardware and software), they should be available either in alternative formats or in accessible electronic form. The simple and comprehensible formulation of questions is vital. Questions must also be focused to avoid gathering large amounts of irrelevant information.

40.4.3 Interviews

Interviews are another ethnographically-inspired user requirements collection method. In HCI, as generally in software system development, it is a commonly used technique where users, stakeholders and domain experts are questioned to obtain information about their needs or requirements in relation to a system (Macaulay, 1996). Interviews can be unstructured (i.e., no specific sequence of questions is followed), structured (i.e., questions are prepared and ordered in advance) or semi-structured (i.e., based on a series of fixed questions with scope for the user to expand on their responses). The selection of representative users to be interviewed is important in order to obtain useful results. Interviews on a customer site by representatives from the system development team can be very informative.

Semi-structured interviews have been used to identify accessibility issues in mobile phones for blind and motor-impaired users (Smith-Jackson et al., 2003). With older people, interviews have also proven to be an effective means of gathering user requirements, although in-home interviews can be even more productive, because they tend to lead to spontaneous excursions into users' own experiences, and demonstrations of various personal devices used.

Obviously, interviews present difficulties when deaf people are involved, and sign-language translation may be necessary. Interviews are often avoided when the target user group is composed of cognitively and communication impaired people. Recently, a trend to conduct interviews on-line using chat tools has also emerged. An obvious consideration in this respect is that the used chat tool must be accessible and compatible with screen readers.

40.4.4 Activity Diaries and Cultural Probes

Diary keeping is another ethnographically inspired method which provides a self-reported record of user behavior over a period of time (Gaver et al., 1999). Participants are required to record activities they are engaged in during a normal day. Diaries allow identifying patterns of behavior that would not be recognizable through short-term observation. However, they require careful design and prompting if they are to be employed properly by participants. Diaries can be textual, but also visual, employing pictures and videos. Generalizing the concept of diaries, “cultural probes” are based on “kits” containing a camera, voice recorder, a diary, postcards and other items. They have been successfully employed for user requirements elicitation in home settings with sensitive user groups, such as former psychiatric patients and the elderly (Crabtree et al., 2003). Reading and writing a paper-based diary may be a difficult process for the blind and users with motor impairments. Therefore, diaries in electronic forms or audio recorded diaries should be used in these cases.

40.4.5 Group discussions

Brainstorming, originating from early approaches to group creativity, is a process where participants from different stakeholder groups engage in informal discussion to rapidly generate as many ideas as possible. All ideas are recorded, and criticism of others’ ideas is forbidden. Overall, brainstorming can be considered as appropriate when the users to be involved have good communication abilities and skills (not necessarily verbal), but can also be adapted to the needs of other groups. This may have implications in terms of the pace of the discussion and generation of ideas.

Focus groups are inspired by market research techniques. They bring together a cross-section of stakeholders in a discussion group format. The general idea is that each participant can act to stimulate ideas, and that by a process of discussion, a collective view is established (Bruseberg and McDonagh-Philp, 2001). Focus groups typically involve six to twelve persons, guided by a facilitator. Several discussion sessions may be organized.

The main advantage of using focus groups for users with disabilities is that it does not discriminate against people who cannot read or write and it can encourage participation from people reluctant to be interviewed on their own or who feel they have nothing to say. During focus groups, various materials can be used for review, such as storyboards (see section Scenario, Storyboards and Personas).

This method should not be employed for requirements elicitation if the target user group has severe communication problems. Moreover, it is important that the discussion leader manages the discussion effectively and efficiently, allowing all users to participate actively in the process, regardless of their disability.

Focus groups have been used for eliciting expectations and needs from the learning disabled, as it was felt they would result in the maximum amount of quality data (Hall and Mallalieu, 2003). They allow a range of perspectives to be gathered in a short time period in an encouraging and enjoyable way. This is important, as, typically, people with learning disabilities have a low attention span.

Concerning older people, related research has found that it is not easy to keep a focus group of older people focused on the subject being discussed (Newell et al., 2007). Participants tend to drift away from the subject matter, since for them the focus group meeting is a chance to socialize. Thus, it is important to provide a social gathering as part of the experience of working with IT researchers rather than treat them simply as participants.

40.4.6 Empathic modeling

Empathic modeling is a technique intended to help designers/developers put themselves in the position of a disabled user, usually through disability simulation (Nicolle and Maguire, 2003). This technique was first applied to simulate age-related visual changes in a variety of everyday environmental tasks, with a view to eliciting the design requirements of the visually impaired in different architectural environments. Empathic modeling can be characterized as an informal technique, and there are no specific guidelines on how to use it.

Modeling techniques for specific disabilities can be applied using simple equipment:

Visual impairment due to cataracts can be simulated with the use of an old pair of glasses smeared with Vaseline.

Total blindness is easy to simulate using a scarf or a bandage tied over the eyes.

Total hearing loss can be easily simulated using earplugs.

Upper limb mobility impairments can be simulated with the use of elastic bands and splints.

40.4.7 Scenario, Storyboards and Personas

Scenarios are widely used in requirements elicitation and, as the name suggests, are narrative descriptions of interactive processes, including user and system actions and dialogue. Scenarios give detailed realistic examples of how users may carry out their tasks in a specified context with the future system. The primary aim of scenario building is to provide examples of future use as an aid to understanding and clarifying user requirements and to provide a basis for later usability testing. Scenarios can help identify usability targets and likely task completion times (Carroll, 1995).

Storyboards are graphical depictions of scenarios, presenting sequences of images that show the relationship between user actions or inputs and system outputs. Storyboarding originated in the film, television and animation industry. A typical storyboard contains a number of images depicting features such as menus, dialogue boxes, and windows (Truong et al., 2006).

Another scenario-related method is called personas (Cooper, 1999), where a model of the user is created with a name, personality and picture, to represent each of the most important user groups.

The persona model is an archetypical representation of real or potential users. It is not a description of a real user or an average user. The persona represents patterns of users’ goals and behavior, compiled in a fictional description of a single individual. Potential design solutions can then be evaluated against the needs of particular personas and the tasks they are expected to perform.

Zimmermann and Vanderheiden (2008) propose a methodology based on the use of scenarios and personas to capture the accessibility requirements of older people and people with disabilities and structure accessibility design guidelines. The underlying rationale is that the use of these methods has great potential to make this type of requirement more concrete and comprehensible for designers and developers who are not familiar with accessibility issues.

However, really reliable and representative personas can take a long time to create. Additionally, personas may not be well suited to presenting detailed technical information, e.g., about disability, and their focus on representative individuals can make it more complex to capture the range of abilities in a population.

Storyboarding is clearly not optimal for blind users, and it requires particular care for users with limited vision or color-blindness. In contrast, it appears to be a promising method for deaf or hearing-impaired users.

40.4.8 Prototyping

A prototype is a concrete representation of part or all of an interactive system. It is a tangible artifact, does not require much interpretation, and can be used by end users and other stakeholders to envision and reflect upon the final system (Beaudouin-Lafon and Mackay, 2002).

Prototypes, also known as mockups, serve different purposes and thus take different forms:

Off-line prototypes (also called paper prototypes) include paper sketches, illustrated story-boards, cardboard mock-ups and videos. They are created quickly, usually in the early stages of design, and they are usually thrown away when they have served their purpose.

On-line prototypes, on the other hand, include computer animations, interactive video presentations, and applications developed with interface builders.

Prototypes also vary regarding their level of precision, interactivity and evolution. With respect to the latter, rapid prototypes are created for a specific purpose and then thrown away, iterative prototypes evolve, either to work out some details (increasing their precision) or to explore various alternatives, and evolutionary prototypes are designed to become part of the final system.

Research has indicated that the use of prototypes is more effective than other methods, such as interviews and focus groups, when designing innovative systems for people with disabilities, since potential users may have difficulty imagining how they might undertake familiar tasks in new contexts (Petrie et al., 1998). Using prototypes can be a useful starting point for speculative discussions, enabling the users to provide rich information on details and preferred solutions.

Prototypes are usually reviewed through user-trials, and therefore all considerations related to user trials and evaluation are pertinent. An obvious corollary is that prototypes must be accessible in order to be tested with disabled people. This may be easier to achieve with on-line prototypes, closely resembling the final system, than with paper prototypes.

40.4.9 User trials

In user trials, a product is tested by “real users” trying it out in a relatively controlled or experimental setting, following a standardized set of tasks to perform. User trials are performed for usability evaluation purposes. However, the evaluation of existing or competitive systems, or of early designs or prototypes, is also a way to gather user requirements (Maguire and Bevan, 2002).

While there are wide variations in where and how a user trial is conducted, every user trial shares some characteristics. The primary goal is to improve the usability of a product by observing participants who are representative of real users as they use the product to carry out real tasks; the data collected is analyzed afterwards. In field studies, the product or service is tested in a “real-life” setting.

In user trials, an appropriately equipped room needs to be available for each session. When planning the test, it should be taken into account that trials with elderly users and users with disabilities may require more time than usual if participants are to complete the test without anxiety and frustration.

Research on the use of the most popular methods has indicated that modifications to well established user trial methods are necessary when users with disabilities are involved. For example, the think aloud protocol has been adapted to be applied differently when carrying out user trials with deaf users and blind users respectively (Chandrashekar et al., 2006; Roberts and Fels, 2006).

Furthermore, explicitly emphasizing during the instructions that it is the product that is being tested and not the user is very important (Poulson, Ashby and Richardson, 1996), since a trial may reveal serious problems with the product, to the extent that it may not prove possible to carry out some tasks. Therefore, it is important that users do not feel uncomfortable and attribute the product failure to their disability.

When the user trial participants are users with upper limb motor impairments and poor muscle control, it should be ensured that testing sessions are short, so as to prevent excessive fatigue.

Testing applications with children requires special planning and care. Guidelines have been developed for conducting usability testing with children. These guidelines provide a useful framework to obtain maximum feedback from children, while at the same time ensuring their comfort, safety and sense of well-being (Hanna, Risden and Alexander, 1997). The need to involve children in every stage of design is particularly important in the case of children’s technology, because for adult designers it is difficult (and often incorrect) to make assumptions about how a child may view or interpret data.

40.4.10 Cooperative and Participatory Design

Participatory design may adopt a wide variety of techniques, including brainstorming, scenario building, interviews, sketching, storyboarding and prototyping, with the full involvement of users.

Traditionally, partnership design techniques have been used for gathering user requirements from adult users. However, in the past few years a number of research projects have shown ways to adapt these techniques to benefit the technology design process for non-traditional user groups, such as children and the elderly.

Cooperative inquiry has been widely used to enable young children to have a voice throughout the technology development process (Druin, 1999), based on the observation that although children are emerging as frequent and experienced users of technology, they were rarely involved in the development process. In these efforts, alterations were made to the traditional user requirement gathering techniques used in the process in order to meet the children’s needs. For example, the adult researchers used note-taking forms, whereas the children used drawings with small amounts of text to create cartoon-like flow charts. Overall, involving children in the design process as equal partners was found to be a very rewarding experience and one that produced exciting results in the development of new technologies.

Designing technology applications to support older people in their homes has also become increasingly necessary as the population of the developed world ages. However, designing for this group of users is not an easy process, as developers and designers often fail to fully grasp the problems that this user group faces when using technologies that affect their everyday life. HCI research methods need to be adjusted when used with this user group. They have to take into consideration that older adults experience a wide range of age-related impairments, including loss of vision, hearing, memory and mobility, which ultimately also contribute to loss of confidence and difficulties in orientation and absorption of information.

Participatory design techniques can help designers reduce the intergenerational gap between them and older people, and help better understand the needs of this group of users (Demirbilek and Demirkan, 2004). When older people participate in the design process from the start, their general fear of using technology decreases, because they feel more in control and confident that the end result of the design process has truly taken their needs into consideration.

40.4.11 Summary of design methods and techniques

The table below (adapted from Antona et al., 2009) summarises the design methods and techniques discussed in the previous sections, suggesting an indicative path towards method selection for different target user groups.

Method | Disability: Motion | Disability: Vision | Disability: Hearing | Disability: Cognitive / Communication | Age: Children | Age: Elderly
--- | --- | --- | --- | --- | --- | ---
Direct observation | ✓ | ✓ | ✓ | ✓ | ✓ | ✓
Survey and questionnaires | ∎ | ∎ | ∎ | ⌧ | ∎ | ∎
Interviews | ✓ | ✓ | ∎ | ⌧ | ∎ | ∎
Activity diaries and cultural probes | ∎ | ∎ | ✓ | ∎ | ∎ | ✓
Group discussions | ✓ | ✓ | ∎ | ⌧ | ∎ | ∎
Empathic modeling | ✓ | ✓ | ✓ | ⌧ | ⌧ | ⌧
Scenarios, storyboards and personas | ✓ | ✓ | ✓ | ✓ | ✓ | ✓
Prototyping | ✓ | ✓ | ✓ | ✓ | ✓ | ✓
User trials | ∎ | ∎ | ∎ | ∎ | ∎ | ∎
Cooperative and participatory design | ✓ | ✓ | ✓ | ∎ | ∎ | ∎

Legend: ✓ Appropriate; ∎ Needs modifications and adjustments; ⌧ Not recommended

Table 40.1
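
For readers who want to use the ratings in Table 40.1 programmatically, for instance in a project planning tool, the table can be encoded as a simple lookup structure. The following Python fragment is only a minimal sketch under stated assumptions: the names Fit, GROUPS, TABLE_40_1 and methods_for are hypothetical, and the rule for combining several target groups (take the least favourable rating and drop methods that are not recommended for any of the groups) is an illustrative choice, not a procedure proposed by Antona et al. (2009).

```python
from enum import IntEnum


class Fit(IntEnum):
    """Ratings from the legend of Table 40.1, ordered from best to worst."""
    APPROPRIATE = 0        # check mark in the table
    NEEDS_ADJUSTMENT = 1   # "needs modifications and adjustments"
    NOT_RECOMMENDED = 2    # "not recommended"


# Column order of Table 40.1 (identifiers are hypothetical).
GROUPS = ("motion", "vision", "hearing",
          "cognitive_communication", "children", "elderly")

A, M, N = Fit.APPROPRIATE, Fit.NEEDS_ADJUSTMENT, Fit.NOT_RECOMMENDED

# One row per method, one rating per column, transcribed from Table 40.1.
TABLE_40_1 = {
    "Direct observation":                   (A, A, A, A, A, A),
    "Survey and questionnaires":            (M, M, M, N, M, M),
    "Interviews":                           (A, A, M, N, M, M),
    "Activity diaries and cultural probes": (M, M, A, M, M, A),
    "Group discussions":                    (A, A, M, N, M, M),
    "Empathic modeling":                    (A, A, A, N, N, N),
    "Scenarios, storyboards and personas":  (A, A, A, A, A, A),
    "Prototyping":                          (A, A, A, A, A, A),
    "User trials":                          (M, M, M, M, M, M),
    "Cooperative and participatory design": (A, A, A, M, M, M),
}


def methods_for(target_groups):
    """Map each usable method to its worst rating across the target groups.

    Assumption (not from the source): a method rated "not recommended"
    for any of the target groups is left out of the result.
    """
    groups = list(target_groups)
    if not groups:
        raise ValueError("at least one target group is required")
    unknown = set(groups) - set(GROUPS)
    if unknown:
        raise ValueError(f"unknown groups: {sorted(unknown)}")
    columns = [GROUPS.index(g) for g in groups]
    result = {}
    for method, ratings in TABLE_40_1.items():
        worst = max(ratings[c] for c in columns)
        if worst != Fit.NOT_RECOMMENDED:
            result[method] = worst
    return result


if __name__ == "__main__":
    # Example: candidate methods for a study with older users who have
    # visual impairments.
    for method, fit in methods_for(["vision", "elderly"]).items():
        print(f"{method}: {fit.name}")
```

As with the table itself, the output of such a lookup is only indicative; the adaptations discussed in the previous sections still have to be worked out for each method and each group of participants.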

40.5 Interaction Techniques

40.5.1 Speech

Speech-based interactions allow users to communicate with computers or computer-related devices without the use of a keyboard, mouse, buttons, or other physical interaction devices. Speech-based interactions leverage a skill that is mastered early in life, and have the potential to be more natural than other technologies such as the keyboard. Speech-based interaction is of particular interest for children, older adults, and individuals with disabilities (Feng and Sears, 2009). Additionally, speech is a compelling input alternative when the user’s hands are busy with another task (e.g., driving a car, conducting medical procedures) and the traditional keyboard and mouse may be inaccessible or inappropriate. Based on the input and output channels being employed, speech interactions can be categorized into three groups:

Speech output systems, which include applications that only utilize speech for output while leveraging other technologies, such as the keyboard and mouse, for input. Screen access software, which is often used by individuals with visual impairments, is an example of speech output.