This chapter covers why and how Semiotics can help advance some of the major goals of Human-Computer Interaction (HCI) and be useful when designing interactive products. It begins with brief definitions and explanations of a few central concepts in Semiotics. This is followed by a discussion of harder challenges involved in bringing Semiotics into the domain of HCI research and the consequences of viewing computers as media. Following Semiotic Engineering concepts, which we have been developing and using for two decades now, we then revisit computer-mediated communication in view of 21st century literacy issues. First, we show that basic computing skills exhibited by contemporary users are in fact semiotic engineering abilities of the same sort as required from professional designers. Then we show how these skills can leverage an individual’s participation in a variety of social processes. In conclusion, the chapter presents our personal answer to the question that most readers certainly have in mind: ‘So, what’s in it for me?’

25.1 Introduction

Semiotics and HCI have more in common than is usually acknowledged on either side of the cultural divide that has been keeping them apart. Researchers and professional practitioners working with these disciplines have different interests and perspectives when selecting, applying and building knowledge. As a result, mutual understanding has not only been rare, but has usually been perceived as “more cost than benefit”.

This chapter is an essay on why and how Semiotics can help advance some of the major goals in HCI. It begins with a definition of Semiotics and a brief explanation of a few central concepts that will be used throughout the chapter. Next, it discusses some deeper challenges of using Semiotics in the context of HCI research. Then it explores the notion of computers as media, the hallmark of all semiotic approaches to HCI proposed to date. It highlights the interdisciplinary work of pioneers like Mihai Nadin and Peter Bøgh Andersen and ends with a description of Semiotic Engineering, a comprehensive semiotic theory of HCI which we have been developing and using at SERG, the Semiotic Engineering Research Group in Rio de Janeiro, since 1990. In subsequent sections, we take a closer look at computer-mediated communication in view of contemporary opinions that having basic programming skills is as important for citizens of the 21st century as reading, writing and counting have been in the 20th century and before. Some examples of basic computing literacy skills exhibited by contemporary users are framed as semiotic engineering abilities of the same sort as required from professional HCI designers. This helps us to show why computers are indeed the most pervasive media used by contemporary societies, and it points to computing literacy issues in which HCI is not only necessarily involved but also has clear vested interests. The chapter then provides our answer to the provocative question implied by its title, proposed by the editors of the Encyclopedia of HCI: “So, what’s in it for me?” The answer, I hope, will attract more attention to this fascinating discipline in times when computers so obviously coalesce and transform society’s means of communication and participation.

25.2 Some preliminary definitions

Semiotics is the study of signs. Although strictly correct, this definition is not helpful for those who do not know what signs are and how they can be studied. So, let us begin with additional definitions. The selected material will also give the reader a flavor of the cultural divide between Semiotics and HCI as expressed by terminology and conceptual framing.

In the Encyclopedia of Semiotics (Bouissac 1998), the entry for “sign” explores numerous variations in the way philosophers and semioticians have defined the central object of interest in this discipline over the centuries. Here are two of them:

In the tradition inaugurated by Ferdinand de Saussure: “[...] the sign can be understood as a correlation of differences.” (Bouissac 1998: p. 573)

In the tradition inaugurated by Charles Sanders Peirce: “[...] the defining characteristic of signs is their capacity to determine additional signs [in the mind].” (Bouissac 1998: p. 574)

Saussure and Peirce are the founding fathers of Semiotics. Although they were contemporaries (Saussure died in 1913 and Peirce in 1914), they lived on different continents (Europe and North America, respectively) and followed completely independent paths. Saussure was a linguist, interested in a formal characterization of natural languages. Peirce was a logician, interested in meaning and knowledge discovery processes. The definitions above are both very true to their respective theories, but they are also mysterious for non-semioticians. I chose them because they also illustrate rhetorical differences between disciplines, which we have to understand in order to reap the benefits of interdisciplinary research.

25.2.1 Saussure

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

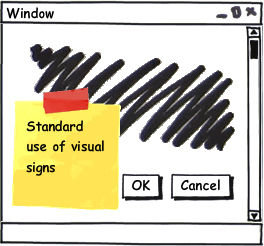

Figure 25.1: Oppositions in form that do not correspond to oppositions in meaning

Keeping in mind that Saussure (Saussure 1972) was focused almost exclusively on linguistic signs, the correlates with which he defined the sign were: an acoustic image (the ‘signifiant’, or signifier); and a concept (the ‘signifié’, or signified). The former is a physical entity, whereas the latter is an abstract one. Differences determine what constitutes a sign at various levels and dimensions. Just as a brief illustration, take the word ‘privacy’ in English. Its acoustic image in spoken language is not unique: some say |'prɪvəsi|, while others say |'praɪvəsi|. Although in English the long vowel |aɪ| is different from the short vowel |ɪ| (e. g. |baɪt| and |bɪt| are not the same word), this difference is neutralized in words like ‘privacy’: The concept, no matter the pronunciation, is the same. So, what is a sign? In phonology, |aɪ| and |ɪ| are signs because they have significant differences. One is associated with the concept of ‘long vowel’, and the other with ‘short vowel’. However, in syntax or semantics |'prɪvəsi| and |'praɪvəsi| are not distinct signs.

Difference, as the reader can infer, is a very powerful principle in meaning making and sense making. In natural languages, differences and oppositions are systemic. That is, certain opposing combinations are recurrent and meaningful in the language, whereas others aren’t. Therefore, a language can be described as an inventory of signs and sign combinations (or structures), for which the correlation between the signifiant and the signifié can be formally established by systemic differences. Here is a simple example of how this principle can be applied in the context of HCI.

Figure 25.2: Well-formed sign structures in HCI

Figure 25.3: Ill-formed sign structures in HCI

In Figure 25.1, we see different renditions of a familiar window control element. The signifiants constitute different signs in the equivalent of HCI phonology. Nevertheless, in HCI syntax and semantics, the whole collection corresponds to a single lexical item (a single sign for ‘close window’). In Figure 25.2 and Figure 25.3, we see the parallel with Saussure’s patterns of structures. In Figure 25.2, the ‘close window’ sign is combined with other signs that are systemically used in conjunction with it, whereas in Figure 25.3 it is combined with signs that should never co-occur with it. Therefore, in this interface language, only the first of the two structures is well-formed.
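The two levels described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the rendition names, the lexical items and the sets of co-occurring signs are all hypothetical, since the actual contents of the figures are not reproduced in the text. The sketch shows several signifiers collapsing into one lexical item, and a structure being well-formed only if its combination of items is one the system recognizes.

```python
from typing import Iterable

# Hypothetical interface "phonology": distinct renditions (signifiers)
# mapped to the lexical item (signified) they stand for.
RENDITIONS = {
    "x-plain": "close_window",
    "x-red-circle": "close_window",
    "x-grey-box": "close_window",
    "dash": "minimize_window",
    "square": "maximize_window",
    "magnifier": "search",
}

# Hypothetical interface "syntax": combinations of lexical items that
# are systemically used together in one structure.
WELL_FORMED_STRUCTURES = [
    frozenset({"minimize_window", "maximize_window", "close_window"}),
    frozenset({"close_window"}),
]


def lexical_item(rendition: str) -> str:
    """Return the single lexical item that a rendition stands for."""
    return RENDITIONS[rendition]


def is_well_formed(renditions: Iterable[str]) -> bool:
    """A structure is well-formed if its items form a recognized combination."""
    items = frozenset(lexical_item(r) for r in renditions)
    return items in WELL_FORMED_STRUCTURES


# Different forms, same sign at the level of syntax and semantics.
print(lexical_item("x-plain") == lexical_item("x-red-circle"))
# A recognized combination versus one that should never co-occur.
print(is_well_formed(["dash", "square", "x-grey-box"]))
print(is_well_formed(["magnifier", "x-plain"]))
```

Listing the allowed combinations explicitly, rather than deriving them from rules, mirrors the idea of a language as an inventory of signs and sign structures; a real interface toolkit would of course encode such conventions very differently.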

Note that signifiant/signifié correlations in interface languages are much weaker than in natural languages. Semiotic correlations are programmed into software. Compared to natural language, this can be a blessing and a curse. The blessing is that in interface languages, unlike in natural languages, the conceptual correlate of signs can (and must) be fully specified and instantiated by a mechanical process. Thus, we always have access to the origin of meaning. The curse is that mechanical instantiation of the signifiés as correlates of interface signifiants is arbitrarily programmed by human minds. That is, nothing can prevent a mischievous programmer from specifying that ‘|x|’ causes a window to freeze, rather than close. This is not the case in natural languages: neither you nor I can decide and establish that from now on ‘privacy’ means something else in English. The correlation between parts of signs in formal grammatical descriptions of natural languages is not established by individuals.

This example gives us a chance to mention another fundamental dichotomy in Saussurean theory, that between langue (language) and parole (speech). This significant opposition has been posed to account for the fact that individual variations in language use do not affect the system. It is easy to collect numerous examples of individual linguistic behavior contradicting the general rules and conventions of a given language system (e. g. we make occasional grammatical mistakes as we speak, which may be due to heavy cognitive loads). However, individual and sporadic variations do not change or affect the language system. It is only if and when such variations make their way into a formal abstract and general sign system, extending over large spans of space and time, that they actually cause a change in langue. Other variations, within smaller spatial and temporal coverage, correspond to changes in parole.

Saussure’s interest was in a theory of langue, his formal theory of signs. This refers us to a silent Cartesian tradition running under the surface of non-Cartesian concepts, such as the determining forces of social structures and conventions. The Cartesian gene in the theory is the assumption that linguists can describe langue and that individual minds can have access to supra-individual concepts and formulate them in the abstract, free of psychological, social, spatial and temporal contingencies. In other words, a linguist’s description of langue objects should be warranted by this individual’s ability to capture abstract ‘signifiés’ that exist independently of his or her mind and to preserve them from contamination of individual interpretation when building formal theories of language.

Part of Descartes’ much more complex philosophy, not discussed here, was that individual minds could have access to ultimate meanings by exercising methodical doubt and analysis (Descartes 2004). Eventually, Descartes believed, the mind would reach an unquestionable state, some primary cognition that constitutes the true meaning of the object(s) under consideration. In the remainder of this chapter, whenever we use the word Cartesian we are referring to this specific aspect of Descartes’ theory, an important one for discussing alternative semiotic theories and their application to HCI.

25.2.2 Peirce

Peirce (Peirce 1992), as already mentioned, was interested in logic and the origin of meaning. His theory can actually be framed as a long and deeply elaborate rebuttal to the Cartesian canons we just mentioned (Santaella 2004). He defined signs as a three-part structure consisting of a representation (the representamen), an object and a mediating element (the interpretant) that binds the object to its representation in somebody’s mind. In other words, unless there is a mental mediation, nothing is a representation of anything. This will probably remind the reader of the proverbial saying that “meaning is in the mind of the beholder”. But, as the next paragraphs will show, the theory goes far beyond intuitive interpretations of the old adage.

The definitional feature of signs mentioned in the Peircean definition quoted at the beginning of Section 25.2, namely that they can generate other signs, refers to the fact that, according to Peirce, the mediating interpretant, just like the representamen, stands for the object that it binds to a particular representation. In other words, the interpretant is a second-order representation of the object itself. The sign has, in itself, a generative recursive seed that produces other signs, ad infinitum. For example, let us go back to the word ‘privacy’. This is a sign where |'prɪvəsi| is the representamen and something out-there-in-the-world is its object because in some minds (like yours and mine) there is an interpretant that binds them together. Is this interpretant unique? Is it the same for everyone? Is it the same for you or me throughout our entire lives? The answer is ‘no’. From the very moment that we inspect this interpretant (i. e. that we take it to mean the object out-there-in-the-world which we call |'praɪvəsi|), it becomes a second-order representation of its very object. A newly generated interpretant (e. g. an explanation or further elaboration referring to the object) is instantiated, which generates yet another sign instantiation as soon as our mind engages in further interpretation.
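The recursive character of the triadic sign can be illustrated with a small Python sketch. It is a deliberately crude simplification: the class and function names are hypothetical, interpretants are reduced to short strings, and the chain is cut off after a finite list of elaborations, whereas the theory describes a process with no fixed end.

```python
from dataclasses import dataclass
from typing import Iterator, List


@dataclass(frozen=True)
class Sign:
    representamen: str  # what does the representing
    obj: str            # what is represented
    interpretant: str   # the mediating sign in somebody's mind


def semiosis(sign: Sign, elaborations: List[str]) -> Iterator[Sign]:
    """Yield the chain of signs produced as each interpretant is itself interpreted."""
    current = sign
    yield current
    for elaboration in elaborations:
        # The previous interpretant now stands for the same object
        # (a second-order representation), mediated by a new interpretant.
        current = Sign(current.interpretant, current.obj, elaboration)
        yield current


# Hypothetical interpretants for the word 'privacy' in one person's mind.
first = Sign("|'prɪvəsi|", "privacy, out there in the world", "being left alone")
chain = list(semiosis(first, ["control over personal data", "a legal right"]))

for s in chain:
    print(f"{s.representamen!r} stands for {s.obj!r} via {s.interpretant!r}")
```

The object stays the same along the chain while representamen and interpretant keep shifting, which is the point of calling the interpretant a second-order representation of the object.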

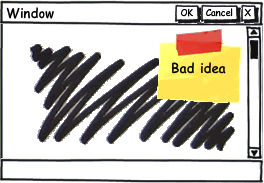

In order to illustrate how these concepts can be used in a practical HCI context description, let us go back to the ‘|x|’ window control (see Figure 25.4). In a Peircean account of this sign, ‘|x|’ is the representamen of the whole sign, whereas the specified computational procedure that causes a well-determined subsequent state of the system (in which the ‘Street video’ window is no longer visible) is its object. All of the interesting things have to do with the interpretant. What mediating sign (which will generate other signs) is binding representation and object together? To answer the question, we would have to inspect minds, like the programmers’ and the users’, for example.

An exhaustive account of all interpretants (all possible reasons and related facts that can cause ‘|x|’ to stand for whatever it is that makes the window disappear when we click on it) in programmers’ and users’ minds, over large spans of time and space, is beyond the ability of individual minds, caught in the contingencies of momentary interpretation.

We can already see how Peirce’s theory differs in focus and essence from Saussure’s, and also why, in the context of this chapter, a semiotic theory’s positioning relative to certain aspects of Cartesian tradition can extensively determine its fate in HCI. For instance, the tension between a Peircean perspective on meaning and the algorithmic nature of interface sign interpretation and generation processes in computer systems cannot be missed. Neither can the apparently smooth compatibility between Saussure’s theory and the basic tenets of formal language and automata theory in Computer Science (Hopcroft and Ullman 1979) be ignored.

Nevertheless, there is more promise in Peircean theory for HCI than meets the eye. Let us briefly introduce a couple of additional concepts and definitions.

Figure 25.4: How simple interface signs can generate unlimited semiosis

The first is Peirce’s most widely known and used definition of sign: “A sign [...] is something which stands to somebody for something in some respect or capacity. It [...] creates in the mind of that person an equivalent sign, or perhaps a more developed sign.” (Buchler 1955: p. 99) Note that the mediation of the interpretant is done ‘in some respect or capacity’ (i. e. for some potentially partial and arbitrary reason). Thus, although most users will tend to generate an interpretation for ‘|x|’ that is at least superficially appropriate for the immediate context of interaction sketched in Figure 25.4, subsequently generated signs are likely to be very different. For example, thanks to some unfortunate previous experience, one user may accept that ‘|x|’ means ‘close the window’, but develop further interpretations according to which the presence of ‘|x|’ in that particular window is actually the result of negligent programming. She may believe that if she clicks on it and closes that window, the browser will freeze. In her mind, to prevent the unfortunate situation, the ‘|x|’ control should not be active, especially because the ‘Close & Return’ button is there to control the closing operation safely. Some other user, however, thanks to luckier interactions, may develop the interpretation that the ‘|x|’ control and the ‘Close & Return’ button mean exactly the same thing. The difference, he may think, is a practical one. The generic ‘close a window’ can be communicated with different signs: to click on ‘|x|’ or press some specific key combination. The latter can be faster than the point-and-click alternative. Thus, whereas advanced users may interpret the presence of ‘|x|’ as meaning that the use of keyboard input to control the window in Figure 25.4 is allowed, novice users may miss this signification of ‘|x|’ altogether and conclude that to close the window they must click on the ‘Close & Return’ button. The next steps in each user’s theories of what ‘|x|’ means in different situations are virtually impossible to predict since they depend substantially on the users’ experience with software, their level of technical awareness, and virtually all other meanings that populate their minds.

The second concept of Peircean theory that this example helps to illustrate is the process of abductive reasoning (also known as hypothetical reasoning or abduction), which underlies — according to the theory — all meaning-making activities. Very simply put, this kind of reasoning consists of generating hypotheses to explain (i. e. to interpret) significant elements of reality around us. Once a hypothesis is generated, it is tested against ready-to-hand evidence. If the evidence contradicts the hypothesis, a new one is generated and tested. If not, the hypothesis is accepted as a general principle capable of binding not only the tested instances of representations to the tested objects, but also — and more importantly — future instances and yet-to-be-met objects as well. The principle is true until further evidence is found that calls for revision of beliefs. This indefinitely long process of sign generation is named semiosis. It can, for example, explain Carroll and Rosson’s observations reported in an influential early work in HCI, The paradox of the active user (Carroll and Rosson 1987). The authors remark that users “hastily assemble ad hoc theories” of how the system works and refuse to learn more even though they hold important misconceptions about the interface language semantics, which can lead to inefficient or ineffective interaction. A Peircean account of this phenomenon tells us that, in the process of abductive reasoning, users come upon mediating signs that do not require or motivate further abductive effort. They are content with their inferencing and fixate a generative belief (i. e. an interpretant that will be used in other sense-making situations). Even if from a programmer’s point of view the users’ reasoning and interpretations are totally wrong, for as long as they ‘work’, the users believe that they are right. 
The meaning of ‘work’, and not so much the meaning of interface elements themselves, is the key to understanding interaction patterns and choices in this case.
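The generate-and-test cycle of abduction described above can be sketched as a short Python loop. Everything specific in it is an assumption made for illustration: the two candidate hypotheses, the way a hypothesis is reduced to predicted outcomes of actions, and the observations themselves. The sketch shows a belief being kept for as long as the evidence at hand does not contradict it, and being replaced once it does.

```python
from typing import Dict, List, Optional, Tuple

Observation = Tuple[str, str]   # (user action, what the system did)
Hypothesis = Dict[str, str]     # predicted outcome for each action it covers


def contradicted(hypothesis: Hypothesis, evidence: List[Observation]) -> bool:
    """A hypothesis is contradicted when a prediction differs from what was observed."""
    return any(
        action in hypothesis and hypothesis[action] != outcome
        for action, outcome in evidence
    )


def abduce(candidates: List[Tuple[str, Hypothesis]],
           evidence: List[Observation]) -> Optional[str]:
    """Return the first candidate that the ready-to-hand evidence does not contradict."""
    for label, hypothesis in candidates:
        if not contradicted(hypothesis, evidence):
            return label
    return None  # no candidate survives: new hypotheses would have to be generated


# Hypothetical ad hoc theories a user might assemble about the window.
candidates = [
    ("only 'Close & Return' closes the window",
     {"click Close & Return": "window closed", "click x": "nothing happens"}),
    ("'x' and 'Close & Return' mean the same thing",
     {"click Close & Return": "window closed", "click x": "window closed"}),
]

evidence = [("click Close & Return", "window closed")]
print(abduce(candidates, evidence))   # the first theory 'works', so it is kept

evidence.append(("click x", "window closed"))
print(abduce(candidates, evidence))   # new evidence forces a revision of belief
```

Note that the first theory is accepted even though it is wrong about ‘x’: nothing in the user's experience so far contradicts it, which is one way of reading the behaviour that Carroll and Rosson report.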

The third and last additional concept that we will discuss with respect to Peircean Semiotics is that of a/the true meaning of signs. If human minds can engage in widely (and wildly) different interpretive directions, how is it that two people can understand each other, or at least truly believe that they do so? And, much more importantly, is there such a thing as a/the true meaning of signs?

In a Peircean perspective, meaning emerges in a constantly changing chain of “equivalent [...] or perhaps more developed” signs. Subsequent interpretants are connected, corrected, adjusted, expanded, stabilized and destabilized by the insistence of the real. Without going into the very complex details of Peirce’s theory of truth and true meanings, it is worth highlighting a few points about his particular view of reality and reality’s role in sign-making processes:

Sign making (which for the purposes of this chapter is the same as sense making) is a natural disposition of the human species, just like flying is a natural disposition of birds. Humans cannot help but assign meaning to whatever they sense in the world around them. Therefore, reality is always mediated by signs.

Because it is an abductive inferencing process, human instinctive sign making is fundamentally prone to error. We constantly make mistakes while interpreting reality. However, our innate interpretive apparatus is such that it can correct interpretations in the presence of new evidence. This mode of mental operation constitutes the first and more essential step in any sort of human mental activity, from the mundane daily inferences about the freshness of products on market stalls to the scholarly debate about the validity of scientific theories.

The principle that makes human knowledge and understanding gravitate towards ‘truth’ is the insistence of reality, the inexorable massive set of evidence provided by the world around us. This is also an indication that, in this theory, truth is not the result of an individual’s introspective activity (as Descartes would wish), but a collective ongoing semiotic process, in which abductive corrections made and expressed by other minds penetrate culture through social processes that eventually affect individual sense-making. Hence, as a social, cultural and historical process, human interpretation gravitates toward truth.

Back to HCI, these three points allow for a reinterpretation of heated controversies. Take for example Jared Spool’s criticism of User-Centered Design. In a CHI Panel in 2005 (Spool and Schaffer 2005), among other things, Spool claimed that “beyond small teams with ‘simple’ issues, formalized UCD doesn’t seem to work” and that “UCD pretends to act like an engineering discipline (formalized methods that have repeatable results independent of practitioner), but actually behaves as if it’s a craft (dependent completely on skills and talents of practitioners with no repeatable results).” (p. 1174)

Spool’s position is a good example of how the “insistence of reality” is related to, but not the same as, “repeatable results”. A Peircean account of meaning tells us that predictions of how users will interpret interface signs must be taken very cautiously. When making sense of the natural and the cultural world, a user’s abductive reasoning paths will eventually be corrected and refined by the insistence of a massive volume of facts that penetrate the mind as signs contingently related to each other “in some respect or capacity”. Contingency, the craft that lies at the opposite of the “repeatable results” expected by Spool, is actually the essence of human sense making.

Computer encoded interface signs, however, are different. They are, and must be, uniquely defined and engineered into software artifacts that continually “repeat” exactly the same mechanical interpretation and generation for each and every representation in the program. These representations are produced by human minds (the software designers’ and developers’) and meant to affect other human minds (the users’). The inexorable repetition of these representations in computer systems’ interfaces is ontologically very different from what Peirce refers to as the insistence of the real. Actually, computer interface signs are no more than the expression of a particular moment in someone’s (or some group of people’s) abductive reasoning path, which is as prone to interpretive error as any user’s abductions while trying to make sense of the interface.

We thus see that Spool’s vigorous criticism of UCD echoes some of the Cartesian notions we discussed above. He, just like UCD adopters, is looking for repeatable semiosis “independent of practitioner”, meaning independent of mind, which should nevertheless be mentally achieved by an individual’s use of rigorous methods of inspection and analysis. The interest of a semiotic perspective on this debate is to show that there are theoretical choices beyond Cartesianism, and that depending on whether the focus is on human semiosis or computer semiosis, some choices are better than others. For illustration, we comment on two alternative paths.

Louis Hjelmslev (Hjelmslev 1961) followed Saussure’s ideas, but gave them a more axiomatic interpretation. In his view, signs could be studied as the correlation between any two autonomous systems. Neither one had to (although they might) be anchored in psychological reality. Charles Morris (Morris 1971), in turn, followed Peirce’s ideas, but gave them a radically behaviorist interpretation. In his view, signs should be defined in terms of stimulus and response. Influenced by Peirce’s notion that habit was a powerful mediating force in sign-making processes, Morris developed a semiotic model that explained all sign processes in terms of reactions caused by the presence of signs under certain conditions. Hjelmslev was the theorist chosen by Peter Bøgh Andersen (Andersen 1990), when writing his Theory of Computer Semiotics, and Morris the one chosen by Heinz Zemanek (Zemanek 1966), when writing his pioneering Semiotics and Programming Languages.

25.2.3 Culture, signification and communication

Before we proceed to the next sections, let us revisit the saying that “meaning is in the mind of the beholder” for final remarks about conditions for human mutual understanding. We will borrow Umberto Eco’s Theory of Semiotics (Eco 1976), which brings together Peircean and Saussurean elements in interesting ways. On the one hand, Eco adopts the notion that signs trigger an indefinitely long chain of other signs in the mind (he speaks of unlimited semiosis). On the other, he defines signs as content-expression correlates. The apparently diverging perspectives are reconciled with the introduction of culture in two fundamental semiotic processes: signification and communication.

Signification is a correlation between content and expression. Signification systems are collections of “discrete units [...] or vast portions of discourse, provided that the correlation has been previously posited by a social convention”. Communication is a process in which “the possibilities provided by a signification system are exploited in order to physically produce expressions for many practical purposes.” (Eco 1976: p. 4) Notice that Eco does not say that in communication one repeats the conventions encoded in signification systems. He says that communicators exploit the possibilities provided by a signification system. In other words, communication may include sign productions that deviate from the system, as well as others that don’t. In fact, Eco’s theory of sign production defines communication as a process that manipulates signs, “considering, or disregarding, the existing [socially conventionalized] codes” (Eco 1976: p. 152). The primacy of social conventions in communication, even when stepping out of existing signification systems, places culture at the center of Eco’s Semiotics, which he actually characterizes as the logic of culture. Semiotics, in his view, is thus a comprehensive study of culturally-determined codes and sign productions at all levels of human experience.

25.3 Semiotics and HCI: Two Disciplines, Two Cultures

The work of Hirschheim, Klein and Lyytinen (Hirschheim et al. 1995) on the conceptual and philosophical foundations of Information Systems Development (ISD) can further our understanding of why Semiotics and HCI have kept apart from each other. Following a still widely-accepted view that information systems are technical implementations of social systems, the authors trace the differences among ISD approaches, concepts, models, methods and even tools back to their proponents’ interpretation of reality. Building on Burrell and Morgan’s work in organizational analysis (Burrell and Morgan 1979), they list four paradigms in ISD research and professional practice. The paradigms are simplified world-view models produced by combining the end points of two axes: objectivity-subjectivity and order-conflict (see Table 25.1).

| Paradigm | Choice between Objectivity and Subjectivity | Choice between Order and Conflict | Assumptions about Reality |
| --- | --- | --- | --- |
| Functionalism | Objectivity | Order | There is an objective order in social reality, which can be known and described independently of subjective interpretation. |
| Social Relativism | Subjectivity | Order | Reality is a social construction, which is the result of interpretations determined by continuous cultural changes. |
| Radical Structuralism | Objectivity | Conflict | Reality is determined by objective super-structures that are in constant power conflicts. |
| Neo-humanism | Subjectivity | Conflict | Reality is continually transformed by multiple objective and subjective epistemologies that co-exist and contribute to historical evolution. |

Table 25.1: Hirschheim and colleagues’ paradigms at the base of information systems development

Different assumptions about reality, shown in the last column of Table 25.1, can be aligned with distinctions between Saussurean and Peircean perspectives on meaning. This is not to say, however, that the same set of paradigms can be generated by semiotic theories alone, and much less that Saussure or Peirce (would) have embraced the principles of functionalism, social relativism, radical structuralism or neo-humanism. We must only observe the fact that paradigms that preclude subjectivity have higher compatibility with definitions of meaning that do not speak about mental mediation than those that include it, and vice versa. Likewise, speaking only of the paradigms where order is important, we should observe that the defining characteristics of functionalism in Table 25.1 are more compatible with Cartesian canons than is the case with social relativism.

Hirschheim and co-authors note that most of the work in ISD published until 1995 tacitly falls into the functionalist perspective, according to which subjective interpretation doesn’t play a role when systems requirements are elicited and data models are built, for example. Testing hypotheses against empirical data can, in this view, prevent subjective intuitions, beliefs and aspirations from contaminating scientific knowledge of an objective reality. Consequently, this paradigm has long supported a feedback loop in which information and communication technologies (ICT) are viewed and produced as the impersonal (i. e. non-subjective) result of applying empirically-tested facts and principles to solve problems. Computer scientists have thus been increasingly led into empirical research practices looking for more facts and principles, but speaking very little of their interpretations and valued perspectives regarding what these facts and principles mean, why, and under what kinds of conditions.

As a multi-disciplinary field, HCI encompasses almost as much diversity in terms of scientific traditions as the number of disciplines that can be listed among its contributors (e. g. Psychology, Anthropology, Sociology, Design, Ergonomics and Computer Science, to name but a few). However, most of its success and prestige is associated with ever more sophisticated and pervasive kinds of interactive products coming from the ICT industry. In this context, predictive knowledge, based on sound empirical experimentation, is in high demand. The industry is avidly looking for tested facts and principles to guide its engineering processes and to ground the establishment of norms and standards. Thus, the pressure of industrial demands on HCI research has been reinforcing functional perspectives on science and restating the belief that scientists and technologists do their work impersonally, backed by statistical inferencing methods applied to objectively collected empirical data.

Semiotics has been successfully applied in marketing and advertising (Umiker-Sebeok 1988; Floch 2001), for example, which suggests that HCI and Semiotics could work together to respond to industrial demands. Nonetheless, the power of opposing scientific traditions and practices has been keeping them on separate tracks. Semioticians of Peircean extraction, for instance, are interested in the rich variety of meanings that emerge in sign production and sign interpretation contexts. They may use extensive interpretive and hermeneutic methods to generate in-depth understanding of situated meaning-related processes, before they engage in statistical inferencing methods leading to general signification facts and principles. In addition, they must not dismiss the fact that in order to establish the very object of their investigation (a sign or sign process) they need the mediation of their own minds. That is, in order to take anything as a sign (or evidence) of other signs or sign processes of interest, investigators must carry out interpretation. The process and product of their investigation will depend totally on other signs: their inferences, their validation criteria, their conclusions, and last but not least the way they signify their knowledge discovery process and communicate it to the scientific community. The main criteria for scientific validity, which must be made apparent in this specific situation, are that newly discovered signs can: be framed into coherent inferential discourse that consistently relates to previously existing knowledge; correct, expand or innovate aspects of previous scientific findings; be exposed to the evaluation of other researchers in the scientific community; and produce, as a result of such exposure, the advancement of collective scientific knowledge.

This view contrasts in important ways with the one that credits scientific validity only to research results obtained by an empirical testing of hypotheses and subsequent statistical inferencing. Firstly, although a semiotic view acknowledges the scientific validity of knowledge produced with these methods, it requires that the choice of hypotheses be accounted for as a signification process. Why have the hypotheses been chosen? Why are they significant per se, or more significant than other possible hypotheses? Secondly, and by consequence, it brings into the territory of legitimate scientific activity the very elaboration of candidate theories and hypotheses that will be tested in the course of empirical knowledge discovery processes. By means of rigorous interpretive methods, Semiotics is ready to inspect and validate the processes by which scientists assign meaning to (i. e. “interpret”) their hypotheses, their testing and their results, against the backdrop of theories that they propose to be building.

These contrastive characteristics can explain why semiotic knowledge is usually viewed by the HCI community as speculative and subjective, an impractical philosophic discussion that cannot usefully inform the design and development of ICT products. By the same token, they can also explain why researchers with conscious or unconscious semiotic awareness tend to be skeptical of HCI theories built upon hypotheses whose selection is not carefully and consistently accounted for. Moreover, motivated by the huge diversity of human meaning-assigning strategies, these researchers may be far more attracted to the possible variations of users’ interpretive behavior in view of even small contextual changes than to regularities found in highly controlled laboratory tests.

Given the above-mentioned differences in purpose, practice, beliefs and values, the most difficult obstacle for bringing together Semiotics and HCI is probably not the fact that Semiotics requires extensive learning of unfamiliar concepts and methods. This has always been the case in the multidisciplinary research practices that characterize HCI. The real challenge for the two disciplines seems to be how to combine different epistemologies and rise above scientific validity disputes. Only then can Semiotics seed progress in HCI.

The rest of this chapter is my personal narrative of a successful case, the theoretical construction of Semiotic Engineering (de Souza 2005; de Souza and Leitão 2009). I hope many others are on their way to correct and refine our thinking, partaking in the ongoing scientific semiosis.

25.4 Computers as Media

Today, viewing computers as media is like viewing cars as vehicles. Of course, computers enable, support and enrich individual communication, group communication, and mass communication. We can even put it the other way around: All contemporary media involve computation. But, how obvious was this connection when HCI emerged as a discipline? And, how obvious right now are the consequences of this view for current and future computation?

Viewing computers as media is the hallmark of all semiotic approaches to computing and human-computer interaction. In 1988, Kammersgaard proposed that human-computer interaction could be characterized in substantially different ways (Kammersgaard 1988). One of them was called the media perspective, which involved not only the fact that computers could be used by people to communicate with each other, but also the fact that computer application programmers could communicate with users through systems interfaces. As the author acknowledges, the latter was influenced by illustrious predecessors:

I will not go into further detail about this last type of communication, except to mention that Oberquelle, Kupka & Maass (1983) talk about delegation of communicating behaviour from the designer to the machine and then treat the situation as seen from a dialogue partner perspective, whereas Andersen (1985) treats the designer as having the role of one sender in a collective of senders, who makes a contribution to each message sent through the medium.

-- Kammersgaard 1988: p. 356

Only a few years before, Winograd and Flores had published a well-known book, in which they proposed to shift the focus in computing from pursuing ever-increasing artificial cognitive capacities to supporting pervasive human communication and coordination processes (Winograd and Flores 1986). And in 1990, Andersen would publish the first comprehensive account of computers as media in his Theory of Computer Semiotics (Andersen 1990).

From the late 1980s to the mid-1990s, a number of researchers, in different parts of the world, started thinking about how to articulate Semiotics and Computing. In 1988, in a 55-page chapter in Hartson’s Advances in Human-Computer Interaction (Hartson 1988), Nadin (Nadin 1988a) explored the semiotic implications of interface design and evaluation. In the same year, he would also publish an article in Semiotica about a semiotic paradigm for systems interface design (Nadin 1988b). In 1993, Andersen edited a book explicitly called The Computer as Medium (Andersen et al 1993), and we published our first paper on the semiotic engineering of human-computer interfaces (de Souza 1993).

All of this early work explored the power of viewing computer programs and systems — and especially their interfaces — as signs of a very specific kind. They are designed and engineered according to the laws and limits of computation, but what they ultimately communicate is a message meant by humans (systems designers and developers) and to humans (systems users). This is the rationale for viewing human-computer interaction as computer-mediated human communication, the powerful idea underlying Winograd and Flores’s manifesto for a language-action perspective (LAP). LAP attracted many semiotically-inspired researchers in Computer Science (Communications of the ACM, May 2006). Most of them turned towards Organizational Semiotics (Liu 2000), which in general is closer to information systems development than to human-computer interaction design. In Brazil, however, a group of researchers has been exploring the use of Organizational Semiotics concepts in the design of human-computer interaction (Baranauskas et al 2003; Bonacin et al 2004; Neris et al 2011).

Semiotics was also implicit or explicit in the work of other researchers working in HCI. For example, Mullet and Sano (Mullet and Sano 1995) explored semiotic features of representations used in the design of visual interfaces. Their emphasis was on how to communicate the design intent to users through interface signs, an idea that was gaining momentum at the time. In fact, computer systems interfaces provided an excellent context to revisit the concept that there are languages of/in design. The chapter by Rheinfrank and Evenson in Winograd’s Bringing design to software (Winograd 1996) is a great illustration. The authors discuss design as communication, whether intentional or not, and advance the idea that when it is intentional, the use of design languages can increase the effectiveness of communication.

As computers became more pervasive and took over a larger portion of social processes, the computers as media perspective gained the interest of researchers investigating the users’ response to intentional communication by service providers (Light 2001) and information appliances in general (Fogg 2002), for example. Fogg’s work, for one, developed into an elaborate study of persuasion through technology (Fogg 2003), which has been interestingly explored in the context of games and culture-sensitive communication (Khaled et al 2006). In a recent work, O’Neill (O'Neill 2005) theorizes about interactive media in view of contemporary technologies that provide new mediation opportunities for human semiotic processes. Research on the convergence of Semiotics, interactive technology and literacy is also noteworthy. Marion Walton’s work (Walton 2008) in South Africa provides insightful elements to the study of computers as media, enriched by empirical evidence collected in African school children’s encounters with computers and the Internet (Prinsloo and Walton 2008).

Inspired by Andersen’s pioneering work in Computer Semiotics, we developed an extensive semiotic account of human-computer interaction, Semiotic Engineering (de Souza 2005). Having started as a semiotic approach to user interface language design (de Souza 1993), over the years Semiotic Engineering evolved into a semiotic theory of HCI with its own ontology and specifically-designed methods of investigation (de Souza and Leitão 2009). This is the theory I will use in the rest of this chapter to illustrate, in practice, the kinds of connections existing between Semiotics and HCI.

25.4.1 Semiotic Engineering

Semiotic Engineering picked up the early view that human-computer interaction is in fact computer-mediated human communication. It then defined and articulated a number of fundamental concepts, their relations with each other and their implications not only for the development of the theory itself (the internal motivation), but as a contribution to the advancement of HCI (the external motivation). The most striking distinction proposed by Semiotic Engineering compared to other theories and conceptual frameworks in HCI (Carroll 2003) is to develop the early suggestion put forth by Kammersgaard (Kammersgaard 1988) and postulate that designers of interactive software are active participants in the communication that takes place through user interfaces. They communicate their design vision to users by means of interface signs like words, icons, graphical layout, sounds, and interface controls like buttons, links, and dropdown lists. Users unfold and interpret this message as they interact with the system. In other words, the communication between users and systems is in fact part of a metacommunication process; part of the communication process initiated by designers about how, when, where and why to communicate with the system they have designed.

Since designers cannot be personally present when a user interacts with software, they have to represent themselves in the interface, using a specifically designed signification system, and subsequently tell the users what the software does, how it can be used, why, and so on. The choice of representations is actually wide. For instance, designers may represent themselves as humanoids (e. g. systems interfaces with human characteristics like affect and natural language abilities), as machines (e. g. systems interfaces with press buttons, slide controls, dials, and the like), or even as spaces (e. g. systems interfaces with virtual worlds that users explore to achieve various kinds of effects). Depending on the message that they have to communicate, some representations will work better than others. Humanoids, for example, are likely to communicate explanations and instructions more easily than virtual spaces. Machines, in turn, convey physical affordances more efficiently than the natural language discourse of humanoids.

No matter their self-representation, through systems interfaces designers are telling users:

what they know about the users (who they are, what they know, what they wish or need to do, in which preferred ways, and why);

how they have responded to the users’ needs or aspirations (what system they have built and how it works); and

what values are encoded in their response (why and how does the system improve the users’ lives).

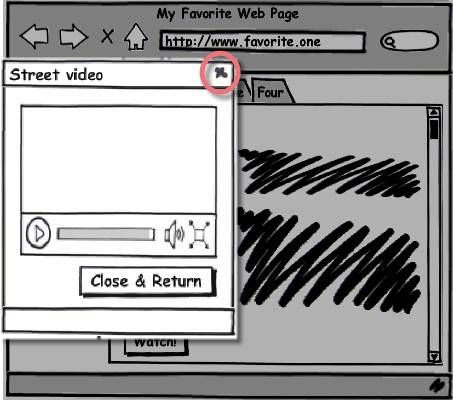

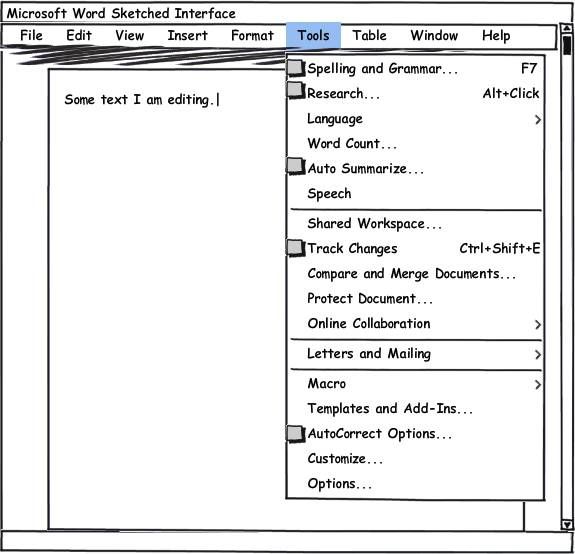

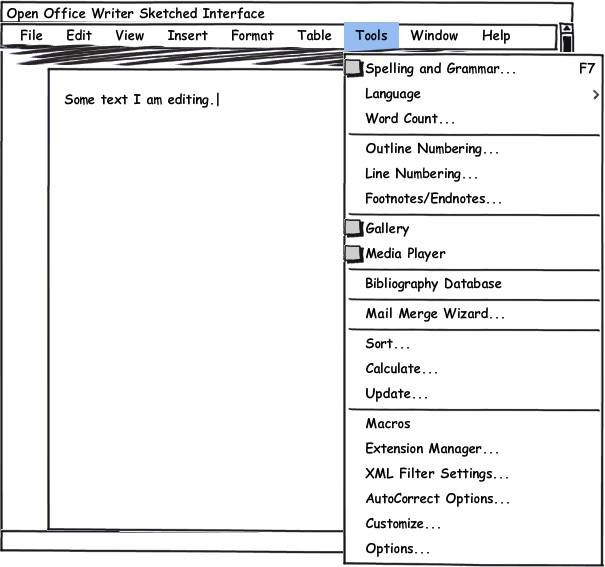

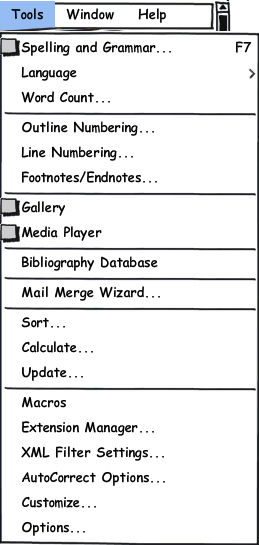

Here is a very simple sketched example of how the elements above are communicated by designers through the interface. We contrast the Tools menus of Microsoft Word (Figure 25.5) and Open Office Writer (Figure 25.6), showing how the designers’ message comes across.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 25.5: A sketched version of Microsoft’s Word interface unfolding the Tools menu

Figure 25.6: A sketched version of Open Office’s Writer interface unfolding the Tools menu

The contrast of both designs shows that they communicate different views on users’ needs and opportunities. The following is a summary of the most striking differences between the two messages communicated through Microsoft Word and Open Office Writer interfaces.

25.4.2 Contrast between MS Word and the OO Writer interfaces

Microsoft Word

MS Word Tools can be grouped into 4 categories (list items separated by a line): the first with tools related to document content; the second to document manipulation and authoring; the third to mail preparation; and the fourth to interface customization and extensions.

Open Office Writer

OO Writer Tools can be grouped into 7 categories (list items separated by a line): the first with tools related to document content; the second to document structure; the third to inclusion of media files; the fourth to bibliography handling; the fifth to mail preparation; the sixth to data field manipulation; and the seventh to interface customization and extension.

The designers of both applications are telling their users that tools applying to document content are top of the list, and that ‘Spelling and Grammar’ tools are the most important ones. By comparison, we (like users) get the message that MS Word provides more resources than OO Writer in this particular category. In fact, OO Writer tools are a subset of MS Word’s. Similarities in shortcuts are remarkable, suggesting that OO Writer’s designers expect their users to have (or to have had) experience with MS Word at some point in their lives.

Similarities extend also to other categories. In both applications, there are tools to handle mail preparation and to support customization and extensions. The tools in these categories, however, may have different names, and OO Writer provides an additional tool, compared to MS Word: it lets the users customize ‘XML Filters Settings’.

At a closer look, we see that differences in names actually communicate a different perspective on the tools themselves. Whereas in MS Word names tend to express objects (with the exception of ‘customize’), in OO Writer the designers’ communication, when different from MS Word’s, alludes to agents: ‘Mail Merge Wizard’ and ‘Extension Manager’. In these two cases, the communication is clearer (more precise) than in MS Word, which may achieve the effect of encouraging novice users to experiment with new features (since a wizard and a manager will be there to help them).

Microsoft Word

MS Word’s designers communicate very clearly their emphasis on group collaboration. They are telling users that document preparation is or can be a group activity, requiring such things as shared workspaces and online communication, in addition to tracking changes and comparing, merging and protecting documents. The communication of their design vision at this point clearly locates users in a computer-supported organizational context.

Open Office Writer

OO Writer’s designers, by contrast, do not talk about computer-supported collaboration. The communication of their design vision at this point portrays an individual user, working on the details of his/her document. The designers give special emphasis to document structure (numbering and notes) and bibliographic reference management, communicating that they want to support users in preparing complex extensive documents.

By comparison with OO Writer’s designers’ communication, MS Word’s designers communicate their concern with usability in different ways. Five of their tools come with icon representations that help users identify their presence in toolbars (a more concise and more easily accessible interface control than menus). Three menu options have associated keyboard shortcuts, and four options unfold into submenus, rather than invoking dialog windows (communicated by ‘...’). Together these messages tell the users that MS Word’s designers are concerned with providing fast access and fast learning support for their users. In contrast, OO Writer’s designers favor longer dialogs (‘...’), which actually is in line with longer processes that can also be expected from interaction with a ‘wizard’ and a ‘manager’. Furthermore, by communicating that they expect their users to need a bibliographic database, OO Writer’s designers give us the impression that they have worked for meticulous users engaged in longer-term tasks. By contrast, MS Word designers give us the impression that they have worked for busy users, engaged in broader-context activities of which document preparation is only a part.

In both cases, however, the complete message about which tools are available and how valuable they are expected to be for the targeted users is not likely to be understood unless users engage in interacting with the application. In MS Word, for example, in order to understand what “research” really means in context, users will probably click on the option (i. e. interact with the message itself) and see what comes next. If they do, they’ll find out that the designers are offering the possibility to connect to and search online reference books and other resources. Likewise, in order to understand what “bibliography database” is about in OO Writer, users will also probably click on the option. If they do, they will find out that this option connects them to an existing ‘bibliography’ that is installed with OO Writer, and which can be extended or modified to accommodate the users’ reference items. In both applications, the users can resort to online help resources to learn more about these and other parts of the designers’ message conveyed in the menus above. Thus, we see that communication is not limited to visible signs in isolated screens; it is also conveyed in the process of subsequent communication with the application and its resources (i. e. online help and documentation).

The summative contrast between communication expressed by the MS Word and the OO Writer interfaces is a good sampler of the essence of Semiotic Engineering, whose main tenets are the following:

HCI is an instance of metacommunication, that is, communication about how, when, where and why to communicate with computer systems. The metacommunication message content can be summarized as follows (note the presence of the designers using 1st person pronouns like “I”, “my”, etc.):

Here is my understanding of who you are, what I’ve learned you want or need to do, in which preferred ways, and why. This is the system that I have therefore designed for you, and this is the way you can or should use it in order to fulfill a range of purposes that fall within this vision.

Both designers and users are engaged in metacommunication; the system’s interface represents its designers at interaction time, it is the designers’ deputy. The interface enables all and only the designed types of user-system conversations encoded in the underlying computer programs at development time. The metacommunication message from the designers is unfolded and received as users interact with it and learn ‘what the system means’.

There are three classes of metacommunication signs that designers can use to communicate their message to users. Static signs communicate the essence of their meaning with instant time-independent representations (typically representations that can be correctly interpreted in static screen shots). Dynamic signs communicate the essence of their meaning with a series of time-dependent representations (typically representations that can only be correctly interpreted over a number of subsequent screens or states of the system). Metalinguistic signs are static or dynamic signs that differ from either the former or the latter because the essence of their meaning is an explanation, a description, an illustration, a demonstration or an indication of other [interface] signs (typically textual or video material referring to the meaning of some other static or dynamic sign).

Metacommunication signs in HCI must be produced computationally. Consequently, this specific kind of computer-mediated human communication introduces critical constraints in sign production processes. Systems designers must create representations that by necessity have a single definitive encoded meaning — no matter if the designers (and the users) can easily produce evolved meanings for these representations in natural sign-exchange situations. The algorithmic nature of the medium in which metacommunication takes place mechanizes human semiosis, in both directions (designers’ signs produced by the system interface and users’ signs produced with the system interface).

The quality required for metacommunication to be efficient and effective is communicability, a system’s ability to signify and communicate the designers’ intent (which is ultimately to satisfy the users). The evaluation of communicability involves a methodical analysis of how the designers’ message is emitted (composed and sent through the interface) and of how it is received (interpreted and followed by physical and/or mental sign-mediated action) by the users.

Because the users’ response to the designers’ metacommunication must be mediated by interface signs, the users must learn the interface language, a unique signification system, in which the designers’ message is fully encoded. Users learn this language in the very process of using it in interaction. This process is similar to natural language acquisition, which humans are fully equipped to do, except that the users’ immediate interlocutor (unlike humans) does not reason abductively. Therefore, some of the users’ communicative intent may persistently fail to be interpreted simply because the designers have not anticipated the users’ sign-making strategies. Because this can cause disorientation in the process of interface language acquisition, designers should also explicitly signify the communication principles that they have chosen to encode their message.
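Some of the tenets above can be made concrete with a small sketch. The Python below is only an illustration under our own assumptions, not part of Semiotic Engineering's published methods: the names `SignClass`, `InterfaceSign` and `DesignersDeputy` are hypothetical, and the sample signs merely echo the 'Spelling and Grammar' example discussed earlier. It shows the three classes of signs, the single encoded meaning attached to each representation, and a deputy that can only take part in the conversations its designers anticipated.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class SignClass(Enum):
    STATIC = "static"                  # interpretable from a single screen
    DYNAMIC = "dynamic"                # interpretable only over successive states
    METALINGUISTIC = "metalinguistic"  # explains another interface sign


@dataclass(frozen=True)
class InterfaceSign:
    expression: str                    # what the user sees or does
    sign_class: SignClass
    encoded_meaning: str               # the single meaning fixed at development time
    refers_to: Optional[str] = None    # only used by metalinguistic signs


class DesignersDeputy:
    """Hypothetical stand-in for the interface speaking on the designers' behalf."""

    def __init__(self, signs: List[InterfaceSign]) -> None:
        self._signs = {sign.expression: sign for sign in signs}

    def respond(self, user_sign: str) -> str:
        # The deputy does not reason abductively: anything the designers
        # did not encode is simply not understood.
        sign = self._signs.get(user_sign)
        if sign is None:
            return "not understood"
        return sign.encoded_meaning

    def explain(self, expression: str) -> List[str]:
        # Collect the metalinguistic signs that talk about another sign.
        return [
            sign.encoded_meaning
            for sign in self._signs.values()
            if sign.sign_class is SignClass.METALINGUISTIC
            and sign.refers_to == expression
        ]


deputy = DesignersDeputy([
    InterfaceSign("Spelling and Grammar", SignClass.STATIC,
                  "start checking the document"),
    InterfaceSign("progress bar filling up", SignClass.DYNAMIC,
                  "the check is under way"),
    InterfaceSign("help entry on Spelling and Grammar", SignClass.METALINGUISTIC,
                  "this tool looks for spelling and grammar problems",
                  refers_to="Spelling and Grammar"),
])
```

A user sign that the designers anticipated, such as `deputy.respond("Spelling and Grammar")`, receives its encoded meaning, whereas an unanticipated one, such as `deputy.respond("please check my spelling")`, fails. This is the kind of breakdown that the explicit signification of communication principles is meant to reduce.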

Semiotic Engineering has been gradually making its way into HCI, especially after Don Norman, one of the leading figures in early UCD (Norman 1986), wrote about advantages that he sees in a semiotic engineering perspective on HCI (Norman 2004; Norman 2007; Norman 2009). In the next section, we begin to conclude this chapter with very brief concrete examples of how this perspective influences the analysis and design of computer-mediated communication in HCI.

25.5 A closer look at computer-mediated communication

As mentioned earlier in this chapter, saying that computers are media has become a cliché at the turn of the century. Computers are everywhere, computing is embedded in hundreds of things that we carry and encounter in daily life, and digital information exchanged through computers, with or without our control, affects almost every step we take as individuals and as a society. A relevant question for HCI is: How well are HCI theories prepared to inform and equip HCI designers in this context?

The question is actually not new. In 1986, Winograd and Flores addressed it mainly to the Artificial Intelligence community, hitting additional targets in HCI and in Computer-Supported Collaborative Work (Winograd and Flores 1986). In spite of this history, however, most of the existing HCI theories are dominated by cognitive perspectives. They can and do inform the design of interaction with respect to facilitating the learning, memorization and retrieval of productive interactive patterns, for instance. And nobody disputes that this is a fundamental requirement in HCI design. However, the user-centered approach, as its name so clearly ‘signifies’, intentionally or unintentionally has treated design meanings as if they were mind-independent entities that are somehow elicited from users and then reified in design models and prototypes before they eventually take the shape of a system’s interface. The designers’ interpretation and signification processes are not accounted for in original UCD (Norman 1986), whose theorists have in general tended to follow the Cartesian tradition of postulating the existence of primary cognitions. These are shared by all humans (and thus by all users), which stimulates research seeking universal primitives and, based on their consequences, making predictions about users’ behavior.

The problem of UCD in the computer as medium paradigm is that it cannot accommodate computer-mediated communication between systems designers and users. Designers, quite plainly, do not belong in UCD interaction models. Neither of the two most influential historical sources of UCD, the seven-step theory of action (Norman 1986) and the human information processing model (Card et al 1983), accounts for the designers’ cognitive processes and meaning-making activity. This part of the story has been covered by methods (e. g. ethnography (Bouissac 1998)) and theories (e. g. Activity Theory (Kaptelinin and Nardi 2006)), which have nonetheless failed to form with UCD a seamless body of knowledge that can satisfactorily account for the whole design process in accordance with the computer as medium perspective. The final ad hoc combination of uncohesive parts in actual design processes seems to be what Spool refers to as the “skills and talents of practitioners” (p. 8, above).

Although the new media perspective instantly reminds us of Web applications design and of interaction with or through mobile devices, there is yet more to it. Digital literacy, which is undoubtedly a requirement for the achievement of full citizenship in the 21st century, has evolved to include computational thinking skills without which users (citizens) are not likely to be able to engage in the new cultures of participation (Fischer 2011).

In the abstract of a thoughtful and provocative article, Jeannette Wing (Wing 2008) expresses her perception of the extent to which changes in current computing practices and possibilities affect science, technology and society:

Computational thinking will influence everyone in every field of endeavour. This vision poses a new educational challenge for our society, especially for our children. In thinking about computing, we need to be attuned to the three drivers of our field: science, technology and society. Accelerating technological advances and monumental societal demands force us to revisit the most basic scientific questions of computing.

-- Wing 2008: p. 3717

In the following, I will present three contemporary examples of different levels of computational thinking involved in new kinds of social interactions. In each case, I will underline the potential advantages of a semiotic framing for the illustrated phenomenon. Then, in a separate sub-section, I will talk about research we are doing at SERG, the Semiotic Engineering Research Group of Pontifícia Universidade Católica do Rio de Janeiro (PUC-Rio), partnering with colleagues at the University of Colorado at Boulder and Universidade Federal Fluminense (UFF), a public university in the State of Rio de Janeiro, Brazil.

25.5.1 Some contemporary examples

In the illustrations below, we will see how computer-mediated human communication may involve explicit computational representations of self and message at different levels of complexity. In each case, the purpose of communication is very prosaic: to help someone use his email account. The innovation lies in the chosen form of communication and the wealth of new meanings that it introduces in human experience. We will also see that computing literacy is the key factor for reaping the benefits of this new means of self-expression and social participation. Taking a semiotic perspective on literacy (the ability to interpret and produce signs of socially-valued signification systems in order to achieve social participation and full citizenship), the point of the illustrated cases is to show that being able to program computers has become as important in the 21st century as reading, writing and counting have been in previous centuries.

25.5.1.1 Parameter setting

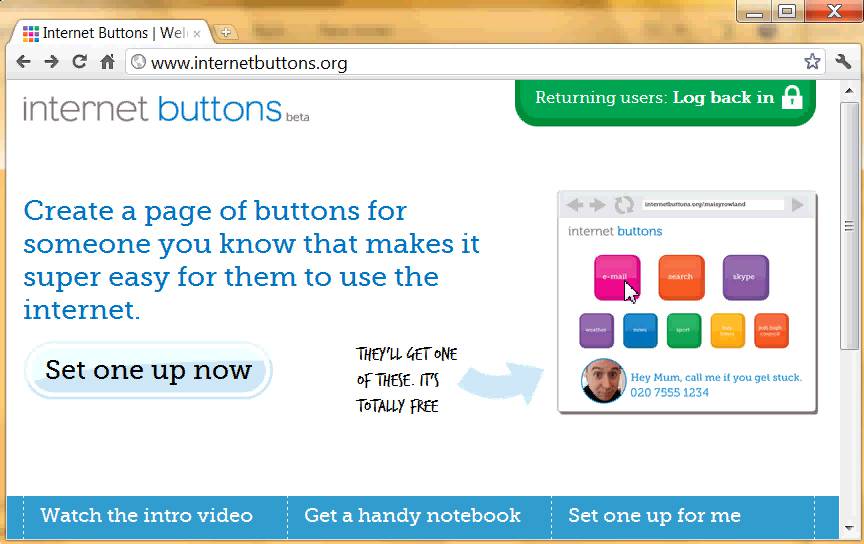

Figure 25.7: Internet Buttons entry page

The first example is Internet Buttons®, a tool that allows you to create a personalized page with buttons that direct you straight to your favorite web sites, services and applications. Internet Buttons was created by a not-for-profit company in the UK “to get people from different generations talking more, sharing more and spending more time together.” Figure 25.7 shows their home page. Notice how they get their message across with different kinds of signs, like text (“Create a page of buttons for someone you know [...]” and “Hey Mum, call me if you get stuck [...]”) and image (colorful buttons and a personal picture). Notice also how obviously the software designers participate actively in interaction with the user, and how completely they would fail to achieve their intent if they did not have the ability (a “personal talent”?) to express themselves through software.

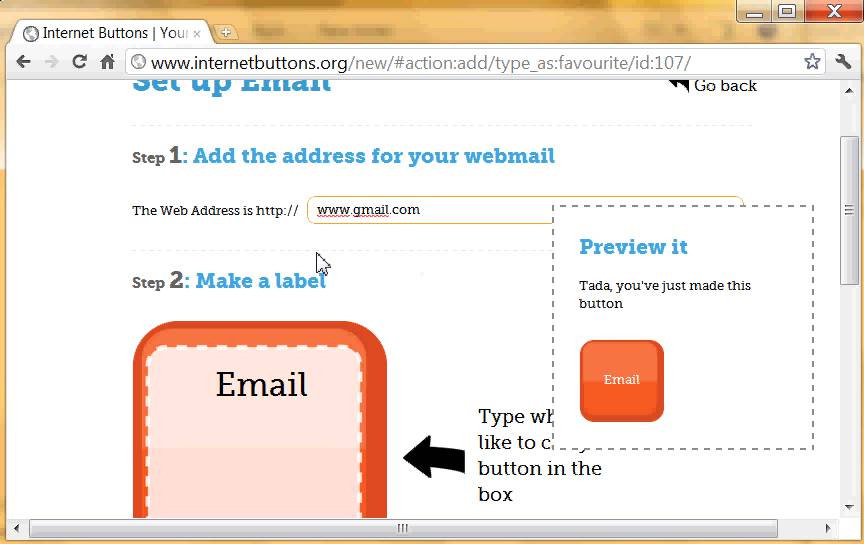

The beauty of Internet Buttons lies, however, in the recursive nature of the designers’ message. Through their program, they are inviting Internet users to program “super easy” Internet interaction for “someone they know”. The programming paradigm is also an extremely simple form of parametric procedure where all that the users have to do is provide the correct values for pre-selected parameters. Therefore, the kind of computational thinking required is very basic. Figure 25.8 shows a step of the programming required to access a Gmail account.

Figure 25.8: Creating an Internet Button to access Google’s Gmail login page

As the Internet Buttons entry page shows, once the buttons are programmed they go into a personalized web page where the user can place any number of buttons especially created for someone else. The personalized page template allows the user-programmer to add a personal picture and a message directly addressed to whoever he is talking to through software that speaks for him. Therefore, the software carries a representation of “self” in addition to a representation of the “message” to be communicated.
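
The parametric style of programming described above can be sketched in a few lines of Python. This is only an illustration, not a description of how Internet Buttons is implemented: the names `Button`, `PersonalPage`, `add_button` and `render`, as well as the sender name, are hypothetical. The sketch shows that the user-programmer only supplies values for a fixed set of parameters, and that the resulting page carries a representation of self (sender and greeting) alongside the message (the buttons).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Button:
    """One button: a label bound to a single, fixed web address."""
    label: str
    url: str


@dataclass
class PersonalPage:
    """A page of buttons made by one person for another (hypothetical model)."""
    sender: str     # representation of "self"
    greeting: str   # personal message addressed to the recipient
    buttons: List[Button] = field(default_factory=list)

    def add_button(self, label: str, url: str) -> None:
        # The only "programming" required: fill in two pre-selected parameters.
        self.buttons.append(Button(label, url))

    def render(self) -> str:
        # Plain-text rendering, standing in for the real web page.
        lines = [f"From {self.sender}: {self.greeting}"]
        lines += [f"[{b.label}] -> {b.url}" for b in self.buttons]
        return "\n".join(lines)


page = PersonalPage(sender="Anna", greeting="Hey Mum, call me if you get stuck")
page.add_button("Email", "https://mail.google.com/")
print(page.render())
```

Each button in this model has one fixed meaning, a single address, which is what makes the required computational thinking so basic.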

25.5.1.2 macro recording

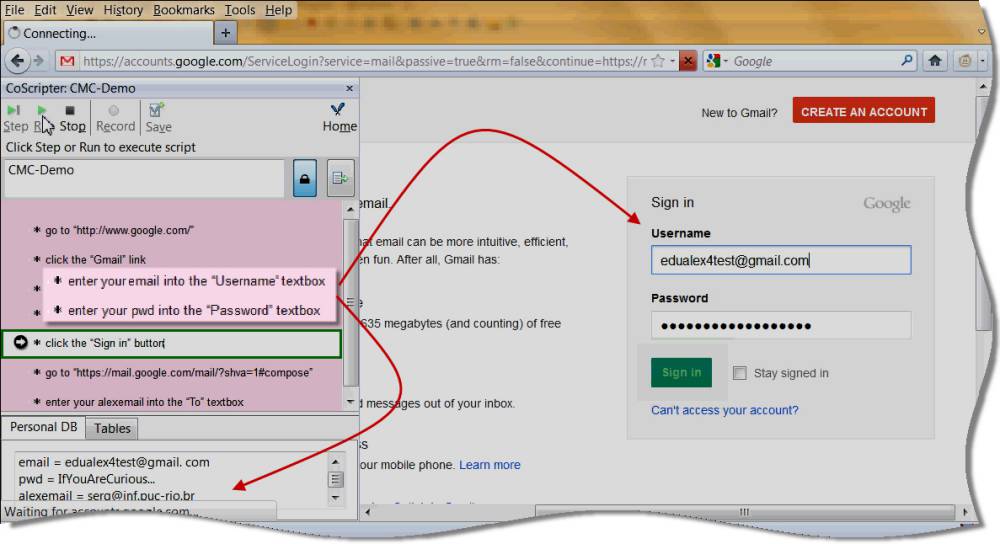

Our next example is IBM’s CoScripter®, a macro recorder for the Web. Unlike Internet Buttons, CoScripter does not focus on personal communication and representations of self. However, just like Internet Buttons, the purpose of CoScripter is to make Internet processes easier, both for the user himself/herself and for whoever needs help with Web interaction. The system allows the user to record and play back interaction steps, optionally using the values of variables stored in a “Personal Database” on the user’s machine. Recorded CoScripts are stored on an Internet server and can be shared with other users. In Figure 25.9, the arrows that we added to the snapshot show how, in this case, the CoScript instructions (on the left) use the information in the Personal Database (at the bottom left) to command interaction with the Gmail login page.

Figure 25.9: The execution of a CoScript for accessing a Gmail account

Although the scripters are not explicitly represented in the CoScript (an interesting sign of the effect of impersonal and implied communication between script designer and script user), the purpose of CoScripter creators is also interpersonal communication through software. On their home page, visited in December of 2011, we read the following message:

CoScripter is a system for recording, automating, and sharing processes performed in a web browser such as printing photos online, requesting a vacation hold for postal mail, or checking flight arrival times. Instructions for processes are recorded and stored in easy-to-read text here on the CoScripter web site, so anyone can make use of them. If you are having trouble with a web-based process, check to see if someone has written a CoScript for it!

-- http://coscripter.researchlabs.ibm.com/coscripter

The programming paradigm in CoScripter is more sophisticated than in Internet Buttons. One of its creators, Allen Cypher, is a leading figure in programming by demonstration (Cypher 1993). The user-programmer in this case is dealing with a simple programming language, in which demonstrated interactions are automatically encoded. Optionally, the user may manipulate variables to make CoScripts more general and reusable in similar, but not identical, contexts. Mainly because of variable manipulations, the level of computational thinking required to use CoScripter is intermediate.

There are a number of interesting semiotic issues to explore with CoScripter. In this chapter, I will only briefly remark that, unlike Internet Buttons, whose messages are computationally encoded with fixed “token-level” semantics (each button means a single Internet address), CoScripter messages can be computationally encoded with fixed “type-level” semantics (each script can be executed with different parameters specified in the end user’s Personal Database). Each CoScript means a range of possible interactions with the same web page, web service or web application. All of the interactions are predicated on the same interactive steps, but there can be variations in contextual parameters. Thus, the communication content that can be expressed with CoScripter is considerably more complex (and powerful) than in the previous example. The interested reader can see a deeper semiotic analysis of CoScripter in one of our previous publications (de Souza and Cypher 2008).
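
The difference between token-level and type-level encodings can be illustrated with a small Python sketch. It does not reproduce CoScripter's actual script language or storage format: the wording of the steps, the `your "..."` placeholder notation, the database keys and values, and the function `instantiate` are all assumptions made for illustration. The point is that one recorded script (a type) yields different concrete interactions (tokens) depending on the personal database against which it is run.

```python
import re
from typing import Dict, List

# A recorded script: fixed steps, with placeholders for contextual parameters.
# The placeholder notation `your "name"` is a hypothetical stand-in.
GMAIL_LOGIN_SCRIPT: List[str] = [
    "go to the Gmail login page",
    'enter your "gmail username" into the "Username" textbox',
    'enter your "gmail password" into the "Password" textbox',
    'click the "Sign in" button',
]

PLACEHOLDER = re.compile(r'your "([^"]+)"')


def instantiate(script: List[str], personal_db: Dict[str, str]) -> List[str]:
    """Resolve every placeholder against one user's personal database."""
    def lookup(match):
        key = match.group(1)
        if key not in personal_db:
            raise KeyError(f"no entry for {key!r} in the personal database")
        return f'"{personal_db[key]}"'
    return [PLACEHOLDER.sub(lookup, step) for step in script]


# Two hypothetical users sharing the same script.
dad_db = {"gmail username": "dad", "gmail password": "secret-1"}
mum_db = {"gmail username": "mum", "gmail password": "secret-2"}

dad_steps = instantiate(GMAIL_LOGIN_SCRIPT, dad_db)
mum_steps = instantiate(GMAIL_LOGIN_SCRIPT, mum_db)
print(dad_steps[1])
print(mum_steps[1])
```

The steps are identical for both users; only the contextual parameters vary, which is what allows a shared script to serve people other than its author.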

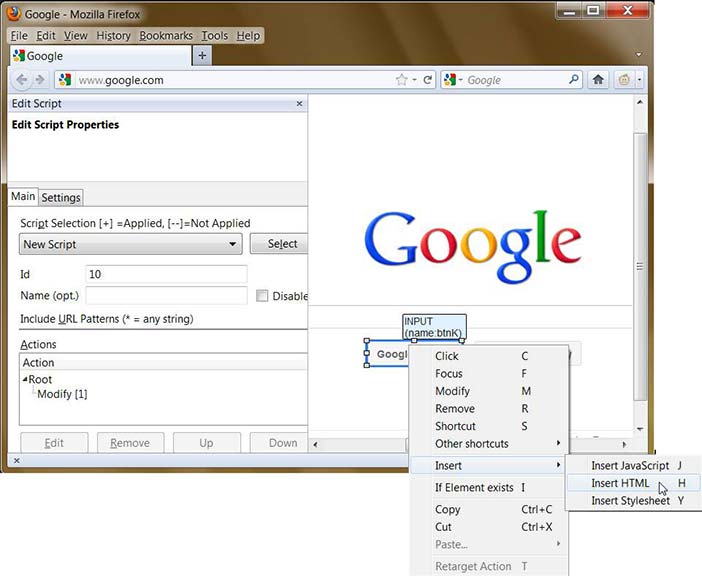

Figure 25.10: Programming with "Customize Your Web"

25.5.1.3 on wheels

Our last example is Customize Your Web, a personalization tool that allows users to change the appearance of web pages and add new functionality to them without having to dive too deeply into JavaScript programming if they do not want to or do not know how to do it. Customize Your Web is an extension to Firefox designed by Rudolf Noe. In Figure 25.10, we show the end user programming environment offered by this tool. The user can select elements of a Web page (in this example, the “Google Search” button is selected) and make changes to their appearance and behavior (see the menu from which the user is about to select between “Insert JavaScript”, “Insert HTML” or “Insert CSS”). Interface elements can be deleted, inserted and relocated as desired, as long as they can be uniquely identified on the existing page whenever it is loaded.

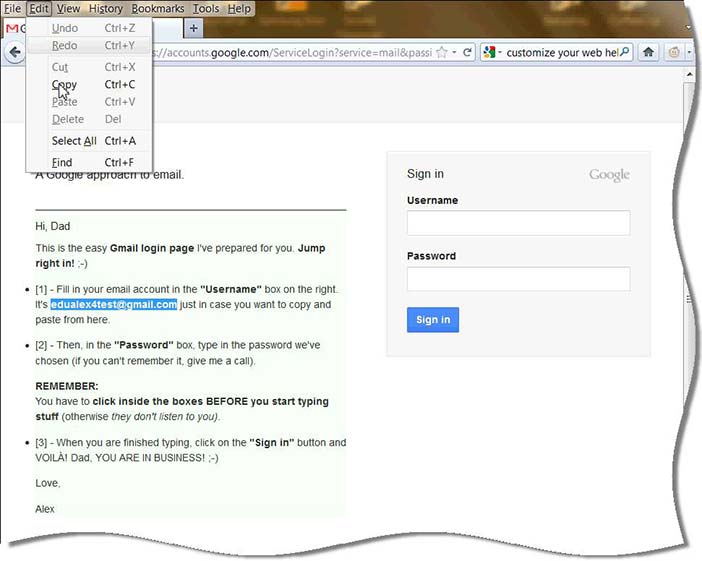

Compared to the previous example, Customize Your Web opens even more powerful possibilities for computer-mediated communication. In Figure 25.11 and Figure 25.12, we show how facilitating interaction with Gmail is substantially different in Customize Your Web, compared to CoScripter and Internet Buttons. Because the programming paradigm is close to programming on wheels, the possibilities for representation of self and message are limited only by technicalities of the tool (like problems with unique identification of elements on certain Web pages) and the resources of the user. The level of computational thinking required to use this tool effectively is advanced.

Figure 25.11: Gmail login page modified with “Customize Your Web”

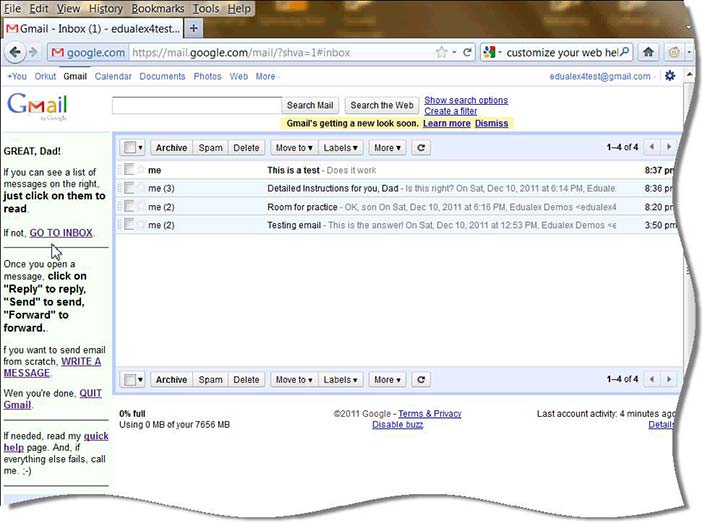

In Figure 25.11 (see customized text starting with “Hi, Dad” on the left), we can see that the user can script his own presence and participation in someone else’s experience with Gmail. The complexity of self-representation and message in this case is considerable. Notice that in Figure 25.12, the conversation between the scripter and the targeted user (his Dad) extends over whole interaction spans with Gmail. In other words, a parallel communication about communication with Gmail (i.e., genuine metacommunication about Gmail) is explicitly and intentionally in place. This is one of the best examples of why the computers-as-media perspective calls for a different breed of HCI theories in order to help end users make the most of the virtually unlimited possibilities of social interaction and participation now available to them.

Figure 25.12: Gmail inbox page modified with “Customize Your Web”

Designing cohesive and consistent dialog turns throughout a Web process, as shown in the Customize Your Web example, is end user HCI design, not only end user programming and development. However, theories that do not include the designers in the communication process that they are designing have very little to say about how (and why) the current interface of Customize Your Web (see Figure 25.10) must be improved. For example, UCD is not going to help the user design all the conversational paths that his parallel communication with Dad might take in his absence. Dad can decide to use any of the available interface controls during interaction with Gmail. So, how does the user build a representation of conversational context that is consistent with what he is telling Dad at each step of the programmed interaction?

The answers to questions like this one can show not only the complexity of programming that end users can engage in at this stage of technological development, but also, and more importantly, what kinds of new dimensions a semiotic perspective can bring to HCI research and practice. In the next sub-section, we will very quickly mention how we are exploring computer-mediated communication issues with Semiotic Engineering.

25.5.2 Going a few steps further

One’s level of computing literacy will determine how one will be able to participate in new kinds of social interactions made possible by new ICT. As the examples above have shown, higher literacy levels raise the transformative power of communication to unprecedented standards. The challenge is in the air: “If computational thinking will be used everywhere, then it will touch everyone directly or indirectly. [...] If [it] is added to the repertoire of thinking abilities, then how and when should people learn this kind of thinking and how and when should we teach it?” (Wing 2008: p. 3720)

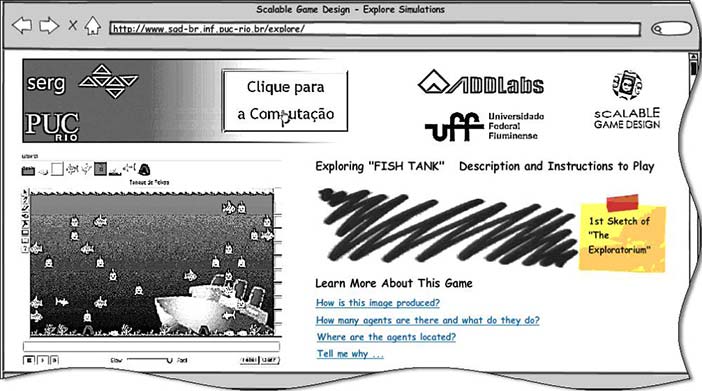

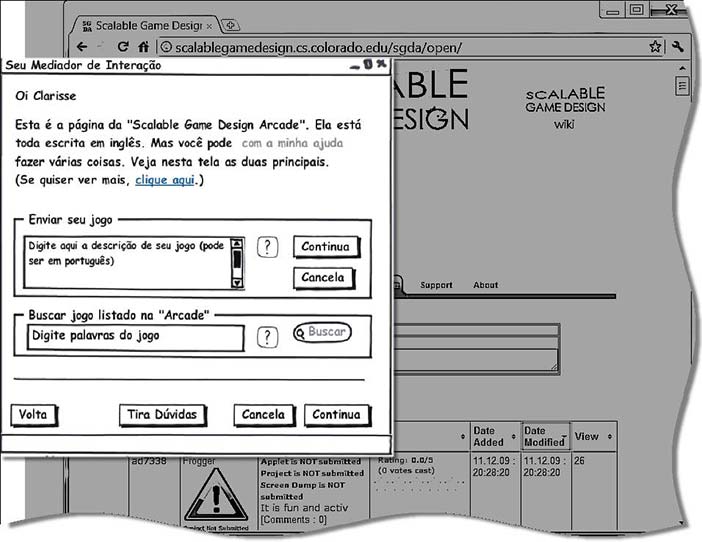

One of the most successful responses to date is AgentSheets, a visual programming environment designed mainly for teaching computational thinking at schools (Repenning and Ioannidou 2004). The Scalable Game Design Project, carried out by Alexander Repenning and his group at the University of Colorado at Boulder, has been educating school teachers and students in computational thinking for many years now. In 2010, we brought the project to Brazil and started working with a public school in the city of Niterói.

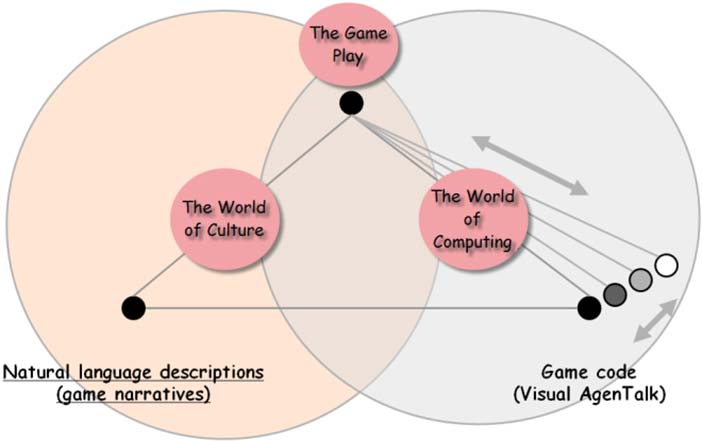

Given our interest in semiotic theories of HCI, we have approached the project with a dual perspective. On the one hand, we want to educate teachers and students in computational thinking. On the other, we want to show them that computational thinking gives them a completely new language for self-expression and communication, with which they can do whatever they can mean (or “signify”, in semiotic terms). Our strategy is to work constantly with the semiotic relations that hold between three distinct signification systems (see Figure 25.13): natural language, game play (i.e., executable games programmed by AgentSheets users) and game code (i.e., the various levels of programming language encodings that make the games run). In AgentSheets, the users can program in Visual AgenTalk, a visual programming language with a textual XML counterpart.

Figure 25.13: Semiotic varieties in a visual programming environment

Empirical evidence collected in game design sessions with a group of students in 2010 has shown elaborate and intriguing signification patterns when we compared, for each game, the natural language descriptions provided by the programmers, the Visual AgenTalk code, and the resulting executable game representations (de Souza et al. 2011). In particular, we traced interesting entity-naming strategies, token/type relations in representation choices, and curious transitive structure changes when contrasting natural language and computer program representations. Subsequent research steps carried out in 2011 with another group of students suggest that semiotic relations between signification systems involved in using AgentSheets can be used to raise the level of computing awareness among learners.

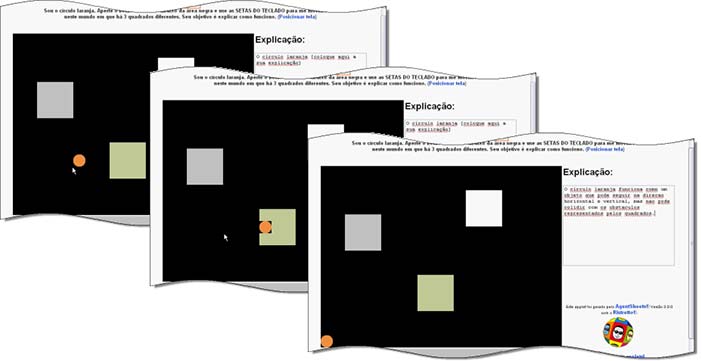

In Figure 25.14, we show successive screen shot snippets from a semiotic exploration of relations between game play signs, game code signs and natural language signs in a very abstract environment used by CS graduate students. The “game” representation (an executable simulation) has only four static signs: an orange circle; three colored squares; and a black background. The dynamic signs are also very few and very simple: if the user presses arrow keys, the orange circle moves in the corresponding direction; if the circle moves next to the boundaries of any of the three squares, it is “trapped” by the square and the game is “reset”. The fascinating aspects of the exploration were to examine what meanings the participating students assigned to the simulation, and to examine how the apparently identical behaviors of the three squares had been intentionally encoded by the experimenters as completely different representations.

Figure 25.14: Screen shots from a semiotic exploration of simulation signs with AgentSheets

When asked to write down their explanation (“Explicação” in Figure 25.14) for the orange circle behavior, inferred exclusively from the participant’s interaction with the game, the students used signs referenced to the “protagonist” of the game. For example, the explanation depicted in Figure 25.14 says that “the orange circle cannot collide with any of the obstacles represented by the squares”. A totally different story, however, would be told if the student looked at the underlying program. The three squares are actually programmed as very different agents. The green square “traps” the circle as it comes near it. The grey square is like an attractive area into which the orange circle “throws itself” as soon as it comes near it. And the white square is no more than “a hole in the ground”, an agent-free space in the worksheet, into which the circle may “fall propelled by the ground”. Thus, the designer’s story about this simulation is a totally different one if looked at from the inside or the outside. From the inside, it might go like this, for instance: “The orange circle loves the grey square and jumps into it as soon as it sees it; it must escape from the green square that traps it when the circle comes near it; and it must beware of a square-shaped hole in the ground, into which it must not fall.”
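
The contrast between the outside and the inside story can be made concrete with a toy model in Python. It is not the AgentSheets program used in the exploration and it does not use Visual AgenTalk: the function names, the use of a simple distance value, and the `initiator` labels are all assumptions made for illustration, with the colours taken from the designer's story quoted above. Three differently encoded squares produce the same observable outcome (the game is reset), so a player watching the game cannot tell them apart, whereas a reader of the code can.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    reset: bool      # what the player can observe during game play
    initiator: str   # who acts, visible only in the code


def green_square(distance: int) -> Outcome:
    # The square is the active agent: it traps a circle that comes near.
    return Outcome(reset=distance <= 1, initiator="square")


def grey_square(distance: int) -> Outcome:
    # The circle is the active agent: it throws itself into the square.
    return Outcome(reset=distance <= 1, initiator="circle")


def white_square(distance: int) -> Outcome:
    # No square agent at all: the ground propels the circle into a hole.
    return Outcome(reset=distance <= 1, initiator="ground")


SQUARES = {"green": green_square, "grey": grey_square, "white": white_square}

# Outside view: the observable behavior at distances 0..3, per square.
outside = {name: [rule(d).reset for d in range(4)] for name, rule in SQUARES.items()}

# Inside view: who initiates the reset when the circle is adjacent.
inside = {name: rule(1).initiator for name, rule in SQUARES.items()}

print(outside)
print(inside)
```

In this model the outside view is the same for all three squares, while the inside view assigns agency to three different entities, which is the kind of difference that an explanation inferred from game play alone cannot capture.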