Over the last two decades, interaction design has emerged as a design discipline alongside traditional design disciplines such as graphic design and furniture design. While it is almost tautological that furniture designers design furniture, it is less obvious what the end product of interaction design is. Löwgren's answer is "interactive products and services" (Löwgren 2008). This narrows it down, but leaves open the question of what it means for something to be interactive.

Interactive systems have been studied within the field of Human-Computer Interaction since the early 1980s. This research has given us valuable knowledge about users, systems and design methodology, but few have asked "philosophical" questions about the very nature of interactivity and the interactive user experience.

I will approach the question of interactivity from a number of angles, in the belief that a multi-paradigmatic analysis is necessary to do justice to the complexity of the phenomenon. I will start by defining the scope through some examples of interactive products and services. Next, I will analyse interactivity and the interactive user experience from a number of perspectives, including formal logic, cognitive science, phenomenology, and media and art studies. A number of other perspectives, e.g. ethnomethodology, semiotics, and activity theory, are highly relevant, but are not included here. (For an analysis that includes these perspectives, see Svanaes 2000.)

Author/Copyright holder: Courtesy of Rikke Friis Dam and Mads Soegaard. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported).

Introduction to Philosophy of Interaction

Guiding Principles of Interaction Design derived from Heidegger

Principles of Interaction Design derived from Merleau-Ponty

Advantages and Problems with Cognitivism

11.1 Terminology

The Merriam-Webster dictionary defines interaction as "mutual or reciprocal action or influence". Taking this definition as a starting point, what is the meaning of interactive and interactivity? A product or service is interactive if it allows for interaction. An artefact's interactivity is its interactive behaviour as experienced by a human user. Or to be more precise, it is the potential for such experiences. Its interactivity is a property of that artefact, alongside other properties like its visual appearance. Interactivity can also be used as a collective noun to signify everything interactive, similar to how radioactivity refers to everything radioactive.

Many definitions exist for "the user experience". I prefer this one: "a person's perceptions and responses that result from the use or anticipated use of a product, system or service" (ISO 2009).

11.2 The scope: Interactive products and services

What makes a product or service interactive? One of the simplest interactive products imaginable is a touch-sensitive light switch like the one in Figure 1A. You touch it once to turn the light on, and again to turn the light off. At the other end of the complexity scale you find interactive products like the cockpit of a modern aeroplane (Figure 1B), which allows trained pilots to fly the plane through a number of input devices and visual displays.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 11.1 A-B-C-D: Examples of interactive products and services

Examples of interactive services include internet banking, online shopping, and social media, all made possible through networked digital devices like PCs (1C) and mobile phones (1D). All of the above examples are interactive. Are there digital products that are not interactive?

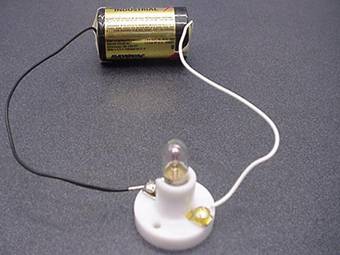

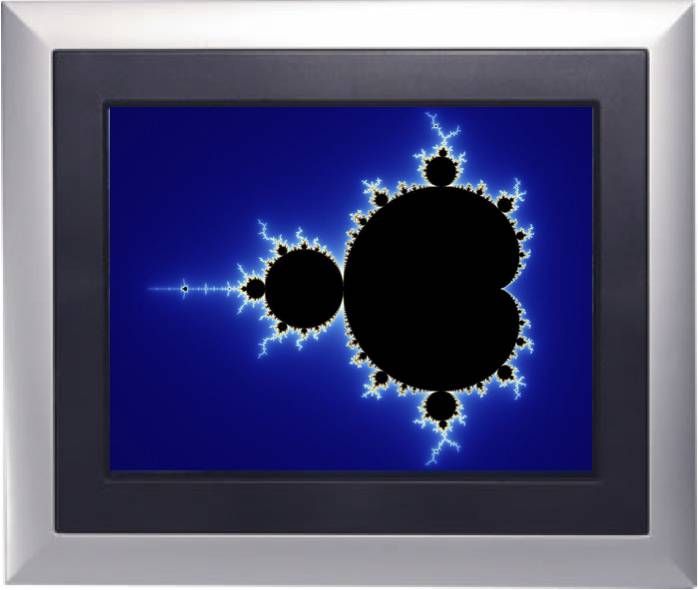

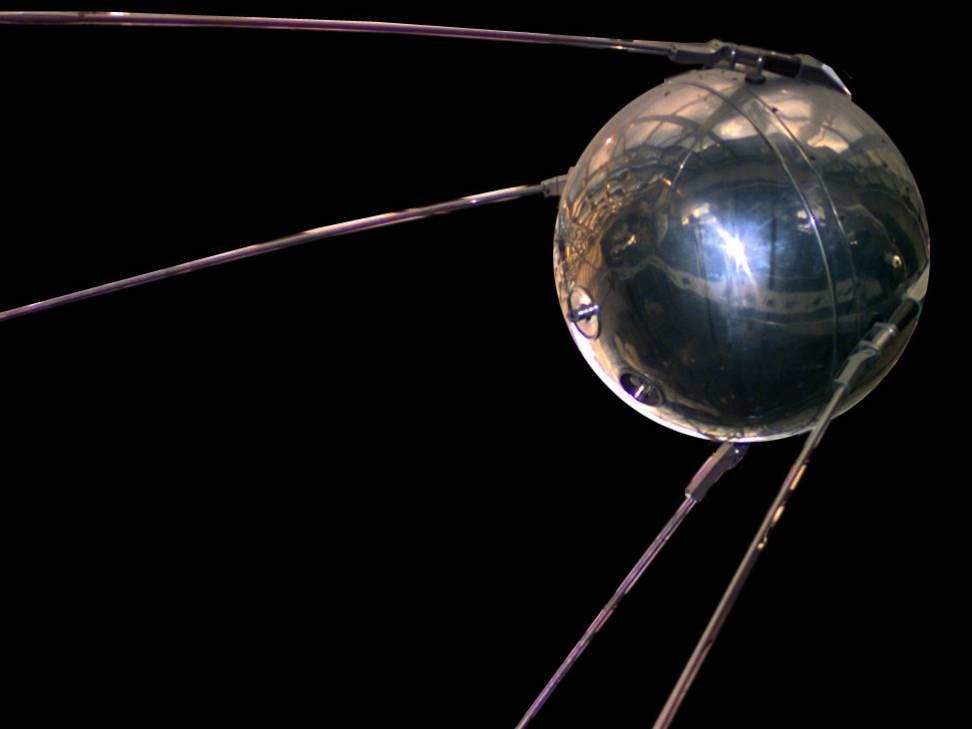

Figure 11.2 A-B-C: Some non-interactive artefacts

If you solder a light bulb to a battery and leave it on your desk until the battery is drained (Figure 2A), this product can hardly be called interactive. You can of course turn it off by cutting one of its wires, but that would not be an intended interactivity of the product. The light bulb could be substituted with something far more complex, like a digital photo frame that is programmed to generate random fractals on a screen (Figure 2B). With no buttons, handles or other means for interacting, that product would not be interactive either, despite its complex behaviour. It would be like the 1957 Sputnik 1 satellite (Figure 2C), which contained a "transmit only" radio beacon that transmitted beeps from space for 20 days until its batteries ran out.

From the above examples it becomes clear that what makes a product or service interactive is not its complexity, nor the fact that it is digital, but whether it is designed to respond to actions by a user.

11.3 Formal descriptions of interactive behaviour

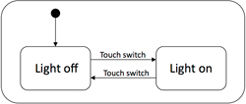

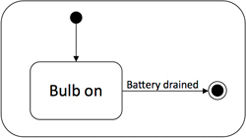

One way of describing the interactive behaviour of a product or a service is through a formal representation. A number of such formalisms exist, the simplest being state diagrams. A state diagram is a visual representation of a Finite State Machine.

Figure 3A shows a state diagram for the touch-sensitive light switch in Figure 1A. It contains two states, "Light off" and "Light on", and two user-initiated transitions between the states ("Touch switch"). The black dot leading into the "Light off" state tells us that this is the initial state, i.e. the light starts out in the "off" state.
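The same diagram can be written down as a transition table. The following Python sketch is one possible encoding and not a notation used in this chapter; the names `TRANSITIONS` and `LightSwitch` and the event string `"touch"` are illustrative assumptions.

```python
# Transition table for the touch-sensitive light switch (Figure 3A).
# Keys are (current state, user event); values are the next state.
TRANSITIONS = {
    ("Light off", "touch"): "Light on",
    ("Light on", "touch"): "Light off",
}


class LightSwitch:
    """A two-state finite state machine driven by user touches."""

    def __init__(self):
        # The black dot in the diagram: the machine starts in "Light off".
        self.state = "Light off"

    def handle(self, event):
        # Events with no matching transition leave the state unchanged.
        self.state = TRANSITIONS.get((self.state, event), self.state)
        return self.state


switch = LightSwitch()
first = switch.handle("touch")   # one touch turns the light on
second = switch.handle("touch")  # a second touch turns it off again
```

Everything the diagram says is contained in the table: two states, one kind of user event, and an initial state.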

Figure 11.3 A-B: State diagrams for light switch and "Bulb with battery"

In Figure 3B, we see the state diagram for the non-interactive "Bulb-with-battery" device. It starts with the light being on and stays in that state until the battery is drained. The black dot in a circle is the "game over" symbol. When the battery is drained, the device stops being what it was intended to be.
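The difference between the two diagrams can be made explicit by marking which transitions are initiated by a user. The sketch below is a hedged illustration of that idea; the tuple layout, the `"END"` label for the final state, and the functions `is_interactive` and `run` are assumptions made for this example.

```python
# Each transition is (source state, trigger, target state, user_initiated).
BULB_WITH_BATTERY = [
    ("Light on", "battery drained", "END", False),
]

LIGHT_SWITCH = [
    ("Light off", "touch switch", "Light on", True),
    ("Light on", "touch switch", "Light off", True),
]


def is_interactive(transitions):
    """True if at least one transition is triggered by a user action."""
    return any(user_initiated for _, _, _, user_initiated in transitions)


def run(transitions, initial, triggers):
    """Feed a sequence of triggers to the machine and return the final state."""
    state = initial
    for trigger in triggers:
        for source, name, target, _ in transitions:
            if source == state and name == trigger:
                state = target
                break  # at most one transition fires per trigger
    return state
```

Under this reading, `is_interactive(LIGHT_SWITCH)` holds while `is_interactive(BULB_WITH_BATTERY)` does not: touching the bulb device changes nothing, and only the drained battery moves it to its final state.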

A number of more sophisticated formalisms have been used for describing interactive behaviour, including Harel's hierarchical state diagrams (Harel 1987), temporal logic (Hartson and Gray 1992), Petri nets (Elkoutbi and Keller 2000) and algebra (Thimbleby 2004).

Formal representations of interactive behaviour are well suited to describe the technical side of interactivity, but say little of the human side. They are of little value in answering questions like: "How is the interaction experienced?", "What does the interaction mean to the user?" To be able to answer such questions about the interactive user experience, we have to leave formal logic and the natural sciences and turn to the humanities and the social sciences.

11.4 Cognitive science: Interaction as information processing

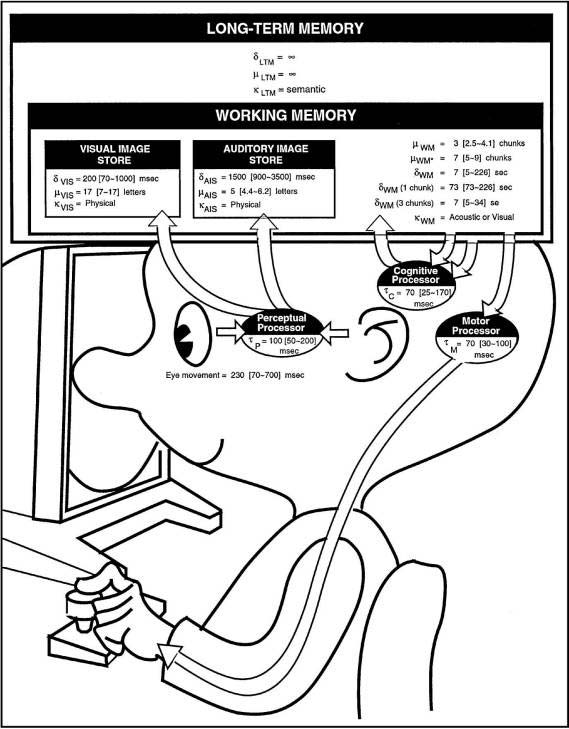

Since the birth of Human-Computer Interaction (HCI) as a scientific discipline in the 1980s, cognitive science has been the dominant paradigm for describing the human side of the equation. "The Psychology of Human-Computer Interaction" by Stuart K. Card, Thomas P. Moran and Allen Newell (Card et al. 1983) presented a model of the user based on an information processing metaphor (Figure 4A). Here, the interaction is modelled as information flowing from the artefact to the user, where it is processed by the user's "cognitive processor", leading to actions like pushing a button. Their model sees interaction as the sum of stimuli reception and user actions.

Author/Copyright holder: Card, Moran and Newell. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 11.4.A: Modelling the user as an information processor.

Author/Copyright holder: Donald A. Norman. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

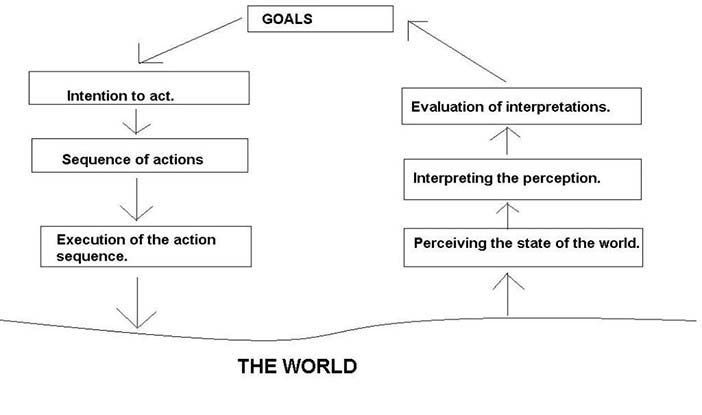

Figure 11.4.B: The seven step action cycle

Imagine the user in Figure 4A operating the light switch in Figure 1A. The act of turning on the light switch would be modelled as information about the state of the light reaching the perceptual processor through the user's eyes, where it would flow to the working memory, and be processed by the cognitive processor. A command would then be sent to the motor processor, leading to the hand pushing the switch.
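To make the metaphor concrete, the flow just described can be sketched as a small pipeline. This is a toy illustration of the information processing view, not a reproduction of Card, Moran and Newell's model; the class `ModelUser`, its method names, and the string labels are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ModelUser:
    """Toy stand-in for the user as an information processor."""
    goal: str = "light on"
    working_memory: list = field(default_factory=list)

    def perceptual_processor(self, stimulus):
        # Information about the world enters through the senses
        # and is placed in working memory.
        self.working_memory.append(stimulus)

    def cognitive_processor(self):
        # Compare the most recent percept with the goal and choose a command.
        percept = self.working_memory[-1]
        return None if percept == self.goal else "push switch"

    def motor_processor(self, command):
        # Turn the command into a physical action (here just a label).
        return f"hand: {command}" if command else "no action"


def one_cycle(user, light_state):
    """Perceive the light, decide, and act: one pass through the pipeline."""
    user.perceptual_processor(light_state)
    return user.motor_processor(user.cognitive_processor())
```

In this sketch the user is nothing but a chain of processors between stimulus and response, which is the assumption that the later sections of this chapter question.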

In "The Design of Everyday Things" (Norman 1988), Don Norman elaborates the details of what is going on as a seven-step "action cycle" (Figure 4B). Returning to our user in front of the light switch in Figure 1A, Norman would describe this as the user having the goal of turning the light on (step 1). This goal would lead to an intention to act (step 2), leading to a sequence of actions being sent to the motor processor (step 3), where it would trigger a hand movement (step 4). In "the world", the light would turn on, and this would be perceived (step 5) and interpreted (step 6) by the user. Finally, the user would evaluate the new state of the light as a fulfilment of the goal (step 7), and be ready for a new action cycle. The action cycle is described by Norman in the following video.

Author/Copyright holder: Donald A. Norman. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

The action cycle as described by Don Norman

Based on their model of the user, Card, Moran and Newell devised a framework for predicting user behaviour called GOMS (Goals, Operators, Methods, and Selection Rules). A number of GOMS-inspired cognitive frameworks have since been developed to model the behaviour of the user, all based on the same basic assumptions of the human information processing model.

GOMS-like models have been successful in predicting keystroke-level human behaviour for routine tasks, but have shown little explanatory and predictive power when it comes to more open tasks, like updating your Facebook profile. Further, they are of little help in understanding the interactive user experience.
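To give a sense of what such a prediction looks like, the sketch below follows the Keystroke-Level Model, the simplest member of the GOMS family, which estimates the time of a routine task by summing fixed operator times. The durations are commonly cited approximations and should be treated as illustrative; the function `predict_seconds` and the task sequence are assumptions made for this example.

```python
# Approximate operator times in seconds, as commonly cited for the
# Keystroke-Level Model; treat them as illustrative, not authoritative.
OPERATOR_SECONDS = {
    "K": 0.2,   # press a key or button (skilled typist)
    "P": 1.1,   # point at a target with the mouse
    "H": 0.4,   # move the hand between keyboard and mouse
    "M": 1.35,  # mental preparation
}


def predict_seconds(operators):
    """Sum the operator times for a sequence such as 'MHPK'."""
    return sum(OPERATOR_SECONDS[op] for op in operators)


# Hypothetical routine task: think, reach for the mouse,
# point at a button, click it.
estimate = predict_seconds("MHPK")
```

The prediction is a single number of seconds. It says how long the routine will take, but nothing about how the interaction is experienced.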

In "The Design of Everyday Things", Don Norman introduced the concept of affordance that had been developed by the psychologist J.J. Gibson. Norman defines affordance as "...the perceived and actual properties of the thing, primarily those fundamental properties that determine just how the thing could possibly be used". While Gibson's ecological approach to human cognition and perception in many respects is incommensurate with the information-processing approach, the affordance concept has mainly been interpreted within HCI to describe what functions an object allows for, and how this is "signalled" through its visual appearance. Norman illustrates the affordance concept in the video below. Adding the concept of affordance to the framework, the light switch in Figure 1A would appear to the user as an object that affords turning the light on and off.

Author/Copyright holder: Donald A. Norman. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Don Norman's illustration of the Affordance concept

A number of researchers in HCI have argued that the information-processing model reduces the user to a mechanical symbol-processing machine, leaving out important aspects of what defines us as human. One of the earliest criticisms of the information processing approach to human-computer interaction was voiced by Stanford professor Terry Winograd and Fernando Flores in their influential book "Computers and Cognition" (Winograd and Flores 1986). The book was primarily written as a criticism of artificial intelligence and cognitive science, but has strong relevance for a discussion of interactivity.

Winograd and Flores presented three alternatives to cognitive science, of which the phenomenology of the German philosopher Martin Heidegger (1889-1976) is the most relevant here.

11.5 Heidegger: Interaction as tool use

Winograd and Flores argue in "Computers and Cognition" that cognitive science takes for granted that human cognition and communication are symbolic, and that symbols like "cat" refer in a one-to-one manner to objects in the world. Heidegger's philosophy of being (Heidegger 1996; original version is Heidegger 1927) rejects this view and starts out with our factual existence in the world and the way in which we cope with our physical and social environment. His philosophy spans a wide range of topics, of which Winograd and Flores mainly use his analysis of tools. Heidegger used a carpenter and his hammer as an example (Figure 5B). Winograd and Flores argue that a computer can be viewed as a tool: For skilled users of computers, the computer is transparent in use - it is ready-to-hand. When I write a document in a text editor, my focus is on the text and not on the text editor. If my text editor crashes, my focus is moved from the text that I am working on to the text editor itself. It is only when we have a breakdown situation and the computer stops working as a tool that it emerges as an object in the world - it becomes unready-to-hand. If we are not able to fix the problem that causes the breakdown, it becomes present-at-hand.

Figure 11.5 A-B-C-D: The "hammerness" of things

Heidegger would describe the light switch in Figure 1A as a tool for controlling the light. As part of our everyday life, a light switch is an integral part of our background of readiness-to-hand, and the interaction with the switch is to some extent invisible to us. It is only when the switch stops working as expected, or when we consciously choose to reflect on it, that it emerges from the background as an object.

Heidegger does not deny the fact that the light switch exists in the world as an object to be viewed, touched and manipulated. His point is that the essence of the switch only emerges through use. Its "switchness" is hidden from us until we put it into use. An important aspect of its "switchness" is that it allows for a certain kind of interaction. When the ape in Kubrick's 2001: A Space Odyssey (Figure 5C) realises that the piece of bone in front of him can be used to crack things, the "hammerness" of the bone emerges to him - and bones forever stop being only bones. The bone's "hammerness" had been there all the time, but it needed to be put into practice to emerge. Similarly with the interactivity of a light switch - its "switchness" emerges through use.

From a Heideggerian perspective, the specific meaning of the interaction with the light switch depends on the use situation and the user's intention. Turning the light on as part of my everyday action of entering a room is different from turning the light on to see if the switch works. In the first case the interaction is part of a wider goal, while in the second case it would be a goal in itself. Cognitive science would miss this subtle difference, as it would model both interactions as the same goal-seeking information processing behaviour. Heidegger would also argue that to be able to understand how an interaction is meaningful for a specific user, we would have to understand the lifeworld of that user, i.e. the cultural and personal background that serves as a frame of reference and context for every experience of that person.

Heidegger further argues that tools exist in the shared practice of a culture as part of an equipmental nexus, e.g. hammers with nails and wood. The hammer gets its significance through its relation to nails and wood, as the nail gets its significance through its relation to hammer and wood. The elements form a whole, and each element gets its significance from its role in this whole.

11.6 Merleau-Ponty: Interaction as perception

Maurice Merleau-Ponty (1908-1961) was, besides Jean-Paul Sartre, the most influential French philosopher of the 1940s and 1950s. Inspired by Heidegger, Merleau-Ponty stressed that every analysis of the human condition must start with the fact that the subject is in the world. This being-in-the-world is prior to both object perception and self-reflection. To Merleau-Ponty, we are not Cartesian self-knowing entities detached from external reality, but subjects already existing in the world and becoming aware of ourselves through interaction with our physical environment and with other subjects.

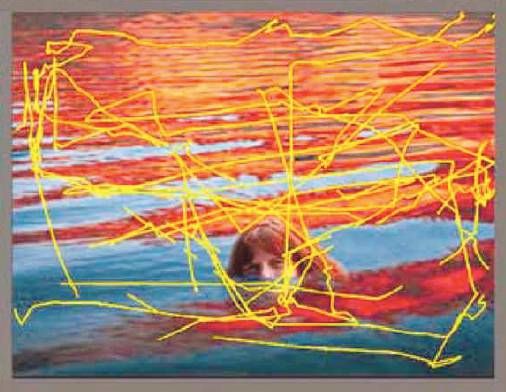

In his major work, "The Phenomenology of Perception" (original: Merleau-Ponty 1945; translated: Merleau-Ponty 1962), Merleau-Ponty performs a phenomenological analysis of human perception. His purpose is to study the "precognitive" and embodied basis of human existence. He ends up rejecting most of the prevailing theories of perception of his time. In all his writing there is a focus on the first-person experience. Merleau-Ponty rejected the idea of perception as a passive reception of stimuli. When we perceive objects with our eyes, this is not a passive process of stimuli reception, but an active movement of the eyeballs in search of familiar patterns. This view is in total opposition to the popular view in "information-processing" HCI that sees perception as sense data being passively received by the brain. To Merleau-Ponty there is no perception without action; perception requires action.

Author/Copyright holder: Vogt and Magnussen 2007. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below

Figure 11.6.A: Rapid eye movements of layperson

Author/Copyright holder: Vogt and Magnussen 2007. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below

Figure 11.6.B: Rapid eye movements of artist

Perception hides from us this complex and rapid process going on "closer to the world" in "the pre-objective realm". Modern eye trackers allow us to see these rapid perceptual interactions unfold in vision. The optics of the human eye is such that we see the world through a rapidly moving peephole. In Figure 6 we see the rapid eye movements of two different persons viewing the same painting, a non-artist (6A) and a trained artist (6B). We see how the artist rapidly scans the whole painting (Figure 6B), while the layperson mostly focuses on the face of the girl (Figure 6A) (from Vogt and Magnussen 2007). The result of their different viewing styles is that they actually see different paintings. Merleau-Ponty uses the term phenomenal field to denote the personal background of experiences, training, and habits that shapes the way in which we perceive the world.

Merleau-Ponty saw perception as an active process of meaning construction involving large portions of the body. The body is, a priori, the means by which we are intentionally directed towards the world. When I hold an unknown object in my hand and turn it over to view it from different angles, my intentionality is directed toward that object. My hands are automatically coordinated with the rest of my body and take part in the perception in a natural way. Any theory that locates visual perception in the eyes alone does injustice to the phenomenon. To Merleau-Ponty, the body is an undivided unity, and it is meaningless to talk about the perceptual process of seeing without reference to all the senses, to the total physical environment in which the body is situated, and to the "embodied" intentionality we always have toward the world.

The body has an ability to adapt and extend itself through external devices. Merleau-Ponty used the example of a blind man's stick to illustrate this. When I have learned the skill of perceiving the world through the stick, the stick has ceased to exist for me as a stick and has become part of "me". It has become part of my body and at the same time changed it.

Applied to an analysis of interactivity, Merleau-Ponty invites us to see interaction as perception. If I test out the light switch in Figure 1A to see if it works, this interaction can be seen as a perceptual act involving both eyes and hand. I move my hand to the switch as part of the process of perceiving its behaviour, in the same way as my eyes make rapid eye movements when I see a painting. The hand movements towards the switch result from my directedness towards the object of perception, i.e. the behaviour of the switch.

In more complex interactions, like when an experienced computer user plays World of Warcraft, the perceiving body extends into the game. When the player tries out a new sword that she has acquired for her game character, she perceives its workings through the mouse and the part of the software that lets her control her character. Playing World of Warcraft is similar to riding a bicycle or driving a car in that the technology becomes a tool, but it differs in that the world is computer generated.

The integrated view of action and perception makes Merleau-Ponty an interesting starting point for a discussion of meaningful interactive experiences. A consequence of his theory is that it should be possible to lead users into interactions with the computer that are meaningful at a very basic level. The interactions themselves can be meaningful.

The interactive artefact in Figure 7 exemplifies this. Try it by clicking on the "Mr. Peters" button!

The button has a script that makes it jump when the cursor is moved over it. The user tries to click on the button, but experiences that "Mr. Peters" always "escapes". Most users understand the intended meaning of the example and describe Mr. Peters as a person who always avoids you, a person you should not trust. The interaction itself works as a metaphor for Mr. Peters' personality. How does the philosophy of Merleau-Ponty shed light on this example?
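The behaviour of such a script can be sketched without any graphics library. The version below is a guess at the logic, not the script behind the original figure: the class `EscapingButton`, the canvas size, and the rule of jumping to the half of the canvas that the cursor is not in are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class EscapingButton:
    """A button that jumps away as soon as the cursor moves over it."""
    x: int
    y: int
    width: int = 80
    height: int = 24
    canvas_width: int = 400
    clicks: int = 0

    def contains(self, px, py):
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)

    def on_mouse_move(self, px, py):
        if self.contains(px, py):
            # Jump to the edge of the canvas half that the cursor is NOT in.
            if px < self.canvas_width // 2:
                self.x = self.canvas_width - self.width
            else:
                self.x = 0

    def on_click(self, px, py):
        hit = self.contains(px, py)
        if hit:
            self.clicks += 1
        return hit


def try_to_click(button, px, py):
    """A user must first move the cursor to a point before clicking there."""
    button.on_mouse_move(px, py)
    return button.on_click(px, py)
```

Because every click is preceded by a cursor movement to the same point, `try_to_click` never succeeds in this sketch. The user can only find this out by trying.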

11.6.1 Key points

11.6.1.1 Perception requires action

Perception of the "Mr. Peters" button requires action. The button as interactive experience is the integrated sum of its visual appearance and its behaviour. Without action, we are left with the visual appearance of the button, not the actual button as it emerges to us through interaction.

11.6.1.2 Perception is an acquired skill

One of the necessary conditions for the Mr. Peters example to work is that the user has acquired the skill of moving the mouse cursor around. This skill (Merleau-Ponty: habit) is part of being a computer user. Without this skill, the only perception of the Mr. Peters button would be its visual appearance.

11.6.1.3 Tool integration and bodily space

For the trained computer user, the mouse has similarities with the blind man's stick. The physical mouse and the corresponding software in the computer are integrated into the experienced body of the user. The computer technology, and the skills to make use of it, change the actual bodily space of the user by adding to the potentials for action in the physical world also the potentials for action presented by the computer. The world of objects is in a similar manner extended to include also the "objects" in the computer.

11.6.1.4 Perception is embodied

Experiencing the "Mr. Peters" button requires not only the eye, but also arm and hand. Mouse movements and eye movements are integrated parts of the perceptual process that lead up to the perception of the button's behaviour. The interactive experience is both created by and mediated through the body.

11.6.1.5 Intentionality towards-the-world

As a skilled computer user, I have a certain "directedness" towards the computer. Because of this intentionality, the Mr. Peters button presents itself to me not only as a form to be seen, but also as a potential for action with an expectation for possible reactions. From seeing the button to moving the cursor towards it, there is no need for a "mental representation" of its position and meaning. The act of trying to click on the button is part of the perceptual process of exploring the example. When the button jumps away, I follow it without having to think.

11.6.1.6 The phenomenal field

In the above example, the context of the button is given by the leading text and by the user's past experiences with graphical user interfaces. It is important to notice that this example only works with users who are used to clicking on buttons to find more information. This is the horizon of the user, i.e. the phenomenal field that all interaction happens within. The Mr. Peters button emerges as a meaningful entity because the appearance of a button on a computer screen leads to a certain expectation and a corresponding action. The action is interrupted in a way that creates an interactive experience that is similar to that of interacting with a person who always escapes you.

With Merleau-Ponty it becomes meaningless to talk about interaction as the sum of stimuli reception and action, as cognitive science tells us. Interaction is better described as a kind of perception. I perceive the behaviour of the "Mr. Peters" button through interaction. This perception involves both hand and eye in an integrated manner. Interaction-perception is immediate and "close to the world".

11.7 A media and art perspective

While phenomenology can help us understand the interactive user experience for a specific product, and might help us choose between two or more alternative designs, it gives us little guidance on what designs are possible. To be able to fully utilize the potential of interactive media, it is important to have a deep understanding of the medium itself. There is a tradition in Media and Art Studies of asking questions concerning the nature of the medium being studied. However, compared to the vast literature on the social and cultural impact of new media, media studies with a focus on the properties of the medium itself are rare. The most prominent author on this subject is Rudolf Arnheim (1904-2007). Arnheim dealt with non-interactive media like film, painting, drawing, sculpture, and architecture, and he analysed their media-specific properties from an artistic and psychological perspective. In the introduction of "Art and Visual Perception: a Psychology of the Creative Eye" (Arnheim 1974), he states explicitly that he is not concerned with the cognitive, social, or motivational aspects. Nor is he concerned with "the psychology of the consumer". By ignoring all elements of social function and meaning in a traditional sense, he was free to discuss issues such as balance, space, shape, form, and movement in relation to the different media. Arnheim draws heavily on examples from art and gestalt psychology. What is relevant for the current study is not his results, but his approach to the study of a new medium.

We find a similar approach to studying a medium in artist and Bauhaus teacher Johannes Itten's theory of colours.

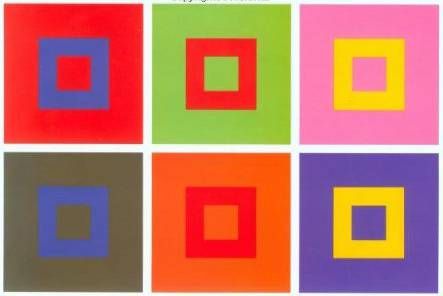

Figure 11.7: One of Itten's explorations of the interplay between colours

In Figure 8 we see some of the coloured squares that he drew to illustrate how the perception of colour changes with the background (Itten 1974).

Figure 11.8 A-B: A white and a black pixel enlarged

Itten brought to the Bauhaus School of Design the idea that students of design should develop a deep knowledge of their media and materials through explorations of their properties. Seen as a medium and a material, the modern computer can be viewed as a display of pixels that each can have only one colour at any given time. Through some input device(s), the user can interact with this matrix of pixels. In Figure 9 we see a white and a black pixel enlarged.

The two pixels in Figure 9 are static in the sense that they do not respond to input from the user. An interactive pixel is a pixel that responds to user actions. The simplest "artefact" of this kind is a pixel that changes colour when clicked on. The pixel in Figure 10B has what we call "push button" behaviour. Figure 10A shows its state diagram.

The two interactive artefacts in Figure 11 look the same, but differ in behaviour. The "user experience" of an interactive artefact is the sum of its visual appearance and its interactive behaviour. The behaviour can only be experienced through interaction, and requires an active user. The fact that the pixel in Figure 11A is a "push button" and the pixel in Figure 11B is a "toggle" can only be perceived through interaction.
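The difference between the two behaviours can be sketched in a few lines. The exact semantics are an assumption here: the "push button" pixel is taken to be black only while the mouse button is held down, while the "toggle" pixel flips on each press and keeps its new colour. The class names and the `click` helper are illustrative.

```python
WHITE, BLACK = "white", "black"


class PushButtonPixel:
    """Black only while the mouse button is held down (assumed semantics)."""

    def __init__(self):
        self.colour = WHITE

    def mouse_down(self):
        self.colour = BLACK

    def mouse_up(self):
        self.colour = WHITE


class TogglePixel:
    """Flips colour on every press and keeps the new colour on release."""

    def __init__(self):
        self.colour = WHITE

    def mouse_down(self):
        self.colour = BLACK if self.colour == WHITE else WHITE

    def mouse_up(self):
        pass  # releasing the mouse button changes nothing


def click(pixel):
    """A full click: press, then release. Returns the colour seen at each step."""
    pixel.mouse_down()
    during = pixel.colour
    pixel.mouse_up()
    return during, pixel.colour
```

Before any input, the two objects are indistinguishable: both report the colour white. Only a call to `click` reveals which is which, mirroring the point that behaviour can only be perceived through interaction.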

Borrowing from gestalt psychology, I use the term interaction gestalts for these kinds of basic interactive user experiences (Svanaes 1993).

If the artefact in Figure 11A were placed in an art gallery, its interactive behaviour could only be perceived through use. Figure 12 illustrates this. A detached observer would miss the essence of this piece of minimalist interactive abstract art.

The artefacts in Figure 13 each consist of two pixels. Their behaviours are so simple that we can take them in as wholes, i.e. as interaction gestalts.

The previous artefacts all had the response located at the pixel you click on. By allowing pixels to affect each other, we get more complex artefacts. The artefacts in Figure 14 illustrate this. When clicked on, Figure 14A creates a foreground and a background: a black square moving on a white background. In Figure 14B the white square is active. This creates the illusion of a white square moving on a black background.

With three pixels, as in Figure 15, the spatialisation becomes clearer. This simple swapping of colours is what happens at the pixel level when objects move on the computer screen.
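The swapping of colours can be sketched as follows. The rule used here is an assumption made for illustration, since the exact behaviour is only given in the figures: only the black pixel is active, and clicking it swaps its colour with the neighbour to its right, wrapping around at the end of the row. The function name `click` is hypothetical.

```python
def click(pixels: list[str], index: int) -> list[str]:
    """Return the row of pixels after a click on pixels[index].

    Assumed rule: only the black pixel is active; clicking it swaps its
    colour with the neighbour to its right, wrapping around at the end.
    """
    if pixels[index] != "black":
        return pixels  # white pixels are passive background
    neighbour = (index + 1) % len(pixels)
    moved = list(pixels)
    moved[index], moved[neighbour] = moved[neighbour], moved[index]
    return moved


row = ["black", "white", "white"]
for _ in range(3):
    # Always click the black pixel, wherever it currently is.
    row = click(row, row.index("black"))
    print(row)
```

No pixel ever moves in this code; two pixels exchange colours, and the movement of a black square is something the observer reads into the sequence.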

The three-pixel artefact in Figure 16B has three states. As the artefact does not create the illusion of foreground/background, we perceive the behaviour as a rotation between states. It starts out all white, and after two states we are back "home" where we started. Figure 16A shows its state diagram. This kind of "state space" is the perceptual basis for the World-Wide-Web metaphor of moving between web pages, each web page being a state of the screen's pixels.
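A state diagram of this kind translates directly into a transition table. In the sketch below the state names and the pixel patterns of the two non-home states are hypothetical; the text only specifies that the artefact starts out all white and returns there after passing through two other states.

```python
# Hypothetical pixel patterns for the three states. Only "home" (all white)
# is given by the text; the other two patterns are placeholders.
STATES = {
    "home": ("white", "white", "white"),
    "s1": ("black", "white", "black"),
    "s2": ("black", "black", "black"),
}

# The state diagram as a transition table: one event (a click) and
# exactly one outgoing arrow per state.
TRANSITIONS = {"home": "s1", "s1": "s2", "s2": "home"}


def run(clicks: int, state: str = "home") -> str:
    """Follow the click arrows `clicks` times and return the state reached."""
    for _ in range(clicks):
        state = TRANSITIONS[state]
    return state


for n in range(4):
    print(n, run(n), STATES[run(n)])
```

Navigating a set of web pages has the same structure: each page is a state of the screen, and each link is an arrow in the table.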

Real computers do not have two pixels, but millions. Figure 17 shows an example of how a matrix of elements with simple behaviour can become something potentially useful. Even a matrix of as few as 5x5 pixels can be used to draw basic shapes, i.e. as an icon editor.
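A minimal sketch of such a matrix is given below. It assumes that each element has toggle behaviour, which the text does not state explicitly, and it renders the matrix as text rather than as graphics. The class name `IconEditor` and its methods are hypothetical.

```python
class IconEditor:
    """A square matrix of toggle pixels; every click flips exactly one pixel."""

    def __init__(self, size: int = 5) -> None:
        self.size = size
        self.black: set[tuple[int, int]] = set()  # (row, col) of black pixels

    def click(self, row: int, col: int) -> None:
        if not (0 <= row < self.size and 0 <= col < self.size):
            raise IndexError("click outside the pixel matrix")
        self.black ^= {(row, col)}  # symmetric difference toggles membership

    def render(self) -> str:
        return "\n".join(
            "".join("#" if (r, c) in self.black else "." for c in range(self.size))
            for r in range(self.size)
        )


editor = IconEditor()
# Draw a diagonal cross. The set union ensures that the centre pixel is
# clicked once only; a second click would toggle it back to white.
cross = {(i, i) for i in range(5)} | {(i, 4 - i) for i in range(5)}
for row, col in cross:
    editor.click(row, col)
print(editor.render())
```

The editor has no drawing logic of its own. Its usefulness emerges from 25 independent elements that each have the simplest possible behaviour.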

These kinds of explorations of interactive media can be extended in different directions. All the above examples have used only two colours and discrete states. If we include analogue input, full colour, hidden states, delays, animations, sound, algorithms and communication over the Internet, we get enough complexity to justify a whole new profession: Interaction Design.

11.8 Implications for interaction designers

What are the implications of this for interaction designers? We have gone through four perspectives on interactivity: interaction as information processing (Cognitive Science), interaction as tool use (Heidegger), interaction as perception (Merleau-Ponty), and interaction from a media and art perspective (Arnheim/Itten). The focus of the current analysis has mostly been on the interactivity of digital products. The implications for design will consequently mainly be related to the design of interactivity:

11.8.1 Interactivity is an important part of the user experience

Combining the perspectives of Merleau-Ponty and Arnheim, it becomes evident that the interactivity of digital artefacts is perceived as interaction gestalts at a very immediate level, similar to that of visual perception. Interactivity is not simply the behaviour necessary to implement a certain functionality, but an important quality of a digital product. We often talk about the "look and feel" of a digital product. Users perceive "the feel" of the product through interaction, and this thus becomes an important part of the resulting user experience. Users care about "the feel".

11.8.2 A product's "feel" should be designed with care to detail

If you want to design interactive products that stand out, you must be conscious of the interactive qualities of your products. "The feel" should consequently be designed, not only engineered. As with everything designed, God is in the details, and this holds for interactivity as well.

11.8.3 Perception of "the feel" requires action: Invite the user in

Perception of the interactive dimension of a product, its “feel”, requires user actions. It is therefore important that the product signals its potential for interaction, its “affordances”. The user experience is created through interaction. Interactivity that is hidden away is like a tree falling in the forest with no one watching.

11.8.4 Design for unforeseen use

From a phenomenological perspective, objects get their meaning through use and social interaction. As Norman pointed out in his video on affordances, a good product should be designed for use situations other than the one intended.

11.8.5 Design for "the lived body"

When designing for interaction beyond mouse and keyboard (e.g. mobile and whole-body interaction), design for the experienced body. This requires a focus on the bodily feeling of using the product.

11.8.6 Take responsibility for the feel of the total user experience

In some cases, the user experience is a result of the user’s interaction with a number of interconnected products and services, e.g. the combination of earplugs, an MP3 player, and an online music store. In those cases it is important that the "feel" of these products and services is designed in such a way that the sum gives rise to a good user experience.

11.8.7 Interaction designers need to learn basic programming skills

Designing interactivity requires the ability to make rapid "sketches" of interactive behaviour. This is important both for exploring different behaviours and for having a running specification to hand over to the programmers. Despite numerous attempts to make the process of designing interactivity non-technical, interaction designers who want to add an extra quality to the interactive user experience still need to learn basic programming skills. Programming is the tool for shaping interactivity. A lot can be done with simple programming environments like Processing and Arduino.

11.8.8 The perceptual field is personal: Test with real users and listen carefully

Make numerous sketches of interactive behaviour, but always test them on real users before you make important design decisions. Different users can perceive the same interactive behaviour in surprisingly different ways, and often very differently from you. This is not only because they interpret and experience their interactions differently, but also because their ways of interacting differ. Test for more than usability; ask them how it feels in use and listen carefully to what they tell you.

11.8.9 Intentionality and context matter: Make the tests realistic

The perception of a product's interactivity is to a large extent coloured by the user's intention. Trying out "the feel" of a product in a controlled setting is very different from using it in a real context and for a real purpose. Ideally, tests should be done with real tasks and in real contexts. If that is not possible, you should be aware of the difference between real use and your test setting, and how this colours the user experience.

11.8.10 Interaction designers should be skilled in kinesthetic thinking

The interactivity of digital products should be designed by interaction designers with a special sensitivity for "the feel" of a product. "The feel" is about action-reaction; it involves the whole body and it is about timing and rhythm. This requires the interaction designer to develop skills in kinesthetic thinking and bodily intelligence. While drawing classes are excellent for designing "the look", interaction designers should also consider classes in dance, drama or martial arts to develop their sensitivity for things interactive.

Getting the feel right is of course not sufficient to make successful interactive products, but it is my belief that in a competitive market, products with a well-designed feel will always stand out; interactivity matters.

11.9 Where to learn more

11.9.1 Books

Winograd, Terry and Flores, Fernando (1986): Understanding Computers and Cognition. Norwood, NJ, Intellect

This book by Stanford professor Terry Winograd and former minister of finance of Chile, Fernando Flores, was primarily written as a criticism of artificial intelligence and cognitive science, but has strong relevance for a discussion of interactivity. As mentioned above, it presents three alternatives to cognitive science, of which the phenomenology of Heidegger is the most relevant here. Their interpretation of Heidegger was strongly influenced by Berkeley professor Hubert Dreyfus. The book was important in showing the relevance of continental philosophy to fundamental discussions in computer science.

Dourish, Paul (2001): Where the Action Is: The Foundations of Embodied Interaction. MIT Press

This book has become the standard textbook on the relevance of phenomenology for HCI. It starts out with a discussion of Ubiquitous Computing (pre-iPhone) and Social Media (pre-Facebook), and shows that the phenomenology of Heidegger, Merleau-Ponty and Schutz is an excellent foundation for understanding the embodied and social nature of these technologies. Dourish also draws on Wittgenstein and his concept of language games, and introduces embodied interaction as a theoretical construct to capture the way in which interactive technologies become integral parts of our lives.

Dreyfus, Hubert L. (1990): Being-in-the-World: A Commentary on Heidegger's Being and Time, Division I. A Bradford Book

This is still one of the best introductions to the work of Martin Heidegger, from a philosopher who has been very successful in making continental philosophy available to Anglo-American readers. The book is a good starting point for a dive into 20th century German and French phenomenology.

Svanaes, Dag (2000): Understanding Interactivity: Steps to a Phenomenology of Human-Computer Interaction. Trondheim, Norway, Norges Teknisk-Naturvitenskapelige Universitet (NTNU)

Much of the current encyclopedia entry builds on my 2000 PhD monograph on interactivity. The book contains extended discussions of the four perspectives presented here, in addition to more background on Activity Theory, Ethnomethodology, and Semiotics. It also presents an experiment on how users perceive abstract interaction, and some examples of ways to simplify the design of interactivity. A PDF version of the book is available for download.

Fällman, Daniel (2003). In Romance with the Materials of Mobile Interaction: A Phenomenological Approach to the Design of Mobile Information Technology (PhD Thesis). Umeå Universitet

This thesis deals analytically and through design with the issue of Human-Computer Interaction (HCI) with mobile devices and mobile interaction. This subject matter is theoretically, methodologically, and empirically approached from two outlooks: a phenomenological and a design-oriented attitude to research. The book gives a very good overview of relevant aspects of the works of Husserl, Heidegger and Merleau-Ponty.

Hornecker, Eva (2004). Tangible User Interfaces als kooperationsunterstützendes Medium. Mathematik & Informatik, der Universität Bremen http://elib.suub.uni-bremen.de/diss/docs/E-Diss907_E.pdf

If you read German, this is a very interesting PhD on Tangible Computing strongly inspired by phenomenology. Hornecker is the author of the encyclopedia entry on Tangible Computing.

Ehn, Pelle (1988): Work-Oriented Design of Computer Artifacts. Stockholm, Arbetslivscentrum

Ehn's PhD from 1988 sums up his experience as one of the founders of the participatory design tradition in Scandinavia. His analysis builds on the theoretical frameworks of Marx, Heidegger and Wittgenstein. Heidegger is used to show the tool-like nature of software, and to argue for building tools to support skilled work.

11.9.2 Relevant papers

Das, Anita, Faxvaag, Arild and Svanaes, Dag (2011): Interaction design for cancer patients: do we need to take into account the effects of illness and medication?. In: Proceedings of the 2011 annual conference on Human factors in computing systems 2011. pp. 21-24

Hornecker, Eva (2005): A Design Theme for Tangible Interaction: Embodied Facilitation. In: Gellersen, Hans-Werner, Schmidt, Kjeld, Beaudouin-Lafon, Michel and Mackay, Wendy E. (eds.) Proceedings of the Ninth European Conference on Computer Supported Cooperative Work 18-22 September, 2005, Paris, France. pp. 23-43

Hummels, Caroline, Overbeeke, Kees and Klooster, Sietske (2007): Move to get moved: a search for methods, tools and knowledge to design for expressive and rich movement-based interaction. In Personal and Ubiquitous Computing, 11 (8) pp. 677-690

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2007): The feel dimension of technology interaction: exploring tangibles through movement and touch. In: Proceedings of the 1st International Conference on Tangible and Embedded Interaction 2007. pp. 271-278

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2006): How it feels, not just how it looks: when bodies interact with technology. In: Kjeldskov, Jesper and Paay, Jane (eds.) Proceedings of OZCHI06, the CHISIG Annual Conference on Human-Computer Interaction 2006. pp. 329-332

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2007): Experiential Bodily Knowing as a Design (Sens)-ability in Interaction Design. In: Desform - 3rd European Conference on Design and Semantics of Form and Movement Dec. 12-13, 2007, Newcastle upon Tyne, UK.

Lim, Youn-kyung, Lee, Sang-Su and Lee, Kwang-young (2009): Interactivity attributes: a new way of thinking and describing interactivity. In: Proceedings of ACM CHI 2009 Conference on Human Factors in Computing Systems 2009. pp. 105-108

Lim, Youn-kyung, Stolterman, Erik A., Jung, Heekyoung and Donaldson, Justin (2007): Interaction gestalt and the design of aesthetic interactions. In: Koskinen, Ilpo and Keinonen, Turkka (eds.) DPPI 2007 - Proceedings of the 2007 International Conference on Designing Pleasurable Products and Interfaces August 22-25, 2007, Helsinki, Finland. pp. 239-254

Lowgren, Jonas (2006): Articulating the use qualities of digital designs. In: Fishwick, Paul A. (ed.). "Aesthetic Computing (Leonardo Books)". The MIT Press. pp. 383-403

Lowgren, Jonas (2009): Towards an articulation of interaction aesthetics. In New Review of Hypermedia and Multimedia, 15 (2) pp. 129-146

Moen, Jin (2005): Towards people based movement interaction and kinaesthetic interaction experiences. In: Bertelsen, Olav W., Bouvin, Niels Olof, Krogh, Peter Gall and Kyng, Morten (eds.) Proceedings of the 4th Decennial Conference on Critical Computing 2005 August 20-24, 2005, Aarhus, Denmark. pp. 121-124

Ozenc, Fatih Kursat, Kim, Miso, Zimmerman, John, Oney, Stephen and Myers, Brad A. (2010): How to support designers in getting hold of the immaterial material of software. In: Proceedings of ACM CHI 2010 Conference on Human Factors in Computing Systems 2010. pp. 2513-2522

Schiphorst, Thecla (2007): Really, really small: the palpability of the invisible. In: Proceedings of the 2007 Conference on Creativity and Cognition 2007, Washington DC, USA. pp. 7-16

Sundström, Petra and Höök, Kristina (2010): Hand in hand with the material: designing for suppleness. In: Proceedings of ACM CHI 2010 Conference on Human Factors in Computing Systems 2010. pp. 463-472

11.10 References

Arnheim, Rudolf (1974): Art and Visual Perception: A Psychology of the Creative Eye. University of California Press

Card, Stuart K., Moran, Thomas P. and Newell, Allen (1983): The Psychology of Human-Computer Interaction. Hillsdale, NJ, Lawrence Erlbaum Associates

Elkoutbi, Mohammed and Keller, Rudolf K. (2000): User Interface Prototyping Based on UML Scenarios and High-Level Petri Nets. In: ICATPN 2000 2000. pp. 166-186

Harel, David (1987): Statecharts: A Visual Formalism for Complex Systems. In Sci. Comput. Program., 8 (3) pp. 231-274

Hartson, H. Rex and Gray, Philip D. (1992): Temporal Aspects of Tasks in the User Action Notation. In Human-Computer Interaction, 7 (1) pp. 1-45

Heidegger, Martin (1996): Being and Time (translated by Joan Stambaugh and Dennis J. Schmidt). State University of New York Press

Hornecker, Eva (2005): A Design Theme for Tangible Interaction: Embodied Facilitation. In: Gellersen, Hans-Werner, Schmidt, Kjeld, Beaudouin-Lafon, Michel and Mackay, Wendy E. (eds.) Proceedings of the Ninth European Conference on Computer Supported Cooperative Work 18-22 September, 2005, Paris, France. pp. 23-43

Hummels, Caroline, Overbeeke, Kees and Klooster, Sietske (2007): Move to get moved: a search for methods, tools and knowledge to design for expressive and rich movement-based interaction. In Personal and Ubiquitous Computing, 11 (8) pp. 677-690

ISO (2009). ISO 9241-210:2009. Ergonomics of human system interaction - Part 210: Human-centred design for interactive systems. International Organization for Standardization

Itten, Johannes (1974): The Art of Color: The Subjective Experience and Objective Rationale of Color. John Wiley and Sons

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2007): The feel dimension of technology interaction: exploring tangibles through movement and touch. In: Proceedings of the 1st International Conference on Tangible and Embedded Interaction 2007. pp. 271-278

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2007): Experiential Bodily Knowing as a Design (Sens)-ability in Interaction Design. In: Desform - 3rd European Conference on Design and Semantics of Form and Movement Dec. 12-13, 2007, Newcastle upon Tyne, UK.

Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2006): How it feels, not just how it looks: when bodies interact with technology. In: Kjeldskov, Jesper and Paay, Jane (eds.) Proceedings of OZCHI06, the CHISIG Annual Conference on Human-Computer Interaction 2006. pp. 329-332

Lim, Youn-kyung, Stolterman, Erik A., Jung, Heekyoung and Donaldson, Justin (2007): Interaction gestalt and the design of aesthetic interactions. In: Koskinen, Ilpo and Keinonen, Turkka (eds.) DPPI 2007 - Proceedings of the 2007 International Conference on Designing Pleasurable Products and Interfaces August 22-25, 2007, Helsinki, Finland. pp. 239-254

Lim, Youn-kyung, Lee, Sang-Su and Lee, Kwang-young (2009): Interactivity attributes: a new way of thinking and describing interactivity. In: Proceedings of ACM CHI 2009 Conference on Human Factors in Computing Systems 2009. pp. 105-108

Loke, Lian, Larssen, Astrid Twenebowa, Robertson, Toni and Edwards, Jenny (2007): Understanding movement for interaction design: frameworks and approaches. In Personal and Ubiquitous Computing, 11 (8) pp. 691-701

Lowgren, Jonas (2006): Articulating the use qualities of digital designs. In: Fishwick, Paul A. (ed.). "Aesthetic Computing (Leonardo Books)". The MIT Press. pp. 383-403

Lowgren, Jonas (2009): Towards an articulation of interaction aesthetics. In New Review of Hypermedia and Multimedia, 15 (2) pp. 129-146

Lowgren, Jonas (2008). Interaction Design - brief intro. Retrieved 4 November 2013 from [URL to be defined - in press]

Merleau-Ponty, Maurice (1962): Phenomenology of Perception: An Introduction. Routledge

Moen, Jin (2005): Towards people based movement interaction and kinaesthetic interaction experiences. In: Bertelsen, Olav W., Bouvin, Niels Olof, Krogh, Peter Gall and Kyng, Morten (eds.) Proceedings of the 4th Decennial Conference on Critical Computing 2005 August 20-24, 2005, Aarhus, Denmark. pp. 121-124

Norman, Donald A. (1988): The Psychology of Everyday Things. New York, Basic Books

Ozenc, Fatih Kursat, Kim, Miso, Zimmerman, John, Oney, Stephen and Myers, Brad A. (2010): How to support designers in getting hold of the immaterial material of software. In: Proceedings of ACM CHI 2010 Conference on Human Factors in Computing Systems 2010. pp. 2513-2522

Schiphorst, Thecla (2007): Really, really small: the palpability of the invisible. In: Proceedings of the 2007 Conference on Creativity and Cognition 2007, Washington DC, USA. pp. 7-16

Stambaugh, Joan and Schmidt, Dennis J. (eds.) (1996): Being and Time (Suny Series in Contemporary Continental Philosophy). State Univ of New York Pr

Sundström, Petra and Höök, Kristina (2010): Hand in hand with the material: designing for suppleness. In: Proceedings of ACM CHI 2010 Conference on Human Factors in Computing Systems 2010. pp. 463-472

Svanaes, Dag (1993): Interaction is orthogonal to graphical form. In: Ashlund, Stacey, Mullet, Kevin, Henderson, Austin, Hollnagel, Erik and White, Ted N. (eds.) INTERACT 93 - IFIP TC13 International Conference on Human-Computer Interaction - jointly organised with ACM Conference on Human Aspects in Computing Systems CHI93 24-29 April, 1993, Amsterdam, The Netherlands. pp. 79-80

Svanaes, Dag (2000): Understanding Interactivity: Steps to a Phenomenology of Human-Computer Interaction. Trondheim, Norway, Norges Teknisk-Naturvitenskapelige Universitet (NTNU)

Thimbleby, Harold (2004): User interface design with matrix algebra. In ACM Transactions on Computer-Human Interaction, 11 (2) pp. 181-236

Vogt, Stine and Magnussen, Svein (2007): Expertise in pictorial perception: eye-movement patterns and visual memory in artists and laymen. In Perception,

Winograd, Terry and Flores, Fernando (1986): Understanding Computers and Cognition. Norwood, NJ, Intellect