Smart products that adapt to aspects of the users’ activity, context or personality have become commonplace. With more and more products that act intelligently emerging in the marketplace, users often end up expecting to interact with them much as they would with other people. In the first decades of the 21st century, technical limitations keep us, as designers, from being able to create smart products that fully live up to those expectations. Consequently, managing the users’ expectations of the interaction as you move through the design process for a smart product is absolutely essential. Here, you’ll get a firm grounding in the basic psychology of how people interact with smart products, along with guidelines for designing smart products that do not break with the users’ expectations.

What are smart products?

In everyday life, we are surrounded by smart products and user interfaces that can perform an awe-inspiring range of actions. In this context, we define smart products as products that gather information about their users and their use context, and process the information so as to adapt their behaviour to the user or to a specific context. Examples could be sun blinds that automatically lower when they detect that the sun is shining, or a travel app that suggests new vacation locations based on where you have previously been, maybe even reading them aloud in a natural voice. In short, a smart product is any product that gathers information about the user or various context factors and uses it to automatically adapt its behaviour. In some cases, the user’s interaction with smart products seems more like communication than tool use. For example, when you use voice commands that resemble natural speech to interact with your smart home and your home answers your commands in an equally natural way, you could be forgiven for thinking you’ve had a brief conversation with your abode.

Author/Copyright holder: Guillermo Fernandes. Copyright terms and licence: Public Domain.

The Amazon Echo Dot is a voice-controlled speaker with the intelligent personal assistant, Alexa, which (or ‘who’, to some!) learns your preferences over time. It can help you with things such as choosing music, turning off the lights or ordering Chinese food.

When products do things automatically without being asked and appear to communicate, they can seem more intelligent to the user than they are. Humans are constantly looking for clues of intelligence in the world around us, and being able to self-initiate actions is the most fundamental clue that something or someone possesses a level of intelligence and intentions. When we meet something that seems to be intelligent, such as another person or a dog, we know how much intelligence to expect because we can base it on past experience. When we meet a smart product that behaves intelligently and does things by itself, we don’t have much experience to base our expectations on; so, anticipating exactly how much to expect is a hard task. This creates challenges for designing interaction with smart products, and those challenges come from two sides. Let’s look at them now:

The users’ expectations of smart products — Research in psychology, from scholars such as Byron Reeves and Clifford Nass, tells us that users tend to treat smart products as though they are intelligent and intentional. Therefore, first we will look at what the users’ expectations are based on, and how to manage those expectations.

The designer’s expectations of smart products — As designers, we sometimes underestimate the complexity of understanding the user and the user’s context. In the latter part of our journey here, we will provide you with an understanding of what you can expect to be able to predict about the users’ context.

Author/Copyright holder: Nest. Copyright terms and licence: CC BY-NC-ND 2.0

During its first week of use, the Nest thermostat learns your habits during the day. Then, it will program itself to set the temperature so that it suits your habits. Self-programming of this kind is one big component of what many users have come to expect: a fully automatic home.

What do users expect from smart products?

“People’s responses show that media are more than just tools. Media are treated politely, they can invade our body space, they can have personalities that match our own, they can be a teammate and they can elicit gender stereotypes.”

—Byron Reeves and Clifford Nass, authors of The Media Equation

We can all think of examples where we have treated technology more like an intelligent social being than a tool. We sometimes treat our robotic vacuum cleaners as pets rather than cleaning tools. We get angry at our computers because we feel misunderstood or because they do not do what we want them to. Anyone who has shouted at a laptop for daring to show the ‘blue screen of death’, done a hard reset, and then whispered ‘Phew, thank you!’ on seeing it boot up as normal will appreciate this concept. We know that technological products are just objects, but in certain situations we treat them as if they had intentions, feelings and sophisticated intelligence. In a series of experiments, Stanford professors Byron Reeves and Clifford Nass found that even though people consciously think of computers as objects rather than persons, their immediate behaviour towards those computers sometimes resembles their behaviour towards another person. This suggests that, even when we consciously know that something is a tool without any intentions, we might act towards it as though it were a living, intentional being. It’s fascinating, not least because it reveals a general human tendency rather than a quirk of sentimental people; so, what is it about technology that makes us do that?

It might seem strange that we sometimes treat technology as a social and intelligent being. Even so, if we look at the basic psychology behind how we perceive the world, it makes a lot of sense. Human psychology develops thanks to our constant interaction with the world around us. From an early age, we learn how different objects relate to each other and how inanimate objects are different from social or intentional beings. As an example, a famous study on social cognition by Gergely Csibra and colleagues shows that before we are one year old we know that inanimate objects do not move unless pushed by an outside force, whereas intentional beings are able to initiate movement on their own. In other words, we can tell the difference between intentional beings and objects. From an evolutionary perspective, our constantly looking for clues of intentionality is a very practical endeavour. It means that we can easily spot when something has intentions and what those intentions are. It’s what keeps our eyeballs peeled and eardrums primed for the first sign of a threat. If, while walking along a seafront pavement, you drop your wallet on a pebbly beach, for instance, you’ll have no qualms about jumping down to retrieve it. If we change that seafront promenade to a bridge over a crocodile reserve, though, you’ll have serious second thoughts. Putting aside zombie humour, stones, boulders and other inert, ‘dead’ objects can’t harm us. Of course, we can make exceptionally poor choices with them (such as pulling on the ripcord of a chainsaw we have, for some bizarre reason, clenched between our legs, back to front), but they won’t want to do us harm, either by instinct or volition, because they can’t ‘want’ anything.

Author/Copyright holder: Margaret A. McIntyre. Copyright terms and licence: Public Domain.

It used to be easy to differentiate between objects and living beings. With technological development, the line has become more blurred.

For thousands of years, differentiating between inanimate objects and social intelligent beings was relatively straightforward. The 21st century—which has so far seen the culmination of scientific advances occurring at breakneck speed—has thrown up a brand-new problem for us as a species: as products become more intelligent and behave as though they were intentional, they cannot always live up to the users’ expectations. If my robotic vacuum cleaner can move on its own and figure out when to vacuum, why can’t it see the dust in the corner of the kitchen? If the personal voice-controlled assistant on my iPhone can answer questions about the weather and which restaurant I should go to tonight, why can’t it have personal opinions or feelings? Rationally, I know that it cannot because it is not conscious, but it is difficult to know what the upper limit of its ability is.

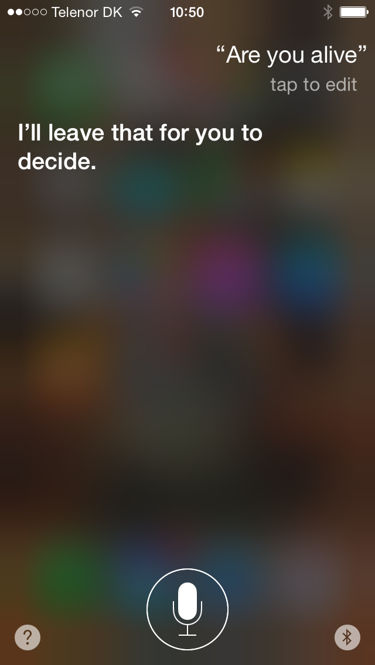

Author/Copyright holder: Apple Incorporated. Copyright terms and licence: Fair Use.

In speech interaction (here, the Siri personal assistant on my iPhone), the technology communicates in a way that is similar to human communication, but the limitations of the interaction can be unclear.

Not understanding what a product is or is not doing, or what it is capable of, creates a negative user experience. In a review of research on how people perceive and interact with intelligent environments, researcher Eija Kaasinen and her co-authors stated that users lose trust in, and satisfaction with, intelligent products if they do not understand them. You could have the most sophisticated and lifelike app or device, but the customer needs to understand it in order for it to become successful.

How not to design smart products

So, we know that when something appears to have a mind of its own, users look for further clues of intentionality. This means that if your product is not able to live up to the users’ expectations and behave intelligently, you should not make it look as though it is intelligent. A classic example of a design where this was handled wrongly is Microsoft’s infamous Office assistant Clippy. Debuting in 1997, Clippy was an animated paper clip that would pop up and offer help with the Office-related task in which the user was engaged. The user had no control over when Clippy popped up, its suggestions were not that helpful, and it quickly became reviled among a lot of Office users. One of the problems with Clippy was that, while it superficially resembled an intelligent being (it had a face and it could ‘talk’ in text balloons), it had limited or no understanding of the user’s activity. On the surface, Clippy appeared sophisticated, and users expected it to have a level of social intelligence. Nevertheless, because Clippy had no real understanding of the user or the user’s activity, it was not able to act according to social rules. Consequently, the majority of users perceived Clippy as rude and annoying. Clippy could look sympathetic (or as if it were pouting), but it could not do the job it appeared to promise. It was discontinued in 2003.

Author/Copyright holder: simonbarsinister. Copyright terms and licence: CC BY 2.0

Microsoft’s Office assistant Clippy was so widely reviled in the late 1990s that it lives on today in jokes and art pieces.

Clippy obviously handled the users’ expectations badly; so, what can you do if you want to handle them well? Looking at more recent successful smart products such as the Amazon Echo Dot and the Nest thermostat, you can see that companies have come to take a different approach: they design products that are intelligent but in no way look like a social being. Both products have pleasant and simple designs, and both look like what they are: a piece of technology. That way, they avoid eliciting the wrong expectations from users. That said, you should still consider the social rules that apply to your smart product’s use case, even if your product looks nothing like a social being. Just think of the Apple iPhone’s autocorrect function, which automatically corrects words in users’ text messages, sometimes into very embarrassing or offensive words.

Based on how users interact with intelligent products, we can sum up our learning into three guidelines you should consider whenever you design smart products:

Guidelines for managing the users’ expectations

Never promise more than you can deliver – only create something that looks and acts social if it is actually able to.

If at all possible, clearly inform the user of what a product is able to do and what it is currently doing.

If a product is intelligent, assume that users will also, to some extent, expect it to have social skills. Think about what obvious social rules exist in your domain, and don’t break them. As an example, never interrupt the user if you don’t know what they are doing.

What is technology able to predict about the users’ context?

One of the basic problems with Clippy was that even though it looked like a smart product, it had limited intelligence or ability to sense what the user was doing. When you do have a smart product, which can detect more of its surroundings and come up with great suggestions for actions, how much can you make it do automatically and how much do you need the user to approve or initiate actions? Understanding that requires insight into human interaction and context.

“My thesis [is] that to design a tool, we must make our best efforts to understand the larger social and physical context within which it is intended to function.”

—Bill Buxton, principal researcher at Microsoft and pioneer in the field of human-computer interaction

Clippy broke an important social rule: it interrupted the user and had zero understanding of when it was appropriate. In social terms, it behaved very rudely! You can avoid that by designing your smart product so that it never does anything to call attention to itself without being asked by the user. Alternatively, you can try to learn about the user and the context so as to understand when it might be appropriate for a product to call attention to itself or do something without being asked. This requires a thorough understanding of the users’ context and habits, which is notoriously hard to achieve because both are constantly changing.

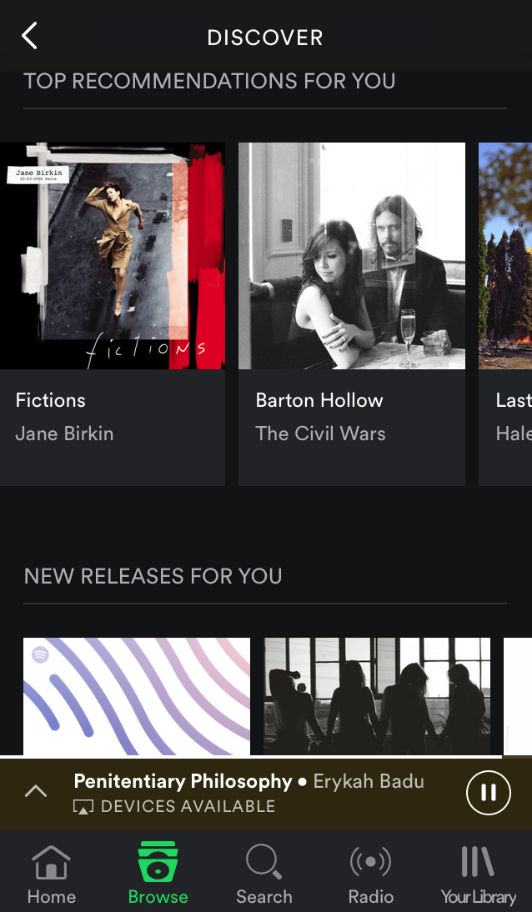

Author/Copyright holder: Spotify Technology S.A. Copyright terms and licence: Fair Use.

The music service Spotify learns from your listening habits to create suggestions tailored to your tastes. The suggestions are easily available in a separate section, but they do not disrupt or call attention to themselves.

In the classic book Plans and Situated Actions: The Problem of Human-machine Communication, Lucy Suchman described in detail what technology would need to know about context and communication so as to create a more social and intelligent interaction. She argues that creating a common ground or understanding of context when designing human-computer interaction is difficult. Human interaction is always situated in a context, and it can only be understood by somebody who also understands the context. When people interact, we effortlessly perceive attention, pick up on new tracks in the communication, use knowledge of our shared history of interaction, and coordinate our actions. We can do that because we have a shared understanding of the context that is difficult for technology to achieve, even when it is intelligent and able to detect information about the context and user.

An example might help underline the complexity of simple everyday situations: My husband and I have just arrived home to a cold house. I look at him and ask, “Can you turn up the temperature?” From this simple interaction, my husband understands: 1) that I am speaking to him, because I am looking at him; 2) how much to turn up the temperature, because he knows the usual temperature of the house; 3) which thermostat I am talking about, because he knows what room we are in and where we usually turn up the temperature. In most cases, I might not even have to ask, because he would also be cold and would want the temperature higher himself. If I had an Amazon Alexa and a Nest thermostat, I could ask Alexa to turn up the temperature, but I would need to be much more specific, e.g., “Alexa, raise the Kitchen thermostat by 5 degrees.” Alexa needs to be told that I am speaking to it, which thermostat I am referring to and how much to raise the temperature. It seems easy, but it is unclear to users how specific they need to be, so you must help them learn to phrase their commands precisely enough for the device to take the desired action.
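To make the three pieces of missing context concrete, here is a minimal Python sketch of a command parser that checks whether an utterance says who is addressed, which thermostat is meant and by how much to change the temperature. It is an illustration only, not how Alexa or Nest work: the room list, the `ThermostatCommand` type and the `parse` and `missing_details` functions are all hypothetical names invented for this example.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical room names; a real product would read these from its own setup.
KNOWN_ROOMS = ("kitchen", "living room", "bedroom")


@dataclass
class ThermostatCommand:
    addressed: bool        # did the user say the wake word?
    room: Optional[str]    # which thermostat is meant, if stated
    delta: Optional[int]   # how many degrees to raise, if stated


def parse(utterance: str, wake_word: str = "alexa") -> ThermostatCommand:
    """Extract only what the utterance states explicitly; guess nothing."""
    text = utterance.lower()
    addressed = text.startswith(wake_word)
    room = next((r for r in KNOWN_ROOMS if r in text), None)
    match = re.search(r"(\d+)\s*degrees?", text)
    delta = int(match.group(1)) if match else None
    return ThermostatCommand(addressed, room, delta)


def missing_details(cmd: ThermostatCommand) -> List[str]:
    """List the context a human listener would infer but the product cannot."""
    missing = []
    if not cmd.addressed:
        missing.append("who is being addressed")
    if cmd.room is None:
        missing.append("which thermostat")
    if cmd.delta is None:
        missing.append("how much to change the temperature")
    return missing


print(missing_details(parse("Can you turn up the temperature?")))
print(missing_details(parse("Alexa, raise the Kitchen thermostat by 5 degrees")))
```

The first utterance, which works perfectly well between two people, leaves all three details open; the second leaves none. A product built along these lines could use the list of missing details to ask a follow-up question rather than fail silently.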

Alternatively, let’s say that I had installed sensors that knew which room I was in and what temperature I normally prefer. Then the Nest could just automatically raise the temperature when it detected my presence. That would work on most days, but because it does not understand anything else about the context, it would be annoying on days when I am only home for 10 minutes or when I need to leave the house door open for more than a few minutes. In that case, it might be a good idea to think about how the product communicates what it is doing. For example, Alexa could ask, “I’m going to turn up the heat now; is that OK?”, which also gives me the option of turning off the automatic action. If I leave the door open, it could ask: “Hey, I turned the heat up because I thought you might be in the room for a while, but I see the door has been left open. Should I turn it off until you close the door?” Communicating with the user about what the product is doing helps, and it means that you do not have to anticipate every possible situation that occurs in a home.
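The ask-before-acting idea can be sketched as a small decision function. This is a hedged illustration under simple assumptions, not a description of any real thermostat: `HomeContext`, its three fields and `heating_prompt` are hypothetical names, and the prompt wording is adapted from the examples above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HomeContext:
    presence_detected: bool      # assumed to come from a room sensor
    door_open: bool              # assumed to come from a door sensor
    auto_heating_enabled: bool   # the user's own off switch for the feature


def heating_prompt(ctx: HomeContext) -> Optional[str]:
    """Return a question to put to the user, or None to stay silent.

    The product never changes the temperature on its own here; it only
    proposes an action and leaves the decision to the user.
    """
    if not ctx.auto_heating_enabled or not ctx.presence_detected:
        return None
    if ctx.door_open:
        return ("I see the door has been left open. "
                "Should I wait to turn up the heat until you close it?")
    return "I'm going to turn up the heat now; is that OK?"


print(heating_prompt(HomeContext(True, False, True)))
print(heating_prompt(HomeContext(True, True, True)))
print(heating_prompt(HomeContext(True, False, False)))
```

The design choice worth noticing is that the user's off switch is checked first: once the automatic behaviour is turned off, the product neither acts nor asks.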

To sum up, people are complex, and even smart products cannot know everything that is going on; so, you should consider the following:

Guidelines for managing limitations

Don’t assume that your product can fully understand the user’s context.

Make options and suggestions easily available to users, but never interrupt to provide them. Spotify provides suggestions for new music, but it does not automatically play.

If you are in doubt, go for limited scenarios where you have an idea of what is going on.

Always provide an easy way for the user to turn off an automatic action or adaptation.
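Two of these guidelines (make suggestions available without interrupting, and always offer an off switch) can be illustrated with a short Python sketch. The `SuggestionShelf` class and its method names are hypothetical and are not taken from Spotify or any other product.

```python
from typing import List


class SuggestionShelf:
    """Holds suggestions quietly until the user chooses to look at them."""

    def __init__(self) -> None:
        self.enabled = True
        self._items: List[str] = []

    def offer(self, suggestion: str) -> None:
        """Store a suggestion silently; nothing is shown or played."""
        if self.enabled:
            self._items.append(suggestion)

    def browse(self) -> List[str]:
        """Meant to be called only when the user opens the suggestions section."""
        return list(self._items)

    def turn_off(self) -> None:
        """The easy off switch: stop collecting and discard what was stored."""
        self.enabled = False
        self._items.clear()


shelf = SuggestionShelf()
shelf.offer("A playlist based on your recent listening")
print(shelf.browse())
shelf.turn_off()
shelf.offer("Another playlist")
print(shelf.browse())
```

Nothing in the class pushes a notification or starts playback; the only way a suggestion reaches the user is through `browse`, which the user initiates.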

The Take Away

Users often ascribe intelligence and social skills to smart products that adapt to their behaviour or the context. This means that, whenever you’re designing smart products, you must be clear in communicating what your product can and cannot do. Even if your product is sophisticated, you should avoid making it look intelligent or social in ways that it is not. People understand a lot based on the context and on simple cues from the people around them. For smart products, this is a lot more difficult. Be careful when making products that automatically adapt to the user or the context, and always provide an easy way to turn off the automatic action or adaptation. Knowing your creation’s limitations and staying one step ahead of the natural level of unpredictability in your users’ daily lives will bring your design in line with modern expectations and put your product on the road to success.

References & Where to Learn More

Lucy A. Suchman, Plans and Situated Actions: The problem of human-machine communication, 1985

Byron Reeves and Clifford Nass, The Media Equation: How People Treat Computers, Television, and New Media Like Real People and Places, 1996

Eija Kaasinen, Tiina Kymäläinen, Marketta Niemelä, Thomas Olsson, Minni Kanerva and Veikko Ikonen, ‘A User-Centric View of Intelligent Environments: User Expectations, User Experience and User Role in Building Intelligent Environments’, Computers, 2(1), 1-33. 2013

If you want to learn more about social cognition, you can look at the study by Csibra and colleagues:

Gergely Csibra et al. Goal attribution without agency cues: the perception of ‘pure reason’ in infancy. Cognition, 72, 237-267. 1999

For more examples of smart products and what they can do, take a look at Samsung’s Smart Things blog.

Hero Image: Author/Copyright holder: jeferrb. Copyright terms and licence: CC0 Public Domain