“Just because you can, doesn't mean you should.”

Social ideas like freedom seem far removed from computer code but computing today is social. That technology designers are not ready, have no precedent or do not understand social needs is irrelevant. Like a baby being born, online society is pushing forward, whether we are ready or not. And like new parents, socio-technical designers are causing it, again ready or not. As the World Wide Web's creator observes:

“... technologists cannot simply leave the social and ethical questions to other people, because the technology directly affects these matters”

-- Berners-Lee, 2000: p124

Socio-technical design is the application of social and ethical requirements to HCI, software and hardware systems.

3.1 Designing Work Management

The term socio-technical was first introduced by the UK Tavistock Institute (footnote 1) in the late 1950s to oppose Taylorism — reducing jobs to efficient elements on assembly lines in mills and factories (Porra & Hirschheim, 2007). Community level performance needs applied to the personal level gave work-place management ideas including:

Congruence. Let the process match its objective — democratic results need democratic means.

Minimize control. Give employees clear goals but let them decide how to achieve them.

Local control. Let those with the problem change the system, not absent managers.

Flexibility. Without "extra" skills to handle change, specialization will precede extinction.

Boundary innovation. Innovate at the boundaries, where work goes between groups.

Transparency. Give information first to those it affects, e.g. give work rates to workers.

Evolution. Work system development is an iterative process that never stops.

Lead by example. As the Chinese say: "If a General takes an egg, his soldiers will loot a village." (footnote 2)

Support human needs. Work that lets people learn, choose, feel and belong gives loyal staff.

In computing it became a call for the ethical use of technology.

This book extends that foundation, to apply social requirements to technology design as well as work design, because technologies now mediate social interactions.

During the industrial revolution, when the poet William Blake wrote of "dark satanic mills", technology was seen by many as the heartless enemy of the human spirit. Yet people ran the factories that were enslaving people. The industrial revolution was the rich using the poor as they had always done, but machines let them do it better. Technology was the effect magnifier but not in itself good or evil. Today, we have largely rejected slavery but we embrace technology like the car or cell phone. Today technology is on the other side of the class war, as Twitter, Facebook and YouTube support the Arab Spring. Yet the core socio-technical principle is still:

“Just because you can, doesn't mean you should”

Socio-technical design puts social needs above technical wants. The argument is that human evolution involves social and technical progress in that order, e.g. today's vehicles could not work on the road without today's citizenry. Technology structures like cars also need social structures like road rules.

Social inventions like credit were as important in our evolution as technical inventions like cars. Global trade needs not only ships and aircraft to move goods around the world, but also people willing to do that. Today, online traders send millions of dollars to people they have not seen for goods they have not touched to arrive at times unspecified. A trader in the middle ages, or indeed in the early twentieth century, would have seen that as pure folly. What has changed is not just the technology but also the society. Today's global markets work by social and technical support:

“To participate in a market economy, to be willing to ship goods to distant destinations and to invest in projects that will come to fruition or pay dividends only in the future, requires confidence, the confidence that ownership is secure and payment dependable. ... knowing that if the other reneges, the state will step in...”

-- Mandelbaum, 2002: p272.

3.2 Social Requirements

One cannot design socio-technology in a social vacuum. Fortunately, while virtual society is new, people have been socializing for thousands of years. We know that fair communities prosper but corrupt ones do not (Eigen, 2003). Social inventions like laws, fairness, freedom, credit and contracts were bought with blood and tears (Mandelbaum, 2002), so why start anew online? Why reinvent the social wheel in cyber-space (Ridley, 2010)? Why re-learn electronically what we already know physically?

As the new bottle of information technology fills with the old wine of society, the stakes are raised. The information revolution increases our power to gather, store and distribute information, for good or ill (Johnson, 2001). Are we the hunter-gatherers of the information age (Meyrowitz, 1985) or an online civilization? A stone-age society with space-age technology is a bad mix, but what are the requirements for technology to support civilization? Computing cannot implement what it cannot specify.

We live in social environments every day, but struggle to specify them (footnote 3), e.g. a shop-keeper swipes a credit card with a reading device designed not to store the credit card number or PIN. It is designed to the social requirement that shopkeepers do not steal customer data, even if they can. Without this, credit would collapse, and social disasters like the depression can be worse than natural disasters. Credit card readers support legitimate social interaction by design.

Likewise, if online computer systems take and sell customer data such as home addresses and phone numbers for advantage, users will lose trust and either refuse to register at all, or register with fake data, like "123 MyStreet, MyTown, NJ" (Foreman & Whitworth, 2005). The way to satisfy online privacy is not to store data you do not need. To say it will never be revealed is not good enough, as companies can be forced by governments or bribed by cash to reveal data. One cannot be forced or bribed to give data one does not have. The best way to guarantee online trust is not to store unneeded information in the first place (footnote 4).
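The "do not store what you do not need" requirement can be sketched in code. The example below is a minimal illustration only, assuming a hypothetical service that needs just a username and an email address to operate; the names `REQUIRED_FIELDS`, `Account` and `register` are invented for this sketch and do not come from any real system.

```python
from dataclasses import dataclass

# Assumption: the only fields this hypothetical service needs to operate.
REQUIRED_FIELDS = {"username", "email"}


@dataclass(frozen=True)
class Account:
    """The stored record. It has no slot for an address or phone number."""
    username: str
    email: str


def register(form: dict) -> Account:
    """Build an account from a submitted form, keeping only required fields.

    Anything else the form carries (home address, phone number) is dropped
    before storage, so it cannot later be sold, leaked or demanded.
    """
    missing = REQUIRED_FIELDS - form.keys()
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")
    return Account(username=form["username"], email=form["email"])


# The form offers more than the service needs; the extras are never stored.
account = register({
    "username": "alice",
    "email": "alice@example.org",
    "home_address": "123 MyStreet, MyTown, NJ",
    "phone": "555-0100",
})
print(account)
```

The design choice is that the stored record type has no place to put unneeded data, so the privacy guarantee rests on the structure of the system rather than on a promise.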

3.3 The Socio-technical Gap

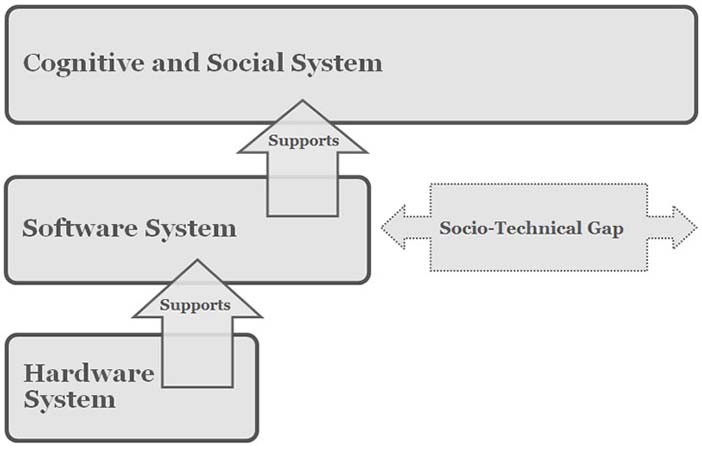

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 3.1: The socio-technical gap


Simple technical design gives a socio-technical gap (Figure 3.1), between what the technology allows and what people want (Ackerman, 2000). For example, email technology ignored the social requirement of privacy, letting anyone email anyone without permission, and so gave spam. The technical level response to this social level problem was inbox filters. Filters help at the local level, holding user inbox spam constant, but the percentage of spam transmitted by the Internet commons has never stopped growing. It grew from 20% to 40% of messages in 2002-2003 (Weiss, 2003), to 60-70% in 2004 (Boutin, 2004), to 86.2% to 86.7% of the 342 billion emails sent in 2006 (MAAWG, 2006; MessageLabs, 2006), to 87.7% in 2009 and 89.1% of all emails sent in 2010 (MessageLabs, 2010). A 2004 prediction that within a decade over 95% of all emails transmitted by the Internet will be spam is coming true (Whitworth & Whitworth, 2004).

Due to spam, email users are moving to other media, but if we make the same socio-technical design error there, the problem will just follow, e.g. SPIM is instant messaging spam.

Filters see spam as a user problem but it is really a community problem — a social dilemma. Transmitted spam uses Internet storage, processing and bandwidth, whether users hiding behind their filter walls see it or not. Only socio-technology can resolve social problems like spam because in the "spam wars" technology helps both sides, e.g. image spam can bypass text filters, AI can solve captchas (footnote 5), botnets can harvest web site emails, and zombie sources can send emails. Spam is not going away any time soon (Whitworth and Liu, 2009a).

Right now, aliens observing our planet might think email is built for machines, as most of the messages transmitted go from one (spam) computer to another (filter) computer, untouched by human eye. This is not just due to bad luck. A communication technology is not a Pandora's box whose contents are unknown until opened because we built it. Spam happens when we build technologies instead of socio-technologies.

3.4 Legitimacy Analysis

A social system is defined here as an agreed form of social interaction that persists (Whitworth and de Moor, 2003). Whether people see themselves as a community is, therefore, essentially their choice. In politics, a legitimate government is seen as rightful by its citizens, i.e. accepted. In contrast, illegitimate governments need to stay in power by force of arms and propaganda. By extension, legitimate interaction is accepted by the parties involved, who freely repeat it, e.g. fair trade. Legitimacy has been specified as fairness and public good (Whitworth and de Moor, 2003). Physical and online citizens prefer legitimate communities because they perform better.

In physical society, legitimacy is maintained by laws, police and prisons that punish criminals. Legitimacy is the human level concept by which judges create new laws and juries decide on never before seen cases. A higher affects lower principle applies: communities engender human ideas like fairness, which generate informational laws, that are used to govern physical interactions. Communities of people create rules to direct acts that benefit the community, i.e. higher level goals drive lower level operations to improve system performance. Doing this online, applying social principles to technical systems, is socio-technical design.

Conversely, over time lower levels get a "life of their own" and the tail starts to wag the dog, e.g. copyright laws originally designed to encourage innovators have become a tool to perpetuate the profit of corporations like Disney that purchased those creations (Lessig, 1999) (footnote 6). Unless continuously "re-invented" at the human level, information level laws inevitably decay and cease to work.

Lower levels are more obvious, so it is easy to forget that today's online society is a social and a technical evolution. The Internet is new technology but it is also a move to new social goals like service and freedom rather than control and profit. So for the Internet to become a hawker market, of web sites yelling to sell, would be a devolution. The old ways of business, politics and academia should follow the new Internet way, not the reverse.

There are no shortcuts in this social evolution, as one cannot just "stretch" physical laws into cyberspace (Samuelson, 2003), because they often:

Do not transfer (Burk, 2001), e.g. what is online "trespass"?

Do not apply, e.g. what law applies to online "cookies" (Samuelson, 2003)?

Change too slowly, e.g. laws change over years but code changes in months.

Depend on code (Mitchell, 1995), e.g. anonymity means actors cannot be identified.

Have no jurisdiction. U.S. law applies to U.S. soil, but cyber-space is not "in" America.

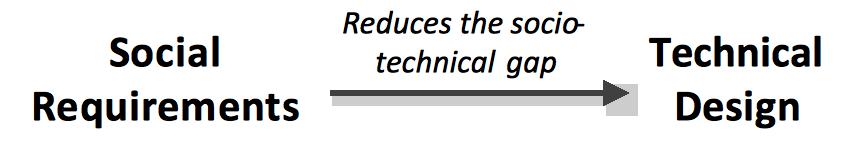

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


Figure 3.2: Legitimacy analysis

The software that mediates online interaction has by definition full control of what happens, e.g. any application could upload any hard drive file on your computer to any server. In itself, code could create a perfect online police state, where everything is monitored, all "wrong" acts punished and all undesirables excluded, i.e. a perfect tyranny of code.

Yet code is also an opportunity to be better than the law, based on legitimacy analysis (Figure 3.2). Physical justice, by its nature, operates after the fact, so one must commit a crime to be punished. Currently, with long court cases and appeals, justice can take years, and justice delayed is justice denied. In contrast, code represents the online social environment directly, as it acts right away. It can also be designed to enable social acts as well as to deny anti-social ones. If online code is law (Lessig, 1999), to get legitimacy online we must build it into the system design, knowing that legitimate socio-technical systems perform better (Whitworth and de Moor, 2003). That technology can positively support social requirements like fairness is the radical core of socio-technical design.

So is every STS designer an application law-giver? Are we like Moses coming down from the mountain with tablets of code instead of stone? Not quite, as STS directives are to software not people. Telling people to act rightly is the job of ethics not software, however "smart". The job of right code, like right laws, is to attach outcomes to social acts, not to take over people's life choices. Code as the social environment cannot be a social actor. Socio-technical design is socializing technology to offer fair choices, not technologizing society to be a machine with no choice at all. It is the higher directing the lower, not the reverse.

To achieve online what laws do offline, STS developers must re-invoke legitimacy for each application. It seems hard, but every citizen on jury service already does this, i.e. interprets the "spirit of the law" for specific cases. STS design is the same but for application cases. That the result is not perfect doesn't matter. Cultures differ but all have some laws and ethics, because some higher level influence is always better than none.

To try to build a community as an engineer builds a house is a levels error, i.e. choosing the wrong level for the job. Social engineering by physical coercion, oppressive laws, or propaganda and indoctrination is people using others as objects, i.e. anti-social. A community is by definition many people, so an elite few enslaving the rest is not a community. Social engineering treats people like bricks in a wall which denies social requirements like freedom and accountability. Communities cannot be "built" from citizen actors because to treat them as objects denies their humanity. Communities can emerge as people interact, but they cannot be built.

3.5 The Web of Social Performance

Communities interact with others, using spies to act as "eyes", diplomats to communicate, engineers to effect, soldiers to defend, intellectuals to adapt and traders to extend, but a community can also interact with itself, to communicate or synergize, as follows:

Productivity. As functionality is what a system can produce, so communities produce bridges, art and science by citizen competence, based on education of symbolic knowledge, ethics and tacit skills. Help and FAQ systems illustrate this for an online community.

Synergy. As usability is less effort per result, so communities improve efficiency by synergy based on trust (footnote 7). Public goods like roads and hospitals involve functional specialists giving to all. If all citizen specialists offer their services to others, all get more for much less effort. Wikipedia illustrates online synergy, as many specialists give a little knowledge so that all get a lot of knowledge.

Both productivity and synergy require citizen participation, based on skills and trust respectively, yet are also in tension as one invokes competition and the other cooperation (footnote 8). The former improves how citizen "parts" perform and the latter how they interact. Service by citizens reconciles productivity and synergy, as it invokes both.

Freedom. As flexibility is a system's ability to change to fit a changing environment, so communities become flexible when citizens have freedom, i.e. the right to act autonomously. This is the right not to be a slave (footnote 9). Freedom allows local resource control, which increases social performance just as decentralized protocols like Ethernet improve network performance.

Order. Reliability is a system's ability to survive internal part failure or error. A community achieves reliability through order, when citizens, by rank, role or job, know and do their duty. Some cultures set up warrior or merchant castes to achieve this. Online order is also by roles, e.g. Sysop or Editor.

Freedom and order are in tension, as freedom has no class but order does. The social invention of democracy merges freedom and order by letting citizens choose their governance, not just of the President or Prime Minister, but for all positions. Democracy is rare online, but Slashdot uses it.

Ownership. Security is a system's defence against outside takeover. A community is made secure internally by ownership, as to "own" a house guarantees that if another takes it, the community will step in (footnote 10). Online, ownership works by access control (see Chapter 6).

Openness. Extendibility is a system's ability to use what is outside itself. A community doing this is illustrated by America's invitation to the world: “Give me your tired, your poor, your huddled masses yearning to breathe free”

A society is open internally if any citizen can achieve any role by merit, just as Abraham Lincoln, born in a log cabin, became US president. The opposite is nepotism or cronyism, giving jobs to family or friends regardless of merit. If community advancement is by who you know, not what you know, performance reduces. Open source systems like SourceForge let people advance by merit.

Ownership and openness are in tension, as the right to keep out denies the right to go in. Fairness can reconcile public access and private control. Offline fairness is supported by justice systems but online fairness must be supported by code.

Transparency. As connectivity lets a person communicate with others, so transparency lets a community communicate with itself by media like TV, newspapers, radio and now the Internet. In a transparent community, people can easily see what the group is doing but in an opaque one they cannot. Transparent governance lets citizens view public money spent and privileges given, as public acts on behalf of a community are not private. Systems like Wikipedia illustrate transparency online.

Privacy. Privacy as a citizen's right to control their personal information can also describe a community right given to all citizens, i.e. the same term works at the community level. In this case, it includes communication privacy, the right not to be monitored without consent.

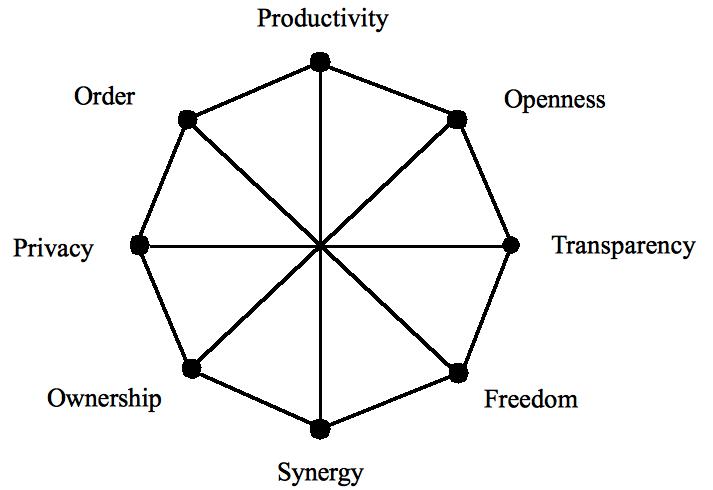

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


Figure 3.3: A social web of performance

Transparency and privacy are in natural tension, as giving the right to see another may deny their right not to be seen. Politeness reconciles this tension by letting people of different rank, education, age and experience connect. Further details are given in Chapter 4.

The social invention of rights can also reconcile many aspects of the web of social performance, as discussed in Chapter 6.

In the performance web of Figure 3.3, a community increases citizen competence to be productive, increases trust to get synergy, gives freedoms to adapt and innovate, establishes order to define responsibilities, allocates ownership to prevent property conflicts, is open to outside and inside talent (footnote 11), communicates internally to generate agreement, and grants legitimate rights that stabilize social interaction. As all these together increase social performance but are in design tension, so each community must define its own social structure, whether online or off.

3.6 Communication Forms

Communication media transmit meaning between senders and receivers. Meaning is any change in a person's thoughts, feelings or motives (footnote 12). The communication performance of a transmission is the total meaning exchanged, i.e. its human impact. It can be based on message richness and sender-receiver linkage as follows:

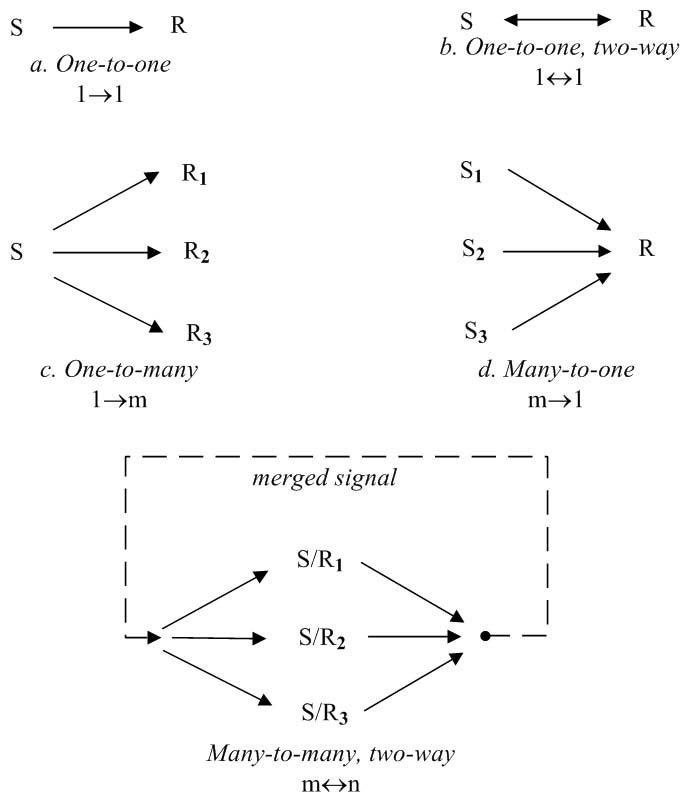

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported). Figure 3.4: Communication linkage (S = Sender, R = Receiver)


Richness is the amount of meaning a message conveys. To see video as automatically richer than text confuses meaning richness with information bandwidth. Meaning is the human impact, so texting "I'm safe" can have more meaning than a multi-media video (footnote 13). Media richness can be classified by the symbols that generate meaning:

Position. A single, static symbol, e.g. to raise one's hand. A vote is a position.

Document. Many static symbols form a meaning pattern as words form a sentence by syntax or pixels form an object by Gestalt principles. Documents are text or pictures.

Dynamic-media (Audio). A dynamic channel with multiple semantic streams, e.g. speech has tone of voice and content (footnote 14). Music has melody, rhythm and timbre.

Multi-media (Video). Many dynamic channels, e.g. video is both audio and visual channels. Face-to-face communication uses many sensory channels.

Rich media have the potential to transfer more meaning.

Linkage. The meaning exchanged in a communication also depends on the sender-receiver link (Figure 3.4), namely:

Interpersonal (one-to-one, two-way): Both parties can send and receive, usually signed.

Broadcast (one-to-many, one-way): From one sender to many receivers, can be unsigned.

Matrix (many-to-many, two-way): Many senders to many receivers, usually unsigned.

Communication performance depends on both richness and linkage, as a message sent to one person that is then broadcast to many increases the human impact. At the individual level people communicate with people, but community level communication is group-to-group, i.e. many send and many receive in one transmit operation. Matrix communication is one-to-many (broadcast) plus many-to-one (merge) communication, where addressing an audience is one-to-many communication and applauding a speaker is many-to-one. The applauding audience to itself is matrix communication, as the group producing the clapping message also receives it. Matrix communication allows normative influence, e.g. when audiences start and stop clapping together. A choir singing is also matrix communication, so by normative influence if choirs go off key they usually do so together.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported). Figure 3.5: Tagging as matrix communication


Face-to-face groups also use matrix communication, as body language and facial expressions convey each group member's position on an issue to everyone else in the group. A valence index calculated from member position indicators was found to predict a group discussion outcome as well as the words (Hoffman & Maier, 1961). Matrix communication is how online electronic groups can form social agreement without any rich information exchange or discussion, using only anonymous, lean signals (Whitworth et al., 2001). Community voting, as in an election, is a physically slow matrix communication that computers can speed up. Tag cloud, reputation system and social book-mark technologies all illustrate online support for matrix communication (Figure 3.5).
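Matrix communication as "merge plus broadcast" can be sketched in a few lines. The example below is illustrative only: it assumes each member sends one anonymous position signal, and the function names `merge_positions` and `broadcast` are invented for this sketch. It is a simple tally, not the valence index of Hoffman & Maier.

```python
from collections import Counter


def merge_positions(positions):
    """Many-to-one: merge anonymous position signals into one group tally."""
    return Counter(positions)


def broadcast(tally, member_count):
    """One-to-many: every member receives a copy of the same merged tally."""
    return [dict(tally) for _ in range(member_count)]


# Five members each send a lean, unsigned position signal.
positions = ["agree", "agree", "disagree", "agree", "abstain"]

tally = merge_positions(positions)         # the group "message"
views = broadcast(tally, len(positions))   # what each member gets back

majority, votes = tally.most_common(1)[0]
print(majority, votes)
```

Because the senders are also the receivers, every member sees where the group stands in one operation, which is what allows normative influence without any rich exchange.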

If communication performance is richness plus linkage, a regime bombarding citizens 24/7 with TV/video propaganda can exchange less meaning by this one-to-many linkage than people talking freely by Twitter, which is many-to-many linkage.

3.7 A Media Framework

Table 3.1 categorizes various communication media by richness and linkage with electronic media in italics, e.g. a phone call is interpersonal audio but a letter is interpersonal text. A book is a document broadcast, radio is broadcast audio and TV is broadcast video. The Internet can broadcast documents (web sites), audio (podcasts) or videos (YouTube). Email allows two-way interpersonal text messages, while Skype adds two-way audio and video. Chat is few-to-few matrix text communication, as is instant messaging but with known people. Blogs are text broadcasts that also allow comment feedback. Online voting is matrix communication, as many communicate with many in one operation.

| Richness / Linkage | Broadcast | Interpersonal | Matrix |
|---|---|---|---|
| Position | Footprint, | Posture, | Show of hands, |
| Document | Poster, Book, | Letter, | Chat, Twitter [1] |
| Dynamic-media | Radio, | Telephone, | Choir, |
| Multi-media | Speech, Show, | Face-to-face | Face-to-face meeting, |

[1] Combines broadcast (text) and matrix (following).

Table 3.1: Communication media by richness and linkage
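The two-dimensional framework can also be expressed as a small data structure. The sketch below is illustrative only: the names `Richness`, `Linkage`, `MEDIA` and `media_in_cell` are invented here, and the entries are limited to media the text itself classifies (treating a vote as a position and text as a document).

```python
from enum import Enum


class Richness(Enum):
    POSITION = 1
    DOCUMENT = 2
    DYNAMIC_MEDIA = 3
    MULTI_MEDIA = 4


class Linkage(Enum):
    BROADCAST = 1
    INTERPERSONAL = 2
    MATRIX = 3


# Each medium is placed by (richness, linkage), following the text.
MEDIA = {
    "book": (Richness.DOCUMENT, Linkage.BROADCAST),
    "radio": (Richness.DYNAMIC_MEDIA, Linkage.BROADCAST),
    "TV": (Richness.MULTI_MEDIA, Linkage.BROADCAST),
    "letter": (Richness.DOCUMENT, Linkage.INTERPERSONAL),
    "email": (Richness.DOCUMENT, Linkage.INTERPERSONAL),
    "phone call": (Richness.DYNAMIC_MEDIA, Linkage.INTERPERSONAL),
    "chat": (Richness.DOCUMENT, Linkage.MATRIX),
    "online voting": (Richness.POSITION, Linkage.MATRIX),
}


def media_in_cell(richness, linkage):
    """Return the media names that fall in one cell of the framework."""
    return sorted(
        name for name, cell in MEDIA.items() if cell == (richness, linkage)
    )


print(media_in_cell(Richness.DOCUMENT, Linkage.INTERPERSONAL))
print(media_in_cell(Richness.POSITION, Linkage.MATRIX))
```

Classifying by two keys rather than one makes it easy to see that a medium can be lean in richness yet high in linkage.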

Computers allow "anytime (footnote 15), anywhere" communication for less effort, e.g. an email is easier to send than posting a letter. Lowering the message threshold means that more messages are sent (Reid et al., 1996). Email stores a message until the receiver can view it (footnote 16), but a face-to-face message is ephemeral; it disappears if you are not there to get it. Yet being unable to edit a message sent makes sender state streams like tone of voice more genuine.

As media richness theory framed communication in one-dimensional richness terms, electronic communication was expected to move directly to video, but that was not what happened. eBay's reputations, Amazon's book ratings, Slashdot's karma, tag clouds, social bookmarks and Twitter are not rich at all. Table 3.1 shows that computer communication evolved by linkage as well as richness. Computer chat, blogs, messaging, tags, karma, reputations and wikis are all high linkage but low richness.

Communication that combines high richness and high linkage is interface expensive, e.g. a face-to-face meeting lets rich channels and matrix communication give factual, sender state and group state information. The medium also lets real time contentions between people talking at once be resolved naturally. Everyone can see who the others choose to look at, so whoever gets the group focus continues to speak. To do the same online would require not only many video streams on every screen but also a mechanism to manage media conflicts. Who controls the interface? If each person controls his or her own view there is no commonality, while if one person controls the view like a film editor, there is no group action. Can electronic groups act democratically as face-to-face groups do?

In audio-based tagging, the person speaking automatically makes his or her video central (Figure 3.6). The interface is common but it is group-directed, i.e. democratic. Gaze-based tagging is the same except that when people look at a person, that person's window expands on everyone's screen. It is in effect a group directed bifocal display (Spence and Apperley, 2012). When matrix communication is combined with media richness, online meetings will start to match face-to-face meetings in terms of communication performance.
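The two tagging rules can be sketched as focus-selection functions. This is a minimal illustration under stated assumptions, not the implementation behind Figure 3.6: the threshold value, the input formats and the names `audio_focus` and `gaze_focus` are all hypothetical.

```python
from collections import Counter
from typing import Dict, Optional

# Assumption: audio levels are normalized to 0..1 and anything at or above
# this hypothetical threshold counts as speech.
SPEECH_THRESHOLD = 0.2


def audio_focus(levels: Dict[str, float],
                current: Optional[str] = None) -> Optional[str]:
    """Audio-based tagging: the loudest current speaker becomes central.

    If nobody is speaking, the previous focus is kept.
    """
    speaking = {p: v for p, v in levels.items() if v >= SPEECH_THRESHOLD}
    if not speaking:
        return current
    return max(speaking, key=speaking.get)


def gaze_focus(gaze: Dict[str, str]) -> Optional[str]:
    """Gaze-based tagging: the person most people look at becomes central.

    `gaze` maps each viewer to the participant they are looking at.
    """
    if not gaze:
        return None
    return Counter(gaze.values()).most_common(1)[0][0]


print(audio_focus({"ann": 0.05, "bob": 0.7, "cho": 0.3}))
print(gaze_focus({"ann": "cho", "bob": "cho", "cho": "bob"}))
```

In both cases the result is applied to every screen, so the view is common but chosen by the group's behaviour rather than by one controller.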

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 3.6 A-B: Audio based video tagging

A social model of communication suggests why video-phones are technically viable today but video-phoning is still not the norm. Consider its disadvantages, like having to shave or put on lipstick to call a friend or having to clean up the background before calling Mum. Some even prefer text to video because it is less rich, as they do not want a big conversation.

In sum, computer communication is about linkage as well as richness because communication acts involve senders and receivers as well as messages. Communication also varies by system level, as is now discussed.

3.8 Semantic Streams

Communication goals can be classified by level as follows:

Informational. The goal is to analyze information about the world and decide a best choice, but this rational analysis process is surprisingly fragile (Whitworth et al., 2000).

Personal. The goal is to form relationships which are more reliable. Relating involves a turn-taking, mutual-approach process, to manage the emotional arousal evoked by the presence of others (Short et al., 1976) (footnote 17). Friends are better than logic.

Community. The goal is to stay "within" the group, as belonging to a community means being part of it, and so protected by it. Communities outlast friends.

| Goal | Influence | Linkage | Questions |
|---|---|---|---|
| Analyze task | Informational | Broadcast | What is right? |
| Relate to | Personal | Interpersonal | Who do I like? |
| Belong to a | Normative | Matrix | What are the others doing? |

Table 3.2: Human goals by influence and linkage

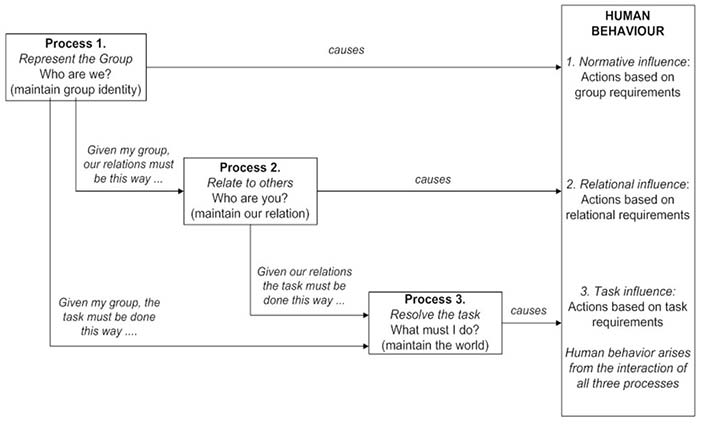

Table 3.2 shows how the level goals map to influence and linkage types. People online or off analyze information, relate to others and belong to communities, so they are subject to informational, personal and normative influences. Normative influence is based on neither logic nor friendship; an example is patriotism, loyalty to your country right or wrong. Even brothers may kill each other on a civil war battlefield.

People are influenced by community norms, friend views and task information, in that order, via different semantic streams. Semantic streams arise as people process a physical signal in different ways to generate different meanings. So one physical message can at the same time convey:

Message content. Symbolic statements about the literal world, e.g. a factual sentence.

Sender state. Sender psychological state, e.g. an agitated tone of voice.

Group position. Sender intent, which added up over many senders gives the group intent, e.g. an election.

Human communication is subtle because one message can have many meanings and people respond to them all at once. So when leaving a party I may say: "I had a good time" but by tone imply the opposite. I can say "I AM NOT ANGRY!" in an angry voice (footnote 18). What is less obvious is that a message can also indicate a position, or intent to act, e.g. saying "I had a good time" in a certain tone or with certain body language can indicate an intention to leave a party.

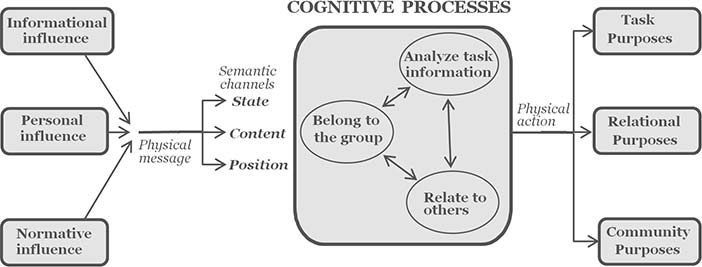

In the cognitive model of Figure 3.7, physical level signals have as many semantic streams as the medium allows. Face-to-face talk allows three streams, but computer communication is usually more restricted, e.g. email text mainly just gives content information.

Community level systems using matrix communication include:

The reputation ratings of Amazon or eBay give community-based product quality control. Slashdot does the same for content, letting readers rate comments to filter out poor ones.

Social bookmarks, like Digg and StumbleUpon, let users share links, to see what the community is looking at.

Tag technologies increase the font size of links according to frequency of use. As people walking in a forest follow tracks trod by others, so we can now follow web-tracks on browser screens.

Twitter's follow function lets leaders broadcast ideas to followers and people choose the leaders they like.

The power of the computer can allow very fast matrix communication by millions or billions. What might a global referendum on current issues reveal? The Internet could tell us.
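The tag technologies listed above can be illustrated with a minimal sketch, given here only as an assumption about how such a system might work rather than how any particular site implements it. The function name `tag_font_sizes`, the pixel range and the linear scaling rule are all hypothetical choices made for illustration. Many senders each contribute a tag use, and every receiver sees the combined result as link sizes:

```python
from collections import Counter


def tag_font_sizes(tag_uses, min_px=12, max_px=32):
    """Map each tag to a font size that grows with how often people used it.

    tag_uses: one entry per use of a tag, contributed by many community members.
    min_px / max_px: hypothetical display limits, not taken from any real site.
    """
    counts = Counter(tag_uses)
    if not counts:
        return {}
    low, high = min(counts.values()), max(counts.values())
    span = high - low
    sizes = {}
    for tag, n in counts.items():
        # Linear scaling between the least and most used tags; if all tags
        # are equally popular, every tag gets the smallest size.
        fraction = (n - low) / span if span else 0.0
        sizes[tag] = round(min_px + fraction * (max_px - min_px))
    return sizes


# Six tag uses by community members, combined into one shared display.
uses = ["privacy", "design", "privacy", "ethics", "privacy", "design"]
sizes = tag_font_sizes(uses)
for tag, px in sorted(sizes.items(), key=lambda item: -item[1]):
    print(f"{tag}: {px}px")
```

No single sender decides the result: the display is an aggregate of many small contributions, which is what makes it a community level signal rather than a personal one.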

How these three basic cognitive processes, of belonging, relating and fact analysis, work together in the same person is shown in Figure 3.7. The details are given elsewhere (Whitworth et al., 2000), but note that the rational resolution of task information is the third priority cognitive process, not the first. Only if all else fails do we stop to think things out or read intellectual instructions.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 3.7: Cognitive processes in communication

The first level of the World Wide Web, an information library accessed by search tools, is well in place. Its second level, a medium for personal relations, is also well under way. The third, a civilized social environment, is the current and future challenge. Even a cursory study of Robert's Rules of Order will dispel any illusion that social dealings are simple (Robert, 1993).

Socio-technology lets hundreds of millions of people act together, but we still do not know what Here Comes Everybody (footnote 19) means (Shirky, 2008). Exactly how many people act as one has always been a bit of a mystery, yet this is what we must learn to bring group applications to an Internet full of personal applications. Group writing and publishing, group browsing, group singing and music (online choirs and bands), group programming and group research illustrate the many areas of current and future potential in online group activity. One can also expect a bite-back in privacy demands, i.e. more "tight" communities that are hard to get into.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported). Figure 3.8: The cognitive processes that affect human behaviour

3.9 Discussion Questions

The following questions are designed to encourage thinking on the chapter and exploring socio-technical cases from the Internet. If you are reading this chapter in a class, whether at a university or in a commercial course, the questions might be discussed in class first, and then students can choose questions to research in pairs and report back to the next class.

Why can technologists not leave the social and ethical questions to non-technologists? Give examples of IT both helping and hurting humanity. What will decide, in the end, whether IT helps or hurts us overall?

Compare central vs. distributed networks (Polling vs. Ethernet). Compare the advantages and disadvantages of centralizing vs. distributing control. Is central control ever better? Now consider social systems. Of the traditional socio-technical principles listed, which ones distribute work-place control? Compare the advantages and disadvantages of centralizing vs. distributing control in a social system. Compare governance by a tyrannical dictator, a benevolent dictator and a democracy. Which type are most online communities? How might that change?

Originally, socio-technical ideas applied social requirements to work-place management. How have they evolved today? Why is it important to apply social requirements to IT design? Give examples.

Illustrate system designs that apply: mechanical requirements to hardware (footnote 20); informational requirements to hardware (footnote 21); informational requirements to software; personal requirements to hardware (footnote 22); personal requirements to software; personal requirements to people; community requirements to hardware; community requirements to software; community requirements to people; community requirements to communities. Give an example in each case. Why not design software to mechanical requirements?

Is technology the sole basis of modern prosperity? If people suddenly stopped trusting each other, would wealth continue? Use the 2009 credit meltdown to illustrate your answer. Can technology solve social problems like mistrust? How can social problems be solved? Can technology help?

Should an online system gather all the data it can during registration? Give two good reasons not to gather or store non-essential personal data. Evaluate three online registration examples.

Spam demonstrates a socio-technical gap, between what people want and what technology does. How do users respond to it? In the "spam wars", who wins? Who loses? Give three other examples of a socio-technical gap. Of the twenty most popular third-party software downloads, which relate to a socio-technical gap?

What is a legitimate government? What is a legitimate interaction? How do people react to an illegitimate government or interaction? How are legitimacy requirements met in physical society? Why will this not work online? What will work?

What is the problem with "social engineering"? How about "mental engineering" (brainwashing)? Why do these terms have negative connotations? Is education brainwashing? Why not? Explain the implications for STS design.

For a well known STS, explain how it supports, or not, the eight proposed aspects of community performance, with screenshot examples. If it does not support an aspect, suggest why. How could it?

Can we own something but still let others use it? Can a community be both free and ordered? Can people compete and cooperate at the same time? Give physical and online examples. How are such tensions resolved? How does democracy reconcile freedom and order? Give examples in politics, business and online.

What is community openness for a nation? For an organization? For a club or group? Online? Why are organizations that promote people based on merit more open? Illustrate technology support for merit-based promotion in an online community.

Is a person sending money to a personal friend online entitled to keep it private? What if the sender is a public servant? What if it is public money? Is a person receiving money from a personal friend online entitled to keep it private? What if the receiver is a public servant?

What is communication? What is meaning? What is communication performance? How can media richness be classified? Is a message itself rich? Does video always convey more meaning than text? How can a lean medium like Twitter deliver more communication performance? Give online and offline examples.

What affects communication performance besides richness? How is it classified? Is it a message property? How does it communicate more? Give online/offline examples.

If media richness and linkage both increase communication power, why not have both? Describe a physical world situation that does this. What is the main restriction? Can online media do this? What is, currently, the main contribution of computing to communication power? Give examples.

What communication media type best suits these goals: telling everyone about your new product; relating to friends; getting group agreement? Give online and offline examples. For each goal, what media richness, linkage and anonymity do you recommend? You lead an agile programming team spread across the world: what communication technology would you use?

State differences between the following media pairs: email and chat; instant messaging and texting; telephone and email; chat and face-to-face conversation; podcast and video; DVD and TV movie; wiki and bulletin board. Do another pair of your choice.

How can a physical message convey content, state and position semantic streams? Give examples of communications that convey: content and state; content and position; state and position; and content, state and position. Give examples of people trying to add an ignored semantic stream to technical communication, e.g. people introducing sender state data into lean text media like email.

Can a physical message generate many information streams? Can an information stream generate many semantic streams? Give examples. Does the same apply online? Use the way in which astronomical or earthquake data is shared online to illustrate your answer.

You want to buy a new cell-phone and an expert web review suggests model A based on factors like cost and performance. Your friend recommends B, uses it every day, and finds it great. On an online customer feedback site, some people report problems with A and B, but most users of C like it. What are the pluses and minuses of each influence? Which advice would you probably follow? Ask three friends what they would do.

What is the best linkage to send a message to many others online? What is the best linkage to make or keep friends online? What is the best linkage to keep up with community trends online? List the advantages and disadvantages of each style. How can technology support each of the above?

Explain why reputation ratings, social bookmarks and tagging are all matrix communication. In each case, describe the senders, the message, and the receivers. What is the social goal of matrix communication? How exactly does technology support it?

Give three online leaders searched by Google or followed on Twitter. Why do people follow leaders? How can leaders get people to follow them? How does technology help? If the people are already following a set of leaders, how can new leaders arise? If people are currently following a set of ideas, how can new ideas arise? Describe the innovation adoption model. Explain how it applies to "viral" videos.