“Politeness makes a community a nice place to be”

This chapter analyzes politeness as a socio-technical requirement.

4.1 Can Machines be Polite?

Software, with its ability to make choices, has crossed the border between inert machine and social participant, as the term human-computer interaction (HCI) implies. Computers today are no longer just tools that respond passively to directions but social agents that are online participants in their own right. Miller notes that if I hit my thumb with a hammer I blame myself, not the hammer, but people often blame equally mechanical programs for user-initiated errors (Miller, 2004, p. 31).

Computer programs are just as mechanical as cars, as each state defines the next, yet programs now ask questions, suggest actions and give advice. Software mediating a social interaction, like email, is like a social facilitator. As computing evolves, people increasingly see programs as active collaborators rather than passive media. These new social roles, of agent, assistant or facilitator, imply a new requirement – to be polite.

To treat machines as people seems foolish, like talking to an empty car, but words addressed to cars on the road are actually to their drivers. Cars are machines but the drivers are people. Likewise, a program is mechanical but people "drive" the programs we interact with. It is not surprising that people show significantly more relational behaviour when the other party in computer mediated communication is clearly human than when it is not (Shectman & Horowitz, 2003). Studies find that people do not treat computers as people outside the mediation context (Goldstein, Alsio, & Werdenhoff, 2002) – just as people do not usually talk to empty cars.

Treating a software installation program as if it were a person is not unreasonable if the program has a human source. Social questions like: "Do I trust you?" and "What is your attitude to me?" apply. If computers have achieved the status of social agents, it is natural for people to treat them socially.

A social agent is a social entity that represents another social entity in a social interaction. The other social entity can be a person or group, e.g. installation programs interact with customers on behalf of a company (a social entity). The interaction is social even if the agent, a program, is not, because an install is a social contract. Software is not social in itself, but to mediate a social interaction it must operate socially. If a software agent works for the party it interacts with, it is an assistant, both working for and to the same user. A human-computer interaction with an assistant also requires politeness.

If software mediates social interactions it should be designed accordingly. No company would send a socially ignorant person to talk to important clients, yet they send software that interrupts, overwrites, nags, steals, hijacks and in general annoys and offends users (Cooper, 1999). Polite computing is the design of socially competent software.

4.2 Selfish Software

Selfish software acts as if it were the only application on your computer, just as a selfish person acts as if only he or she exists. It pushes itself forward at every opportunity, loading at start-up and running continuously in the background. It feels free to interrupt you at any time to demand things or announce what it is doing, e.g. after I (the first author) had installed new modem software, it then loaded itself on every start-up and regularly interrupted me with a modal (footnote 1) window saying it was going online to check for updates to itself. It never found any, even after weeks. Finally, after yet another pointless "Searching for upgrades" message I could not avoid, I uninstalled it. As in The Apprentice TV show, assistants that are no help are fired! Selfish apps going online to download upgrades without asking mean tourists with a smartphone can find themselves with high data roaming bills for downloads they did not want or need.

Users uninstalling impolite software is a new type of computing error – a social error. A computer system that gets into an infinite loop that hangs it has a software error; if a user cannot operate the computer system, it is a usability error; and if software offends and drives users away, it is a social error. In usability errors, people want to use the system but do not know how to, but in social errors they understand it all too well and choose to avoid it.

Socio-technical systems cannot afford social errors because in order to work, they need people to participate. In practice, a web site that no-one visits is as much a failure as one that crashes. Whether a system fails because the computer cannot run it, the user does not know how to run it, or the user does not want to run it, does not matter. The end effect is the same - the application does not run.

For example, my new 2006 computer came with McAfee Spamkiller, which by design overwrote my Outlook Express mail server account name and password with its own values when activated. I then no longer received email, as the mail server account details were wrong. After discovering this, I retyped in the correct values to fix the problem and got my mail again. However the next time I rebooted the computer, McAfee rewrote over my mail account details again. I called the McAfee help person, who explained that Spamkiller was protecting me by taking control, and routing all my email through itself. To get my mail I had to go into McAfee and tell it my specific email account details, but when I did this it still did not work. I was now at war with this software, which:

Overwrote the email account details I had typed in.

Did nothing when the email didn't work.

The software "took charge" but didn't know what it was doing. Whenever Outlook started, it forced me to watch it do a slow foreground modal check for email spam, but in two weeks of use it never found any! Not wanting to be held hostage by a computer program, I again uninstalled it as selfish software.

4.3 Polite Software

Polite computing addresses the requirement for social entities to work together. The Oxford English Dictionary (http://dictionary.oed.com) defines politeness as:

"… behaviour that is respectful or considerate to others".

So software that respects and considers users is polite, as distinct from software usefulness or usability, where usefulness addresses functionality and usability how easy it is to use. Usefulness is what the computer does and usability is how users get it to do it. Polite computing in contrast is about social interactions, not computer power or cognitive ease. So software can be easy to use but rude, or polite but hard to use. While usability reduces training and documentation costs, politeness lets a software agent socially interact with success. Both usability and politeness fall under the rubric of human-centred design.

Polite computing is about designing software to be polite, not making people polite. People are socialized by society, but rude, inconsiderate or selfish software is a widespread problem because it is a software design "blind spot" (Cooper, 1999). Most software is socially blind, except for socio-technical systems like Wikipedia, Facebook and E-Bay. This chapter outlines a vision of polite computing for the next generation of social software.

4.4 Social Performance

If politeness is considering the other in a social interaction, the predicted effect is more pleasant interaction. In general, politeness makes a society a nicer place to be, whether online or offline. It contributes to computing by:

Increasing legitimate interactions.

Reducing anti-social interactions.

Increasing synergy.

Increasing software use.

Programmers can fake politeness, as people do in the physical world, but when people behave politely, cognitive dissonance theory finds that people feel polite (Festinger, 1957). So if programmers design for politeness, the overall effect will be positive even though some programmers may be faking it.

Over thousands of years, as physical society became "civilized", it created more prosperity. Today, for the first time in human history, many countries are producing more food than their people can eat, as their obesity epidemics testify. The bloody history of humanity has been a social evolution from zero-sum (win-lose) interactions like war to non-zero-sum (win-win) interactions like trade (Wright, 2001), with productivity the prize. Scientific research illustrates this: scientists freely giving their hard earned knowledge away seems foolish, but when a critical mass do it the results are astounding.

Social synergy is people in a community giving to each other to get more than is possible by selfish activity; e.g. Open Source Software (OSS) products like Linux now compete with commercial products like Office. The mathematics of synergy reflect its social interaction origin: competence gains increase linearly with group size but synergy gains increase geometrically, as they depend on the number of interactions. In the World Wide Web, we each only sow a small part of it but we reap from it the world's knowledge. Without polite computing, however, synergy is not possible.
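The scaling claim can be made concrete with a small sketch. It assumes, as a simplification the text does not state, that each member contributes one unit of competence and that synergy is proportional to the number of distinct pairs who can interact. The function names are illustrative only.

```python
def competence_gain(group_size: int) -> int:
    """Assumed model: each member adds one unit, so the total grows linearly."""
    return group_size


def pairwise_interactions(group_size: int) -> int:
    """Number of distinct pairs in a group of n people: n * (n - 1) / 2."""
    return group_size * (group_size - 1) // 2


# Compare the two growth rates for a few group sizes.
for n in (2, 10, 100, 1000):
    print(n, competence_gain(n), pairwise_interactions(n))
```

Under this pairwise assumption, a tenfold increase in group size gives roughly a hundredfold increase in possible interactions, and counting interactions among larger subgroups would grow faster still.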

A study of reactions to a computerized Chinese word-guessing game found that when the software apologized after a wrong answer by saying "We are sorry that the clues were not helpful to you," the game was rated more enjoyable than when the computer simply said "This is not correct" (Tzeng, 2004). In general, politeness improves online social interactions and so increases them. Politeness is what makes a social environment a nice place to be. Businesses that wonder why more people don't shop online should ask whether the World Wide Web is a place people want to be. If it is full of spam, spyware, viruses, hackers, pop-ups, nagware, identity theft, scams, spoofs, offensive pornography and worms then it will be a place to avoid. As software becomes more polite, people will use it more and avoid it less.

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


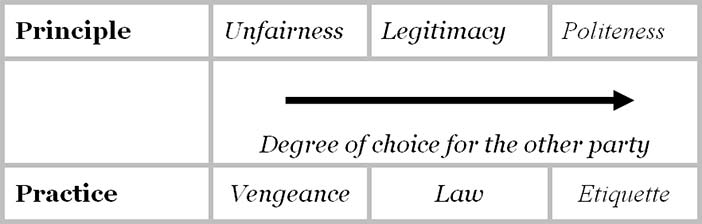

Figure 4.1: Social interactions by degree of choice given

4.5 The Legitimacy Baseline

Legitimate interactions, defined as those that are both fair and in the common good, are the basis of civilized prosperity (Whitworth & deMoor, 2003) and legitimacy is a core demand of any prosperous and enduring community (Fukuyama, 1992). Societies that allow corruption and win-lose conflicts are among the poorest in the world (Transparency-International, 2001). Legitimate interactions offer fair choices to parties involved while anti-social crimes like theft or murder give the victim little or no choice. Figure 4.1 categorizes anti-social, social and polite interactions by the degree of choice the other party has.

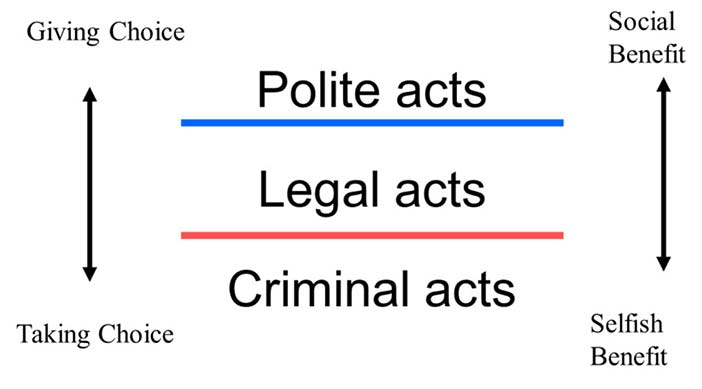

So polite acts are more than fair, i.e. more than the law. To follow the law is not politeness because it is required. One does not thank a driver who stops at a red light, but one thanks the driver who stops to let you into a line of traffic. Laws specify what citizens should do but politeness is what they could do. Politeness involves offering more choices in an interaction than the law requires, so it begins where fixed laws end. If criminal acts fall below the law, then polite acts rise above it (Figure 4.2). Politeness increases social health as criminality poisons it.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported).

Figure 4.2: Politeness is doing more than the law


4.6 Security

In computing design, politeness takes a back seat to security, but upgrading security every time an attack exploits another loophole is a never-ending cycle. Why not develop strategies to reduce the motivation to attack the community (Rose, Khoo, & Straub, 1999)? Polite computing does just this, as it reduces a common source of attacks — anger against a system that allows those in power to prey upon the weak (Power, 2000). Hacking is often revenge against a person, a company or the capitalist society in general (Forester & Morrison, 1994).

Politeness openly denies the view that "everyone takes what they can so I can too". A polite system can make those who are neutral polite and those who are against society neutral. Politeness and security are thus two sides of the same coin of social health. By analogy, a gardener defends his or her crops from weeds, but does not wait until every weed dies before fertilizing. If politeness grows social health, it complements rather than competes with security.

4.7 Etiquette

Some define politeness as "being nice" to the other party (Nass, 2004). So if someone says "I'm a good teacher; what do you think?" then polite people respond "You're great", even if they do not agree. Agreeing with another's self-praise is called one of the "fundamental rules of politeness" (Nass, 2004, p36). Yet this is illogical, as one can be agreeably impolite and politely disagreeable. One can politely refuse, beg to differ, respectfully object and humbly criticize, i.e. disagree politely. Conversely one can give to charity impolitely, i.e. be kind but rude. Being polite is thus different from being nice, as parents who are kind to their child may not agree to let it choose its own bedtime.

To apply politeness to computer programming, we must define it in information terms. If it is considering others, then as different societies consider differently, what is polite in one culture is rude in another. If there is no universal polite behaviour, there seems no basis to apply politeness to the logic of programming.

Yet while different countries have different laws, the goal of fairness behind the law can be attributed to every society (Rawls, 2001). Likewise, different cultures could have different etiquettes but a common goal of politeness. In Figure 4.1, the physical practices of vengeance, law and etiquette derive from human concepts of unfairness, legitimacy and politeness.

So while societies implement different forms of vengeance, law and etiquette, the aims of avoiding unfairness, enabling legitimacy and encouraging politeness do not change. So politeness is the spirit behind etiquettes, as legitimacy is the spirit behind laws. Politeness lets us generate new etiquettes for new cases, as legitimacy lets us generate new laws. Etiquettes and laws are the information level reflections of the human level concepts of politeness and legitimacy.

If politeness can take different forms in different societies, to ask which implementation applies online is to ask the wrong question. This is like asking a country which other country's laws they want to adopt, when laws are generally home grown for each community. The real question is how to "reinvent" politeness online, whether for chat, wiki, email or other groupware. Just as different physical societies develop different local etiquettes and laws, so online communities will develop their own ethics and practices, with software playing a critical support role. While different applications may need different politeness implementations, we can develop general design "patterns" to specify politeness in information terms (Alexander, 1964).

4.8 An Information Definition of Software Politeness

If the person being considered knows what is "considerate" for them, politeness can be defined abstractly as the giving of choice to another in a social interaction. This is then always considerate given only that the other knows what is good for him or her. The latter assumption may not always be true, e.g. in the case of a young baby. In a conversation where the locus of channel control passes back and forth between parties, it is polite to give control to the other party (Whitworth, 2005), e.g. it is impolite to interrupt someone, as that removes their choice to speak, and polite to let them finish talking, as they then choose when to stop. This gives a definition of politeness as:

"… any unrequired support for situating the locus of choice control of a social interaction with another party to it, given that control is desired, rightful and optional." (Whitworth, 2005, p355)

Unrequired means the choice given is more than required by the law, as a required choice is not politeness. Optional means the polite party has the ability to choose, as politeness is voluntary. Desired by the receiver means giving choice is only polite if the other wants it, e.g. "After you" is not polite when facing a difficult task. Politeness means giving desired choices, not forcing the locus of control, with its burden of action, upon others. Finally, rightful means that consideration of someone acting illegally is not polite, e.g. to considerately hand a gun to a serial killer about to kill someone is not politeness.
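The four conditions of the definition can be read as a simple conjunction. The sketch below is one possible encoding, not a formalization taken from Whitworth (2005); the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OfferedChoice:
    """A choice one party gives another in an interaction (hypothetical model)."""
    required_by_law: bool  # the giver is obliged to offer it
    optional: bool         # the giver could have withheld it
    desired: bool          # the receiver wants the control
    rightful: bool         # the receiver is entitled to the control


def is_polite(choice: OfferedChoice) -> bool:
    """True only when the offer is unrequired, optional, desired and rightful."""
    return (
        not choice.required_by_law
        and choice.optional
        and choice.desired
        and choice.rightful
    )


# Stopping at a red light is required, so it is not politeness.
red_light = OfferedChoice(required_by_law=True, optional=False, desired=True, rightful=True)
# Letting another driver into a line of traffic meets all four conditions.
let_in = OfferedChoice(required_by_law=False, optional=True, desired=True, rightful=True)
```

Here `is_polite(red_light)` is false and `is_polite(let_in)` is true. An unwanted "After you" would fail on `desired` alone.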

4.9 The Requirements

Following previous work (Whitworth, 2005), polite software should:

Respect the user. Polite software respects user rights, does not act pre-emptively, and does not act on information without the permission of its owner.

Be visible. Polite software does not sneak around changing things in secret, but openly declares what it is doing and who it represents.

Be understandable. Polite software helps users make informed choices by giving information that is useful and understandable.

Remember you. Polite software remembers its past interactions and so carries forward your past choices to future interactions.

Respond to you. Polite software responds to user directions rather than trying to pursue its own agenda.

Each of these points is now considered in more detail.

4.9.1 Respectfulness

Respect includes not taking another's rightful choices. If two parties jointly share a resource, one party's choices can deny the other's; e.g. if I delete a shared file, you can no longer print it. Polite software should not preempt rightful user information choices regarding common resources such as the desktop, registry, hard drive, task bar, file associations, quick launch and other user configurable settings. Pre-emptive acts, like changing a browser home page without asking, act unilaterally on a mutual resource and so are impolite.

Information choice cases are rarely simple; e.g. a purchaser can use software but not edit, copy or distribute it. Such rights can be specified as privileges, in terms of specified information actors, methods, objects and contexts (see Chapter 6). To apply politeness in such cases requires a legitimacy baseline; e.g. a provider has no unilateral right to upgrade software on a computer the user owns (though the Microsoft Windows Vista End User License Agreement (EULA) seems to imply this). Likewise users have no right to alter the product source code unilaterally. In such cases politeness applies; e.g. the software suggests an update and the user agrees, or the user requests an update and the software agrees (for the provider). Similarly while a company that creates a browser owns it, the same logic means users own data they create with the browser, e.g. a cookie. Hence, software cookies require user permission to create, and users can view, edit or delete them.
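As a minimal sketch of "suggest, then let the owner decide", the function below changes a user-owned setting only after asking. The `ask_user` callback stands in for whatever dialog a real application would show; it and the other names are assumptions made for illustration.

```python
from typing import Callable, Dict


def request_change(
    settings: Dict[str, str],
    key: str,
    proposed: str,
    ask_user: Callable[[str], bool],
) -> bool:
    """Apply a change to a user-owned setting only if the user agrees."""
    question = f"Change {key} from {settings.get(key)!r} to {proposed!r}?"
    if ask_user(question):
        settings[key] = proposed
        return True
    # The user declined, so the setting is left exactly as it was.
    return False
```

The design point is that the software never writes to the shared resource directly; every change passes through the owner's choice.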

4.9.2 Visibility

Part of a polite greeting in most cultures is to introduce oneself and state one's business. Holding out an open hand, to shake hands, shows that the hand has no weapon, and that nothing is hidden. Conversely, to act secretly behind another's back, to sneak, or to hide one's actions, for any reason, is impolite. Secrecy in an interaction is impolite because the other has no choice regarding things they do not know about. Hiding your identity reduces my choices, as hidden parties are untouchable and unaccountable for their actions. When polite people interact, they declare who they are and what they are doing.

If polite people do this, polite software should do the same. Users should be able to see what is happening on their computer. Yet when Windows Task Manager attributes a cryptic process like CTSysVol.exe to the user, it could be a system critical process or one left over from a long uninstalled product. Lack of transparency is why after 2-3 years Windows becomes "old". Each installation of selfish software puts itself everywhere, filling the taskbar with icons, the desktop with images, the disk with files and the registry with records. In the end, the computer owner has no idea what software is responsible for what files or registry records.

Selfish applications consider themselves important enough to load at start-up and run continuously, in case you need them. Many applications doing this slow down a computer considerably, whether on a mobile phone or a desktop. Taskbar icon growth is just the tip of the iceberg of what is happening to the computer, as some start-ups do not show on the taskbar. Selfish programs put files where they like, so uninstalled applications are not removed cleanly, and over time Windows accretes a "residue" of files and registry records left over from previous installs. Eventually, only reinstalling the entire operating system recovers system performance.

The problem is that the operating system keeps no transparent record of what applications do. An operating system Source Registry could link all processes to their social sources, giving contact and other details. Source could be a property of every desktop icon, context menu item, taskbar icon, hard drive file or any other resource. If each source creates its own resources, a user could then delete all resources allocated by a source they have uninstalled without concern that they were system critical. Windows messages could also state their source, so that users knew who a message was from. Application transparency would let users decide what to keep and what to drop.
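The Source Registry idea can be sketched as a small data structure. No operating system is claimed to expose this interface; the class, its methods and the example paths are hypothetical and only illustrate the bookkeeping involved.

```python
from collections import defaultdict
from typing import Dict, Set


class SourceRegistry:
    """Hypothetical record of which source created which resource."""

    def __init__(self) -> None:
        self._by_source: Dict[str, Set[str]] = defaultdict(set)
        self._owner: Dict[str, str] = {}

    def register(self, source: str, resource: str) -> None:
        """Record that `source` created `resource` (a file, icon, registry key...)."""
        previous = self._owner.get(resource)
        if previous is not None and previous != source:
            raise ValueError(f"{resource} already belongs to {previous}")
        self._by_source[source].add(resource)
        self._owner[resource] = source

    def source_of(self, resource: str) -> str:
        """Answer the user's question: who put this here?"""
        return self._owner.get(resource, "unknown")

    def remove_source(self, source: str) -> Set[str]:
        """Forget a source and return everything it created, ready for deletion."""
        resources = self._by_source.pop(source, set())
        for resource in resources:
            del self._owner[resource]
        return resources
```

With such a record, uninstalling a product reduces to calling `remove_source` and deleting what it returns, without guessing whether a leftover file is system critical.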

4.9.3 Helpfulness

A third politeness property is to help the user by offering understandable choices, as a user cannot properly choose from options they do not understand. Offering options that confuse is inconsiderate and impolite, e.g. a course text web site offers the choices:

OneKey Course Compass

Content Tour

Companion Website

Help Downloading

Instructor Resource Centre

It is unclear how the "Course Compass" differs from the "Companion Website", and why both seem to exclude "Instructor Resources" and "Help Downloading". Clicking on these choices, as is typical for such sites, leads only to further confusing menu choices. The impolite assumption is that users enjoy clicking links to see where they go. Yet information overload is a serious problem for web users, who have no time for hyperlink merry-go-rounds.

Not to offer choices at all on the grounds that users are too stupid to understand them is also impolite. Installing software can be complex, but so is installing satellite TV technology, and those who install the latter do not just come in and take over. Satellite TV installers know that the user who pays expects to hear his or her choices presented in an understandable way. If not, the user may decide not to have the technology installed.

Complex installations are simplified by choice dependency analysis, of how choices are linked, as Linux's installer does. Letting a user choose to install an application the user wants minus a critical system component is not a choice but a trap. Application-critical components are part of the higher choice to install or not; e.g. a user's permission to install an application may imply access to hard drive, registry and start menu, but not to desktop, system tray, favorites or file associations.
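The example at the end of this paragraph can be sketched as a split between what an install permission implies and what still needs its own question. The resource names and the split itself are taken from the example above and would differ for real installers; the function name is illustrative.

```python
from typing import FrozenSet, Iterable, Tuple

# Assumed for illustration: resources covered by the user's "yes" to installing.
IMPLIED_BY_INSTALL: FrozenSet[str] = frozenset({"hard_drive", "registry", "start_menu"})


def split_requests(requested: Iterable[str]) -> Tuple[FrozenSet[str], FrozenSet[str]]:
    """Separate requests covered by the install choice from those needing a prompt."""
    wanted = frozenset(requested)
    implied = wanted & IMPLIED_BY_INSTALL
    ask_first = wanted - IMPLIED_BY_INSTALL  # includes anything unrecognized
    return implied, ask_first
```

Treating unrecognized resources as "ask first" keeps the default on the side of the user's choice.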

4.9.4 Remembering

It is not enough for the software to give choices now but forget them later. If previous responses have been forgotten, the user is forced to restate them, which is inconsiderate. Software that actually listens and remembers past user choices is a wonderful thing. Polite people remember previous encounters, but each time Explorer opens it fills its preferred directory with files I do not want to see, and then asks me which directory I want, which is never the one displayed. Each time I tell it, and each time Explorer acts as if it were the first time I had used it. Yet I am the only person it has ever known. Why can't it remember the last time and return me there? The answer is that it is impolite by design.

Such "amnesia" is a trademark of impolite software. Any document editing software could automatically open a user's last open document, and put the cursor where they left off, or at least give that option (Raskin, 2000, p.31). The user logic is simple: If I close the file I am finished, but if I just exit without closing the document, then put me back where I was last time. It is amazing that most software cannot even remember the last user interaction. Even within an application, like email, if one moves from inbox to outbox and back, it "forgets" the original inbox message, so one must scroll back to it; cf. browser tabs that remember user web page position.
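The user logic described above needs very little machinery. The sketch below assumes a small JSON state file whose location the application chooses; the function names and the file layout are illustrative, not any editor's actual format.

```python
import json
from pathlib import Path
from typing import Optional, Tuple


def save_session(state_file: Path, document: Optional[str], cursor: int) -> None:
    """On exit, record the open document and cursor (None if the user closed it)."""
    state_file.write_text(json.dumps({"document": document, "cursor": cursor}))


def restore_session(state_file: Path) -> Tuple[Optional[str], int]:
    """On start-up, return the document and cursor to reopen, if any."""
    if not state_file.exists():
        return None, 0  # first run: nothing to remember yet
    data = json.loads(state_file.read_text())
    return data.get("document"), int(data.get("cursor", 0))
```

If the user closed the file before exiting, the application saves `None` and starts empty next time; otherwise it reopens the same document at the saved position.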

4.9.5 Responsiveness

Current "intelligent" software tries to predict user wants but cannot itself take correction, e.g. Word's auto-correct function changes i = 1 to I = 1, but if you change it back the software ignores your act. This is software that is clever enough to give corrections but not clever enough to take correction itself. However, responsive means responding to the user's direction, not ignoring it.
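Taking correction can be as simple as remembering what the user has undone. The class below is a minimal sketch of that idea and makes no claim about how Word's auto-correct is implemented; all names are hypothetical.

```python
from typing import Dict, Set


class AutoCorrector:
    """Auto-correct that stops applying a rule once the user reverts it."""

    def __init__(self, rules: Dict[str, str]) -> None:
        self._rules = dict(rules)
        self._rejected: Set[str] = set()

    def suggest(self, word: str) -> str:
        """Return the corrected word, unless the user has rejected that correction."""
        if word in self._rejected:
            return word
        return self._rules.get(word, word)

    def user_reverted(self, word: str) -> None:
        """Called when the user changes a correction back to what they typed."""
        self._rejected.add(word)
```

After `user_reverted("i")`, the corrector leaves `i` alone, which is the response the user asked for by changing it back.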

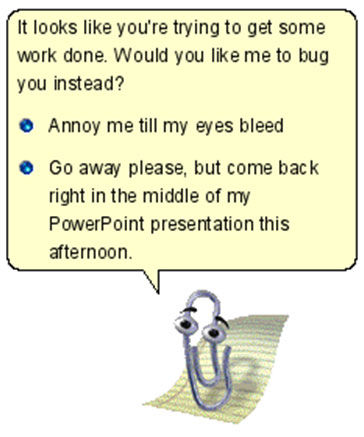

A classic example of non-responsiveness was Mr. Clippy, Office 97's paper clip assistant (Figure 4.3).

Copyright © Microsoft Corp. All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 4.3: Mr. Clippy takes charge


Searching the Internet for "Mr. Clippy" gives comments like "Die, Clippy, Die!" (Gauze, 2003), yet its Microsoft designer still wondered:

"If you think the Assistant idea was bad, why exactly?"

The answer is as one user noted:

"It wouldn't go away when you wanted it to. It interrupted rudely and broke your train of thought." (Pratley, 2004).

To interrupt inappropriately disturbs the user's train of thought. For complex work like programming, even short interruptions cause a mental "core dump", as the user drops one thing to attend to another. The interruption effect is then not just the interruption time, but also the recovery time (Jenkins, 2006); e.g. if it takes three minutes to refocus after an interruption, a one second interruption every three minutes can reduce productivity to zero.
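The arithmetic behind this example can be checked with a short sketch. It assumes a simple model in which each interruption costs its own duration plus a fixed recovery time, and no work is done during either; the function name is illustrative.

```python
def productive_fraction(interval_s: float, interruption_s: float, recovery_s: float) -> float:
    """Fraction of each interval left for work, under the simple model above."""
    lost = interruption_s + recovery_s
    # If the time lost exceeds the interval, no productive time remains.
    return max(0.0, 1.0 - lost / interval_s)


# One-second interruption every three minutes, three minutes to refocus.
every_three_minutes = productive_fraction(180, 1, 180)
# The same interruption only once an hour.
once_an_hour = productive_fraction(3600, 1, 180)
```

In the first case the 181 seconds lost exceed the 180 second interval, so the fraction is zero. Once an hour, the same interruption leaves about 95% of the time productive.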

Mr Clippy was impolite, and in XP was replaced by tags smart enough to know their place. In contrast to Mr Clippy, tag clouds and reputation systems illustrate software that reflects rather than directs online users.

Selfish software, like a spoilt child, repeatedly interrupts, e.g. a Windows Update that advises the user when it starts, as it progresses and when it finishes. Such modal windows interrupt users, seize the cursor and lose current typing. Since each time the update only needs the user to press OK, it is like being repeatedly interrupted to pat a self-absorbed kiddie on the head. The lesson of Mr. Clippy, that software serves the user not the other way around, still needs to be learned.

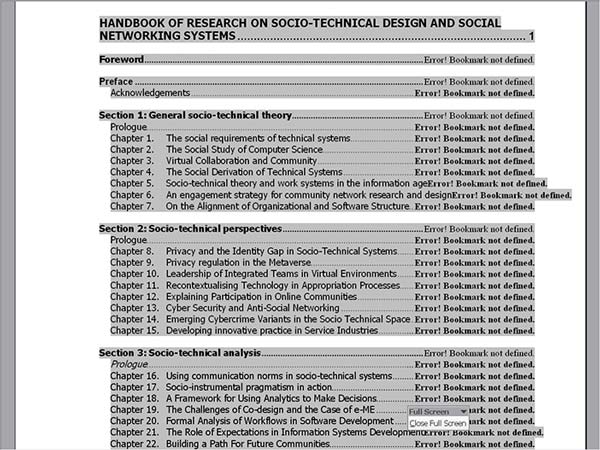

It is hard for selfish software to keep appropriately quiet; e.g. Word can generate a table of contents from a document's headings. However if one sends just the first chapter of a book to someone, with the book's table of contents (to show its scope), every table of contents heading line without a page number loudly declares: "ERROR! BOOKMARK NOT DEFINED", which of course completely spoils the sample document impression (Figure 4.4). Even worse, this effect is not apparent until the document is received. Why could the software not just quietly put a blank instead of a page number? Why announce its needs so rudely? What counts is not what the software needs but what the user needs, and in this case the user needs the software to be quiet.

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


Figure 4.4: A table of contents as emailed to a colleague (Word)

4.10 Impolite Computing

Impolite computing has a long history; e.g. spam fills inboxes with messages users do not want (Whitworth & Whitworth, 2004). Spam is impolite because it takes choice away from email users. Pop-up windows are also impolite, as they "hijack" the user's cursor or point of focus. They also take away user choices, so many browsers now prevent pop-ups. Impolite computer programs:

Use your computer's services. Software can use your hard drive to store information cookies, or your phone service to download data without asking.

Change your computer settings. Software can change browser home page, email preferences or file associations.

Spy on what you do online. Spyware, stealthware and software back doors can gather information from your computer without your knowledge, or record your mouse clicks as you surf the web, or even worse, give your private information to others.

For example, Microsoft's Windows XP Media Player was reported to quietly record the DVDs it played and use the user's computer's connection to "phone home", i.e. send data back to Microsoft (Technology threats to Privacy, 2002). Such problems differ from security threats, where hackers or viruses break in to damage information. This problem concerns those we invite into our information home, not those who break in.

A similar concern is "software bundling", where users choose to install one product but are forced to get many:

“When we downloaded the beta version of Triton [AOL's latest instant messenger software], we also got AOL Explorer – an Internet Explorer shell that opens full screen, to AOL's AIM Today home page when you launch the IM client – as well as Plaxo Helper, an application that ties in with the Plaxo social-networking service. Triton also installed two programs that ran silently in the background even after we quit AIM and AOL Explorer”

-- Larkin, 2005

Yahoo's "typical" installation of their IM also used to download their Search Toolbar, anti-spyware and anti-pop-up software, desktop and system tray shortcuts, as well as Yahoo Extras, which inserted Yahoo links on your browser, altered the user's home page and made auto-search functions point to Yahoo by default. Even Yahoo employee Jeremy Zawodny disliked this:

“I don't know which company started using this tactic, but it is becoming the standard procedure for lots of software out there. And it sucks. Leave my settings, preferences and desktop alone”

-- http://jeremy.zawodny.com/blog/archives/005121.html

Even today, many downloads require the user to opt-out rather than opt-in to offers. One must carefully check the checkboxes, lest one say something like: "Please change all my preferences" or "Please send me endless spam on your products".

A similar scheme is to use security updates to install new products, e.g.:

“Microsoft used the January 2007 security update to induce users to try Internet Explorer 7.0 whether they wanted to or not. But after discovering they had been involuntarily upgraded to the new browser, they next found that application incompatibility effectively cut them off from the Internet”

-- Pallatto, 2007

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


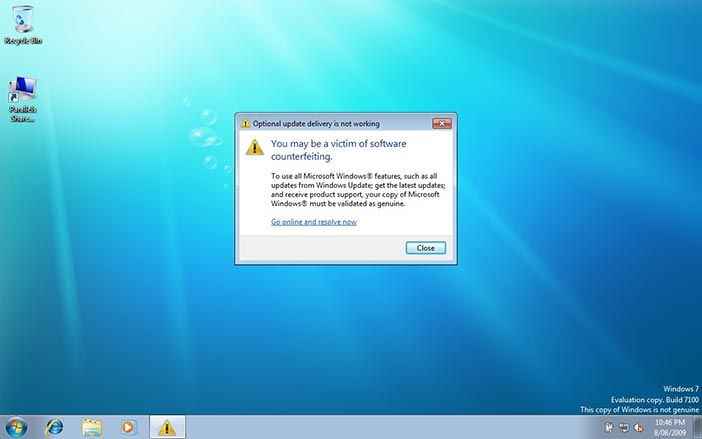

Figure 4.5: Windows Genuine Advantage nagware

After installing Windows security update KB971033, many Windows 7 owners got a nag screen saying that their copy of Windows was not genuine, even though it was (Figure 4.5). Clicking the screen gave an option to purchase Windows (again). The update was silently installed, i.e. even with a Never check for updates setting, Windows did check online and installed it anyway. Despite the legitimacy principle that my PC belongs to me, Windows users found that, under its End User License Agreement (EULA), Microsoft can unilaterally validate, alter or even shut down their software. The effect was to make legitimate users hack Windows with tools like RemoveWAT, suspect and delay future Windows updates, avoid Microsoft web sites in case they secretly modified their PC, and investigate more trustworthy options like Linux. An investigation found that while 22% of computers failed the test, less than 0.5% had pirated software (footnote 2). Disrespecting and annoying honest customers while trying to catch thieves has never been a good business policy.

Security cannot defend against people one invites in, especially if the offender is the security system itself. However, in a connected society social influence can be very powerful. In physical society the withering looks given to the impolite are not toothless, as what others think of you affects how they behave towards you. In traditional societies banishment was often considered worse than a death sentence. An online company with a reputation for riding roughshod over user rights may find this is not good for business.

4.10.1 Blameware

A fascinating psychological study could compare computer messages when things are going well to those when they are not. While computers seem delighted to be in charge in good times, when things go wrong software seems universally to agree that you have an error, not that we have an error. Brusque and incomprehensible error messages like "HTTP 404 – File not Found" suggest that you need to fix the problem you have clearly created. Although software itself often causes errors, some software designers recognize little obligation to give users the information the software has in a useful form, let alone suggest solutions. To "take the praise and pass the blame" is not polite computing. Polite software sees all interactions as involving "we". Indeed studies of users in human-computer tutorials show that users respond better to "Let's click the Enter button" than to "Click the Enter button" (Mayer et al., 2006). When there is a problem, software should try to help the user, not disown them.
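As a rough illustration of the "we" approach, the sketch below builds an error message that shares what the software knows and offers next steps instead of a bare code. It is only one possible shape for such a message: the function name `polite_error` and its parameters are assumptions made for this example, not part of any real library.

```python
from typing import Dict, List


def polite_error(problem: str, details: Dict[str, str], suggestions: List[str]) -> str:
    """Build a message that owns the problem, shares known facts and offers next steps."""
    lines = [f"We could not {problem}."]
    if details:
        # Pass on what the software already knows, in plain words.
        lines.append("What we know:")
        lines.extend(f"  - {label}: {value}" for label, value in details.items())
    if suggestions:
        # Offer choices rather than leaving the user to guess.
        lines.append("What we can try next:")
        lines.extend(f"  {number}. {step}" for number, step in enumerate(suggestions, 1))
    return "\n".join(lines)


message = polite_error(
    "find that page",
    {"Address requested": "/reports/2004"},
    ["Check the address for typing errors", "Search the site for the page title"],
)
print(message)
```

The point is not the wording itself but that the message is assembled from information the software already holds, which blameware keeps to itself.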

4.10.2 Pryware

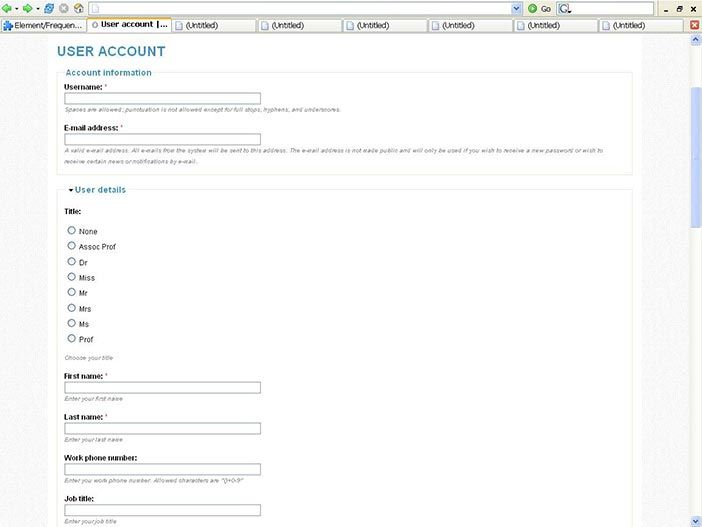

Pryware is software that asks for information it does not need for any reasonable purpose; e.g. Figure 4.6 shows a special interest site which wants people to register but for no obvious reason asks for their work phone number and job title. Some sites are even more intrusive, wanting to know your institution, work address and Skype number. Why? If such fields are mandatory rather than optional, people choose not to register, being generally unwilling to divulge data like home phone, cell phone and home address (Foreman & Whitworth, 2005). Equally, they may enter a bogus address like "123 Mystreet, Hometown, zip code 246". Polite software does not pry, because intruding on another's privacy is offensive.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported). Figure 4.6: Pryware asks for unneeded details


4.10.3 Nagware

If the same question is asked over and over, to get the same reply, this is to pester or nag, like the "Are we there yet?" of children on a car trip. It forces the other party to give the same reply again and again. Many users did not update to Windows Vista because of its reputation as nagware that asked too many questions. Polite people do not nag, but a lot of software does, e.g. when reviewing email offline in Windows XP, actions like using Explorer triggered a "Do you want to connect?" request every few minutes. No matter how often one said "No!" it kept asking, because it had no memory of its own past. Yet past software has already solved this problem, e.g. uploading a batch of files creates a series of "Overwrite Y/N?" questions, which would force the user to reply "Yes" repeatedly, but a "Yes to All" meta-choice remembers the reply for the whole choice set. Such choices about choices (meta-choices) are polite. A general meta-choice console (GMCC) would give users a common place to see or set meta-choices (Whitworth, 2005).
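The "Yes to All" idea can be sketched as a small memory of past answers. This is a minimal illustration only: the class name `MetaChoiceMemory` and the shape of the `prompt_user` callback (returning an answer plus a "remember this" flag) are assumptions for the example, and it is not the GMCC design itself.

```python
from typing import Callable, Dict, Tuple

# A prompt function returns (answer, remember_this_answer); hypothetical signature.
Prompt = Callable[[str], Tuple[str, bool]]


class MetaChoiceMemory:
    """Remember answers the user asked to have remembered, so they are not asked again."""

    def __init__(self) -> None:
        self._remembered: Dict[str, str] = {}

    def ask(self, question: str, prompt_user: Prompt) -> str:
        """Return the remembered answer if there is one, otherwise ask the user."""
        if question in self._remembered:
            return self._remembered[question]
        answer, remember = prompt_user(question)
        if remember:
            # The meta-choice: the user chose how future choices are handled.
            self._remembered[question] = answer
        return answer

    def remembered(self) -> Dict[str, str]:
        """Show the current meta-choices in one place, as a console might."""
        return dict(self._remembered)

    def forget(self, question: str) -> None:
        """Let the user take a meta-choice back at any time."""
        self._remembered.pop(question, None)
```

Under these assumptions, a "Do you want to connect?" request answered once with "No, and don't ask again" is never shown a second time, while `remembered()` and `forget()` keep the user in charge of what the software has memorised.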

4.10.4 Strikeware

Strikeware is software that executes a pre-emptive strike on user resources. An example is a zip extract product that, without asking, put all the files it extracted as icons on the desktop! Such software tends to be used only once. Installation programs are notorious for pre-emptive acts; e.g. the Real-One Player that added desktop icons and browser links, installed itself in the system tray and commandeered all video and sound file associations. Customers resent such invasions, which while not illegal are impolite. An installation program changing your PC settings is like furniture deliverers rearranging your house because they happen to be in it. Software upgrades continue the tradition; e.g. Internet Explorer upgrades that make MSN your browser home page without asking. Polite software does not do this.

4.10.5 Forgetware

Selfish software collects endless data on users but is oblivious to how the user sees it. Like Peter Sellers in the film "Being There", selfish software likes to watch but cannot itself relate to others. Someone should explain to programmers that spying on a user is not a relationship. Mr. Clippy watched the user's acts on the document, but could not see its own interaction with the user, so was oblivious to the rejection and scorn it evoked. Most software today is less aware of its users than an airport toilet. Software will make remembering user interactions its business when it considers the user, not itself.

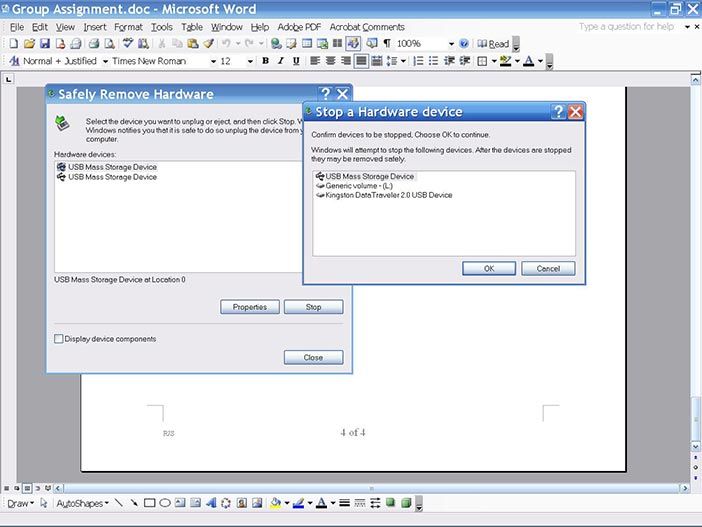

Copyright © Microsoft Windows. All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice. Figure 4.7: Windows XP eject USB interface


Working with forgetware makes the simplest interaction hard; e.g. Figure 4.7 shows the hoops users had to jump through to get a USB flash drive back from Windows XP. Imagine a human assistant that worked like this:

User: Hi Bill, please read this book for me (puts book in Bill's left hand).

Assistant: Bill has found a book in his hand!

User: OK, I want the book back now, Bill.

Assistant: Should I safely remove the book in my left or right hand?

User: The book in your left hand, Bill. The one I just gave you.

Assistant: Please confirm the book you mean by pointing to it, or the hand, or both, and say "OK". Then I will try to give it to you.

User: (points to left hand) OK!

Assistant: (left hand tries to give book but the right hand is holding it too) I can't give the book to you right now; try again later.

User: Give me the book back!

Assistant: Do you want me to safely remove the book in my left hand or my right hand …

User: (grabs book and walks away.)

A human helper who acted like this would be insolent. If the Windows software can discover a USB is busy after the user selects it, why not check it before? Is its time more important than that of the user? Most users of course just check the USB drive light and if it is not flashing pull it out, avoiding all the above.
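Checking before asking can be sketched as follows. This is an illustration under assumptions, not how Windows works internally: `Drive`, `removal_options` and `drive_to_offer` are hypothetical names, and the list of open files per drive is assumed to be supplied by the operating system.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Drive:
    """A removable drive and the files currently open on it (assumed to be known)."""
    label: str
    open_files: List[str] = field(default_factory=list)


def removal_options(drives: List[Drive]) -> Tuple[List[str], Dict[str, List[str]]]:
    """Split drives into those safe to remove now and those still busy, with the reason."""
    ready = [drive.label for drive in drives if not drive.open_files]
    busy = {drive.label: list(drive.open_files) for drive in drives if drive.open_files}
    return ready, busy


def drive_to_offer(drives: List[Drive]) -> Optional[str]:
    """If exactly one drive can be removed, return it so the user need not be asked which."""
    ready, _ = removal_options(drives)
    return ready[0] if len(ready) == 1 else None
```

Here the busy check happens before any menu is shown, so the user is only offered drives that can actually be removed and is told up front which files are holding the others.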

In a similar vein, a computer voice reports my phone messages like this:

"There are five new messages. The first message received at 12.15pm on Wednesday the 14th of November is "<hang-up click>" To save this message press 1, to forward it press 3, to reply to it press 5, ..., to delete it press 76."

Note: "76" was the actual delete message code number, even though it is probably the most used option, especially for hang up calls. Again, imagine a human secretary who felt the need to report every detail of a call before telling you that the caller just hung up.

4.11 The Wizard's Apprentice

The problem presented here, of computers taking over what they do not understand, is embodied in the story of the wizard who left his apprentice in charge of his laboratory. Thinking he knew what he was doing, the apprentice started to cast spells, but they soon got out of hand and only the wizard's return prevented total disaster. Smart software, like that apprentice, often acts on its own, causing things to get out of control, and leaving the user to pick up the pieces.

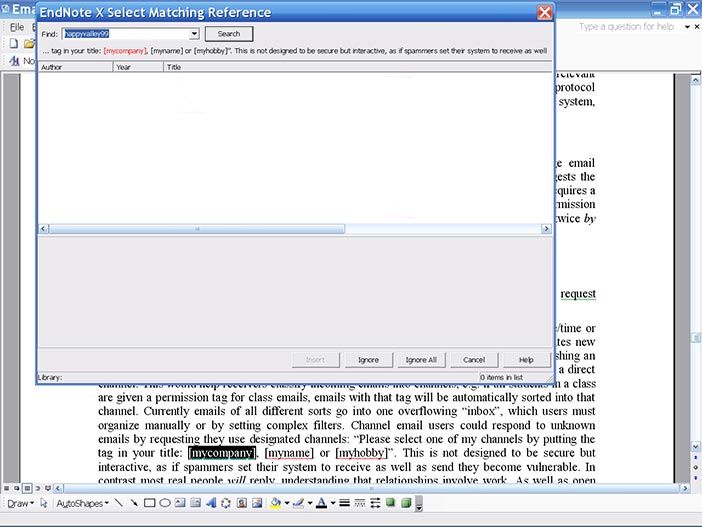

As software evolves it can get things wrong more often. For example, Endnote software manages citations in documents like this one by embedding links to a reference database. Endnote Version X ran itself whenever I opened the document, and if it found a problem took the focus from whatever I was doing to let me know right away (Figure 4.8). It used square brackets for citations, so assumed any square brackets in the document were for it, as little children assume any words spoken are to them. After I had told it every time to ignore some [ ] brackets I had in the document, it then closed, dropping the cursor at whatever point it was and leaving me to find my own way back to where I was when it had interrupted.

I could not turn this activation off, so editing my document on the computer at work meant that Endnote could not find its citation database. It complained, then handled the problem by clearing all the reference generating embedded links in the document! If I had carried on without noticing, all the citations would have had to be later re-entered. Yet if you wanted to clear the Endnote links, as when publishing the document, it had to be done manually.

Selfish software comes with everything but an off button. It takes no advice and preemptively changes your data without asking. I fixed the problem by uninstalling Endnote and installing Zotero.

Cooper compares smart software to a dancing bear: we clap not because it dances well, but because we are amazed it can dance at all (Cooper, 1999). We need to stop clapping.

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.


Figure 4.8: Endnote X takes charge

For many reasons, people should control computers, not the reverse. Firstly, computers manage vast amounts of data with ease but handle context changes poorly (Whitworth, 2008), so smart computing invariably needs a human minder. Secondly, computers are not accountable for what they do, as they have no "self" to bear any loss. If people are accountable for what computers do, they need control over computer choices. Thirdly, people will always resist computer domination. Software designers who underestimate the importance of user choice invite grass-roots rebellion. An Internet movement against software arrogance is not inconceivable.

Today many users are at war with their software: removing things they did not want added, resetting settings they did not want changed, closing windows they did not want opened and blocking e-mails they did not want to receive. User weapons in this war, such as third party blockers, cleaners, filters and tweakers, are the most frequently accessed items on Internet download sites. Their main aim is to put users back in charge of the computing estate they paid for. If software declares war on users, it will not win. If the Internet becomes a battlefield, no-one will go there. The solution is to give choices, not take them, i.e. polite computing.

The future of computing lies not in it becoming so clever that people are obsolete but in a human-computer combination that performs better than people or computers alone. The runaway IT successes of the last decade (cell-phones, Internet, e-mail, chat, bulletin boards etc.) all support people rather than supplant them. As computers develop this co-participant role, politeness is a critical success factor.

4.12 The Polite Solution

For modern software, often downloaded from the web, users choose to use it or not; e.g. President Bush in 2001 chose not to use e-mail because he did not trust it. The days when software can hold users hostage to its power are gone.

Successful online traders find politeness profitable. EBay's customer reputation feedback gives users optional access to valued information relevant to their purchase choice, which by the previous definition is polite. Amazon gives customers information on the books similar buyers buy, not by pop-up ads but as a view option below. Rather than a demand to buy, it is a polite reminder of same-time purchases that could save customer postage. Politeness is not about forcing users to buy, which is anti-social, but about improving the seller-customer relationship, which is social. Polite companies win business because customers given choices come back. Perhaps one reason the Google search engine swept all before it was that its simple white interface, without annoying flashing or pop-up ads, made it pleasant to interact with. Google ads sit quietly at screen right, as options not demands. Yet while many online companies know that politeness pays, for others, hit-and-run rudeness remains an online way of life.

Wikipedia is an example of a new generation of polite software. It does not act pre-emptively but lets its users choose, it is visible in what it does, it makes user actions like editing easy rather than throwing up conditions, it remembers each person personally, and it responds to user direction rather than trying to foist preconceived good knowledge conditions on users. Social computing features like post-checks (allowing an act and then checking it later), versioning and rollback, tag clouds, optional registration, reviewer reputations, view filters and social networks illustrate how polite computing gives choices to people. In this movement from software autocracy to democracy, it pays to be polite because polite software is used more and deleted less.

4.13 A New Dimension

Polite computing is a new dimension of social computing. It requires a software design attitude change, e.g. to stop seeing users as little children unable to exercise choice. Inexperienced users may let software take charge, but experienced users want to make their own choices. The view that "software knows best" does not work for computer-literate users. If once users were child-like, today they are grown up.

Software has to stop trying to go it alone. Too clever software acting beyond its ability is already turning core applications like Word into a magic world, where moved figures jump about or even disappear entirely, resized table column widths reset themselves and moving text gives an entirely new format. Increasingly only Ctrl-Z (Undo) saves the day, rescuing us from clever software errors. Software that acts beyond its ability thinks its role is to lead, when really it is to assist.

Rather than using complicated Bayesian logic to predict users, why not simply follow the user's lead? I repeatedly change Word's numbered paragraph default indents to my preferences, but it never remembers. How hard is it to copy what the boss does? It always knows better, e.g. if I ungroup and regroup a figure it takes the opportunity to reset my text wrap-around options to its defaults, so now my picture overlaps the text again! Software should leverage user knowledge, not ignore it.

Polite software does not act unilaterally, is visible, does not interrupt, offers understandable choices, remembers the past, and responds to user direction. Impolite software acts without asking, works in secret, interrupts unnecessarily, confuses users, has interaction amnesia, and repeatedly ignores user corrections. It is not hard to figure what software type users prefer.

Social software requirements should be taught in system design along with engineering requirements. A "politeness seal" could mark applications that give rather than take user choice, to encourage this. The Internet will only realize its social potential when software is polite as well as useful and usable.

4.14 Discussion Questions

The following questions are designed to encourage thinking on the chapter and exploring socio-technical cases from the Internet. If you are reading this chapter in a class, whether at a university or in a commercial course, the questions might be discussed in class first, and then students can choose questions to research in pairs and report back to the next class.

Describe three examples where software interacts as if it were a social agent. Cover cases where it asks questions, makes suggestions, seeks attention, reports problems, and offers choices.

What is selfishness in ordinary human terms? What is selfishness in computer software terms? Give five examples of selfish software in order of the most annoying first. Explain why it is annoying.

What is a social computing error? How does it differ from an HCI error, or a software error? Take one online situation and give examples of all three types of error. Compare the effects of each type of error.

What is politeness in human terms? Why does it occur? What is polite computing? Why should it occur? List the ways it can help computing.

What is the difference between politeness and legitimacy in a society? Illustrate by examples, first from physical society and then give an equivalent online version.

Compare criminal, legitimate and polite social interactions with respect to the degree of choice given to the other party. Give offline and online examples for each case.

Should any polite computing issues be left until all security issues are solved? Explain, with physical and online examples.

What is a social agent? Give three common examples of people acting as social agents in physical society. Find similar cases online. Explain how the same expectations apply.

Is politeness niceness? Do polite people always agree with others? From online discussion boards, quote people disagreeing politely and agreeing impolitely with another person.

Explain the difference between politeness and etiquette. As different cultures are polite in different ways, e.g. shaking hands vs. bowing, how can politeness be a general design requirement? What does it mean to say that politeness must be "reinvented" for each application design case?

Define politeness in general information terms. By this definition, is it always polite to let the other party talk first in a conversation? Is it always polite to let them finish their sentence? If not, give examples. When, exactly, is it a bad idea for software to give users choices?

For each of the five aspects of polite computing, give examples from your own experience of impolite computing. What was your reaction in each case?

Find examples of impolite software installations. Analyze the choices the user has. Recommend improvements.

List the background software processes running on your computer. Identify the ones where you know what they do and what application runs them. Do the same for your startup applications and system files. Ask three friends to do the same. How transparent is this system? Why might you want to disable a process, turn off a startup, or delete a system file? Should you be allowed to?

Discuss the role of choice dependencies in system installations. Illustrate the problems of: 1) being forced to install what is not needed and 2) being allowed to choose to not install what is.

Find an example of a software update that caused a fault; e.g. update Windows only to find that your Skype microphone does not work. Whose fault is this? How can software avoid the user upset this causes? Hint: Consider things like an update undo, modularizing update changes and separating essential from optional updates.

Give five online examples of software amnesia and five examples of software that remembers what you did last. Why is the latter better?

Find examples of registration pryware that asks for data such as home address that it does not really need. If the pry fields are not optional, what happens if you add bogus data? What is the effect on your willingness to register? Why might you register online after installing software?

Give three examples of nagware – software on a timer that keeps interrupting to ask the same question. In each case, explain how you can turn it off. Give an example of when such nagging might be justified.

Why is it in general not a good idea for applications to take charge? Illustrate with three famous examples where software took charge and got it wrong.

Find three examples of "too clever" software that routinely causes problems for users. Recommend ways to design software to avoid this. Hint: consider asking first, a contextual turn off option and software that can be trained.

What data drove Mr. Clippy's Bayesian logic decisions? What data was left out, so that users found him rude? Why did Mr. Clippy not recognize rejection? Which users liked Mr. Clippy? Turn on the auto-correct in Word and try writing the equation: i = 1. Why does Word change it? How can you stop this, without turning off auto-correct? Find other examples of smart software taking charge.