Human-computer interaction (HCI) is an area of research and practice that emerged in the early 1980s, initially as a specialty area in computer science embracing cognitive science and human factors engineering. HCI has expanded rapidly and steadily for three decades, attracting professionals from many other disciplines and incorporating diverse concepts and approaches. To a considerable extent, HCI now aggregates a collection of semi-autonomous fields of research and practice in human-centered informatics. However, the continuing synthesis of disparate conceptions and approaches to science and practice in HCI has produced a dramatic example of how different epistemologies and paradigms can be reconciled and integrated in a vibrant and productive intellectual project.

2.1 Where HCI came from

Until the late 1970s, the only humans who interacted with computers were information technology professionals and dedicated hobbyists. This changed disruptively with the emergence of personal computing in the later 1970s. Personal computing, including both personal software (productivity applications, such as text editors and spreadsheets, and interactive computer games) and personal computer platforms (operating systems, programming languages, and hardware), made everyone in the world a potential computer user, and vividly highlighted the deficiencies of computers with respect to usability for those who wanted to use computers as tools.

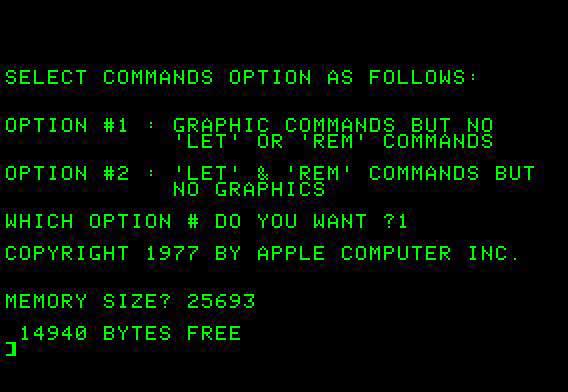

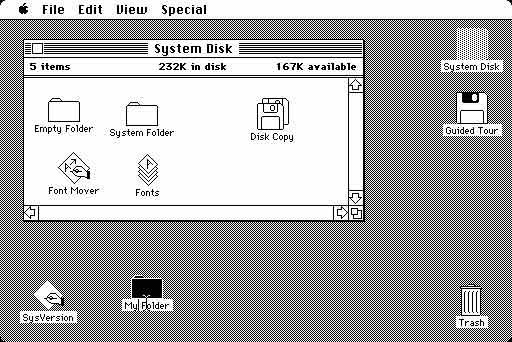

Author/Copyright holder: Steven Weyhrich. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

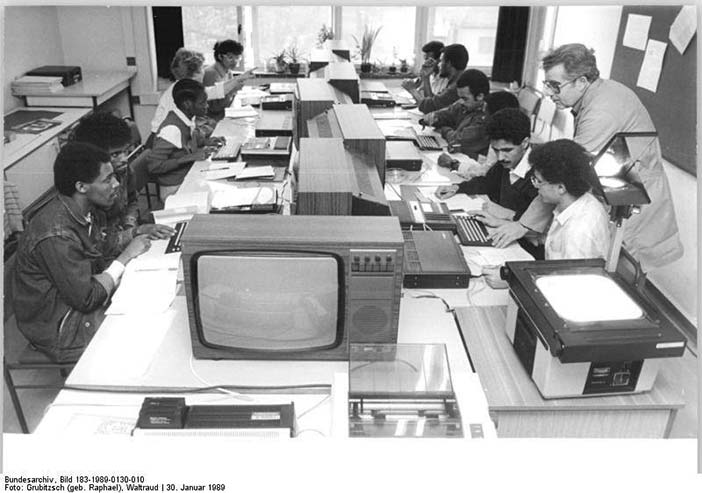

Author/Copyright holder: Courtesy of Grubitzsch (geb. Raphael), Waltraud. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0)

Figure 2.1 A-B: Personal computing rapidly pushed computer use into the general population, starting in the later 1970s. However, the non-professional computer user was often subjected to arcane commands and system dialogs.

The challenge of personal computing became manifest at an opportune time. The broad project of cognitive science, which incorporated cognitive psychology, artificial intelligence, linguistics, cognitive anthropology, and the philosophy of mind, had formed at the end of the 1970s. Part of the programme of cognitive science was to articulate systematic and scientifically informed applications to be known as "cognitive engineering". Thus, at just the point when personal computing presented the practical need for HCI, cognitive science presented people, concepts, skills, and a vision for addressing such needs through an ambitious synthesis of science and engineering. HCI was one of the first examples of cognitive engineering.

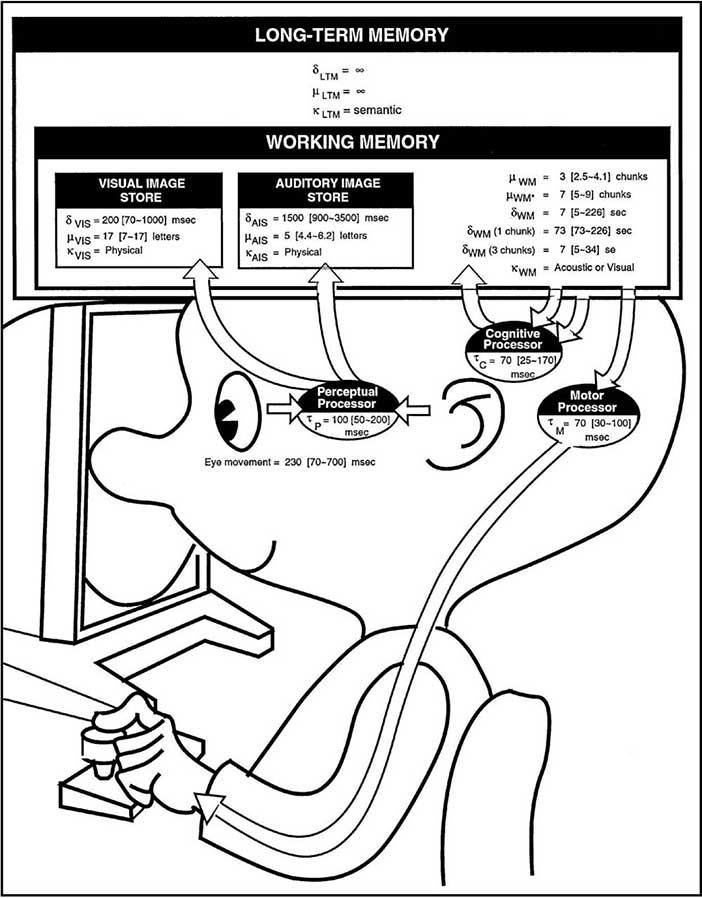

Author/Copyright holder: Card, Moran and Newell. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 2.2: The Model Human Processor was an early cognitive engineering model intended to help developers apply principles from cognitive psychology.

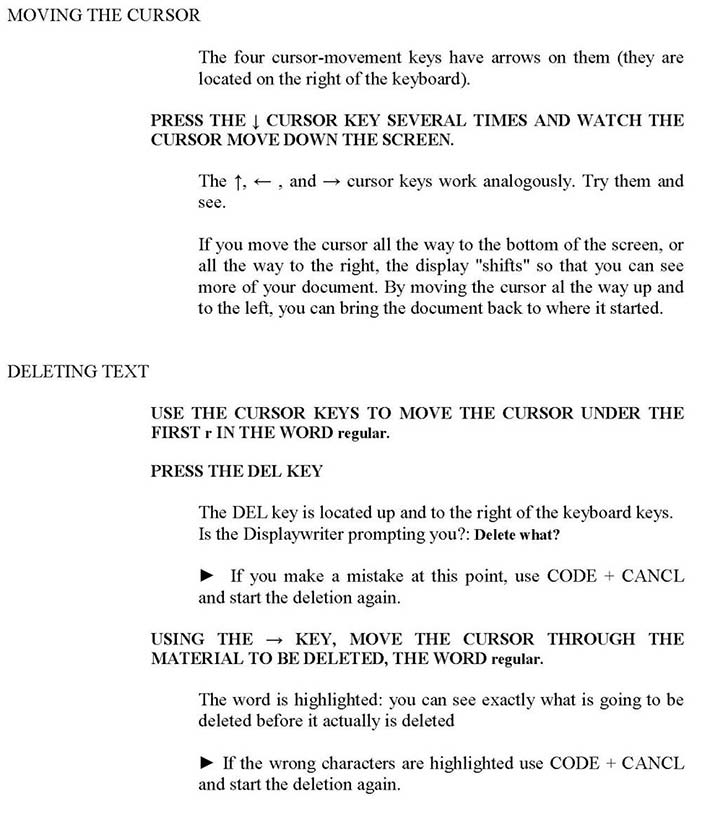

This was facilitated by analogous developments in engineering and design areas adjacent to HCI, and in fact often overlapping HCI, notably human factors engineering and documentation development. Human factors had developed empirical and task-analytic techniques for evaluating human-system interactions in domains such as aviation and manufacturing, and was moving to address interactive system contexts in which human operators regularly exerted greater problem-solving discretion. Documentation development was moving beyond its traditional role of producing systematic technical descriptions toward a cognitive approach incorporating theories of writing, reading, and media, with empirical user testing. Documents and other information needed to be usable also.

Author/Copyright holder: MIT Press. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 2.3: Minimalist information emphasized supporting goal-directed activity in a domain. Instead of topic hierarchies and structured practice, it emphasized succinct support for self-directed action and for recognizing and recovering from error.

Other historically fortuitous developments contributed to the establishment of HCI. Software engineering, mired in unmanageable software complexity in the 1970s (the “software crisis”), was starting to focus on nonfunctional requirements, including usability and maintainability, and on empirical software development processes that relied heavily on iterative prototyping and empirical testing. Computer graphics and information retrieval had emerged in the 1970s, and rapidly came to recognize that interactive systems were the key to progressing beyond early achievements. All these threads of development in computer science pointed to the same conclusion: The way forward for computing entailed understanding and better empowering users. These diverse forces of need and opportunity converged around 1980, focusing a huge burst of human energy, and creating a highly visible interdisciplinary project.

2.2 From cabal to community

The original and abiding technical focus of HCI was and is the concept of usability. This concept was originally articulated somewhat naively in the slogan "easy to learn, easy to use". The blunt simplicity of this conceptualization gave HCI an edgy and prominent identity in computing. It served to hold the field together, and to help it influence computer science and technology development more broadly and effectively. However, inside HCI the concept of usability has been re-articulated and reconstructed almost continually, and has become increasingly rich and intriguingly problematic. Usability now often subsumes qualities like fun, well-being, collective efficacy, aesthetic tension, enhanced creativity, flow, support for human development, and others. A more dynamic view of usability is one of a programmatic objective that should and will continue to develop as our ability to reach further toward it improves.

Author/Copyright holder: ©. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 2.4: Usability is an emergent quality that reflects the grasp and the reach of HCI. Contemporary users want more from a system than merely “ease of use”.

Although the original academic home for HCI was computer science, and its original focus was on personal productivity applications, mainly text editing and spreadsheets, the field has constantly diversified and outgrown all boundaries. It quickly expanded to encompass visualization, information systems, collaborative systems, the system development process, and many areas of design. HCI is taught now in many departments/faculties that address information technology, including psychology, design, communication studies, cognitive science, information science, science and technology studies, geographical sciences, management information systems, and industrial, manufacturing, and systems engineering. HCI research and practice draw upon and integrate all of these perspectives.

A result of this growth is that HCI is now less singularly focused with respect to core concepts and methods, problem areas and assumptions about infrastructures, applications, and types of users. Indeed, it no longer makes sense to regard HCI as a specialty of computer science; HCI has grown to be broader, larger and much more diverse than computer science itself. HCI expanded from its initial focus on individual and generic user behavior to include social and organizational computing, accessibility for the elderly, for the cognitively and physically impaired, and for all people, and the widest possible spectrum of human experiences and activities. It expanded from desktop office applications to include games, learning and education, commerce, health and medical applications, emergency planning and response, and systems to support collaboration and community. It expanded from early graphical user interfaces to include myriad interaction techniques and devices, multi-modal interactions, tool support for model-based user interface specification, and a host of emerging ubiquitous, handheld and context-aware interactions.

There is no unified concept of an HCI professional. In the 1980s, the cognitive science side of HCI was sometimes contrasted with the software tools and user interface side of HCI. The landscape of core HCI concepts and skills is far more differentiated and complex now. HCI academic programs train many different types of professionals: user experience designers, interaction designers, user interface designers, application designers, usability engineers, user interface developers, application developers, technical communicators/online information designers, and more. And indeed, many of the sub-communities of HCI are themselves quite diverse. For example, ubiquitous computing (aka ubicomp) is a subarea of HCI, but it is also a superordinate area integrating several distinguishable subareas, for example mobile computing, geo-spatial information systems, in-vehicle systems, community informatics, distributed systems, handhelds, wearable devices, ambient intelligence, sensor networks, and specialized views of usability evaluation, programming tools and techniques, and application infrastructures. The relationship between ubiquitous computing and HCI is paradigmatic: HCI is the name for a community of communities.

Author/Copyright holder: User Experience Professionals Association. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

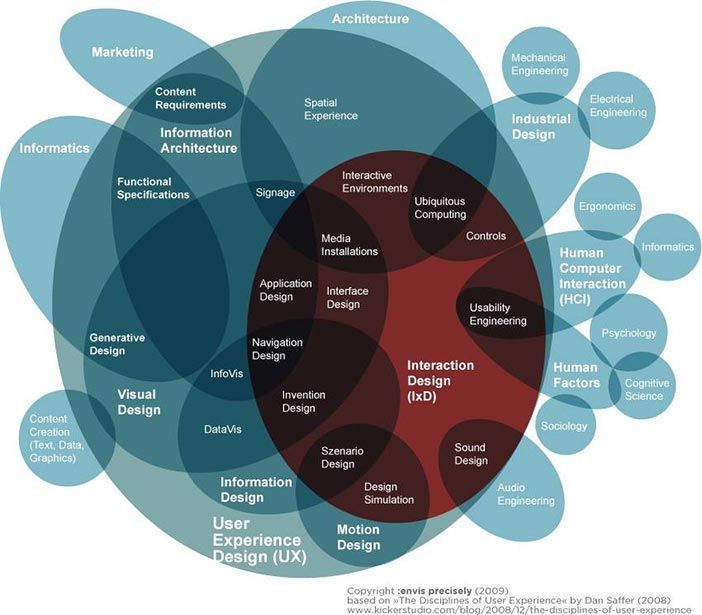

Author/Copyright holder: Envis Precisely. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 2.5 A-B: Two visualizations of the variety of disciplinary knowledge and skills involved in contemporary design of human-computer interactions

Indeed, the principle that HCI is a community of communities is now a point of definition codified, for example, in the organization of major HCI conferences and journals. The integrating element across HCI communities continues to be a close linkage of critical analysis of usability, broadly understood, with development of novel technology and applications. This is the defining identity commitment of the HCI community. It has allowed HCI to successfully cultivate respect for the diversity of skills and concepts that underlie innovative technology development, and to regularly transcend disciplinary obstacles. In the early 1980s, HCI was a small and focused specialty area. It was a cabal trying to establish what was then a heretical view of computing. Today, HCI is a vast and multifaceted community, bound by the evolving concept of usability, and the integrating commitment to value human activity and experience as the primary driver in technology.

2.3 Beyond the desktop

Given the contemporary shape of HCI, it is important to remember that its origins are personal productivity interactions bound to the desktop, such as word processing and spreadsheets. Indeed, one of the biggest design ideas of the early 1980s was the so-called messy desk metaphor, popularized by the Apple Macintosh: Files and folders were displayed as icons that could be, and were, scattered around the display surface. The messy desktop was a perfect incubator for the developing paradigm of graphical user interfaces. Perhaps it wasn’t quite as easy to learn and easy to use as claimed, but people everywhere were soon double-clicking, dragging windows and icons around their displays, and losing track of things on their desktop interfaces just as they did on their physical desktops. It was surely a stark contrast to the immediately prior teletype metaphor of Unix, in which all interactions were accomplished by typing commands.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 2.6: The early Macintosh desktop metaphor: Icons scattered on the desktop depict documents and functions, which can be selected and accessed (as System Disk in the example)

Even though it can definitely be argued that the desktop metaphor was superficial, or perhaps under-exploited as a design paradigm, it captured the imaginations of designers and the public. These were new possibilities for many people in 1980, and pundits speculated about how they might change office work. Indeed, the tsunami of desktop designs challenged, and sometimes threatened, the expertise and work practices of office workers. Today they are in the cultural background. Children learn these concepts and skills routinely.

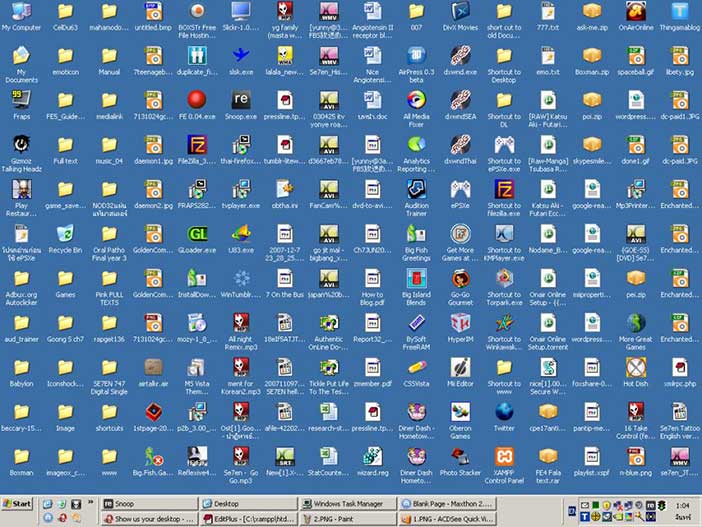

As HCI developed, it moved beyond the desktop in three distinct senses. First, the desktop metaphor proved to be more limited than it first seemed. It’s fine to directly represent a couple dozen digital objects as icons, but this approach quickly leads to clutter, and is not very useful for people with thousands of personal files and folders. Through the mid-1990s, HCI professionals and everyone else realized that search is a more fundamental paradigm than browsing for finding things in a user interface. Ironically though, when early World Wide Web pages emerged in the mid-1990s, they not only dropped the messy desktop metaphor, but for the most part dropped graphical interactions entirely. And still they were seen as a breakthrough in usability (of course, the direct contrast was to Unix-style tools like ftp and telnet). The design approach of displaying and directly interacting with data objects as icons has not disappeared, but it is no longer a hegemonic design concept.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 2.7: Despite their early popularity, messy desktops do not scale well as personal information spaces.

The second sense in which HCI moved beyond the desktop was through the growing influence of the Internet on computing and on society. Starting in the mid-1980s, email emerged as one of the most important HCI applications, but ironically, email made computers and networks into communication channels; people were not interacting with computers, they were interacting with other people through computers. Tools and applications to support collaborative activity now include instant messaging, wikis, blogs, online forums, social networking, social bookmarking and tagging services, media spaces and other collaborative workspaces, recommender and collaborative filtering systems, and a wide variety of online groups and communities. New paradigms and mechanisms for collective activity have emerged including online auctions, reputation systems, soft sensors, and crowd sourcing. This area of HCI, now often called social computing, is one of the most rapidly developing.

Author/Copyright holder: ©. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Author/Copyright holder: Courtesy of Larry Ewing. Copyright terms and licence: CC-Att-3 (Creative Commons Attribution 3.0 Unported).

Author/Copyright holder: GitHub Inc. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Figure 2.8 A-B-C: A huge and expanding variety of social network services are part of everyday computing experiences for many people. Online communities, such as Linux communities and GitHub, employ social computing to produce high-quality knowledge work.

The third way that HCI moved beyond the desktop was through the continual, and occasionally explosive, diversification in the ecology of computing devices. Before desktop applications were consolidated, new kinds of device contexts emerged, notably laptops, which began to appear in the early 1980s, and handhelds, which began to appear in the mid-1980s. One frontier today is ubiquitous computing: the pervasive incorporation of computing into human habitats such as cars, home appliances, furniture, and clothing. Desktop computing is still very important, though the desktop habitat has been transformed by the wide use of laptops. To a considerable extent, the desktop itself has moved off the desktop.

Author/Copyright holder: Courtesy of Andrew Stern. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0)

Author/Copyright holder: Courtesy of United States Federal Government. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 2.9 A-B-C: Computing moved off the desktop to be everywhere all the time. Computers are in phones, cars, meeting rooms, and coffee shops.

The focus of HCI has moved beyond the desktop, and its focus will continue to move. HCI is a technology area, and it is ineluctably driven to frontiers of technology and application possibility. The special value and contribution of HCI is that it will investigate, develop, and harness those new areas of possibility not merely as technologies or designs, but as means for enhancing human activity and experience.

2.4 The task-artifact cycle

The movement of HCI off the desktop is a large-scale example of a pattern of technology development that is replicated throughout HCI at many levels of analysis. HCI addresses the dynamic co-evolution of the activities people engage in and experience, and the artifacts — such as interactive tools and environments — that mediate those activities. HCI is about understanding and critically evaluating the interactive technologies people use and experience. But it is also about how those interactions evolve as people appropriate technologies, as their expectations, concepts and skills develop, and as they articulate new needs, new interests, and new visions and agendas for interactive technology.

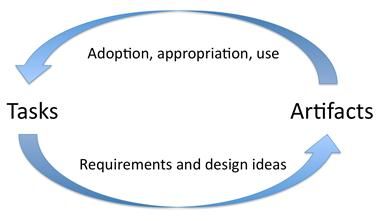

Reciprocally, HCI is about understanding contemporary human practices and aspirations, including how those activities are embodied and elaborated, but also perhaps limited, by current infrastructures and tools. HCI is about understanding practices and activity specifically as requirements and design possibilities for envisioning and bringing into being new technology, new tools and environments. It is about exploring design spaces, and realizing new systems and devices through the co-evolution of activity and artifacts, the task-artifact cycle.

Author/Copyright holder: Courtesy of John M. Carroll. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0)

Figure 2.10: Human activities implicitly articulate needs, preferences and design visions. Artifacts are designed in response, but inevitably do more than merely respond. Through the course of their adoption and appropriation, new designs provide new possibilities for action and interaction. Ultimately, this activity articulates further human needs, preferences, and design visions.

Understanding HCI as inscribed in a co-evolution of activity and technological artifacts is useful. Most simply, it reminds us what HCI is like: all of the infrastructure of HCI, including its concepts, methods, focal problems, and stirring successes, will always be in flux. Moreover, because the co-evolution of activity and artifacts is shaped by a cascade of contingent initiatives across a diverse collection of actors, there is no reason to expect HCI to be convergent, or predictable. This is not to say progress in HCI is random or arbitrary, just that it is more like world history than it is like physics. One could see this quite optimistically: Individual and collective initiative, not the laws of physics, shapes what HCI is.

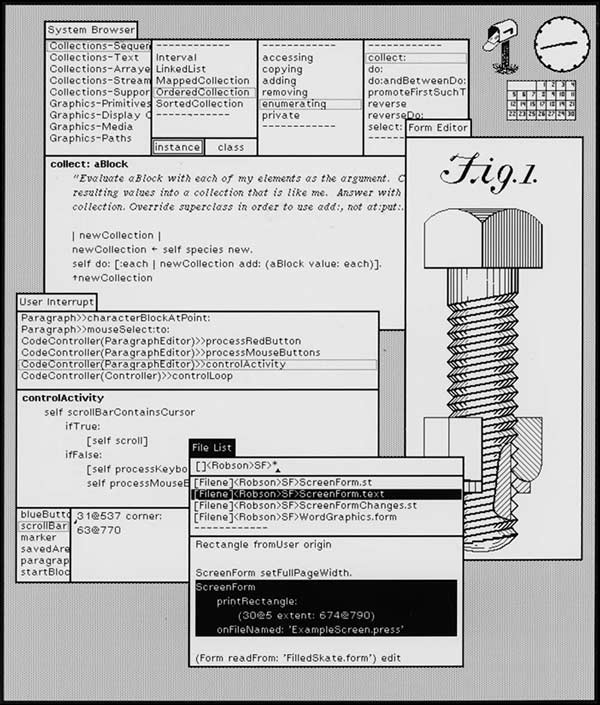

Author/Copyright holder: Palo Alto Research Center Incorporated (PARC) - a Xerox company. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 2.11: Smalltalk was a programming language and environment project in Xerox Palo Alto Research Center in the 1970s. The work of a handful of people, it became the direct antecedent for the modern graphical user interface.

A second implication of the task-artifact cycle is that continual exploration of new applications and application domains, new designs and design paradigms, new experiences, and new activities should remain highly prized in HCI. We may have the sense that we know where we are going today, but given the apparent rate of co-evolution in activity and artifacts, our effective look-ahead is probably less than we think. Moreover, since we are in effect constructing a future trajectory, and not just finding it, the cost of missteps is high. The co-evolution of activity and artifacts evidences strong hysteresis, that is to say, effects of past co-evolutionary adjustments persist far into the future. For example, many people struggle every day with operating systems and core productivity applications whose designs were evolutionary reactions to misanalyses from two or more decades ago. Of course, it is impossible to always be right with respect to values and criteria that will emerge and coalesce in the future, but we should at least be mindful that very consequential missteps are possible.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 2.12 A-B: The Drift Table is an interactive coffee table; aerial views of England and Wales are displayed in the porthole on top; placing and moving objects on the table causes the aerial imagery to scroll. This design is intended to provoke reaction and challenge thinking about domestic technologies.

The remedy is to consider many alternatives at every point in the progression. It is vitally important to have lots of work exploring possible experiences and activities, for example, on design and experience probes and prototypes. If we focus too strongly on the affordances of currently embodied technology we are too easily and uncritically accepting constraints that will limit contemporary HCI as well as all future trajectories.

Author/Copyright holder: Apple Computer, Inc. Copyright terms and licence: All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice.

Author/Copyright holder: Courtesy of Antonio Zugaldia. Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Figure 2.13 A-B: Siri, the speech-based intelligent assistant for Apple’s iPhone, and the augmented reality glasses of Google’s Project Glass are recent examples of technology visions being turned into everyday HCI experiences.

HCI is not fundamentally about the laws of nature. Rather, it manages innovation to ensure that human values and human priorities are advanced, and not diminished through new technology. This is what created HCI; this is what led HCI off the desktop; it will continue to lead HCI to new regions of technology-mediated human possibility. This is why usability is an open-ended concept, and can never be reduced to a fixed checklist.

2.5 A caldron of theory

The contingent trajectory of HCI as a project in transforming human activity and experience through design has nonetheless remained closely integrated with the application and development of theory in the social and cognitive sciences. Even though, and to some extent because, the technologies and human activities at issue in HCI are continually co-evolving, the domain has served as a laboratory and incubator for theory. The origin of HCI as an early case study in cognitive engineering had an imprinting effect on the character of the endeavor. From the very start, the models, theories and frameworks developed and used in HCI were pursued as contributions to science: HCI has enriched every theory it has appropriated. For example, the GOMS (Goals, Operators, Methods, Selection rules) model, the earliest native theory in HCI, was a more comprehensive cognitive model than had been attempted elsewhere in cognitive science and engineering; the model human processor included simple aspects of perception, attention, short-term memory operations, planning, and motor behavior in a single model. But GOMS was also a practical tool, articulating the dual criteria of scientific contribution plus engineering and design efficacy that has become the culture of theory and application in HCI.
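To make the idea of GOMS as a practical tool more concrete, the following is a minimal sketch of the Keystroke-Level Model (KLM), a simplified member of the GOMS family that predicts expert task time by summing the durations of primitive operators. The operator durations are commonly cited approximate values and should be read as illustrative assumptions; the function name, the string encoding of operators, and the example task are hypothetical and do not come from this chapter.

```python
# Approximate KLM operator durations in seconds. These are commonly cited
# textbook values, used here as illustrative assumptions only.
OPERATOR_SECONDS = {
    "K": 0.28,  # press a key or button (average typist, roughly 40 wpm)
    "P": 1.10,  # point with a mouse to a target on the display
    "H": 0.40,  # move a hand between keyboard and mouse ("homing")
    "M": 1.35,  # mental preparation before an action
}


def predict_task_time(operators: str) -> float:
    """Sum operator durations for a sequence such as 'MHPK'."""
    total = 0.0
    for op in operators:
        if op not in OPERATOR_SECONDS:
            raise ValueError(f"unknown KLM operator: {op!r}")
        total += OPERATOR_SECONDS[op]
    return total


# Hypothetical task: think, reach for the mouse, point at a menu, click,
# think again, point at a menu item, click.
menu_selection = "MHPKMPK"
print(f"predicted expert time: {predict_task_time(menu_selection):.2f} s")
```

The point of the sketch is the dual character described above: the operator table encodes a small psychological theory of the user, while the summation turns that theory into a design-time estimate that can be used to compare alternative interface designs.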

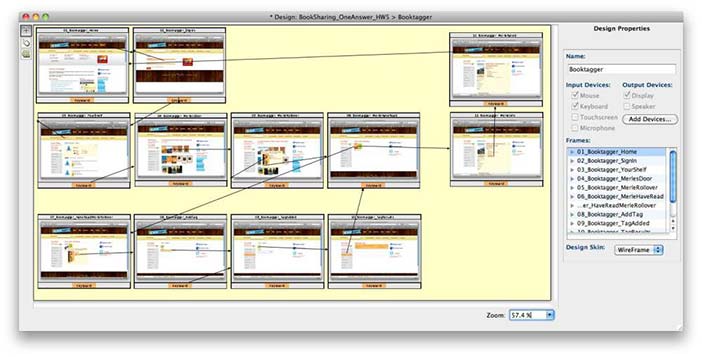

Author/Copyright holder: Bonnie E. John. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Bonnie E. John. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 2.14 A-B: CogTool analyzes demonstrations of user tasks to produce a model of the cognitive processes underlying task performance; from this model it predicts expert performance times for the tasks.

The focus of theory development and application has moved throughout the history of HCI, as the focus of the co-evolution of activities and artifacts has moved. Thus, the early information processing-based psychological theories, like GOMS, were employed to model the cognition and behavior of individuals interacting with keyboards, simple displays, and pointing devices. This initial conception of HCI theory was broadened as interactions became more varied and applications became richer. For example, perceptual theories were marshaled to explain how objects are recognized in a graphical display, mental model theories were appropriated to explain the role of concepts (like the messy desktop metaphor) in shaping interactions, and active user theories were developed to explain how and why users learn and make sense of interactions. In each case, however, these elaborations were both scientific advances and bases for better tools and design practices.

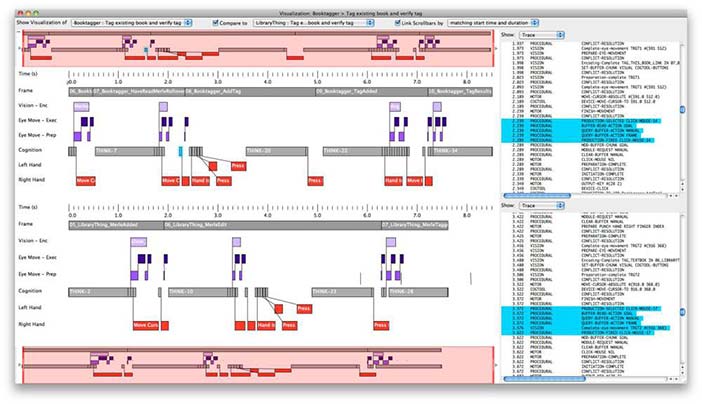

This dialectic of theory and application has continued in HCI. It is easy to identify a dozen or so major currents of theory, which themselves can be grouped (roughly) into three eras: theories that view human-computer interaction as information processing, theories that view interaction as the initiative of agents pursuing projects, and theories that view interaction as socially and materially embedded in rich contexts. To some extent, the sequence of theories can be understood as a convergence of scientific opportunity and application need: Codifying and using relatively austere models made it clear what richer views of people and interaction could be articulated and what they could contribute; at the same time, personal devices became portals for interaction in the social and physical world, requiring richer theoretical frameworks for analysis and design.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 2.15: Through the past three decades, a series of theoretical paradigms emerged to address the expanding ambitions of HCI research, design, and product development. Successive theories both challenged and enriched prior conceptions of people and interaction. All of these theories are still relevant and still in use today in HCI.

The sequence of theories and eras is of course somewhat idealized. People still work on GOMS models; indeed, all of the major models, theories and frameworks that were ever employed in HCI are still in current use. Moreover, they continue to develop as the context of the field develops. GOMS today is more a niche model than a paradigm for HCI, but has recently been applied in research on smart phone designs and human-robot interactions.

The challenge of integrating, or at least better coordinating, descriptive and explanatory science goals with prescriptive and constructive design goals is abiding in HCI. There are at least three ongoing directions: traditional application of ever-broader and deeper basic theories, development of local, sometimes domain-dependent proto-theories within particular design domains, and the use of design rationale as a mediating level of description between basic science and design practice.

2.6 Implications of HCI for science, practice, and epistemology

One of the most significant achievements of HCI is its evolving model of the integration of research and practice. Initially this model was articulated as a reciprocal relation between cognitive science and cognitive engineering. Later, it ambitiously incorporated a diverse science foundation, notably social and organizational psychology, Activity Theory, distributed cognition, and sociology, and ethnographic approaches to human activity, including the activities of design and technology development and appropriation. Currently, the model is incorporating design practices and research across a broad spectrum, for example, theorizing user experience and ecological sustainability. In these developments, HCI provides a blueprint for a mutual relation between science and practice that is unprecedented.

Although HCI was always talked about as a design science or as pursuing guidance for designers, this was construed at first as a boundary, with HCI research and design as separate contributing areas of professional expertise. Throughout the 1990s, however, HCI directly assimilated, and eventually itself spawned, a series of design communities. At first, this was a merely ecumenical acceptance of methods and techniques lying beyond those of science and engineering. But this outreach impulse coincided with substantial advances in user interface technologies that shifted much of the potential proprietary value of user interfaces into graphical design and much richer ontologies of user experience.

Somewhat ironically, designers were welcomed into the HCI community just in time to help remake it as a design discipline. A large part of this transformation was the creation of design disciplines and issues that did not exist before. For example, user experience design and interaction design were not imported into HCI, but rather were among the first exports from HCI to the design world. Similarly, analysis of the productive tensions between creativity and rationale in design required a design field like HCI in which it is essential that designs have an internal logic, and can be systematically evaluated and maintained, yet at the same time provoke new experiences and insights. Design is currently the facet of HCI in most rapid flux. It seems likely that more new design proto-disciplines will emerge from HCI during the next decade.

No one can accuse HCI of resting on laurels. Conceptions of how underlying science informs and is informed by the worlds of practice and activity have evolved continually in HCI since its inception. Throughout the development of HCI, paradigm-changing scientific and epistemological revisions were deliberately embraced by a field that was, by any measure, succeeding intellectually and practically. The result has been an increasingly fragmented and complex field that has continued to succeed even more. This example contradicts the Kuhnian view of how intellectual projects develop through paradigms that are eventually overthrown. The continuing success of the HCI community in moving its meta-project forward thus has profound implications, not only for human-centered informatics, but for epistemology.

2.7 Pointers: How to learn more

In these “pointers” I have listed general background references to the discussion above, specific references to points made in the text, and references to other chapters in the Encyclopedia of Human-Computer Interaction (Interaction-Design.org). I have organized the pointers by section, so the next six sections (below) echo the six major section headings in the paper itself (above).

2.7.1 Where HCI came from

There are many highly readable descriptions of the disciplinary landscape in which early HCI developed:

The 1980 volume of the journal Cognitive Science provides a vivid picture of the foundations of cognitive science as they were being built (http://csjarchive.cogsci.rpi.edu/1980v04/index.html);

F. Brooks’ book The Mythical Man-Month (1975, Addison-Wesley) is an insightful analysis of software engineering, and the original source for the idea that iterative prototyping is inevitable in the design and development of complex software;

J. Foley and A. van Dam’s book Computer Graphics (1982, Addison Wesley) describes the early field of computer graphics as a root of what would become human-computer interaction.

Vivid primary information about the founding of HCI - the proceedings of the 1982 US Bureau of Standards Conference in Gaithersburg, Maryland, are available in the ACM Digital Library at http://dl.acm.org/citation.cfm?id=800049

Several histories of HCI have been published:

Carroll, J.M. (1997) Human-Computer Interaction: Psychology as a science of design. Annual Review of Psychology, 48, 61-83. (Co-published (slightly revised) in International Journal of Human-Computer Studies, 46, 501-522).

Grudin, J. (2012) A Moving Target: The evolution of Human-computer Interaction. In J. Jacko (Ed.), Human-computer interaction handbook: Fundamentals, evolving technologies, and emerging applications. (3rd edition). Taylor & Francis.

Myers, B.A. (1998) A Brief History of Human Computer Interaction Technology. ACM interactions. Vol. 5, no. 2, March. pp. 44-54.

The leading HCI textbooks also include some discussion of history (see below).

2.7.2 From cabal to community

There is some dispute as to how to address the evolution of usability. In this overview, I take a historical view that the concept itself is evolving, analogous to the way physics has treated its fundamental concepts, such as gravity and mass. See also

Carroll, J.M. (2004) Beyond fun. ACM interactions, 11(5), 38-40.

The ACM Special Interest Group on Computer-Human Interaction (SIGCHI), and its CHI Conference, one of the most general and significant HCI conferences, now is explicitly organized into communities that manage pieces of the technical program (http://www.sigchi.org/communities). In fall of 2012, these communities included CCaA (Creativity, Cognition and Art), CSCW (Computer-Supported Cooperative Work), EICS (Engineering Interactive Computer Systems), HCI and Sustainability, HCI Education, HCI4D (HCI for Development), Heritage Matters, Latin American HCI, Pattern Languages and HCI, Research-practice Interaction, UbiComp (Ubiquitous Computing), and UIST (User Interface Software and Tools).

An even more diverse view of HCI can be appreciated by investigating HCI activities and interest groups embedded in professional communities other than ACM: the Design Research Society (designresearchsociety.org), the Association for Information Systems (sighci.org), the Human Factors and Ergonomics Society (hfes.org), the Society for Technical Communication (stc.org), the AIGA (aiga.org), International Communication Association (icahdq.org), the Interaction Design Association (https://www.ixda.org/), the IEEE Professional Communication Society (pcs.ieee.org), the European Association of Work and Organizational Psychology (eawop2013.org), and many others.

Further relevant material in the Encyclopedia of Human-Computer Interaction can be found in chapters 1, 3, 8, 13, 15, 19, 21, and 22.

2.7.3 Beyond the desktop

A classic discussion of the desktop metaphor is Apple Human Interface Guidelines: Apple & Raskin, J. (1992). Macintosh Human Interface Guidelines. Addison-Wesley Professional. ISBN 0-201-62216-5.

An early critique of the Macintosh user interface paradigm is:

Gentner, D. and Nielsen, J. (1996) The Anti-Mac interface, Communications of the ACM 39, 8 (August), 70-82.

The emergence of collaboration, mobility, and new types of user devices and interactions as major themes driving “HCI beyond the desktop” is discussed widely, of course; here are some starting points:

Horn, D.B., Finholt, T.A., Birnholtz, J.P., Motwani, D. and Jayaraman, S. (2004) Six degrees of jonathan grudin: a social network analysis of the evolution and impact of CSCW research. In Proceedings of the 2004 ACM conference on Computer supported cooperative work (CSCW '04). ACM, New York, NY, USA, 582-591.

Luff, P. and Heath, C. (1998) Mobility in collaboration. In Proceedings of the 1998 ACM conference on Computer supported cooperative work (CSCW '98). ACM, New York, NY, USA, 305-314.

Shaer, O. and Hornecker, E. (2010) Tangible User Interfaces: Past, Present, and Future Directions. Found. Trends Hum.-Comput. Interact. 3, 1-2 (January), 1-137.

Waller V. and Johnston, R.B. (2009) Making ubiquitous computing available. Commun. ACM 52, 10 (October 2009), 127-130.

Further relevant material in the Encyclopedia of Human-Computer Interaction can be found in chapters 4, 14, 23, and 27.

2.7.4 The task-artifact cycle

I use the term “task-artifact cycle” here, as originally introduced in a 1991 paper with Wendy Kellogg and Mary Beth Rosson, though I think “activity” better conveys what I mean than “task”. Not surprisingly, the terminology of the task-artifact cycle is itself an example of how HCI shifts under its own foundations; see

Carroll, J.M., Kellogg, W.A., & Rosson, M.B. (1991) The task-artifact cycle. In J.M. Carroll (Ed.), Designing Interaction: Psychology at the human-computer interface. New York: Cambridge University Press, pages 74-102.

A good reference for the history of the Smalltalk project is

Kay, A.C. (1996) The early history of Smalltalk. In History of programming languages---II, Thomas J. Bergin, Jr. and Richard G. Gibson, Jr. (Eds.). ACM, New York, NY, USA 511-598.

For more discussion of the drift table project, see

Boucher, A. and Gaver, W. 2006. Developing the drift table. interactions 13, 1 (January 2006), 24-27.

For more discussion of the general point being emphasized through the example of the drift table, see

Sengers, P. and Gaver, W. (2006) Staying open to interpretation: engaging multiple meanings in design and evaluation. In Proceedings of the 6th conference on Designing Interactive systems (DIS '06). ACM, New York, NY, USA, 99-108.

Further relevant material in the Encyclopedia of Human-Computer Interaction can be found in chapters 7, 12, 14, and 20.

2.7.5 A caldron of theory

I edited a book of theory overviews (Carroll, 2003), referenced below. It is available online (http://www.sciencedirect.com/science/book/9781558608085). I am currently curating theory overviews in the Synthesis Lectures on Human-Centered Informatics (http://www.morganclaypool.com/toc/hci/1/1).

John, B.E. (2011) Using predictive human performance models to inspire and support UI design recommendations. In Proceedings of the 2011 annual conference on Human factors in computing systems (CHI '11). ACM, New York, NY, USA, 983-986.

Further relevant material in the Encyclopedia of Human-Computer Interaction can be found in chapters 5, 6, 9, 11, 16, 17, 24, 25, 26, and 28.

2.7.6 Implications of HCI for science practice and epistemology

The work I refer to on creativity and design rationale is collected in a book, Carroll (2012), referenced below. A nice example, I think, of the theoretical multi-vocality I describe in this section can be appreciated by contrasting these three treatments of aesthetics in HCI design (all are published in the Synthesis Lectures on Human-Centered Informatics, http://www.morganclaypool.com/toc/hci/1/1):

Hassenzahl, M. (2010). Experience Design: Technology for All the Right Reasons.

Sutcliffe, A. (2009) Designing for User Engagement: Aesthetic and Attractive User Interfaces

Wright, P. and McCarthy, J. (2010) Experience-Centered Design: Designers, Users, and Communities in Dialogue.

My reference to Kuhn regarding the development of science and knowledge is:

Kuhn, T.S. (1962) The Structure of Scientific Revolutions. Chicago: University of Chicago Press.

Kuhn, T.S. (1977) The Essential Tension: Selected Studies in Scientific Tradition and Change. Chicago and London: University of Chicago Press.

2.7.7 Textbooks

The number of important monographs is just too large to list, so I have concentrated in the list below on a few significant textbooks. Readers should also check the HCI Bibliography, the HCC Education Digital Library, the ACM Digital Library, and the Synthesis Series of lectures on human-centered informatics. These are the three most comprehensive textbooks:

Dix, A.J., Finlay, J.E., Abowd, G.D. and Beale, R. (2003). Human-Computer Interaction (3rd Edition). Prentice Hall

Rogers, Y., Sharp, H. and Preece, J.J. (2011) Interaction Design: Beyond Human-Computer Interaction (3rd ed.). John Wiley and Sons

Shneiderman, B. and Plaisant, C. (2009). Designing the User Interface: Strategies for Effective Human-Computer Interaction (5th ed.). Addison-Wesley

Several texts present more specialized views of HCI. Carroll (2003) collected a set of introductory papers on major theories used in HCI. Löwgren and Stolterman (2007) present a design perspective on HCI. Rosson and Carroll (2002) emphasize a software engineering view of HCI using a set of case studies to convey an engineering process view of usability. Tidwell (2011) presents a pattern-based approach to user interface design.

Carroll, John M. (ed.) (2003). HCI Models, Theories, and Frameworks: Toward a Multidisciplinary Science. Morgan Kaufmann

Löwgren, J. and Stolterman, E. (2007) Thoughtful Interaction Design: A Design Perspective on Information Technology. MIT Press.

Rosson, M.B. and Carroll, J.M. (2002). Usability Engineering: Scenario-Based Development of Human Computer Interaction. Morgan Kaufmann.

Tidwell, J. (2011) Designing Interfaces (2nd ed.). O’Reilly Media.

2.7.8 Journals

The leading general journal for HCI is the ACM Transactions on Computer-Human Interaction. However, there are many other well-established journals of roughly equivalent quality: Human-Computer Interaction (emphasizes design research), Interacting With Computers, International Journal of Human-Computer Studies, Behaviour and Information Technology, International Journal of Human-Computer Interaction, Journal of Computer-Supported Cooperative Work. Recently, the Association for Information Systems has initiated a Transactions on Human-Computer Interaction. Morgan-Claypool publishes a monograph series, Synthesis Lectures on Human-Centered Informatics.

My personal perspectives on the emergence and development of HCI are elaborated in several other articles, monographs, and introductions to edited books:

Carroll, J.M. (1995) Introduction: The scenario perspective on system development. In Carroll, J.M. (Ed.), Scenario-based design: Envisioning work and technology in system development. New York: John Wiley & Sons, pp 1-17.

Carroll, John M. (1997) Human-Computer Interaction: Psychology as a Science of Design. Annual Review of Psychology, 48, 61-83. Co-published (slightly revised) in International Journal of Human-Computer Studies, 46(4), 501-522

Carroll, J.M. (1998) Reconstructing minimalism. In J.M. Carroll (Ed.) Minimalism beyond “The Nurnberg Funnel”. M.I.T. Press

Carroll, J.M. (2000) Making use: Scenario-based design of human-computer interactions. MIT Press. Japanese edition published in 2003 by Kyoritsu Publishing; translated by Professor Kentaro Go.

Carroll, John M. (2002). Human-Computer Interaction. In: (ed.), MacMillan Encyclopedia of Cognitive Science. Macmillan-Nature Publishing Group.

Carroll, John M. (2004). Beyond fun. Interactions, 11(5), 38-40

Carroll, J.M. (2010) Narrating the Future: Scenarios and the Cult of Specification. In Selber, S. (Ed.), Rhetorics And Technologies: New directions in writing and communication. University of South Carolina Press, pp. 134-147.

Carroll, J.M. (2010). Conceptualizing a possible discipline of Human-Computer Interaction. Interacting with Computers, 22, 3-12.

Carroll, J.M. (2012) The neighborhood in the Internet: Design research projects in community informatics. Routledge.

Carroll, J.M. (2012) Creativity and Rationale: The Essential Tension, in J.M. Carroll (Ed.) Creativity and rationale: Enhancing human experience by design. Springer, pages 1-10.

2.7.9 Relevant Conference Series

2.7.9.1 CHI - Human Factors in Computing Systems

2011, 2010, 2009, 2008, 2007, 2006, 2005, 2004, 2003, 2002, 2001, 2000, 1999, 1998, 1997, 1996, 1995, 1994, 1993, 1992, 1991, 1990, 1989, 1988, 1987, 1986, 1985, 1983, 1982

2.7.9.2 ECSCW - European Conference on Computer Supported Cooperative Work

2009, 2007, 2003, 2003, 2001, 2001, 1999, 1997, 1995, 1993, 1991, 1989

2.7.9.3 CSCW - Conference On Computer-Supported Cooperative Work

2012, 2012, 2012, 2011, 2010, 2008, 2006, 2004, 2004, 2002, 2000, 1998, 1996, 1994, 1992, 1990, 1988, 1986

2.7.9.4 UIST - Symposium on User Interface Software and Technology

2012, 2012, 2011, 2010, 2009, 2008, 2007, 2007, 2007, 2006, 2005, 2004, 2003, 2003, 2002, 2001, 2000, 1999, 1998, 1997, 1996, 1995, 1994, 1993, 1992, 1991, 1990, 1989, 1988

2.7.9.5 NordiCHI - Nordic conference on human-computer interaction

2010, 2008, 2006, 2004, 2002, 2002, 2000, 2000

2.7.9.6 BCSHCI People and Computers

2012, 2010, 2009, 2008, 2006, 2006, 2005, 2004, 2003, 2002, 2001, 2000, 1998, 1997, 1996, 1995, 1994, 1993, 1992, 1991, 1989, 1988, 1987, 1986, 1985

2.7.9.7 SIGGROUP - Conference on Supporting Group Work

2010, 2009, 2007, 2005, 2003, 2001, 1999, 1997, 1995, 1993, 1991, 1990, 1988, 1986, 1984, 1982

2.7.9.8 DIS - Designing Interactive Systems

2012, 2010, 2008, 2006, 2004, 2002, 2000, 1997, 1995


2.7.9.9 CC - Creativity and Cognition

2.8 References

Myers, Brad A. (1998): A Brief History of Human-Computer Interaction Technology. In Interactions, 5 (2) pp. 44-54