“Customer: “Which of your cuts are the best?”

Butcher: “All of my cuts are the best.””

While the previous chapter described computing system levels, this chapter describes how the many dimensions of system performance interact to create a design requirements space.

2.1 The Elephant in the Room

The beast of computing has regularly defied pundit predictions. Key advances like the cell-phone (Smith et al., 2002) and open-source development (Campbell-Kelly, 2008) were not predicted by the experts of the day, though the signs were there for all to see. Experts were pushing media-rich systems even as lean text chat, blogs, texting and wikis took off. Even today, people with smart-phones still send text messages. Google's simple white screen scooped the search engine field, not Yahoo's multi-media graphics. The gaming innovation was social gaming, not virtual reality helmets as the experts predicted. Investors in Internet bandwidth lost money when users did not convert to a 3G video future. Cell phone companies are still trying to get users to sign up to 4G networks.

In computing, the idea that practice leads but theory bleeds has a long history. Over thirty years ago, paper was declared "dead", to be replaced by the electronic paperless office (Toffler, 1980). Yet today, paper is used more than ever before. James Martin saw program generators replacing programmers, but today, we still have a programmer shortage. A “leisure society” was supposed to arise as machines took over our work, but today we are less leisured than ever before (Golden & Figart, 2000). The list goes on: email was supposed to be for routine tasks only, the Internet was supposed to collapse without central control, video was supposed to replace text, teleconferencing was supposed to replace air travel, AI smart-help was supposed to replace help-desks, and so on.

We get it wrong time and again, because computing is the elephant in our living room. We cannot see it because it is too big. In the story of the blind men and the elephant, one grabbed its tail and found the elephant like a rope and bendy, another took a leg and declared the elephant was fixed like a pillar, a third felt an ear and thought it like a rug and floppy, while the last seized the trunk, and found it like a pipe but very strong (Sanai, 1968). Each saw a part but none saw the whole. This chapter outlines the multi-dimensional nature of computing.

2.2 Design Requirements

To design a system is to find problems early, e.g. a misplaced wall on an architect's plan can be moved by the stroke of a pen, but once the wall is built, changing it is not so easy. Yet to design a thing, its performance requirements must be known. Doing this is the job of requirements engineering, which analyzes stakeholder needs to specify what a system must do in order that the stakeholders will sign off on the final product. It is basic to system design:

“The primary measure of success of a software system is the degree to which it meets the purpose for which it was intended. Broadly speaking, software systems requirements engineering (RE) is the process of discovering that purpose...”

-- Nuseibeh & Easterbrook, 2000: p. 1

A requirement can be a particular value (e.g. uses SSL), a range of values (e.g. less than $100), or a criterion scale (e.g. is secure). Given a system's requirements, designers can build it, but the computing literature cannot agree on what the requirements are. One text has usability, maintainability, security and reliability (Sommerville, 2004, p. 24) but the ISO 9126-1 quality model has functionality, usability, reliability, efficiency, maintainability and portability (Losavio et al., 2004).

Berners-Lee made scalability a World Wide Web criterion (Berners-Lee, 2000) but others advocate open standards between systems (Gargaro et al., 1993). Business prefers cost, quality, reliability, responsiveness and conformance to standards (Alter, 1999), but software architects like portability, modifiability and extendibility (de Simone & Kazman, 1995). Others espouse flexibility (Knoll & Jarvenpaa, 1994) or privacy (Regan, 1995). On the issue of what computer systems need to succeed, the literature is at best confused, giving what developers call the requirements mess (Lindquist, 2005). It has ruined many a software project. It is the problem that agile methods address in practice (footnote 1) and that this chapter now addresses in theory.

In current theories, each specialty sees only itself. Security specialists see security as availability, confidentiality and integrity (OECD, 1996), so to them, reliability is part of security. Reliability specialists see dependability as reliability, safety, security and availability (Laprie & Costes, 1982), so to them security is part of a general reliability concept. Yet both cannot be true. Similarly, a usability review finds functionality and error tolerance part of usability (Gediga et al., 1999) while a flexibility review finds scalability, robustness and connectivity aspects of flexibility (Knoll & Jarvenpaa, 1994). Academic specialties usually expand to fill the available theory space, but some specialties recognize their limits:

“The face of security is changing. In the past, systems were often grouped into two broad categories: those that placed security above all other requirements, and those for which security was not a significant concern. But ... pressures ... have forced even the builders of the most security-critical systems to consider security as only one of the many goals that they must achieve”

-- Kienzle & Wulf, 1998: p5

Analyzing performance goals in isolation gives diminishing returns.

2.3 Design Spaces

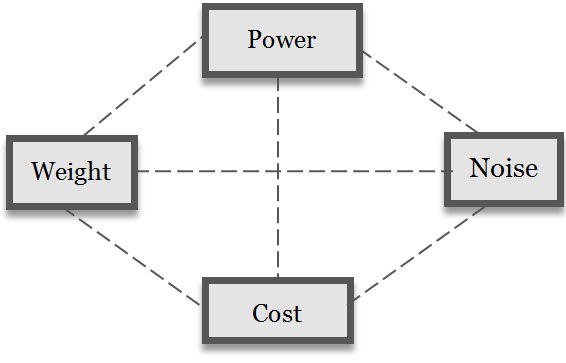

Architect Christopher Alexander observed that vacuum cleaners with powerful engines and more suction were also heavier, noisier and cost more (Alexander, 1964). One performance criterion has a best point, but two criteria, like power and cost, give a best line. The efficient frontier of two performance criteria is the line formed by the maximum of one criterion for each value of the other (Keeney & Raiffa, 1976). System design is choosing a point in a multi-dimensional design criterion space with many "best" points, e.g. a cheap, heavy but powerful vacuum cleaner, or a light, expensive and powerful one (Figure 2.1). The efficient frontier is a set of "best" combinations in a design space (footnote 2). Advanced system performance is not a one dimensional ladder to excellence, but a station with many trains to many destinations.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 2.1: A vacuum cleaner design space
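The idea of an efficient frontier can be sketched in a few lines of Python. This is a minimal illustration, not a method given in the text: the `Design` type, the three criteria chosen and all the numbers are hypothetical. A design is on the frontier if no other design is at least as good on every criterion and strictly better on at least one.

```python
from typing import NamedTuple


class Design(NamedTuple):
    """A hypothetical vacuum cleaner design (values invented for illustration)."""
    name: str
    power: float   # higher is better
    cost: float    # lower is better
    weight: float  # lower is better


def dominates(a: Design, b: Design) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    at_least_as_good = a.power >= b.power and a.cost <= b.cost and a.weight <= b.weight
    strictly_better = a.power > b.power or a.cost < b.cost or a.weight < b.weight
    return at_least_as_good and strictly_better


def efficient_frontier(designs: list[Design]) -> list[Design]:
    """Keep only the designs that no other design dominates."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


designs = [
    Design("cheap-heavy-powerful", power=9, cost=100, weight=8),
    Design("light-expensive-powerful", power=9, cost=300, weight=3),
    Design("light-cheap-weak", power=4, cost=90, weight=3),
    Design("weak-expensive-heavy", power=4, cost=320, weight=9),
]

frontier_names = [d.name for d in efficient_frontier(designs)]
print(frontier_names)
```

Under these made-up numbers, three of the four designs remain on the frontier: each is "best" for some trade-off, and only the design that is worse on every criterion drops out.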


Successful life includes flexible viruses, reliable plants, social insects and powerful tigers, with the latter the endangered species. There is no “best” animal because in nature performance is multi-dimensional, and a multi-dimensional design space gives many best points. In evolution, not just the strong are fit, and too much of a good thing can be fatal, as over specialization can lead to extinction.

Likewise, computing has no "best". If computer performance was just more processing we would all want supercomputers, but we buy laptops with less power over desktops with more (David et al., 2003). On the information level, blindly adding software functions gives bloatware (footnote 3), applications with many features no-one needs.

Design is the art of reconciling many requirements in a particular system form, e.g. a quiet and powerful vacuum cleaner. It is the innovative synthesis of a system in a design requirements space (Alexander, 1964). The system then performs according to its requirement criteria.

Most design spaces are not one dimensional, e.g. Berners-Lee chose HTML for the World Wide Web for its flexibility (across platforms), reliability and usability (easy to learn). An academic conference rejected his WWW proposal because HTML was inferior to SGML (Standard Generalized Markup Language). Specialists saw their specialty criterion, not system performance in general. Even after the World Wide Web's phenomenal success, the blindness of specialists to a general system view remained:

“Despite the Web’s rise, the SGML community was still criticising HTML as an inferior subset ... of SGML”

-- Berners-Lee, 2000: p96

What has changed since academia found the World Wide Web inferior? Not a lot. If it is any consolation, an equally myopic Microsoft also found Berners-Lee’s proposal unprofitable from a business perspective. In the light of the benefits both now freely take from the web, academia and business should re-evaluate their criteria.

2.4 Non-functional Requirements

In traditional engineering, criteria like usability are quality requirements that affect functional goals but cannot stand alone (Chung et al., 1999). For decades, these non-functional requirements (NFRs), or “-ilities”, were considered second class requirements. They defied categorization, except to be non-functional. How exactly they differed from functional goals was never made clear (Rosa et al., 2001), yet most modern systems have more lines of interface, error and network code than functional code, and increasingly fail for "unexpected" non-functional reasons (footnote 4) (Cysneiros & Leite, 2002, p. 699).

The logic is that because NFRs such as reliability cannot exist without functionality, they are subordinate to it. Yet by the same logic, functionality is subordinate to reliability as it cannot exist without it, e.g. an unreliable car that will not start has no speed function, nor does a car that is stolen (low security), nor one that is too hard to drive (low usability).

NFRs not only modify performance but define it. In nature, functionality is not the only key to success, e.g. viruses hijack other systems’ functionality. Functionality differs from the other system requirements only in being more obvious to us. It is really just one of many requirements. The distinction between functional and non-functional requirements is a bias, like seeing the sun going round the earth because we live on the earth.

2.5 Holism and Specialization

In general systems theory, any system consists of:

Parts, and

Interactions.

The performance of a system of parts that interact is not defined by decomposition alone. Even simple parts, like air molecules, can interact strongly to form a chaotic system like the weather (Lorenz, 1963). Gestalt psychologists called the concept of the whole being more than its parts holism, as a curve is just a curve but in a face becomes a "smile". Holism is how system parts change by interacting with others. Holistic systems are individualistic, because changing one part, by its interactions, can cascade to change the whole system drastically. People rarely look the same because one gene change can change everything. The brain is also holistic — one thought can change everything you know.

Yet a system's parts need not be simple. The body began as one cell, a zygote, that divided into all the cells of the body, including liver, skin, bone and brain cells (footnote 5). Likewise in early societies most people did most things, but today we have millions of specialist jobs. A system's specialization (footnote 6) is the degree to which its parts differ in form and action, especially its constituent parts.

Holism (complex interactions) and specialization (complex parts) are the hallmarks of evolved systems. The former gives the levels of the last chapter and the latter gives the constituent part specializations discussed now.

2.6 Constituent Parts

In general terms, what are the parts of a system? Are software parts lines of code, variables or sub-programs? A system's elemental parts are those not formed of other parts, e.g. a mechanic stripping a car stops at the bolt as an elemental part of that level. To decompose it further gives atoms which are physical not mechanical elements. Each level has a different elemental part: physics has quantum strings, information has bits, psychology has qualia (footnote 7) and society has citizens (Table 2.1). Elemental parts then form complex parts as bits form bytes.

| Level | Element | Other parts |
| --- | --- | --- |
| Community | Citizen | Friendships, groups, organizations, societies. |
| Personal | Qualia | Cognitions, attitudes, beliefs, feelings, theories. |
| Informational | Bit | Bytes, records, files, commands, databases. |
| Physical | Strings? | Quarks, electrons, nucleons, atoms, molecules. |

Table 2.1: System elements by level

A system's constituent parts are those that interact to form the system but are not part of other parts (Esfeld, 1998), e.g. the body frame of a car is a constituent part because it is part of the car but not part of any other car part. So, dismantling a car entirely gives elemental parts, not constituent parts, e.g. a bolt on a wheel is not a constituent part if it is part of a wheel.

How elemental parts give constituent parts is the system structure, e.g. to say a body is composed of cells ignores its structure: how parts combine into higher parts or sub-systems. Only in system heaps, like a pile of sand, are elemental parts also constituent parts. An advanced system like the body is not a heap because the cell elements combine to form sub-systems just as the elemental parts of a car do, e.g. a wheel as a car constituent has many sub-parts. Just sticking together arbitrary constituents in design without regard to their interaction has been called the Frankenstein effect (footnote 8) (Tenner, 1997). The body's constituent parts are, for example, the digestive system, the respiratory system, etc., not the head, torso and limbs. The specialties of medicine often describe body constituents.
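The distinction between elemental and constituent parts can be sketched as a tree, assuming a system's structure is modelled as parts containing sub-parts. The `Part` class and the example car and sand pile below are hypothetical illustrations, not taken from the text: constituent parts are the direct children of the system, and elemental parts are the leaves.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    """A part of a system, possibly made of sub-parts (hypothetical model)."""
    name: str
    subparts: list["Part"] = field(default_factory=list)


def constituent_parts(system: Part) -> list[str]:
    """Parts of the system that are not part of any other part: its direct children."""
    return [p.name for p in system.subparts]


def elemental_parts(part: Part) -> list[str]:
    """Parts not formed of other parts at this level: the leaves of the tree."""
    if not part.subparts:
        return [part.name]
    names: list[str] = []
    for sub in part.subparts:
        names.extend(elemental_parts(sub))
    return names


# A structured system: the bolt is elemental but not constituent.
car = Part("car", [
    Part("body frame", [Part("panel")]),
    Part("wheel", [Part("bolt"), Part("tyre")]),
])

# A heap: every elemental part is also a constituent part.
sand_pile = Part("sand pile", [Part("grain 1"), Part("grain 2")])

print(constituent_parts(car))   # direct children of the car
print(elemental_parts(car))     # leaves of the car's part tree
print(constituent_parts(sand_pile) == elemental_parts(sand_pile))
```

In this sketch the two lists differ for the car but coincide for the sand pile, which is the sense in which only a heap has elemental parts that are also constituent parts.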

In order to develop a general model of system design it is necessary to specify the constituent parts of systems in general.

2.7 General System Requirements

Requirements engineering aims to define a system’s purposes. If levels and constituent specializations change those purposes, how can requirements engineering succeed? The approach used here is to try to view the system from the perspective of the system itself. This means specifying requirements for level constituents without regard to any particular environment. How general requirements can be reconciled in a specific environment is then the art of system design.

In general, a system performs by interacting with its environment to gain value and avoid loss in order to survive. In Darwinian terms, what does not survive dies out and what does lives on. Any system in this situation needs a boundary, to exist apart from the world, and an internal structure, to support and manage its existence. It needs effectors to act upon the environment around it, and receptors to monitor the world for risks and opportunities. The requirement to reproduce is here ignored as it is not very relevant to computing. This would however add a time dimension to the model.

| Constituent | Requirement | Definition |
| --- | --- | --- |
| Boundary | Security | To deny unauthorized entry, misuse or takeover by other entities. |
| | Extendibility | To attach to or use outside elements as system extensions. |
| Structure | Flexibility | To adapt system operation to new environments. |
| | Reliability | To continue operating despite system part failure. |
| Effector | Functionality | To produce a desired change on the environment. |
| | Usability | To minimize the resource costs of action. |
| Receptor | Connectivity | To open and use communication channels. |
| | Privacy | To limit the release of self-information by any channel. |

Table 2.2: General system requirement definitions

For example, cells first evolved a boundary membrane, then organelle and nuclear structures for support and control; then eukaryotic cells evolved flagella to move, and then protozoa got photo-receptors (Alberts et al., 1994). We also have a skin boundary, metabolic and brain structures, muscle effectors and sense receptors. Computers also have a case boundary, a motherboard internal structure, printer or screen effectors and keyboard or mouse receptors.

Four general system constituents, each with risk and opportunity goals, give eight general performance requirements (Table 2.2) as follows:

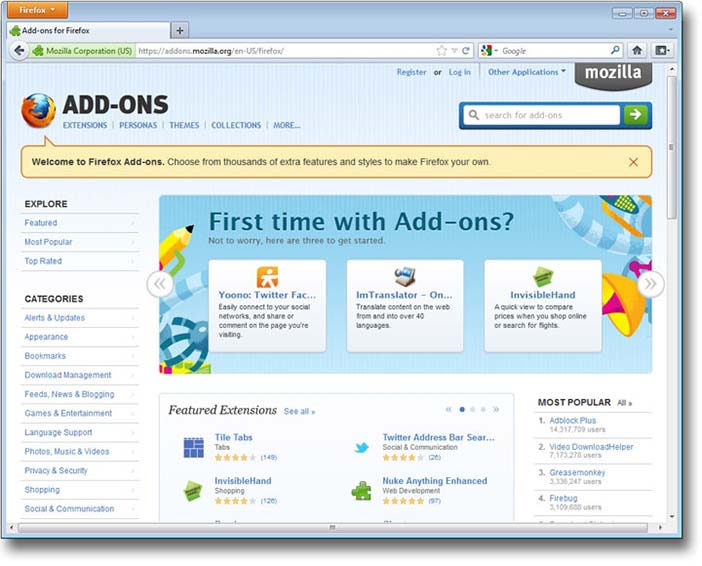

Boundary constituents manage the system boundary. They can be designed to deny outside things entry (security) or to use them (extendibility). In computing, virus protection is security and system add-ons are extendibility (Figure 2.2). In people, the immune system gives biological security and tool-use illustrates extendibility.

Structure constituents manage internal operations. They can be designed to limit internal change to reduce faults (reliability), or to allow internal change to adapt to outside changes (flexibility). In computing, reliability reduces and recovers from error, and flexibility is the system preferences that allow customization. In people, reliability is the body fixing a cell "error" that might cause cancer, while the brain learning illustrates flexibility.

Effector constituents manage environment actions, so can be designed to maximize effects (functionality) or minimize resource use (usability). In computing, functionality is the menu functions, while usability is how easy they are to use. In people, functionality gives muscle effectiveness, and usability is metabolic efficiency.

Receptor constituents manage signals to and from the environment, so can be designed to open communication channels (connectivity) or close them (privacy). Connected computing can download updates or chat online, while privacy is the power to disconnect or log off. In people, connectivity is conversing, and privacy is the legal right to be left alone. In nature, privacy is camouflage, and the military calls it stealth. Note that privacy is the ownership of self-data, not secrecy. It includes the right to make personal data public.
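The four constituents and their paired goals can be written down as a small lookup table. This is only a sketch: the `Goal` enum and the dictionary layout are assumptions made for illustration, and the risk/opportunity grouping is a reading of the four paragraphs above (deny, limit, minimize and close on the risk side; use, adapt, maximize and open on the opportunity side).

```python
from enum import Enum


class Goal(Enum):
    RISK = "reduce risk"
    OPPORTUNITY = "take opportunity"


# Assumed mapping: each constituent has one risk and one opportunity requirement.
GENERAL_REQUIREMENTS = {
    "boundary": {Goal.RISK: "security", Goal.OPPORTUNITY: "extendibility"},
    "structure": {Goal.RISK: "reliability", Goal.OPPORTUNITY: "flexibility"},
    "effector": {Goal.RISK: "usability", Goal.OPPORTUNITY: "functionality"},
    "receptor": {Goal.RISK: "privacy", Goal.OPPORTUNITY: "connectivity"},
}


def requirements_for(goal: Goal) -> list[str]:
    """List the requirement each constituent contributes for the given goal."""
    return [pair[goal] for pair in GENERAL_REQUIREMENTS.values()]


all_requirements = [r for pair in GENERAL_REQUIREMENTS.values() for r in pair.values()]
print(len(all_requirements))                 # four constituents x two goals
print(requirements_for(Goal.OPPORTUNITY))
print(requirements_for(Goal.RISK))
```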

These general system requirements map well to current terms (Table 2.3), but note that as what is exchanged changes by level, so do the names preferred:

Hardware systems exchange energy. So functionality is power, e.g. hardware with a high CPU cycle rate. Usable hardware uses less power for the same result, e.g. mobile phones that last longer. Reliable hardware is rugged enough to work if you drop it, and flexible hardware is mobile enough to work if you move around, i.e. change environments. Secure hardware blocks physical theft, e.g. by laptop cable locks, and extendible hardware has ports for peripherals to be attached. Connected hardware has wired or wireless links and private hardware is tempest proof i.e. it does not physically leak energy.

Software systems exchange information. Functional software has many ways to process information, while usable software uses less CPU processing (“lite” apps). Reliable software avoids errors or recovers from them quickly. Flexible software is operating system platform independent. Secure software cannot be corrupted or overwritten. Extendible software can access OS program library calls. Connected software has protocol "handshakes" to open read/write channels. Private software can encrypt information so that others cannot see it.

HCI systems exchange meaning, including ideas, feelings and intents. In functional HCI the human computer pair is effectual, i.e. meets the user task goal. Usable HCI requires less intellectual, affective or conative (footnote 9) effort, i.e. is intuitive. Reliable HCI avoids or recovers from unintended user errors by checks or undo choices — the web Back button is an HCI invention. Flexible HCI lets users change language, font size or privacy preferences, as each person is a new environment to the software. Secure HCI avoids identity theft by user password. Extendible HCI lets users use what others create, e.g. mash-ups and third party add-ons. Connected HCI communicates with others, while privacy includes not getting spammed or being located on a mobile device.

Each level applies the same ideas to a different system view. The community level is discussed in Chapter 3.

| Requirement | Synonyms |
| --- | --- |
| Functionality | Effectualness, capability, usefulness, effectiveness, power, utility. |
| Usability | Ease of use, simplicity, user friendliness, efficiency, accessibility. |
| Extendibility | Openness, interoperability, permeability, compatibility, standards. |
| Security | Defence, protection, safety, threat resistance, integrity, inviolable. |
| Flexibility | Adaptability, portability, customizability, plasticity, agility, modifiability. |
| Reliability | Stability, dependability, robustness, ruggedness, durability, availability. |
| Connectivity | Networkability, communicability, interactivity, sociability. |
| Privacy | Tempest proof, confidentiality, secrecy, camouflage, stealth, encryption. |

Table 2.3: Performance requirement synonyms

Copyright © Mozilla. All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice. Figure 2.2: Firefox add-ons are extendibility


2.8 A General Design Space

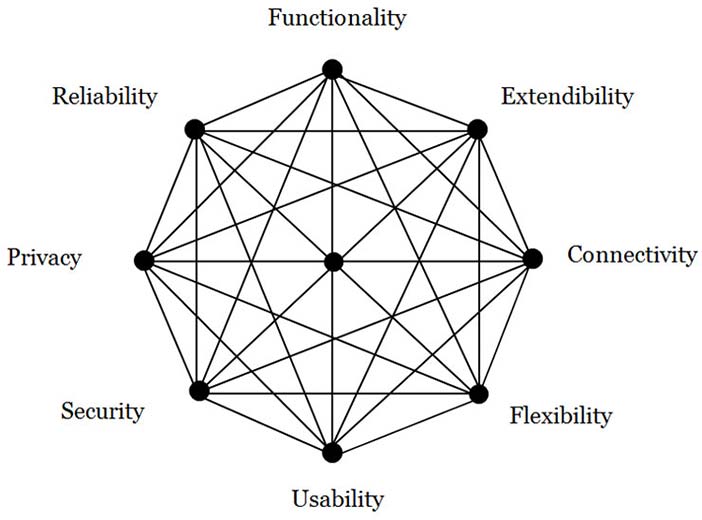

Figure 2.3 shows a general system design space, where the:

Area is the system performance requirements met.

Shape is the environment requirement weightings.

Lines are design requirement tensions.

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 2.3: A general system design space


The space has four active requirements that are about taking opportunities (footnote 10) and four passive ones that are about reducing risks (footnote 11). In system performance, taking opportunities is as important as reducing risk (Pinto, 2002).

The weightings of each requirement vary with the environment, e.g. security is more important in threat scenarios and extendibility more important in opportunity situations.
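One way to picture environment weightings is a weighted average over the eight requirements. The text does not give a formula, so the function below, the 0 to 1 scores and the weights are all assumptions made for illustration. The same system is scored in a hypothetical threat environment (security weighted more) and a hypothetical opportunity environment (extendibility weighted more).

```python
REQUIREMENTS = [
    "functionality", "usability", "security", "extendibility",
    "reliability", "flexibility", "connectivity", "privacy",
]


def weighted_performance(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of requirement scores (an assumed scoring rule)."""
    total_weight = sum(weights[r] for r in REQUIREMENTS)
    return sum(scores[r] * weights[r] for r in REQUIREMENTS) / total_weight


# Hypothetical system: strong on security, weak on extendibility, average elsewhere.
scores = {r: 0.5 for r in REQUIREMENTS}
scores["security"] = 0.9
scores["extendibility"] = 0.2

# Hypothetical environments: every weight is 1 except the emphasized requirement.
threat_weights = {r: 1.0 for r in REQUIREMENTS}
threat_weights["security"] = 3.0

opportunity_weights = {r: 1.0 for r in REQUIREMENTS}
opportunity_weights["extendibility"] = 3.0

threat_score = weighted_performance(scores, threat_weights)
opportunity_score = weighted_performance(scores, opportunity_weights)
print(round(threat_score, 2), round(opportunity_score, 2))
```

With these invented numbers the same design scores higher in the threat environment than in the opportunity environment, even though nothing about the system itself has changed.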

The requirement criteria of Figure 2.3 have no inherent contradictions, e.g. a bullet-proof plexiglass room can be secure but not private, while encrypted files can be private but not secure. Reliability provides services while security denies them (Jonsson, 1998), so a system can be reliable but insecure, unreliable but secure, unreliable and insecure or reliable and secure. Likewise, functionality need not deny usability (Borenstein & Thyberg, 1991), and connectivity need not deny privacy. Cross-cutting requirements (Moreira et al., 2002) can be reconciled by innovative design if they are logically modular.

2.9 Design Tensions and Innovation

A design tension is when making one design requirement better makes another worse. Applying two different requirements to the same constituent often gives a design tension, e.g. castle walls that protect against attacks but have a gate to get supplies in, or computers that deny virus attacks but still need plug-in software hooks. These contrasts are not anomalies, but are built into the nature of systems.

Design begins with no tensions. As requirements are met, the Figure 2.3 performance area increases, so the lines between them tighten like stretched rubber bands. In advanced systems, the tension is so "tight" that increasing any performance criterion will pull back one or more others. In the Version 2 Paradox, a successful product improved to version 2 actually performs worse!

| | Code | Criteria | Analysis | Testing |
| --- | --- | --- | --- | --- |
| Actions | Application | Functionality | Task | Business |
| | Interface | Usability | Usability | User |
| Interactions | Access control | Security | Threat | Penetration |
| | Plug-ins | Extendibility | Standards | Compatibility |
| Changes | Error recovery | Reliability | Stress | Load |
| | Preferences | Flexibility | Contingency | Situation |
| Interchange | Network | Connectivity | Channel | Communication |
| | Rights | Privacy | Legitimacy (footnote 12) | Social |

Table 2.4: Project specializations by constituent

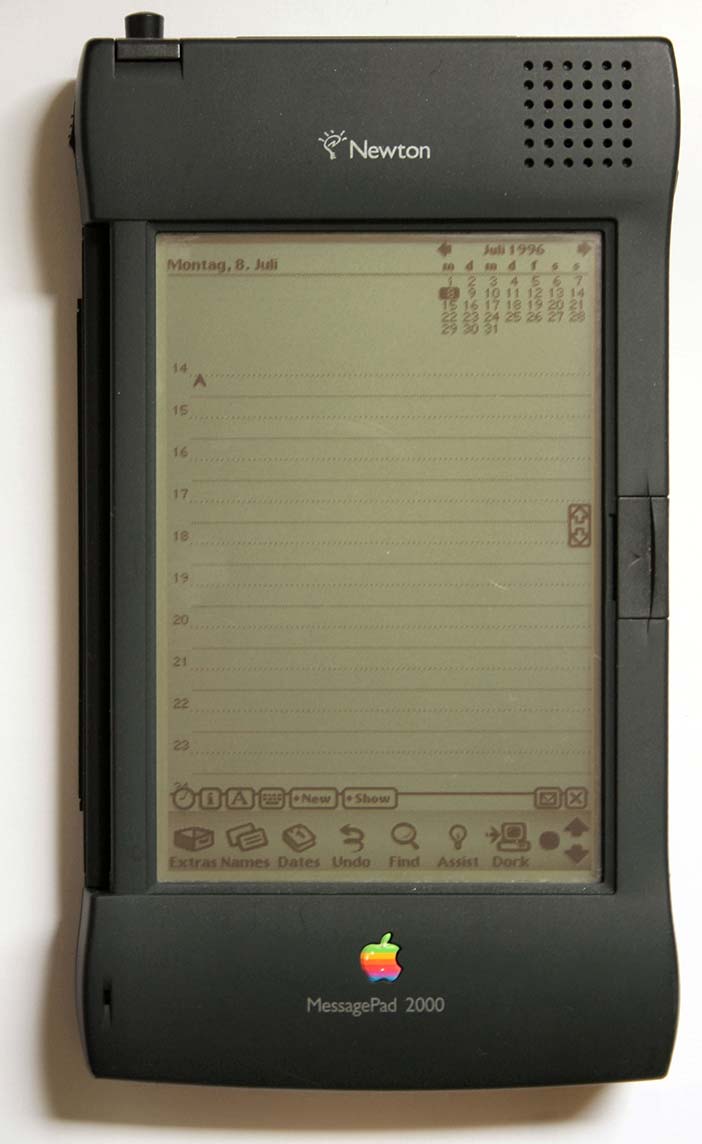

To improve a complex system one cannot just improve one criterion, i.e. just pull one corner of its performance web. For example, in 1992 Apple CEO Sculley introduced the hand-held Newton, claiming that portable computing was the future (Figure 2.4). We now know he was right, but in 1998 Apple dropped the line due to poor sales. The Newton’s small screen made data entry hard, i.e. the portability gain was nullified by a usability loss. Only when Palm’s Graffiti language improved handwriting recognition did the personal digital assistant (PDA) market revive. Sculley's portability innovation was only half the design answer — the other half was resolving the usability problems that the improvement had created. Innovative design requires cross-disciplinary generalists to resolve such design tensions.

In general system design, too much focus on any one criterion gives diminishing returns, whether it is functionality, security (OECD, 1996), extendibility (De Simone & Kazman, 1995), privacy (Regan, 1995), usability (Gediga et al., 1999) or flexibility (Knoll & Jarvenpaa, 1994). Improving one aspect alone of a performance web can even reduce performance, i.e. "bite back" (Tenner, 1997), e.g. a network that is so secure no-one uses it. Advanced system performance requires balance, not just one dimensional design “excellence”.

Courtesy of Ralf Pfeifer. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 2.4: Sculley introduced the Newton PDA in 1992


2.10 Project Development

The days when programmers could list a system's functions and then just code them are gone, if they ever existed. Today, design involves not only many specialties but also their interaction. A system development could involve up to eight specialist groups, with distinct requirements, analysis and testing (Table 2.4). Smaller systems might have four groups (actions, interactions, changes and interchanges), two (opportunities and risks) or just one (performance). Design tensions can be reduced by agile methods where specialists talk more to each other and stakeholders, but advanced system development also needs innovators who can cross specialist boundaries to resolve cross-cutting design tensions.

2.11 Discussion Questions

The following questions are designed to encourage thinking on the chapter and exploring socio-technical cases from the Internet. If you are reading this chapter in a class, whether at a university or in a commercial setting, the questions might be discussed in class first, and then students can choose questions to research in pairs and report back to the next class.

What three widespread computing expectations did not happen? Why not? What three unexpected computing outcomes did happen? Why?

What is a system requirement? How does it relate to system design? How do system requirements relate to performance? Or to system evaluation criteria? How can one specify or measure system performance if there are many factors?

What is the basic idea of general systems theory? Why is it useful? Can a cell, your body, and the earth all be considered systems? Describe Lovelock’s Gaia Hypothesis. How does it link to both General Systems Theory and the recent film Avatar? Is every system contained within another system (environment)?

Does nature have a best species? If nature has no better or worse, how can species evolve to be better? Or if it has a better and worse, why is current life so varied instead of being just the “best”? (footnote 13) Does computing have a best system? If it has no better or worse, how can it evolve? If it has a better and worse, why is current computing so varied? Which animal actually is “the best”?

Why did the electronic office increase paper use? Give two good reasons to print an email in an organization. How often do you print an email? When will the use of paper stop increasing?

Why was social gaming not predicted? Why are MMORPG human opponents better than computer ones? What condition must an online game satisfy for a community to "mod" it (add scenarios)?

In what way is computing an "elephant"? Why can it not be put into an academic "pigeon hole"? (footnote 14) How can science handle cross-discipline topics?

What is the first step of system design? What are those who define what a system should do called? Why can designers not satisfy every need? Give examples from house design.

Is reliability an aspect of security or is security an aspect of reliability? Can both these things be true? What are reliability and security both aspects of? What decides which is more important?

What is a design space? What is the efficient frontier of a design space? What is a design innovation? Give examples (not a vacuum cleaner).

Why did the SGML academic community find Tim Berners-Lee's WWW proposal of low quality? Why did they not see the performance potential? Why did Microsoft also find it “of no business value”? How did the WWW eventually become a success? Given that business and academia now use it extensively, why did they reject it initially? What have they learnt from this lesson?

Are NFRs like security different from functional requirements? By what logic are they less important? By what logic are they equally critical to performance?

In general systems theory (GST), every system has what two aspects? Why does decomposing a system into simple parts not fully explain it? What is left out? Define holism. Why are highly holistic systems also individualistic? What is the Frankenstein effect? Show a "Frankenstein" web site. What is the opposite effect? Why can “good” system components not be stuck together?

What are the elemental parts of a system? What are its constituent parts? Can elemental parts be constituent parts? What connects elemental and constituent parts? Give examples.

Why are constituent part specializations important in advanced systems? Why do we specialize as left-handers or right-handers? What about the ambidextrous?

If a car is a system, what are its boundary, structure, effector and receptor constituents? Explain its general system requirements, with examples. When might a vehicle's "privacy" be a critical success factor? What about its connectivity?

Give the general system requirements for a browser application. How did its designers meet them? Give three examples of browser requirement tensions. How are they met?

How do mobile phones meet the general system requirements, first as hardware and then as software?

Give examples of usability requirements for hardware, software and HCI. Why does the requirement change by level? What is "usability" on a community level?

Are reliability and security really distinct? Can a system be reliable but insecure, unreliable but secure, unreliable and insecure, or reliable and secure? Give examples. Can a system be functional but not usable, not functional but usable, not functional or usable, or both functional and usable? Give examples.

Performance is taking opportunities and avoiding risks. Yet while mistakes and successes are evident, missed opportunities and mistakes avoided are not. Explain how a business can fail by missing an opportunity, with WordPerfect vs Word as an example. Explain how a business can succeed by avoiding risks, with air travel as an example. What happens if you only maximize opportunity? What happens if you only reduce risks? Give examples. How does nature both take opportunities and avoid risks? How should designers manage this?

Describe the opportunity enhancing general system performance requirements, with an IT example of each. When would you give them priority? Describe the risk reducing performance requirements, with an IT example of each. When would you give them priority?

What is the Version 2 paradox? Give an example from your experience of software that got worse on an update. You can use a game example. Why does this happen? How can designers avoid this?

Define extendibility for any system. Give examples for a desktop computer, a laptop computer and a mobile device. Give examples of software extendibility, for email, word processing and game applications. What is personal extendibility? Or community extendibility?

Why is innovation so hard for advanced systems? What stops a system being secure and open? Or powerful and usable? Or reliable and flexible? Or connected and private? How can such diverse requirements ever be reconciled?

Give two good reasons to have specialists in a large computer project team. What happens if they disagree? Why are cross-disciplinary integrators also needed?