Wearable computing is the study or practice of inventing, designing, building, or using miniature body-borne computational and sensory devices. Wearable computers may be worn under, over, or in clothing, or may also be themselves clothes (i.e. "Smart Clothing" (Mann, 1996a)).

23.1 Bearable Computing

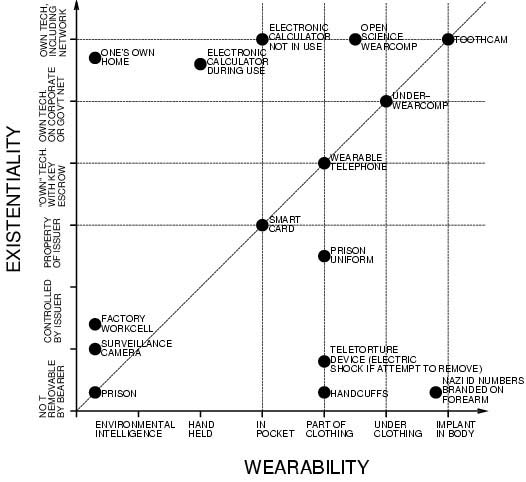

The field of wearable computing, however, extends beyond "Smart Clothing". The author often uses the term "Body-Borne Computing" or "Bearable Computing" as a substitute for "Wearable Computing" so as to include all manner of technology that is on or in the body, e.g. implantable devices as well as portable devices like smartphones. In fact the word "portable" comes from the French word "porter" which means "to wear".

23.2 Practical Applications

Applications of body-borne computing include seeing aids for the blind or visually impaired, as well as memory aids to help persons with special needs. The MindMesh, an EEG (ElectroEncephaloGram) based "thinking cap", for example, allows the user to plug various devices into their brain. A blind person can plug in a camera and use it as an "eye".

Moreover, body-borne computing in the inclusive sense is for everyone, in the form of such applications as wayfinding, and Personal Safety Devices (PSDs). Body-borne computing is already a part of many people's lives, in the form of a smartphone that helps them find their way if they get lost, or helps protect them from danger (e.g. for emergency notification). The next generation of smartphones will be borne by the body in a way that it is always attentive (e.g. that the camera can always "see" one's environment), so that if a person gets lost, the device will help the user "remember" where they are. Additionally, it will function like the "black box" flight recorder on an aircraft, and, in the event of danger, will be able to automatically notify others of the user's physiological state as well as what happened in the environment.

Consider, for example, a simple heart monitor that continuously records ECG (ElectroCardioGram) along with video of the environment. This may help physicians correlate heart arrhythmia, or other irregularities, with possible environmental causes of stress: a physician may be able to see what was happening to the patient at the time a problem was first detected.
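As a rough illustration of this kind of correlation, the following Python sketch flags heartbeats whose interval to the previous beat deviates strongly from the median interval, then looks up the video frame that was recorded at that moment. The function names, the 20% tolerance, and the sample data are assumptions made for illustration only; this is not a clinical arrhythmia detector.

```python
from bisect import bisect_right
from statistics import median


def irregular_beats(beat_times, tolerance=0.2):
    """Return the times of beats whose preceding beat-to-beat interval
    deviates from the median interval by more than `tolerance` (a fraction)."""
    intervals = [b - a for a, b in zip(beat_times, beat_times[1:])]
    if not intervals:
        return []
    typical = median(intervals)
    return [
        beat_times[i + 1]
        for i, interval in enumerate(intervals)
        if abs(interval - typical) > tolerance * typical
    ]


def frame_at(frame_times, t):
    """Index of the last video frame captured at or before time t."""
    return max(bisect_right(frame_times, t) - 1, 0)


# Hypothetical recording: beat timestamps (seconds) and video frames every 0.5 s.
beats = [0.0, 0.8, 1.6, 2.4, 2.7, 3.9, 4.7, 5.5]
frames = [i * 0.5 for i in range(12)]

# Pair each irregular beat with the frame showing the environment at that time.
events = [(t, frame_at(frames, t)) for t in irregular_beats(beats)]
for t, frame in events:
    print(f"irregular beat at {t:.1f} s -> review video frame {frame}")
```

A physician reviewing the recording could then jump directly to the listed frames instead of watching the whole video.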

23.3 Wearable computing as a reciprocal relationship between man and machine

An important distinction between wearable computers and portable computers (handheld and laptop computers for example) is that the goal of wearable computing is to position or contextualize the computer in such a way that the human and computer are inextricably intertwined, so as to achieve Humanistic Intelligence – i.e. intelligence that arises by having the human being in the feedback loop of the computational process, e.g. Mann 1998.

An example of Humanistic Intelligence is the wearable face recognizer (Mann 1996) in which the computer takes the form of electric eyeglasses that "see" everything the wearer sees, and therefore the computer can interact serendipitously. A handheld or laptop computer would not provide the same serendipitous or unexpected interaction, whereas the wearable computer can pop-up virtual nametags if it ever "sees" someone its owner knows or ought to know.

In this sense, wearable computing can be defined as an embodiment of, or an attempt to embody, Humanistic Intelligence. This definition also allows for the possibility of some or all of the technology to be implanted inside the body, thus broadening from "wearable computing" to "bearable computing" (i.e. body-borne computing).

One of the main features of Humanistic Intelligence is constancy of interaction: the human and computer are inextricably intertwined, and there is no need to turn the device on prior to engaging it (thus, serendipity).

Another feature of Humanistic Intelligence is the ability to multi-task. It is not necessary for a person to stop what they are doing to use a wearable computer because it is always running in the background, so as to augment or mediate the human's interactions. Wearable computers can be incorporated by the user to act like a prosthetic, thus forming a true extension of the user's mind and body.

It is common in the field of Human-Computer Interaction (HCI) to think of the human and computer as separate entities. The term "Human-Computer Interaction" emphasizes this separateness by treating the human and computer as different entities that interact. However, Humanistic Intelligence theory thinks of the wearer and the computer with its associated input and output facilities not as separate entities, but regards the computer as a second brain and its sensory modalities as additional senses, in which synthetic synesthesia merges with the wearer's senses. In this context, wearable computing has been referred to as a "Sixth Sense" (Mann and Niedzviecki 2001, Mann 2001 and Geary 2002).

When a wearable computer functions as a successful embodiment of Humanistic Intelligence, the computer uses the human's mind and body as one of its peripherals, just as the human uses the computer as a peripheral. This reciprocal relationship is at the heart of Humanistic Intelligence (Mann 2001, Mann 1998, and Knight 2000).

23.4 Concrete examples of wearable computing

23.4.1 Example 1: Augmented Reality

Augmented Reality means to super-impose an extra layer on a real-world environment, thereby augmenting it. An “augmented reality” is thus a view of a physical, real-world environment whose elements are augmented by computer-generated sensory input such as sound, video, graphics or GPS data. One example is the Wikitude application for the iPhone, which lets you point your iPhone’s camera at something, which is then “augmented” with information from Wikipedia. (Strictly speaking this is a mediated reality, because the iPhone actually modifies vision in some ways, even if only in that we are seeing through a camera.)

Author/Copyright holder: Courtesy of Leonard Low. Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Figure 23.1: Augmented Reality prototype

Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Figure 23.2: Photograph of the Head-Up Display taken by a pilot on a McDonnell Douglas F/A-18 Hornet

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 23.3: The glogger.mobi application: Augmented reality 'lined up' with reality

Author/Copyright holder: Courtesy of Mr3641. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.4: The Wikitude iphone application

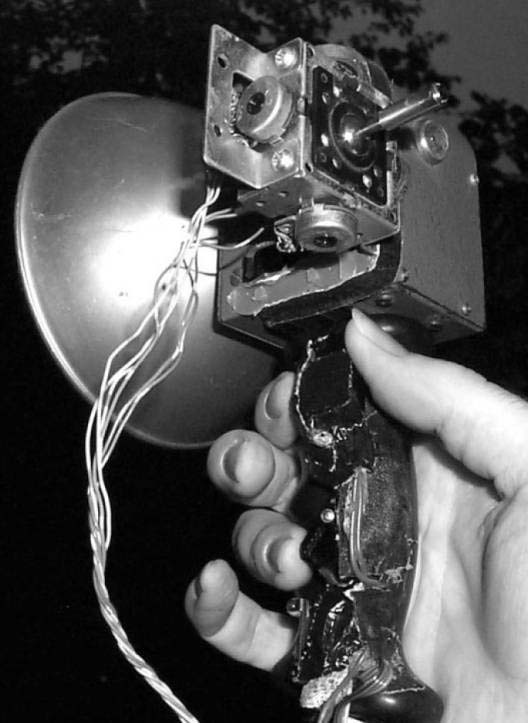

A concrete example of wearable computing used for augmented reality is Mann's pendant-based camera and projector system for Augmented Reality. The system shown below was completed by Mann in 1998:

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.5: Neckworn self-gesturing webcam and projector system designed and built by Steve Mann in 1998

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.6: Closeup of dome pendant showing the laser-based infinite depth-of-focus projector, called an "aremac" (Mann 2001). The laser-based aremac was developed to project onto any 3D surface and does not require any focusing adjustments.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

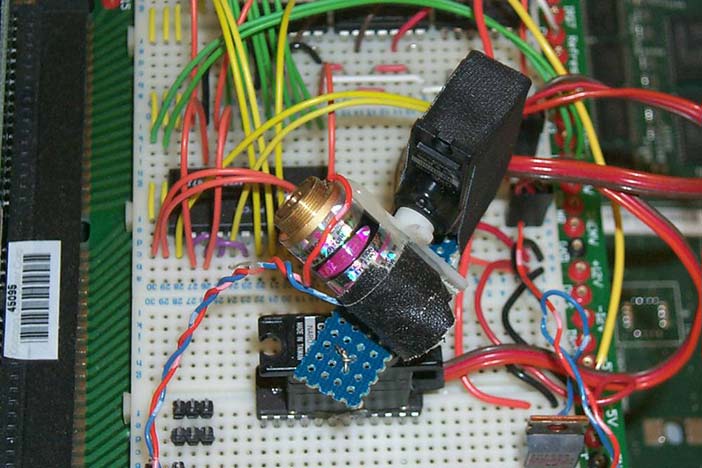

Figure 23.7: Early breadboard prototype of the aremac that Mann developed for the neckworn webcam+projector.

In Figure 5 the wearer is shopping for milk, but this could also have been a more significant purchase like a new car or a house. The wearer's wife, at a remote location, is looking through the camera by way of a projection screen in her living room in another country. She points a laser pointer at the screen, and a vision system in the projector tracks that and remotely operates the aremac in the wearer's necklace. Thus he sees whatever she draws or scribbles on her screen. This scribbling or drawing directly annotates the “reality” that he's experiencing.

In another application, the wearer can use hand gestures to control the wearable computer. The author referred to this system as “Synthetic Synesthesia of the Sixth Sense”, and it is often called “SixthSense” for short.

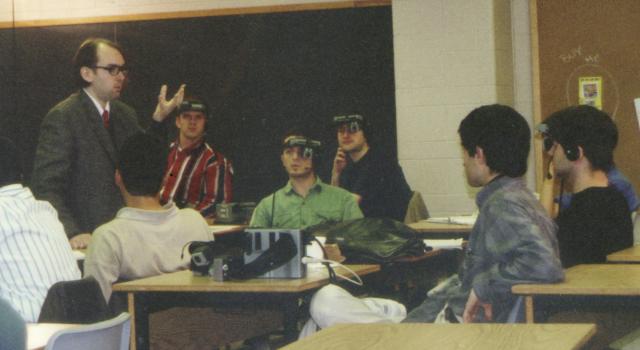

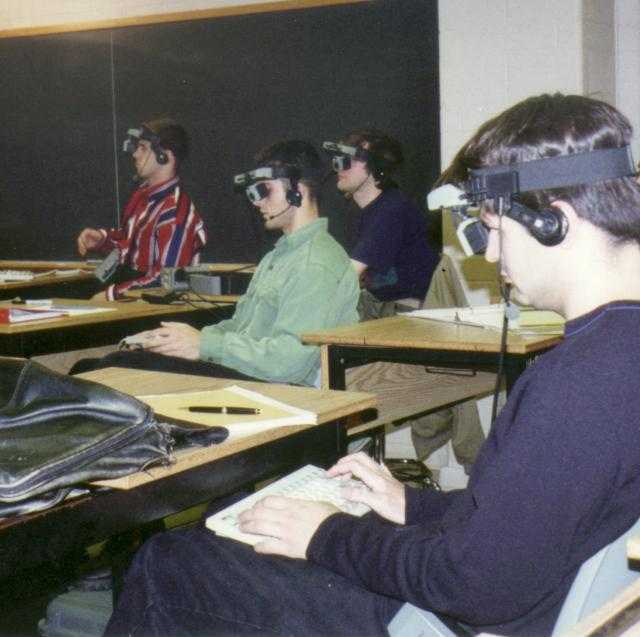

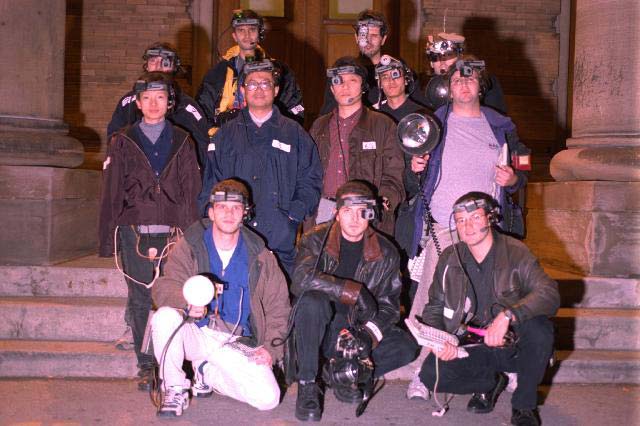

This wearable computer system was used as a teaching example at University of Toronto, where hundreds of students were taught how to build the system, including the vector-graphics laser-based infinite depth-of-field projector, using surplus components obtained at low cost. The system cost approximately $75 for each student to build (not including the computer). The software and circuit board design for this system was distributed to students under an Open Source licence, and the circuit board itself was designed using Open Source computer programs (PCB, kicad, etc.), see Mann 2001b.

23.4.2 Example 2: Diminished Reality

While the goal of Augmented Reality is to augment reality, an Augmented Reality system often accomplishes quite the opposite. For example, Augmented Reality often adds to the confusion of an already confusing existence, adding extra clutter to an already cluttered world. There seems to be a fine line between Augmented Reality and information overload.

Sometimes there are situations where it is appropriate to remove or diminish clutter. For example, the electric eyeglasses (www.eyetap.org) can assist the visually impaired by simplifying rather than complexifying visual input. To do this, visual reality can be re-drawn as a high-contrast cartoon-like world where lines and edges are made more bold and crisp and clear, thus being visible to a person with limited vision.
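The re-drawing step can be sketched in a few lines of Python. The example below is a minimal illustration, not the EyeTap implementation: it assumes a grayscale image stored as a list of rows of brightness values (0 to 255) and marks a pixel as a bold line wherever brightness changes sharply toward its right or lower neighbour. The function name `cartoonize` and the threshold of 50 are hypothetical choices.

```python
def cartoonize(gray, threshold=50):
    """Return a two-level image: 255 where local contrast is strong
    (an edge to be drawn boldly), 0 everywhere else."""
    height, width = len(gray), len(gray[0])
    out = [[0] * width for _ in range(height)]
    # The last row and column have no lower/right neighbour and stay 0.
    for y in range(height - 1):
        for x in range(width - 1):
            gx = gray[y][x + 1] - gray[y][x]  # horizontal brightness change
            gy = gray[y + 1][x] - gray[y][x]  # vertical brightness change
            if abs(gx) + abs(gy) >= threshold:
                out[y][x] = 255
    return out


# A dark region next to a bright region: only the boundary survives.
image = [
    [10, 10, 200, 200],
    [10, 10, 200, 200],
    [10, 10, 200, 200],
]
edges = cartoonize(image)
for row in edges:
    print(row)
```

Everything except the boundary between the two regions is discarded, which is the sense in which the visual input is simplified rather than made more complex.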

Another situation in which diminished reality makes sense is dealing with advertising. Our world is increasingly being cluttered with advertising and visual detritus. The electric eyeglasses can filter out unwanted advertising, and reclaim that visual space for useful information. Unwanted advertising, seen once, is inserted into a killfile (e.g. a file of particular ads that are to be reclaimed). For example, if the user is a non-smoker, he or she may decide to put certain cigarette ads into the killfile, so that when subsequently seen, they are removed. That space can then be overwritten with useful data. The following videos show examples:

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-ND (Creative Commons Attribution-NoDerivs 3.0 Unported).

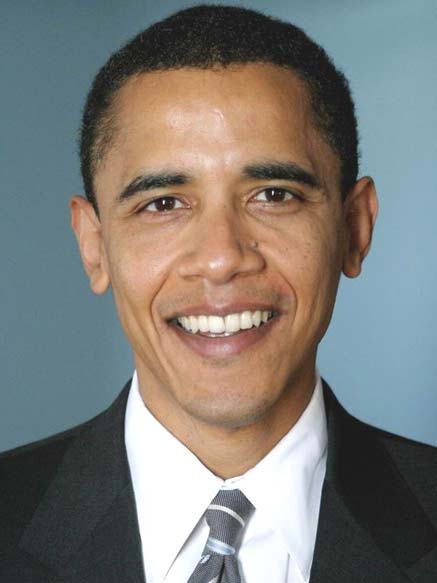

Author/Copyright holder: Courtesy of US Senate. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Author/Copyright holder: Courtesy of US Senate. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Figure 23.8 A-B: Simplifying rather than complexifying visual input. Such "diminished reality" may help the visually impaired

23.4.3 Example 3: Mediated Reality

Another concrete example is Mediated Reality. Whereas the Augmented Reality system shown above can only add to "reality", the Mediated Reality systems can augment, deliberately diminish, or otherwise enhance or modify visual reality beyond what is possible with Augmented Reality. Thus mediated reality is a proper superset of augmented reality.

Mediated Reality refers to a general framework for artificial modification of human perception by way of devices for augmenting, deliberately diminishing, and more generally, for otherwise altering sensory input. A simple example is electric eyeglasses (www.eyetap.org) in which the eyeglass prescription is downloaded wirelessly, and can be updated continuously in a way that's subject-matter specific or task-specific.

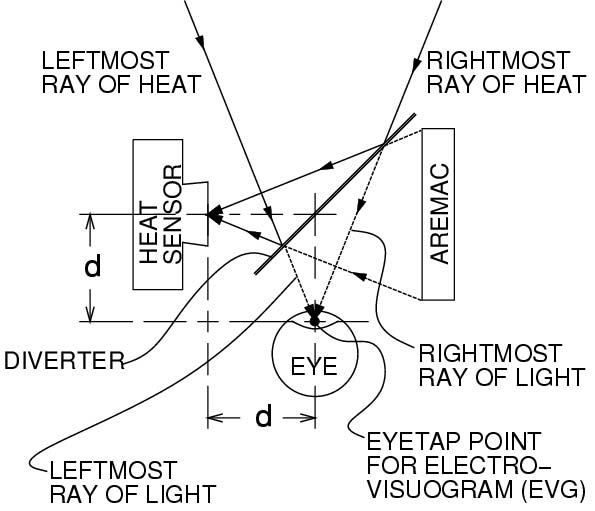

These electric eyeglasses also allow the wearers to reconfigure their vision into different spectral bands. For example, infrared eyeglasses allow us to see where people have recently stood on the ground (where the ground is still warm) or which cars in a parking lot recently arrived (because the engine is still warm). One can see how well the insulation in a building is doing, by observing where heat is leaking out of the building. A roofer can see where a roof membrane may be problematic, or where heat is leaking out of a building. Moreover, during roof repair, one can see the molten asphalt, and get a good sense of whether or not it is at the right temperature.

The electric eyeglasses can allow us to see in different spectral bands while actually repairing a roof, thus forming a closed feedback loop, as an example of Humanistic Intelligence. See Figure 9 A-B.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.9 A-B: A (at left): Author (looking down at the mop he is holding) wearing a thermal EyeTap wearable computer system for seeing heat. This device modified the author's visual perception of the world, and also allowed others to communicate with the author by modifying his visual perception. A bucket of 500 degree asphalt is present in the foreground. B (at right): Thermal EyeTap principle of operation: Rays of thermal energy that would otherwise pass through the center of projection of the eye (EYE) are diverted by a specially made 45 degree "hot mirror" (DIVERTER) that reflects heat, into a heat sensor. This effectively locates the heat sensor at the center of projection of the eye (EYETAP POINT). A computer controlled light synthesizer (AREMAC) is controlled by a wearable computer to reconstruct rays of heat as rays of visible light that are each collinear with the corresponding ray of heat. The principal point on the diverter is equidistant to the center of the iris of the eye and the center of projection of the sensor (HEAT SENSOR). (This distance, denoted "d", is called the eyetap distance.) The light synthesizer (AREMAC) is also used to draw on the wearer's retina, under computer program control, to facilitate communication with (including annotation by) a remote roofing expert

23.5 History of Wearable Computing

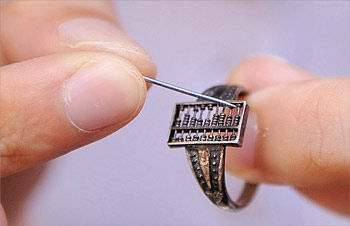

Depending on how broadly wearable computing is defined, the first wearable computer might have been an abacus hung around the neck on a string for convenience, or worn on the finger.

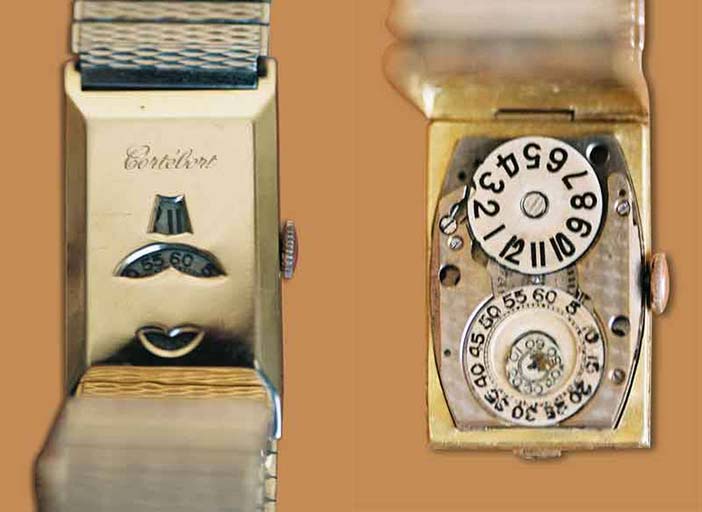

Or it might have been the pocket watches of the early 1500s, or the wristwatches that replaced them, since a timepiece is a computer of sorts (i.e. a device that computes or keeps time). See Figure 10 A-B.

Author/Copyright holder: Courtesy of Pirkheimer. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Wallstonekraft. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.10 A-B: Leftmost, one of the first pocket watches, called "The Nuremberg Egg", made around 1510. Rightmost, an early digital wristwatch from the 1920s.

More recently electronic calculators (which could be carried in a pocket or worn on the wrist) emerged, as did electronic timepieces. Other task-specific electronic circuits included a timing device concealed in a shoe to help the wearer cheat at a game of roulette (Bass 1985).

A common understanding of the term "computer" is that a computer is something that is programmable by the user, while it is being used, or that is of a relatively general-purpose nature (e.g. the user can change programs and run various applications).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

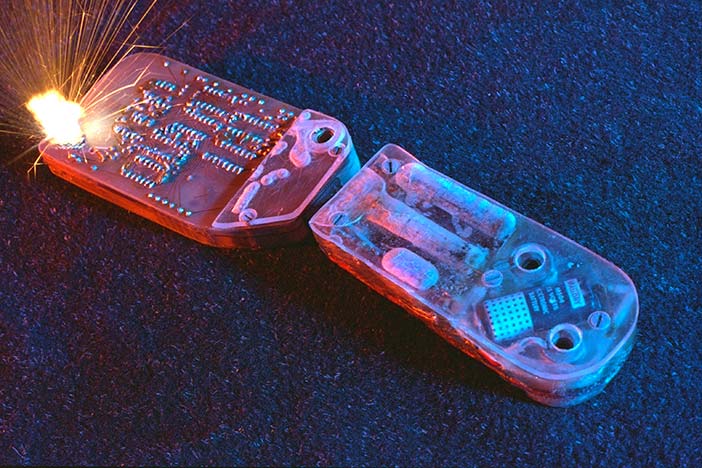

Figure 23.11 A-B: A timing device designed to be concealed in a shoe for use in roulette, invented by Ed Thorp and Claude Shannon in 1961 but first mentioned in Thorp 1966. Although it uses electronic circuits, it could not be programmed by the wearer, and ran only one application: a program that computed time. The devices described above are predecessors to what is commonly meant by the term "wearable computer".

Author/Copyright holder: Xinhua Photo and The People's Government of Anhui Province. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 23.12: Here is a "computer" (an abacus) and since it is a piece of jewelry (a ring), it is wearable. Such devices have existed for centuries, but do not successfully embody Humanistic Intelligence. In particular, because the abacus is task-specific, it does not give rise to what we generally mean by "wearable computer". For example, its functions and purpose (algorithms, applications, etc.) can't be reconfigured (programmed) by the end user while wearing it. In short, "wearable computer" means more than the sum of its parts, i.e. more than just "wearable" and "computer". The silver ring abacus shown here, with its beads, is 1.2 centimeters long and 0.7 centimeters wide, and dates back to the Chinese Qing Dynasty (1616-1911).

Thus a task-specific device like an abacus or wristwatch or timer hidden in a shoe is not generally what we think of when we think of "computer". Indeed, what made the computer revolution so profound was that the computer is a software re-programmable device capable of being used for a wide variety of complex algorithms and applications.

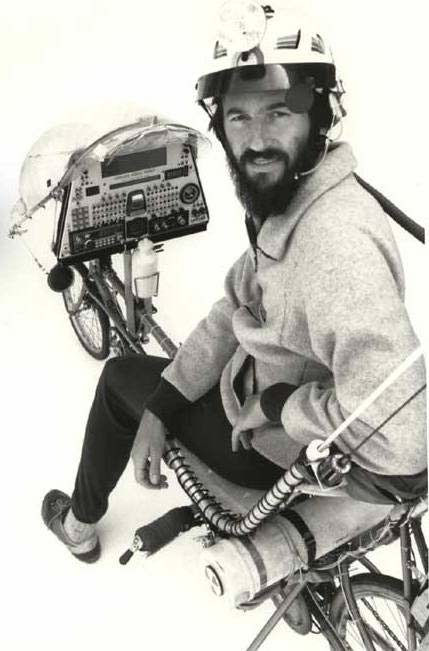

In the 1970s and early 1980s Steve Mann designed and built a number of general-purpose wearable computer systems, including various kinds of sensing, biofeedback, and multimedia computers such as wearable musical instruments, audio-based computers, and seeing aids for the blind.

In 1981 Mann designed and built a backpack-based general-purpose multimedia wearable computer system with a head-mounted display visible to one eye. The system provided text, graphics, audio, and video capability, and included a handheld chording keyer (for one-handed input). Because of its generality, this system fit the description of what most people would call a "computer" by today's standards.

The system allowed various computer applications to be run while walking around doing other things. The computer could even be programmed (i.e. new applications could be written) while walking around. Among the applications written for this wearable computer system was an application for photographically mediated reality and "lightvector painting" ("lightvectoring") used extensively throughout the 1980s. A variety of different systems were designed and built by Mann in the 1980s, and this marked a steady evolution in wearable computing toward something resembling ordinary eyeglasses by the late 1990s (Mann 2001b).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.13 A-B: The WearComp wearable computer by the late 1970s and early 1980s - a backpack based system

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.14 A-B: The WearComp wearable computer by the mid 1980s

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Webb Chappell. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 23.15 A-B: The WearComp wearable computer anno 1990 (leftmost) and by the mid 1990s (rightmost)

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 23.16 A-B: The WearComp wearable computer by the late 1990s, resembling ordinary eyeglasses

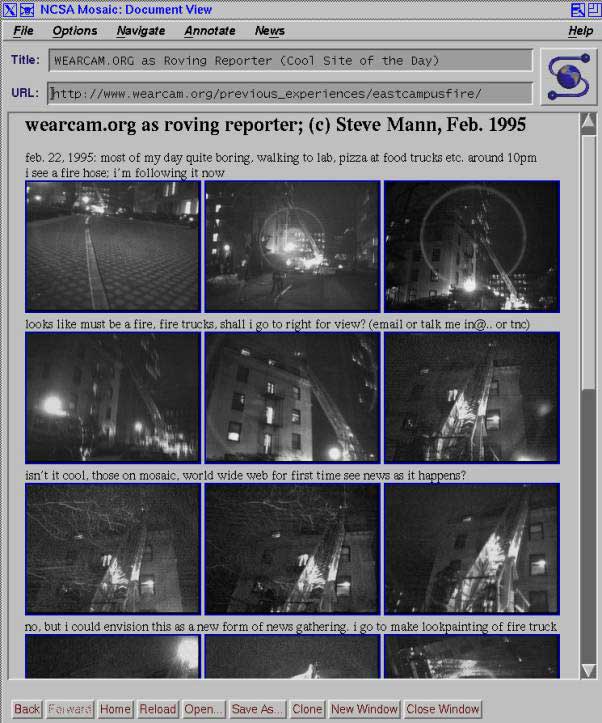

By 1994 Mann had streaming live video from his wearable computer to and from the World Wide Web, such that viewers to his web site could see what he was seeing, as well as annotate what he was seeing (i.e. "scribble on his retina" so to speak). This "Wearable Wireless Webcam" was the first embodiment of live webcasting from a wireless device.

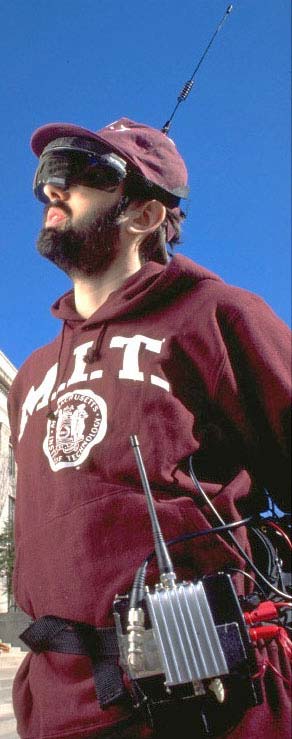

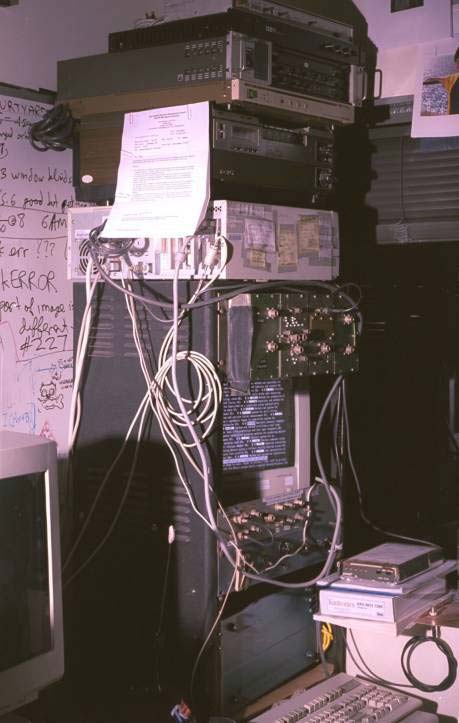

Because there were no wireless service providers at this time (much of this technology had not been invented yet), it all had to be built by hand. See Figure 17 A-B-C.

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.17 A-B-C: Early 1990s wireless communications system invented, designed, and built by Mann. Home-made wireless network (left) 19-inch relay rack with various equipment including microwave link to+from the roof of the tallest building in the city (middle) Steve Mann wearing the computer system (electric eyeglasses) while servicing the antenna on the roof of the tallest building in the city. This, along with a network of other antennas, was set up to obtain wireless connectivity. Mann applied for and obtained a 100 kHz spectral allocation through the New England Spectrum Management Council, 445.225 MHz, for a community of "cyborgs". In many ways amateur radio (ham radio) was the predecessor of the modern Internet, where radio operators would actively communicate from their homes (base stations), vehicles (mobile units), or bodies (portable units) with other radio operators around the world. Mann was an active ham radio operator, with callsign N1NLF.

Another ham radio operator, Steven K. Roberts, callsign N4RVE, designed and built Winnebiko-II, a recumbent bicycle with on-board computer and chording keyer. Roberts referred to his efforts as "nomadness", which he defined as "nomadic computing". For example, he could type while riding the bicycle (Roberts 1988).

Author/Copyright holder: Steven K. Roberts, Nomadic Research Labs. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 23.18: The Winnebiko II system, which integrated a wide range of computer and communication systems in such a way that they could be used effectively while riding, including a chord keyboard in the handlebars.

In 1989 a "pushbroom" type display using a linear array of 280 red LEDs became available from a company called Reflection Technology. The product was referred to as the Private Eye. Because of the lack of adequate grayscale on the 280 LEDs, and also due to the use of red light (which makes image display difficult to see clearly), the Private Eye was aimed mainly at text display, rather than the multimedia computing typical of the earlier wearable computing efforts.

However, despite its limitations, the Private Eye display product brought wearable computing to the mainstream, making it easy for hobbyists to put together a wearable computer from commercial off-the-shelf devices.

Among these hobbyists were Gerald "Chip" Maguire from Columbia University and Doug Platt, who built a system he called the "Hip-PC" (Bade et al 1990), and later Thad Starner at MIT.

In 1993, Starner built a system based on Platt's design, using a Private Eye display and a Handykey Twiddler keyer. That same year, Steve Feiner, et al, at Columbia University created an augmented reality system based on the Private Eye (Feiner et al 1993).

In 1990 Xybernaut Corporation was founded, originally called Computer Products & Services Incorporated (CPSI), the name being changed to Xybernaut in 1996. Xybernaut marketed wearable computing in vertical market segments such as to telephone repair technicians, soldiers, and the like. Around this time, another company, ViA Inc., produced a flexible wearable computer that could be worn like a belt, although there were some problems with the "rubber dockey" product that connected it to the outside world.

In 1998 Steve Mann made a working prototype of a wristwatch computer running GNU Linux. The wristwatch included video-conferencing capability and was demonstrated at the ISSCC 2000 conference in February. In July 2000, Mann's Linux wristwatch was featured on the cover of Linux Journal, Issue 75, along with an article about it. See Figure 19.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.19: A wristwatch computer with videoconferencing capability running the videoconferencing application underneath a transparent oclock, running XF86 under the GNUX (GNU+Linux) operating system. The computer, being general-purpose in nature, rather than task-specific (e.g. beyond merely keeping time, etc.) made this device fit what we typically mean by "wearable computer" (i.e. something that the wearer can reconfigure, program, etc., while wearing it, as well as something that implements Humanistic Intelligence). The project was completed in 1998. The SECRET function, when selected, conceals the videoconferencing window by turning off the transparency of the oclock, so that the watch then looks like an ordinary watch (just showing the clock filling the entire 640x480 pixel screen). The OPEN function cancels the SECRET function and opens the videoconferencing session up again. The system streamed live video at 7 fps, 640x480, 24 bit color.

In 2001 IBM publicly unveiled a prototype for a wristwatch computer running Linux, but it has yet to be commercially released.

The vision of wearable computing has yet to be fulfilled commercially, but the proliferation of portable devices such as smart phones suggests an evolution in that direction. Most notably, with the appropriate input and output devices, a smart phone can form a good central processor upon which to realize an embodiment of Humanistic Intelligence.

23.6 Wearable computing Input Output devices

For a wearable computer to achieve a full implementation of Humanistic Intelligence there needs to be a constancy of user interaction, or at least a low threshold for interaction to begin. (Much of the serendipity is lost if the computer must be taken out of a purse or pocket and started up.) Therefore a wearable computer typically has an output device such as a display that the user can sense, and an input device with which to communicate explicitly with the computer.

Starting with the input device, the first wearable computers used a keying device called a "keyer". The keyer is inspired by the telegraph keyer of ham radio (e.g. a Morse code input device), which evolved from the single key to iambic (or what the author calls "biambic"), then to triambic, and more generally, multiambic. The term "iambic" existed previously to describe two-key Morse code devices (e.g. Morse code comprised of iambs, a concept of rhythm borrowed from the meter of verse in poetry). Mann, upon hearing the word "iambic" in childhood, misunderstood it to mean "biambic", and as a result of this mistake he generalized the concept to "triambic" (3 buttons), and so on.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.20 A-B: Early (1978) wearable computing keyer prototypes invented, designed, and built by the author. These keyers were built into the hand grip of another device. Leftmost, is the author's PoV (Persistence of Vision) pushbroom text generator. Text keyed into the keyer was displayed on a linear array of lights waved through the air like a pushbroom, visible in a dimly lit space, either by persistence of human vision, or by long exposure photographs. Rightmost, a “painting with lightvectors” invention allows various layers to be built in a multidimensional “lightspace”. Note the keyer combined with the pointing device, which was connected to a multimedia wearable computer.

Copyright status: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 23.21: Input device for wearable computing: This EyeTap uses a framepiece to conceal the laser light source, which runs along an image fiber optic element, and the camera which runs along another image fiber optic element, the two fibers running in opposite directions, one along the left earpiece, and the other along the right earpiece. This fully functioning prototype of the EyeTap technology has a physical appearance that approximates that of ordinary eyeglasses. The result is a more sleek and slender design.

Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Figure 23.22: Original wearable computer input devices were inspired by the telegraph key -- This particular telegraph key is a J38 World War II-era U.S. military model

Morse code was an early form of serial communication, which in modern times is usually automated. In a completely automated teleprinter system, the sender presses keys to send an ASCII data stream to a receiver, and computation alleviates the need for timing to be done by the human operator. In this way, much higher typing speeds are possible.
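To make the timing burden concrete, the following Python sketch expands text into the sequence of key-down and key-up durations that a human operator would otherwise have to produce by hand. It assumes the conventional International Morse timing ratios (a dash lasts three dot units, with gaps of one, three, and seven units between elements, letters, and words); the function name `keying` and the letters-only table are illustrative choices.

```python
# International Morse code for the letters A-Z.
MORSE = {
    "A": ".-",   "B": "-...", "C": "-.-.", "D": "-..",  "E": ".",
    "F": "..-.", "G": "--.",  "H": "....", "I": "..",   "J": ".---",
    "K": "-.-",  "L": ".-..", "M": "--",   "N": "-.",   "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.",  "S": "...",  "T": "-",
    "U": "..-",  "V": "...-", "W": ".--",  "X": "-..-", "Y": "-.--",
    "Z": "--..",
}

# Durations measured in "dot units".
DOT, DASH = 1, 3
ELEMENT_GAP, LETTER_GAP, WORD_GAP = 1, 3, 7


def keying(text):
    """Return a list of (key_down, duration) pairs that sends `text`.

    Only the letters A-Z and spaces are supported in this sketch.
    """
    timeline = []
    for word_index, word in enumerate(text.upper().split()):
        if word_index:
            timeline.append((False, WORD_GAP))
        for letter_index, letter in enumerate(word):
            if letter_index:
                timeline.append((False, LETTER_GAP))
            for element_index, element in enumerate(MORSE[letter]):
                if element_index:
                    timeline.append((False, ELEMENT_GAP))
                timeline.append((True, DOT if element == "." else DASH))
    return timeline


sos = keying("SOS")
print(sum(duration for _, duration in sos), "dot units to send SOS")
```

Once a computer generates this timeline, the operator only has to select characters, which is what makes the higher typing speeds possible.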

In simple terms, a keyer is like a keyboard but without the board. Instead of keys fixed to a board like one might find on a desktop, the keys are held in the hand so that a person can press keys in mid air without having to sit at a desk, or the like.
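One way to see why a handful of hand-held keys can stand in for a full keyboard is to count chords: n keys pressed in combination give 2^n - 1 distinct non-empty chords, so five keys already yield 31. The Python sketch below enumerates the chords and assigns letters to them. The assignment order is an arbitrary assumption for illustration and is not the chord layout of any actual keyer described here.

```python
from itertools import combinations
from string import ascii_lowercase


def all_chords(num_keys):
    """Every non-empty combination of keys, as tuples of key indices."""
    return [
        chord
        for size in range(1, num_keys + 1)
        for chord in combinations(range(num_keys), size)
    ]


def build_chord_map(num_keys, symbols):
    """Assign one symbol per chord, in enumeration order (hypothetical layout)."""
    chords = all_chords(num_keys)
    if len(symbols) > len(chords):
        raise ValueError("not enough chords for the requested symbols")
    return {frozenset(chord): symbol for chord, symbol in zip(chords, symbols)}


def decode(chord_map, pressed_keys):
    """Look up the symbol for a set of simultaneously pressed keys."""
    return chord_map.get(frozenset(pressed_keys))


chord_map = build_chord_map(5, ascii_lowercase)
print(len(all_chords(5)), "chords available with 5 keys")
print(decode(chord_map, {0}), decode(chord_map, {0, 1}))
```

Because a chord is a set of keys pressed together, the order in which the fingers land does not matter, which suits one-handed use while walking.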

An important application of wearable computing is mediated reality, for which the input+output devices are the sensors and effectors which (a) capture sensory experiences the wearer experiences or would experience; and (b) stimulate these senses. An example is the EyeTap device which causes the eye itself, in effect, to function as if it were both a camera and a display. See Figure 23.

An EyeTap having the physical appearance of ordinary eyeglasses was also designed and built by the author, with materials and assistance provided by Rapp optical; see Figure 21.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.23: Mann's 'GlassEye™' invention, also known as an EyeTap device, is an input+output device that can connect to a smartphone or other body-borne computer, wirelessly, or by a connection to the AudioVisual ports. A person wearing an EyeTap has the appearance of having a glass eye, or an appearance as if the camera were inside the eye, because of the diverter which diverts eyeward-bound rays of light into the camera system to resynthesize them, typically in laser light. The wearer of the EyeTap sees visual reality as re-synthesized from the laser light (computer-controlled laser light source). Pictured here is designer Chris Aimone, who collaborated with Mann on this design.

23.7 Lifeglogging

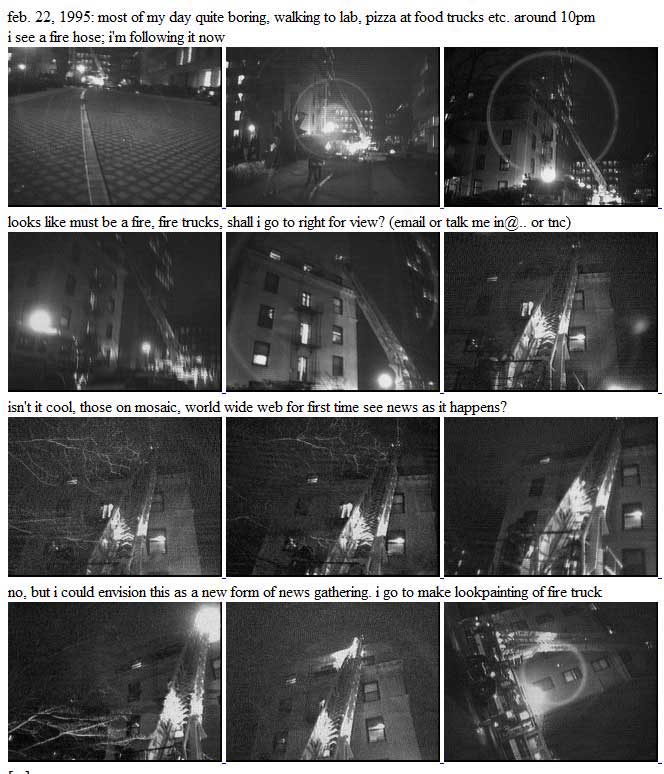

From 1994 to 1996, Steve Mann conducted a Wearable Wireless Webcam experiment in which he streamed live video from his wearable computer to and from the World Wide Web, on an essentially 24-hour-a-day basis. For the most part, the wearable computer streamed continuously, although the computer itself was not waterproof, so it needed to be set aside during showering or bathing. As a form of personal data capture, Wearable Wireless Webcam raised some new and interesting issues in the capture and archival of a person's entire life from their own perspective. It also opened up some new ideas such as the roving reporter, where day-to-day living can result in serendipitous capture of newsworthy events. See Figure 23.24 and Joi Ito's chronology of moblogging/lifelogging at the end of this chapter.

In another incident the author was the victim of a hit-and-run. The visual memory of the incident resulted in the arrest and prosecution of the perpetrator.

Wearable Wireless Webcam was at the nexus of art, science, and technology, i.e. it followed a tradition commonly used in contemporary endurance art. For example, it was akin to the living art and endurance art of Linda Montano and Tehching Hsieh who tied themselves to opposite ends of a rope, and remained that way 24 hours a day for a whole year, without touching one another.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.24: Screenshot from Steve Mann’s Wearable Wireless Webcam experiment from 1994-1996. Real-time webcast of everyday life resulted in the serendipitous capture of a newsworthy incident. Interestingly the traditional media like newspapers had no pictures of the incident, so this is the only photographic record of the incident.

Wearable Wireless Webcam also served a scientific purpose (in-lab experiments are more controlled, but they trade external validity for internal validity), and an engineering or technical purpose (inventing new technologies, etc.).

One interesting by-product of Wearable Wireless Webcam was the concept of lifeglogging, also known as cyborGLOGGING, glogging, lifelogging, lifecasting, or sousveillance.

The word "surveillance" derives from the French words "sur", meaning from above, and "veiller" meaning "to watch". Surveillance therefore means "watching from above" or "overwatching" or "oversight". While much has been written about surveillance and the relative balance between privacy and security (i.e. some people arguing for more surveillance and others arguing for countersurveillance), this argument is one-dimensional in that it functions like a one degree-of-freedom "slider" to choose more or less surveillance. But sousveillance ("sous" is French for "from below" so the English word would be "undersight") has recently emerged as an alternative.

Sousveillance refers to the recording of an activity by a participant in the activity, typically by way of small wearable or portable personal technologies (Mann et al. 2003, Mann 2004, Dennis 2008, Bakir 2010, Mulligan 2009, Thompson 2011, Brin 2011).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.25 A-B: Leftmost, a surveillance dome camera atop a lamp post serves as an "eye-in-the-sky" watching down on a parking lot. Rightmost: a surveillance dome as a necklace has a fisheye lens and various physiological sensors. Sensor camera designed and built and photographed by Steve Mann 1998. Mann presented this invention to Microsoft Corporation as the Opening Keynote at ACM Multimedia's CARPE in 2004 (http://wearcam.org/carpe/).

Author/Copyright holder: Microsoft Research, Cambridge. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Author/Copyright holder: Microsoft Research, Cambridge. Copyright terms and licence: All Rights Reserved. Reproduced with permission. See section "Exceptions" in the copyright terms below.

Figure 23.26 A-B: Around 2005, Microsoft built and researched their SenseCam prototype - a version of the neckworn camera. It was commercialized in 2009 (licenced to Vicon) and is now available as a product called Vicon Revue.

While surveillance and sousveillance both generally refer to visual monitoring (i.e. "veiller" being "to watch"), the terms also denote other forms of monitoring such as audio surveillance or sousveillance. In the audio sense (e.g. recording of phone conversations) sousveillance is referred to as "one party consent".

23.8 Future directions and underlying themes

23.8.1 Cyborgs, Humanistic Intelligence and the reciprocal relationship between man and machine

The wearable computer can provide many benefits, such as assistive technologies to help people see better, remember better, and function better, e.g. for the elderly to age gracefully, or for those with Alzheimer's disease to be able to remember and recognize names and faces.

One project, the author's Mindmesh, enables the blind to see, and people with visual memory impairment to remember and recall visual subject matter. The Mindmesh comprises a permanently attached skull cap with a combination of implantable and surface electrodes, as well as a mesh-based computing architecture in which individual processors are each responsible for eight electrodes. The Mindmesh, still in its early development stages, is evolving toward an apparatus that allows the user to plug various sensory devices "into their brain" in a sense. So a blind person will be able to plug a camera into their brain, or an Alzheimer's patient will be able to attain a form of autoassociative memory. See Figure 23.27 A-B.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.27 A-B: The Mindmesh is a mesh-based computing architecture currently under development, to allow various sensors and related devices to be "plugged into the brain". Some variations of the Mindmesh can be permanently attached, and are ruggedized to withstand the rigours of life, e.g. running through fountains or jumping into the ocean, etc. The author wishes to thank Olivier Mayrand, InteraXon, and the OCE (Ontario Centres of Excellence) for assistance with this work.

The Visual Memory Prosthetic (VMP) is thus combined with the new computational seeing aid, which can capture a cyborglog of a person's entire life and, hopefully, in the future be able to index into it. This is part of the author's “Silicon Brain” project in which the Mindmesh indexes into an autoassociative memory to assist persons with sensory integration disorder, or the like. As we replace more of our mind with external memory, these memories become part of us, and of our own personhood.
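An autoassociative memory is one that returns a complete stored pattern when it is cued with a partial or corrupted version of that pattern. The text does not describe how the Mindmesh implements this, so the following is only an illustrative sketch using a classic Hopfield-style network with Hebbian weights; the function names `train` and `recall` and the example patterns are assumptions made for the illustration, not the author's design.

```python
def train(patterns):
    """Build a Hebbian weight matrix from patterns of +1/-1 values."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j]
    return weights


def recall(weights, cue, max_steps=10):
    """Update all units together until the state stops changing."""
    state = list(cue)
    for _ in range(max_steps):
        new_state = []
        for i, row in enumerate(weights):
            field = sum(w * s for w, s in zip(row, state))
            if field == 0:
                new_state.append(state[i])  # tie: keep the current value
            else:
                new_state.append(1 if field > 0 else -1)
        if new_state == state:
            break
        state = new_state
    return state


if __name__ == "__main__":
    # Two hypothetical stored "memories", encoded as +1/-1 vectors.
    memory_a = [1, 1, 1, 1, -1, -1, -1, -1]
    memory_b = [1, -1, 1, -1, 1, -1, 1, -1]
    weights = train([memory_a, memory_b])

    # A cue that matches memory_a except for its first element.
    corrupted = [-1, 1, 1, 1, -1, -1, -1, -1]
    print(recall(weights, corrupted) == memory_a)
```

The point of the sketch is the access pattern: the memory is addressed by content (an imperfect version of the thing to be remembered) rather than by an explicit index or timestamp.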

Businesses and other organizations have a legal obligation not to discriminate against persons with special needs, or the like, or to treat persons differently depending on such technologies. Technologies like the Mindmesh must capture, process, and retain data in order to function as memory aids, and as they become widely adopted this may be interpreted as making recordings. The "Silicon Brain" of the Mindmesh thus asks the question "is remembering recording?". As more people embrace prosthetic minds, this distinction will disappear. Because of their legal obligation not to discriminate, businesses and other organizations will therefore not be able to prevent individuals from seeing and remembering, whether by natural biological or computational means.

But we don't even have to wait for the future widespread adoption of the Mindmesh to observe culture in contention. As mentioned earlier, smartphones are the precursor to full-on wearable computing, and their proliferation has already brought forth this very issue.

23.8.2 Privacy, surveillance, and sousveillance

Surveillance is an established practice, and while it is controversial, many of the controversies have been worked out and understood. Sousveillance, however, being a newer practice, remains, in many ways, yet to be worked out.

The proliferation of camera phones has itself resulted in numerous cases in which police and security guards have been caught in wrongdoing. There have also been numerous cases where police and security guards have tried to destroy evidence captured by ordinary citizens. In one case, a man named Simon Glik was arrested for recording actions of police officers on video. However, the United States Court of Appeals ruled in favor of Glik: after finding no wrongdoing on his part, the court found that the officers violated Glik's First and Fourth Amendment rights (footnote 1).

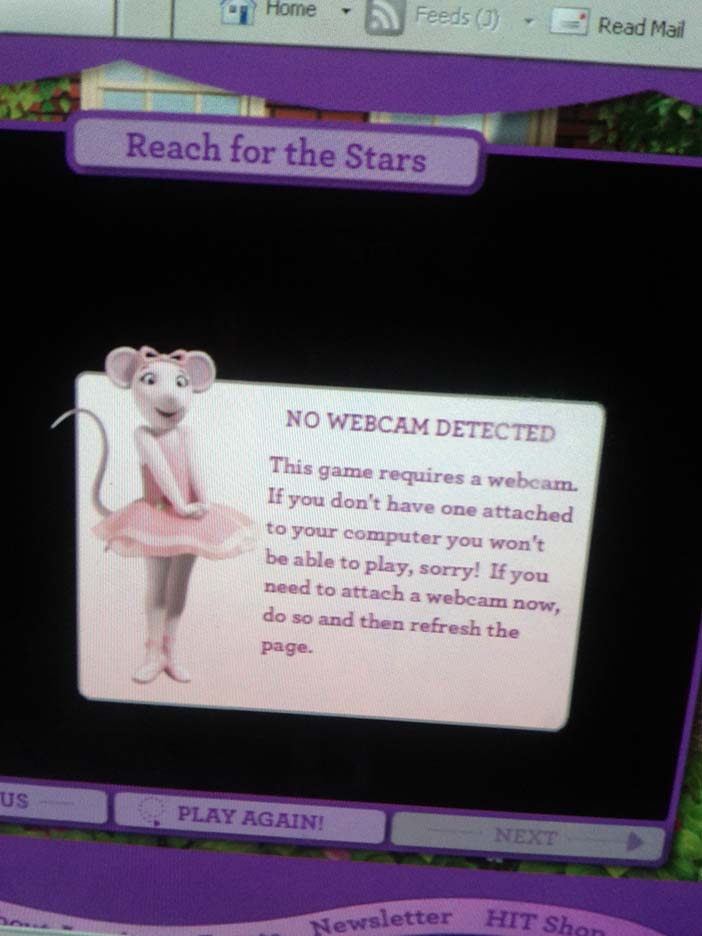

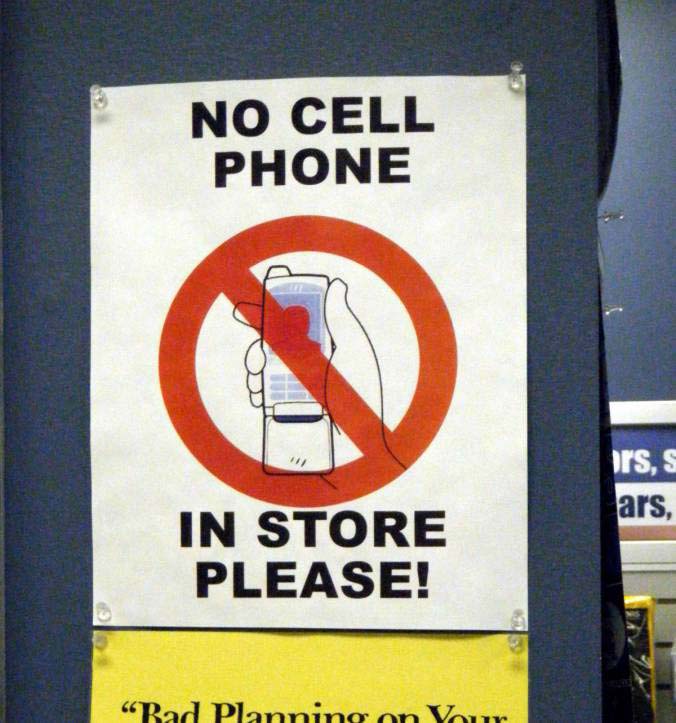

These controversies will not go away, though, as the lines between remembering and recording, and between the eye and the camera, blur. Many department stores and other establishments have signs up prohibiting photography and prohibiting cameras (see Figure 23.28 A-B-C), but they also have 2-dimensional barcodes designed to be read by patrons using their smartphones (see Figure 23.29 A-B). Thus they simultaneously encourage patrons to take photographs and prohibit patrons from taking photographs.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 23.28 A-B-C: Signs that say “No video or photo taking” and “NO CELL PHONE IN STORE PLEASE!” are commonplace, yet people are relying more and more on cameras and cellphones as seeing aids (hand-held magnifiers to help them read the very signs that prohibit their use, for example), and to access additional information that will help them make a purchase decision.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.29 A-B: “SCAN ME... Use your smartphone to scan this QR code...” says the box in a store where cellphones and cameras are forbidden

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.30: The irony of treating cameras and cellphones as contraband in semi-public places is that this trend seems to come around the same time as the proliferation of CCTV surveillance cameras. In the future, when a security guard demands a patron remove their electric eyeglasses, the guard may be liable when the patron trips and falls. The authority of the guard does not extend to mandating eyeglass prescriptions of their customers.

23.8.3 Copyright and ownership: Who do memories, information and data belong to?

This potential controversy extends to content. For example, the trend toward licensed software leads to a situation where licenses expire and the computer stops working when the license fee is not paid. When a computer program is helping someone see, a site license becomes a sight license. As our bodies and computing increasingly intersect and intertwine, as in the case of Wearable Computing, we must ask ourselves if we really want to live in a society where "your pacemaker firmware license is about to expire, please insert your credit card to continue living" (Mann 2003). This theme was the topic of an art installation at San Francisco Art Institute (SFAI), in response to their request for an exhibit on wearable computing. See Figure 23.31.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.31: Wearable computing exhibit at San Francisco Art Institute 2001 Feb. 7th. This exhibit comprised a chair with spikes that retract for a certain time period when a credit card is inserted to purchase a seating license.

By its very nature, wearable computing evokes a visceral response, and will likely fundamentally change the way in which people live and interact. In the future, devices that capture our lifelong memories, and share them in real-time, will be commonplace and worn continuously, and perhaps even permanently implanted. As an example, the author has invented and filed a patent for an artificial eye for people with vision in only one eye: an implantable eye that lets them see in stereo using a crosseyetap. Various filmmakers have approached the author requesting help embodying this invention. See, for example, Figure 23.33.

Author/Copyright holder: Courtesy of Steve Mann. Copyright terms and licence: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0).

Figure 23.33: Ocular implant artificial eye camera invented by Steve Mann (Canadian Pat. 2313693), and built by Rob Spence and others, in collaboration with Mann. The artificial eye has a camera built into it for persons with vision in only one eye; the eye may thus function as a wearable wireless webcam and cyborglogging device, and hopefully soon in the future as a vision replacement. With the computer and camera implanted fully inside the body, some people are able to stream live video without having to wear anything. The apparatus has the appearance of a normal eye, yet provides sousveillance and cyborglogging (today) and may provide vision replacement tomorrow.

23.9 Where to learn more

IEEE Special Issue on Wearable Computing and Humanistic Intelligence

Editor's Blog Sousveillance: Wearable Computing and Citizen "Undersight"

Barfield, Woodrow and Caudell, Thomas (eds.) (2001): Fundamentals of Wearable Computers and Augmented Reality. CRC Press

23.9.1 Wearable Computing Conferences

The first wearable computing conferences were:

The International Conference on Wearable Computing (ICWC) and

The International Symposium on Wearable Computing (ISWC)

23.9.2 Historical chronology of moblogging, also known as cyborglogging, lifeglogging, lifelogging, lifecasting, and the like

The following is a "chronology of articles, events and resources, About moblogging", written by Joi Ito:

February 1995 - wearcam.org as roving reporter Steve Mann

January 4, 2001 4:16p - Stuart Woodward first posts from his cellphone on Stuart Woodward's LiveJournal using J-Phone, Python and Qmail

January 6, 2001 15:09 - First reported posting from under the sea

Thursday, March 1, 2001 - First post by SMS to David Davies' SMSblog and his announcement on the Radio Userland Support List

January 11, 2002 - Radio Userland released with mail to post feature

January 13, 2002 - A text post from a Docomo P503i to Al's Radio Weblog

February 18, 2002 - Hiptop Photo Gallery started by Michael Morrissey (using procmail and PERL script later improved by Dave Bort who shared it to people including Mike Popovic who started Hiptop Nation)

February 2002 - Justin Hall posts pictures and text to Jiqoo.com using a J-Phone and Brian Hooper's code. (Link is now dead)

Fisher, Scott S., " An Authoring Tool Kit for Mixed Reality Experiences", International Workshop on Entertainment Computing (IWEC2002): Special Session on Mixed Reality Entertainment, May 14 -17 2002, Tokyo, Japan

Summer 2002 - Kuku Nipernaadide - Estonian moblog started by Peeter Marvet

2001 - Howard Rheingold coins the word "Smartmobs"

November 5, 2002 - Adam Greenfield coins the term "Moblogging"

October 1, 2002 - T-Mobile Sidekick launch moblog (Danger internal) 149 pictures in 24 hours

Friday October 4, 2002 9:15a - Hiptop Nation created by mikepop

October 31, 2002 - Hiptop Nation Halloween Scavenger Hunt

November 21 2002 - The Feature article From Weblog to Moblog by Justin Hall

Tuesday, November 26, 2002 - Stuart Woodward's first image posted from a cell phone

November 27, 2002 10:41p - Joi Ito's Moblog (using attached image mail to MT)

December 4, 2002 - Milano::Monolog blogs text from mobile phones (Japanese)

December 12, 2002 - Guardian article Weblogs get upward mobility

December 31, 2002 - New Year's Eve Moblog Blog-Misoka by JBA

January 8, 2003 - electricnews.net Start-up marries blogs and camera phones

January 8, 2003 - The Register Start-up marries blogs and camera phones

January 9, 2003 - Robert announces PhoneBlogge

23.10 References

Bade, J. Peter, Jr., Gerald Quentin Maguire and Bantz, David F. (1990). The IBM/Columbia Student Electronic Notebook Project. IBM, T. J. Watson Research Lab, Yorktown Heights, NY, USA

Barfield, Woodrow and Caudell, Thomas (eds.) (2001): Fundamentals of Wearable Computers and Augmented Reality. CRC Press

Bass, Thomas A. (2000): The Eudaemonic Pie. iUniverse

Brin, David (2011). Sousveillance: A New Era for Police Accountability. Retrieved 25 December 2011 from http://davidbrin.blogspot.com/2011/06/sousveillanc...

Dennis, Kingsley (2008): Keeping a Close Watch - The Rise of Self-Surveillance & the Threat of Digital Exposure. In Sociological Review, 56

Feiner, Steven K., MacIntyre, Blair and Seligmann, Dorée D. (1993): Knowledge-Based Augmented Reality. In Communications of the ACM, 36 (7) pp. 53-62

Geary, James (2002): The Body Electric: An Anatomy of the New Bionic Senses. Rutgers University Press

Herling, Jan and Broll, Wolfgang (2011). Video: Page title suppressed - page not yet published. Retrieved 4 November 2013 from [URL suppressed - page not yet published]

Knight, Brooke A. (2000): Watch Me! Webcams and the Public Exposure of Private Lives. In Art Journal, 59 (4)

Mann, Steve (2011). Video: Page title suppressed - page not yet published. Retrieved 4 November 2013 from [URL suppressed - page not yet published]

Mann, Steve (2003): Existential Technology: Wearable Computing is not the real issue. In Leonardo, 36 (1) pp. 19-25

Mann, Steve (2004): "Sousveillance": inverse surveillance in multimedia imaging. In: Schulzrinne, Henning, Dimitrova, Nevenka, Sasse, Martina Angela, Moon, Sue B. and Lienhart, Rainer (eds.) Proceedings of the 12th ACM International Conference on Multimedia October 10-16, 2004, New York, NY, USA. pp. 620-627

Mann, Steven (1998): Humanistic computing: "WearComp" as a new framework and application for intelligent signal processing. In Proceedings of the IEEE, 86 (11) pp. 2123-2151

Mann, Steve (1996b). Wearable, tetherless computer-mediated reality: WearCam as a wearable face-recognizer, and other applications for the disabled, MIT Tech Report number 361, Cambridge, Massachusetts. MIT. http://wearcam.org/vmp.htm

Mann, Steve (1996a): Smart Clothing: The Shift to Wearable Computing. In Communications of the ACM, 39 (8) pp. 23-24

Mann, Steve (2001b): Intelligent Image Processing. Wiley-IEEE Press

Mann, Steve (2001a): Guest Editor's Introduction: Wearable Computing-Toward Humanistic Intelligence. In IEEE Intelligent Systems, 16 (3) pp. 10-15

Mann, Steve and Niedzviecki, Hal (2001): Cyborg: Digital Destiny and Human Possibility in the Age of the Wearable Computer. Doubleday of Canada

Mann, Steve, Nolan, Jason and Wellman, Barry (2003): Sousveillance: Inventing and Using Wearable Computing Devices for Data Collection in Surveillance Environments. In Surveillance & Society, 1 (3)

Mulligan, Deirdre K. (2009). University Course on Sousveillance: SURVEILLANCE, SOUSVEILLANCE, COVEILLANCE, AND DATAVEILLANCE. Retrieved 1 December 2011 from Berkeley iSchool:

Roberts, Steven K. (1988): Computing Across America: The Bicycle Odyssey of a High-Tech Nomad. Information Today Inc

Thompson, Clive (2011). Clive Thompson on Establishing Rules in the Videocam Age. Retrieved 1 December 2011 from Wired Magazine: http://www.wired.com/magazine/2011/06/st_thompson_...

Thorp, Edward O. (1966): Beat the Dealer: A Winning Strategy for the Game of Twenty-One. Vintage