A socio-technical system (STS) is a social system operating on a technical base, e.g. email, chat, bulletin boards, blogs, Wikipedia, eBay, Twitter, Facebook and YouTube. Hundreds of millions of people use them every day, but how do they work? More importantly, can they be designed? If socio-technical systems are social and technical, how is computing both at once?

This chapter may be used as part of an STS design course, so each part ends with a set of discussion questions that students can investigate and report back on to the class. Anyone wishing to set up a course in the design of social technologies is welcome to use this resource.

24.1 Part 1: The evolution of computing

Evolution is systems evolving to higher levels.

24.1.1 A short history

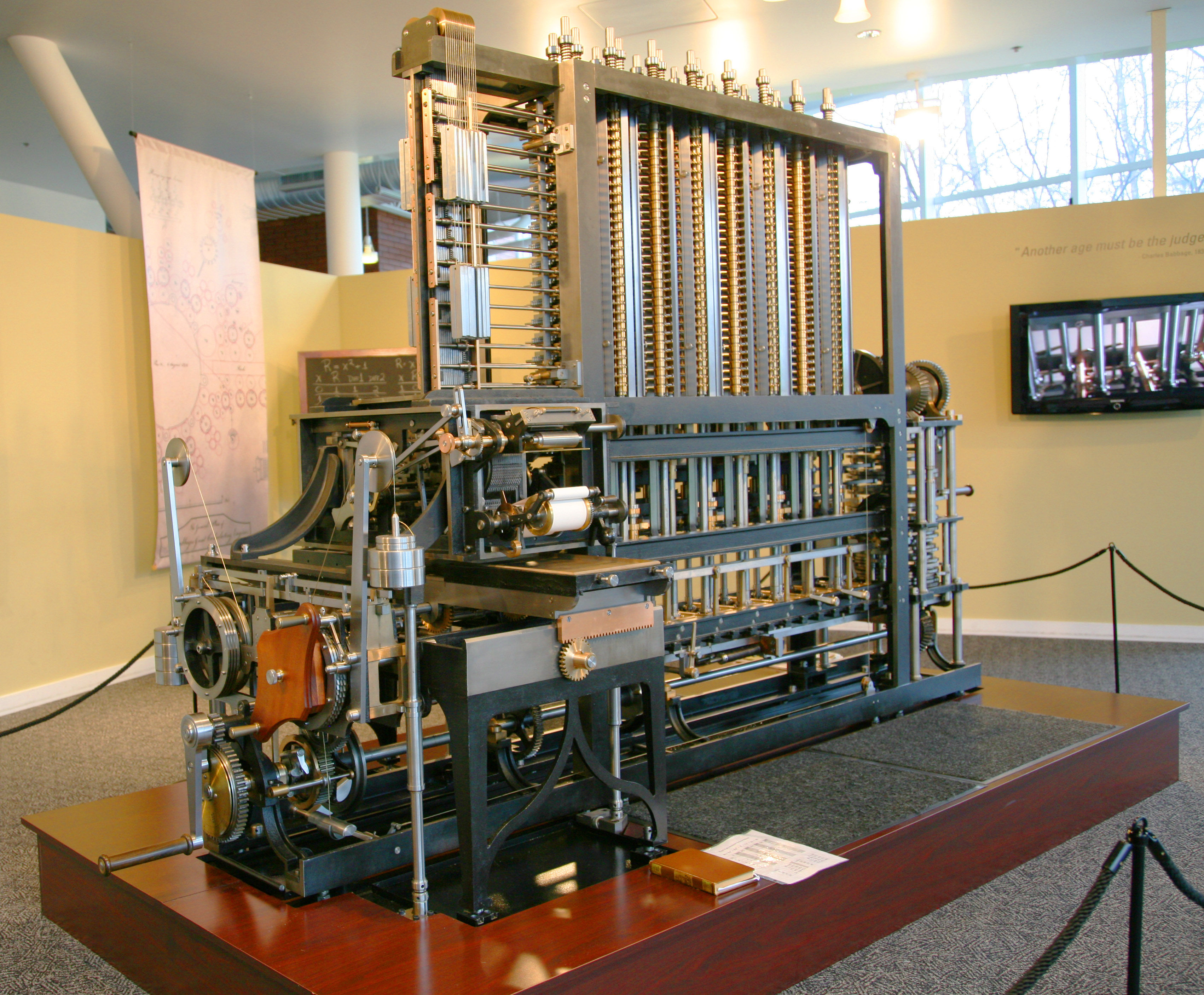

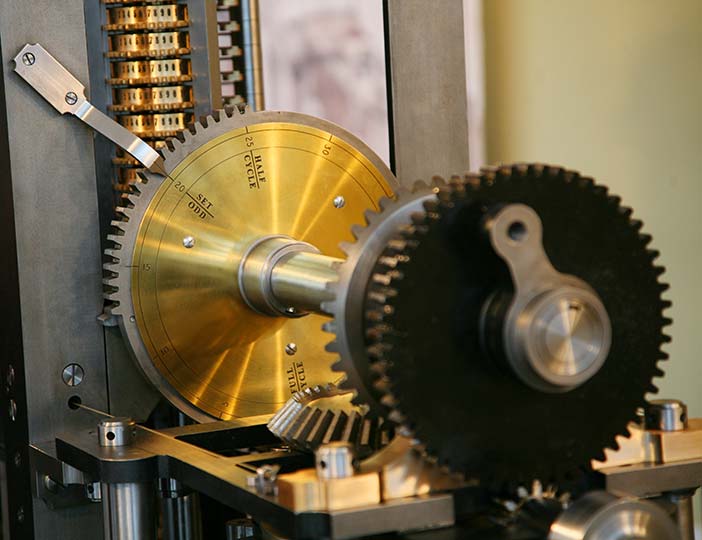

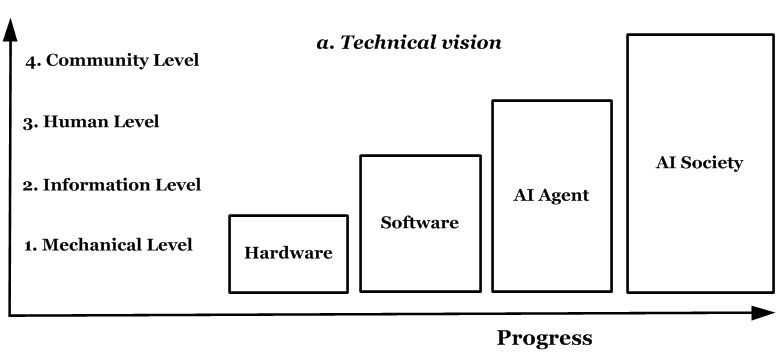

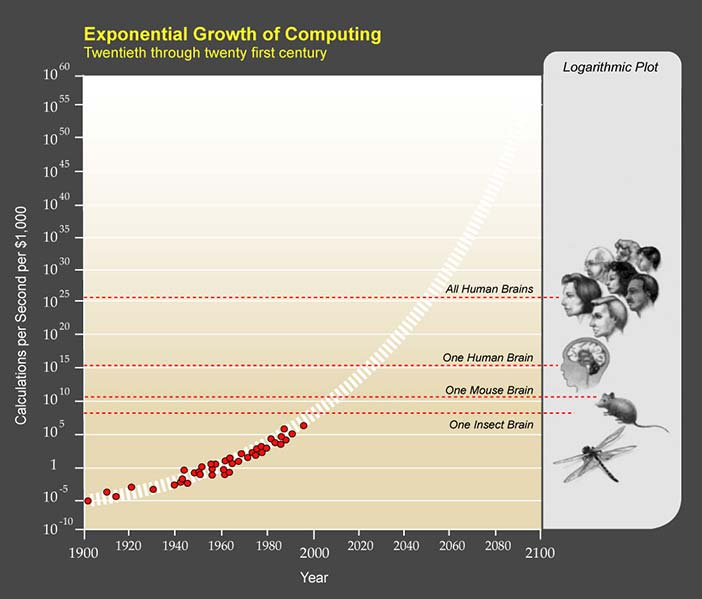

The first computer was conceived of as a machine of cogs and gears (Figure 1). It became operational in the 1950s and 1960s with the invention of semiconductors. In the 1970s, a hardware company called IBM (footnote 1) was a computing leader. In the 1980s software became more important, so by the 1990s a software company called Microsoft (footnote 2) took the computing lead, giving ordinary people tools like word-processing. During the 1990s, computing became more personal, as the World Wide Web turned Internet URLs into web site names that people could read (footnote 3). Then a company called Google (footnote 4) offered the ultimate personal service, free access to the vast public library we call the Internet, and everyone's gateway to the web became the new computing leader. In the 2000s, computing evolved yet again, to become a social medium as well as a personal tool. So now Facebook challenges Google, as Google challenged Microsoft, as Microsoft challenged IBM.

Author/Copyright holder: Courtesy of Jitze Couperus. Copyright terms and licence: CC-Att-SA-2 (Creative Commons Attribution-ShareAlike 2.0 Unported).

Figure 24.1: Charles Babbage (1791-1871) designed the first automatic computing engines. He invented computers but failed to build them. The first complete Babbage Engine was finished in London in 2002, 153 years after it was designed. Difference Engine No. 2, built faithfully to the original drawings, consists of 8,000 parts, weighs five tons, and measures 11 feet long. The one pictured above is Serial Number 2 and is located in Silicon Valley at the Computer History Museum in Mountain View, California.


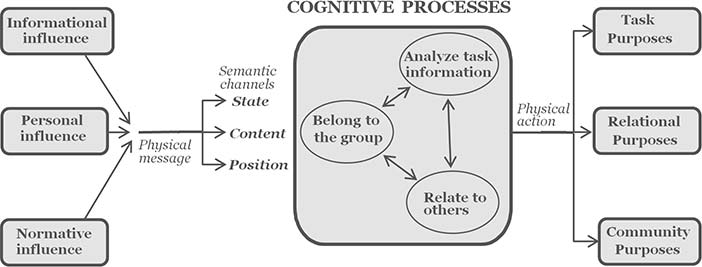

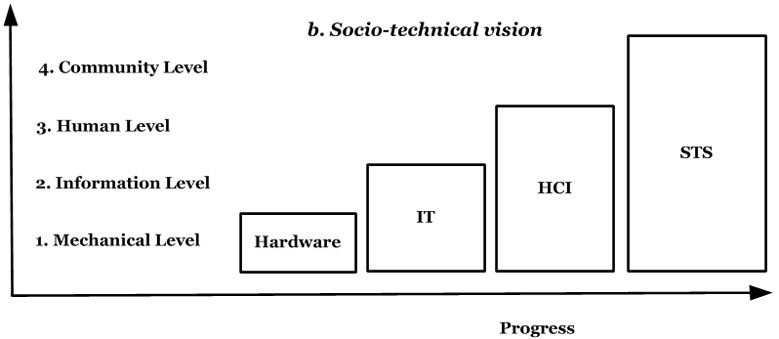

Figure: Details from Babbage's difference engine

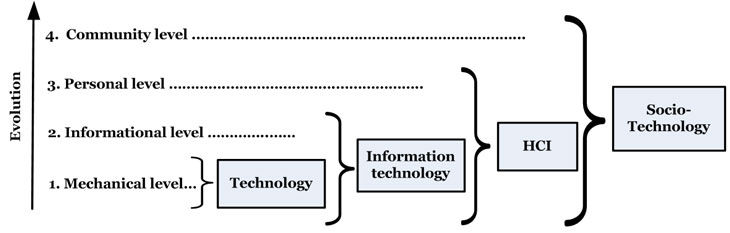

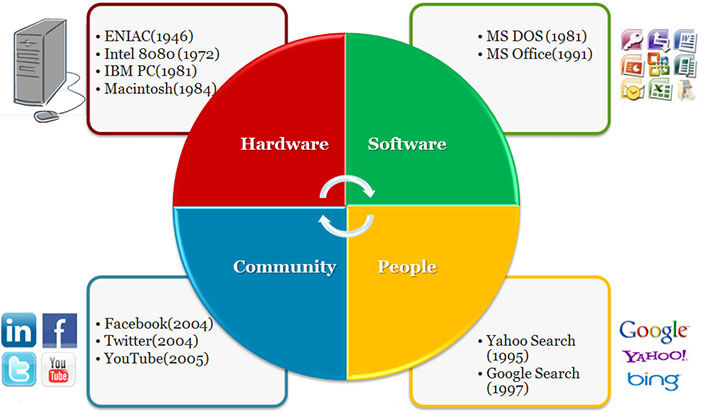

Computing has re-invented itself every decade or so (Figure 2). What began as just hardware became about software, then people, and now communities. A physical machine exchanging electricity became software exchanging information, people exchanging meaning and now communities exchanging memes (footnote 5). The World Wide Web was initially an information web (Web 1.0), then an active web (Web 2.0), now a semantic web (Web 3.0) and is becoming a social web (Web 4.0). Each evolutionary step built on the previous, as social computing needs personal computing, personal computing needs software and software needs hardware. The corresponding evolution of computing design culminates in socio-technical design.

When the software era arrived, hardware continued to evolve but hardware leaders like IBM no longer dominated computing as before. The evolution of computing changed business fortunes by changing what computing is. Selling software makes more money than selling hardware because it changes more often. Web queries are even more volatile, but Google gave a service away for free and then sold advertising around it — it sold its services to those who sold theirs. The business model changed, because selling knowledge is not like selling software. Facebook's business model is still evolving. It now challenges Google because we relate to family and friends more than we query knowledge - social exchanges have more trade potential than knowledge exchange.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.2: The computing evolution

Yet friends are just social dyads. It is naive to think that social computing will stop at a unit of two. Beyond friends are tribes, cities, city-states, nations and meta-nations like the European Union. A community isn't like a friend, as one has a friend but belongs to a community. With a world population at seven billion and growing, Facebook's 900 million active accounts are just the beginning. The future is computer support for acting groups, families, tribes, nations and eventually a global community, e.g. a group browser for people to tour the Internet together, commenting to each other as they go. Each could take turns to pick the next site or follow an expert host. If socio-technology is just beginning, we need to understand how it works.

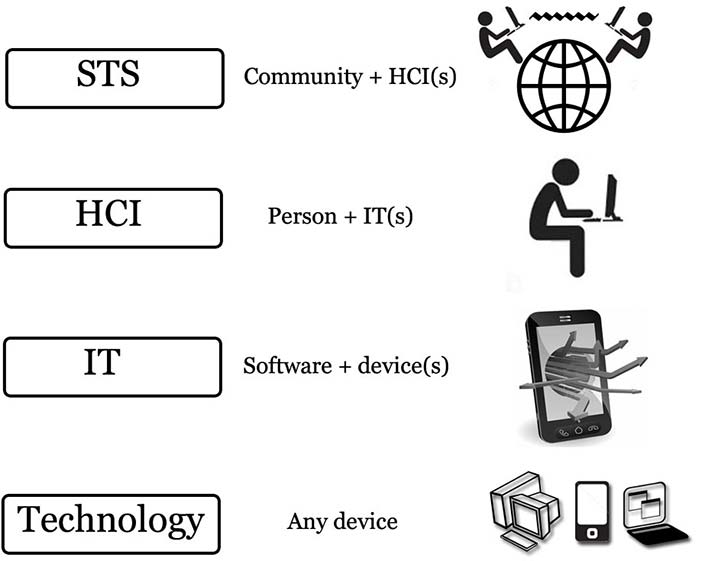

24.1.2 Computing levels

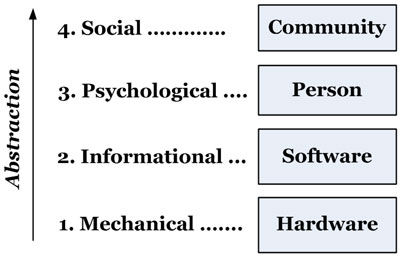

The basis of socio-technical design is general systems theory (Bertalanffy, 1968). It describes what the disciplines of science have in common: sociologists see social systems, psychologists cognitive systems, computer scientists information systems and engineers hardware systems. All refer to systems. In general systems theory, no discipline has a monopoly on science and all are valid. Discipline isomorphies (footnote 6) arise from common system properties, e.g. a social agreement measure that matched a biological diversity measure (Whitworth, 2006). Mechanical, logical, psychological and social systems are studied by engineers, computer scientists, psychologists and sociologists respectively. These perspectives in computing give levels (Table 1). Computing then began at the mechanical level, evolved an information level, then acquired human and community levels.

| Level | Examples | Discipline |
| --- | --- | --- |
| Community | Norms, culture, laws, zeitgeist, sanctions, roles | Sociology |
| Personal | Semantics, attitudes, beliefs, feelings, ideas | Psychology |
| Informational | Programs, data, bandwidth, memory | Computer science |
| Mechanical | Hardware, motherboard, telephone, FAX | Engineering |

Table 24.1: Computing levels as discipline perspectives

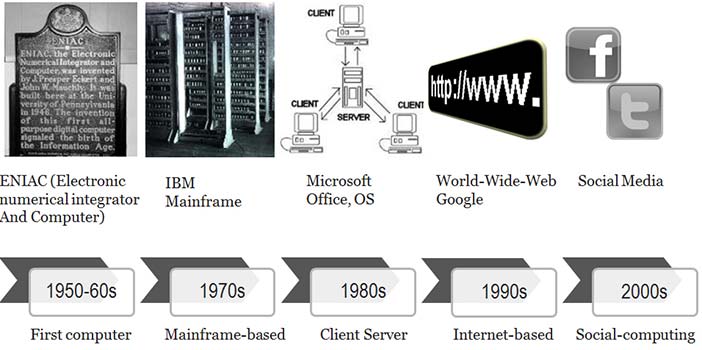

Levels also help clarify terminology. In Figure 3, a technology is any tool people build to use, e.g. a spear is a technology (footnote 7). So a hardware device alone is a technology, but information technology (IT) is both hardware and software. Likewise, computer science (CS) (footnote 8) is a hybrid of mathematics and engineering, not either alone. So information technology is not a sub-set of technology, nor is computer science a sub-set of engineering.

Human computer interaction (HCI) is then a person plus an IT system, with physical, informational and psychological levels. Just as IT isn't hardware, so HCI isn't IT, but the child of IT and psychology. HCI links CS to psychology as CS linked engineering to mathematics. HCI introduces human requirements to computing and HCI systems turn information into meaning.

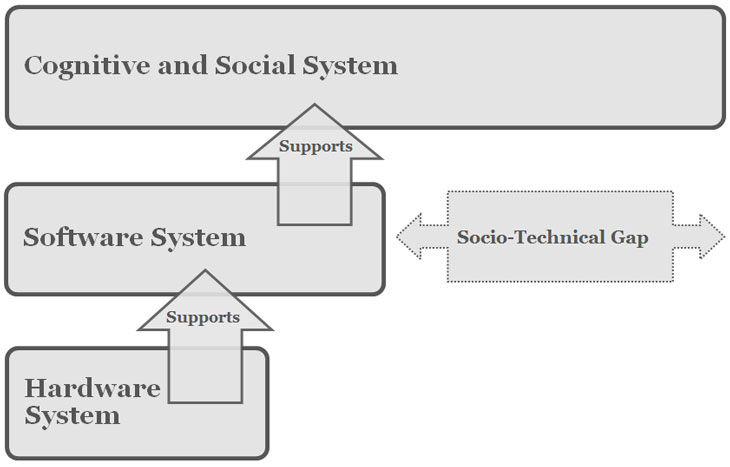

Finally, people can form an online community with hardware, software, personal and community levels. If the first two levels are technical and the last two social, the result is a socio-technical system (STS). If technology design is computing built to hardware and software requirements, then socio-technical design is computing built to personal and community requirements as well. In socio-technical systems, the new "user" of computing is the community (Whitworth, 2009b).

Currently, many terms refer to human factors in computing: Engineers extend the term IT to refer to applications built to user requirements; Business calls people and organizations using computing information systems (IS); Education prefers information communication technology (ICT) to describe computer communication; Health chose the term informatics. Whether your preferred term is IT, IS, ICT or informatics doesn't change the basic idea, that people are now part of computing. This chapter uses the term HCI for consistency (footnote 9).

In this pan-discipline view, all of Figure 3 is computing, whose complexity arises from its discipline promiscuity. Socio-technology then designs a computer product as a social and technical system. Limiting computing to hardware (engineering) or software (computer science) denies its obvious evolution.

Levels in computing are ways to view it, not ways to partition it, e.g. a pilot in a plane is one system with different levels, not a mechanical part (the plane) plus a human part (the pilot). The physical level includes not just the plane body but also the pilot's body, as both have weight, volume etc. The information level isn't just the onboard computer, but also the neuronal processing in the pilot’s brain that generates the qualia (footnote 10) of human experience.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.3: Computer system levels

The human level is the pilot, who sees the plane as an extension of his or her body, like extra hands or feet, and computer data like extra eyes or ears. On this level, the pilot is the actor and the plane is just a tool, so in an aerial conflict the tactics of a piloted plane will differ from those of a computer drone. Finally, at the community level, a plane in a squadron may do things it would not do alone, e.g. expose itself as a decoy so others can attack the enemy.

24.1.3 The reductionist dream

The reductionist dream, based on logical positivism (footnote 11), is that only the physical level is "real", so everything else must reduce to it. Yet when Shannon and Weaver defined information as a choice between physical options, the options were physical but the choosing wasn't (Shannon and Weaver, 1949). A message physically fixed in one way has by this definition zero information, because the other ways it could have been don't exist physically (footnote 12). It is strange but logically true that hieroglyphics one can't read have in themselves no information at all. It is reader choices that generate information, which until deciphered is unknown. If this were not so, data compression couldn't put the same data in a physically smaller signal, which it can. Information is defined by the encoding, not the physical message. If the encoding is unknown, the information is undefined, e.g. an electronic pulse sent down a wire could be a bit, or a byte (an ASCII “1”), or, if it stands for the first word of a dictionary, say Aardvark, many bytes. The information a message conveys depends on the decoding process, e.g. reading only every 10th letter of a text gives a new message. Information doesn't exist physically, as it can't be touched or seen. Physicality is necessary for it, but not sufficient.
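The dependence of information on the available options and on the decoding can be made concrete in a short sketch. The Python below is illustrative only: the function names `bits_for_choice` and `every_nth_letter` and the sample data are assumptions introduced here, not anything from Shannon and Weaver. It uses the standard measure of log2(n) bits for one choice among n equally likely options.

```python
import math
import zlib


def bits_for_choice(n_options: int) -> float:
    """Information, in bits, of one choice among n equally likely options."""
    return math.log2(n_options)


def every_nth_letter(text: str, n: int) -> str:
    """A different decoding rule: keep only every n-th character."""
    return text[n - 1::n]


# A message fixed in one way offers no alternatives, so it carries zero bits.
fixed = bits_for_choice(1)
# The same pulse decoded as one of 2 values (a bit) or one of 256 values (a byte).
as_bit = bits_for_choice(2)
as_byte = bits_for_choice(256)

# The same physical text yields a different message under a different decoding.
text = "abcdefghijklmnopqrstuvwxyz"
decoded = every_nth_letter(text, 10)

# Compression: the same data carried by a physically smaller signal.
data = b"the same data " * 50
packed = zlib.compress(data)
restored = zlib.decompress(packed)
```

In this sketch the physical signal never changes what it is; only the assumed set of options or the decoding rule changes, and the information changes with it.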

That mathematical laws are real even though they aren't concrete is mathematical realism (Penrose, 2005). Mathematics is a science because its constructs are logical, not because they are physical. They are real because we conceive them, not because they physically exist. That they are later physically useful is another matter. Cognitive realism is the case that cognitions are also real because we experience them. Mathematical or cognitive constructs defined in physical terms become empirical (footnote 13), and so the feedback loop of science still works, e.g. fear measured by heart rate is a cognitive construct measured in physical terms. Yet fear isn't just heart rate, as it can also be measured by pupil dilation, blood pressure, etc. Even terms like "red" aren't physical facts as the light spectrum is continuous, with no red frequency section.

The physical level alone is what it is. It has no choices so has no information, i.e. reductionism denies information science. In physics, it gave a clockwork universe, where each state perfectly defined the next. Quantum theory flatly denied this, as quantum events are by definition random, i.e. explained by no physical history. Either quantum theory is wrong, which it has never been, or reductionism, that only the physical is real, is a naive nineteenth century assumption that has had its day. If all science were physical, all science would be physics, which it is not.

Physics today has a quantum level, i.e. a primordial non-physical (footnote 14) reality below physical reality (Whitworth, 2011). Yet long ago, the great 18th Century German philosopher Kant argued that reality is just a view, that we don't see things as they are in themselves (Kant, 1999) (footnote 15). Levels return the observer to science, as quantum theory's measurement paradoxes demand. In philosophy, psychology, mathematics, computing and quantum physics, levels apply (footnote 16).

24.1.4 Science as a world view

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

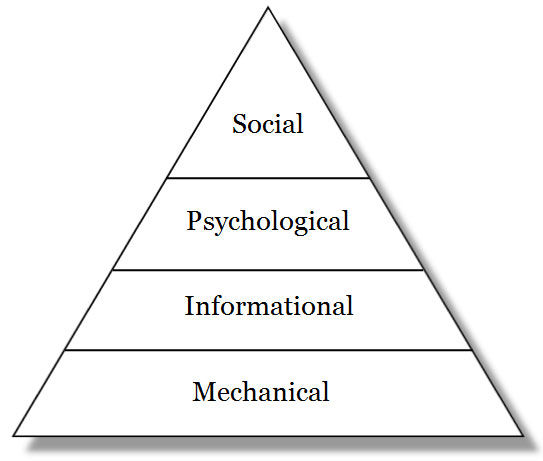

Figure 24.4: Computing levels as abstract views

A level is a world view, a way of seeing that is complete and consistent in itself. In the mechanical view, a computer is all hardware, but in the informational view it is all data. One can't point to a program on a motherboard nor a device in a data structure. A mobile phone doesn't have hardware and software parts, but is hardware or software in toto. Hardware and software are ways to look at it, not ways to divide it up. Hardware becomes software when we view computing in a different way. The switch is like swapping glasses to see the same object close up. The disciplines of science are world views, like walking around an object to see it from different perspectives.

Levels are a fact of science, e.g. to describe World War II as a "history" of atomic events would be ridiculous. A political summary is more useful. Yet levels emerge from each other, as higher abstractions form from lower ones (Figure 4). Information needs hardware choices, cognitions need information flows, and communities need common cognitions. Conversely, without physical choices there is no information, without information there are no cognitions and without cognitions there is no community (footnote 17).

A world view has properties, like being:

Essential. One cannot view a world without first having a point of view.

Empirical. Based on world interaction, e.g. information is empirical.

Complete. A world view consistently describes a whole world.

Subjective. One chooses a view before viewing, explicitly or implicitly.

Exclusive. One can't view two ways at once, as one can't sit in two places at once. (footnote 18)

Emergent. One world view can emerge from another.

Levels as views must be chosen before viewing, i.e. pick a level then view.

Yet how we see the world affects how we act, e.g. if we saw ultra-violet light, as bees do, previously dull flowers would become bright. Every flower shop would have to change its stock. Levels as higher ways to view a system are also new ways to operate and design it, e.g. new software protocols like Ethernet can improve network performance as much as new cables.

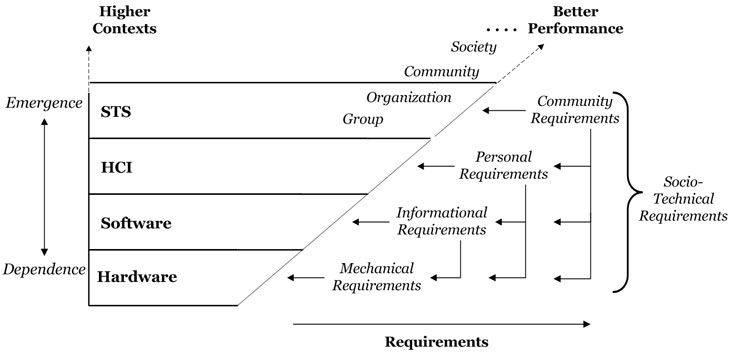

New ways to view computing affect how we build it, and how social levels affect technology design is socio-technical design. Level requirements cumulate, so socio-technical design includes hardware, software and HCI requirements (Figure 5). What appears as just hardware now has requirements outside itself, e.g. smart-phone buttons mustn't be too small for people's fingers. Levels are why computer design has evolved from hardware engineering to socio-technology.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.5: Computing applications and levels

For a village beside a factory, community needs come second to factory productivity, with ethics an after-thought, but for socio-technology, the community and the technology are one. If social needs are not met there is no community, and if there is no community the technology fails to perform as expected. Socio-technical design is the application of community requirements to people, software and hardware. The following sections derive each computing level from the previous.

24.1.5 From hardware to software

Hardware is any physical computer part, e.g. mouse, screen or case. It doesn't "cause" software nor is software a hardware output, as physical systems have physical outputs. We create software by seeing choice in physicality. Software needs hardware but it isn't hardware, as the same code can run on a PC, Mac or mobile phone. An entity-relationship diagram can work for any physical storage, whether disk, CD or USB, as data entities aren't disk sectors. Software assumes some hardware but no specific one.

If any part of a device acquires software, the whole system gets an information level, e.g. a computer is information technology even though its case is just hardware. We describe a system by its highest level, so if the operating system "hangs" (footnote 19) we say "the computer" crashed, even though the computer hardware is working fine. Rebooting fixes the software problem with no hardware change, so a software system can fail while the hardware still works perfectly.

Conversely, a computer can fail as hardware but not software, if a chip overheats. Replace the hardware part and the computer works with no software change needed. Software can fail without hardware failing and hardware can fail without software failing. New hardware needn't change software and new software needn't change hardware. Each level has its own performance requirements: if software fails we call a programmer, but if hardware fails we call an engineer.

Software requirements can be met by hardware operations, e.g. reading a logical file takes longer if the file is fragmented, as the drive head must jump between physically distant disk sectors. Defragmenting a disk improves software access by putting files in adjacent physical sectors. File access improves even though the physical drive read rate hasn't changed, i.e. hardware actions can meet software goals. Likewise, database and network requirements gave rise to new hardware chip commands. The software goal of better information throughput also becomes the hardware goal, e.g. physical chip design today is as much about caching and co-processing as it is about cycle rate.
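A toy model shows how rearranging the physical layout serves a software goal. The sketch below treats a disk as a row of numbered sectors and counts how far the head travels to read one file's blocks in logical order. The `head_travel` function and the sector numbers are hypothetical, and real drives are far more complex than this.

```python
def head_travel(sectors: list[int], start: int = 0) -> int:
    """Total distance the head moves to read the sectors in the given order."""
    travel = 0
    position = start
    for sector in sectors:
        travel += abs(sector - position)
        position = sector
    return travel


# The same logical file of five blocks, stored two ways (hypothetical layouts).
fragmented = [40, 3, 97, 12, 65]      # blocks scattered across the disk
defragmented = [10, 11, 12, 13, 14]   # blocks in adjacent sectors

fragmented_cost = head_travel(fragmented)
defragmented_cost = head_travel(defragmented)
```

The head's speed is the same in both cases. Only the layout differs, yet the logical file read needs far less physical movement after defragmenting.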

24.1.6 From software to HCI

HCI began with the personal computing era. Adding people to the computing equation meant that getting technology to work was only half the problem - the other half was getting people to use it. Web users who didn't like a site just clicked on. Web sites that got more hits succeeded because, given equal functionality, users chose the more usable product (Davis, 1989), e.g. Word replaced WordPerfect because it was more usable - users who took a week to learn WordPerfect picked up Word in a day. As computing previously gained a software level, it now gained a human level.

Human-computer interaction (HCI) is a person using IT, as IT is software using hardware. As computer science merges mathematics and engineering, but is neither, so HCI merges psychology and computer science, but is neither. Psychology is the study of people, and computer science the study of software, but the study of people using software, or HCI, is new. It is another computing discipline that cuts across other disciplines. HCI applies psychology to computing design, e.g. Miller's paper on cognitive span suggests limiting computer menu choices to about seven (Miller, 1956). Multi-media computing, which engages our many senses, is another example of a human requirement defining computing.

24.1.7 From HCI to STS

Social structures, roles and rights add a fourth level to computing. Socio-technical design uses the social sciences in computing design as HCI uses psychology. STS is not part of HCI, nor is sociology part of psychology, because a society is more than the people in it, e.g. East and West Germany, with similar people, performed differently as communities, as is true for North and South Korea today. To say "the Jews" survived but "the Romans" didn't is to say that the society didn't continue, not its people, as no Roman era people are alive today. A society is not just the people in it. People who gather to view a spectacle, or customers coming to shop for bargains, are not a community. A community is here an agreed form of social interaction that persists (Whitworth and de Moor, 2003).

Social interactions can have a physical or a technical base, e.g. a socio-physical system is people connecting by physical means. Face-to-face friendships cross seamlessly to Facebook because the social level persists across physical and electronic architecture bases. Whether electronically or physically mediated, a social system is always people interacting with people. Electronic communication may be “virtual”, but the people involved are real.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.6: The computing requirements hierarchy

A community works through people using technology as people work through software using hardware, so social requirements are now part of computing design (Sanders and McCormick, 1993). While sociology studies the social level alone, socio-technical design studies how personal and social requirements can be met by IT system design. Certainly this raises the cost of development, but then systems like social networks have far more performance potential.

24.1.8 The computing requirements hierarchy

The evolution of computing implies a requirements hierarchy (Figure 6). If the hardware works, software becomes the priority; if the software works, user needs arise; and when user needs are met, social requirements follow. As one level's issues are met those of the next appear, as climbing one hill reveals another. As hardware over-heating problems are solved, software data locking problems arise. As software response times improve, user response times become the issue. Companies like Google and eBay still seek customer satisfaction, but customers in crowds have social needs like fairness and synergy. As computing evolves, higher levels come to drive success. In general, the highest level of a system defines its success, e.g. social networks need a community to succeed. If no community forms, it doesn't matter how easy to use, fast or reliable the software is. Lower levels are essential to avoid failure, but higher levels are essential to success.

| Level | Requirements | Errors |
| --- | --- | --- |
| Community | Reduce community overload, clashes, increase productivity, synergy, fairness, freedom, privacy, transparency. | Unfairness, slavery, selfishness, apathy, corruption, lack of privacy. |
| Personal | Reduce cognitive overload, clashes, increase meaning transfer efficiency. | User misunderstands, gives up, is distracted, or enters wrong data. |
| Informational | Reduce information overload, clashes, increase data processing, storage, or transfer efficiency. | Processing hangs, data storage full, network overload, data conflicts. |
| Mechanical | Reduce physical heat or force overload. Increase heat or force efficiency. | Overheating, mechanical fractures or breaks, heat leakage, jams. |

Table 24.2: Computing errors by system level

Conversely, any level can cause failure, e.g. it doesn’t matter how high community morale is if the hardware fails, the software crashes or the interface is unusable. An STS fails if its hardware fails, if its program crashes or if users can’t figure it out. Hardware, software, personal and community failures are all computing errors (Table 2). The one thing they have in common is that the system fails to perform, and in evolution, what doesn't perform doesn't survive.
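The hierarchy and its failure rule can be sketched as a simple check. This is one illustrative reading, not a model given in the chapter: the level names follow Table 2, while the function names and the boolean "requirements met" flags are assumptions made for the example.

```python
from typing import Optional

# Levels ordered from lowest to highest, following the chapter's tables.
LEVELS = ["mechanical", "informational", "personal", "community"]


def next_priority(met: dict[str, bool]) -> Optional[str]:
    """Return the lowest level whose requirements are unmet, or None if all are met."""
    for level in LEVELS:
        if not met.get(level, False):
            return level
    return None


def system_fails(met: dict[str, bool]) -> bool:
    """A failure at any single level is enough for the whole system to fail."""
    return any(not met.get(level, False) for level in LEVELS)


# A hypothetical social network: reliable, fast and usable, but no community forms.
network = {"mechanical": True, "informational": True,
           "personal": True, "community": False}

priority = next_priority(network)
failed = system_fails(network)
```

Here the lower levels all work, yet the system as a whole still fails, because the highest level is the one that remains unmet.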

When computing was just technology, it only failed for technical reasons, but now that it is socio-technology, it can also fail for social reasons. Technology is hard, but society is soft. That the soft should direct the hard seems counter-intuitive, but trees grow at their soft tips not their hard base. As a tree trunk doesn't direct its expanding canopy, so today's social computing was undreamt of by its technical base.

24.1.9 Design combinations

Author/Copyright holder: Courtesy of Ocrho. Copyright terms and licence: pd (Public Domain (information that is common property and contains no original authorship)).

Figure 24.7.A: Remote controls for Apple products are good examples of HCI Design

Author/Copyright holder: Unknown (pending investigation). Copyright terms and licence: Unknown (pending investigation). See section "Exceptions" in the copyright terms below.

Figure 24.7.B: Remote controls for televisions are not.

Design fields combine different requirements and design levels, as in Table 3:

Ergonomics is the design of safe and comfortable machines for people. To design technology to human body needs like posture and eye-strain merges biology and engineering.

Object design, as defined by Norman, applies psychological needs to mechanical design (Norman, 1990), e.g. a door's design affects whether it is pushed or pulled. An affordance is a physical object design that cues its use, as a button cues pressing. Physical systems designed to human requirements work better. In World War II, planes crashed until engineers designed cockpit controls to the cognitive needs of pilots as follows (with computing examples):

Put the control by the thing controlled, e.g. a handle on a door (context menus).

Let the control “cue” the required action, e.g. a joystick (a 3D screen button).

Make the action/result link intuitive, e.g. press a joystick forward to go down (press a button down to turn on).

Provide continuous feedback, e.g. an altimeter (a web site breadcrumbs line).

Reduce mode channels, e.g. altimeter readings (avoid edit and zoom mode confusions).

Use alternate sensory channels, e.g. warning sounds (error beeps).

Let pilots "play", e.g. flight simulators (a system sandbox).

Human computer interaction applies psychological requirements to software design. Usable interfaces respect cognitive principles, e.g. by the nature of human attention, users don’t usually read the entire screen. HCI turns psychological needs into IT designs as architecture turns buyer needs into house designs. Compare Steve Jobs' iPod to a television remote (Figure 7). Both do the same job (footnote 20) but one is a cool tool and the other a mass of buttons. One was designed to engineering requirements and the other to human needs. Which then performs better?

Fashion is the social need to look good applied to object design. In computing, a mobile phone can be a fashion accessory, just like a hat or handbag. Its role is to impress, not just to function. Aesthetic criteria apply when people buy mobile phones to be trendy or fashionable, so color is as important as battery life in mobile phone selection.

Socio-technology, the social design of information technology, applies social requirements to software design. Anyone online can see the power of socio-technology but most see it as an aspect of their specialty. Sociologists study society as if it were apart from physicality, which it is not. Technologists study technology as if it were apart from community, which it is not. Only socio-technology studies how the social links to the technical, as a new discipline.

| Field | Target | Requirements | Example |
| --- | --- | --- | --- |
| STS | IT | Community ... | Wikipedia, YouTube, eBay |
| Fashion | Accessory | Community ... | Mobile phone as an accessory |
| HCI | IT | Personal ... | Framing, border contrast, richness |
| Design | Technology | Personal ... | Keyboard, mouse |
| Ergonomics | Technology | Biological ... | Adjustable height screen |

Table 24.3: Design fields by target and requirement levels

In Figure 8, higher level requirements filter down to affect lower level operation and design. This "higher affects lower" principle is that higher levels directing lower ones improves system performance. Any level requirement can translate down, e.g. communities require agreement to act, which at the citizen level gives norms, at the informational level laws and at the physical level cultural events. The same applies online, e.g. online communities make demands of netizens (footnote 21) as well as hardware. STS design then is about having it all: reliable devices, efficient code, intuitive interfaces and sustainable communities.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.8: Computing requirements cumulate

In physical society, over thousands of years, families formed tribes, tribes formed city-states, city-states formed nation-states, and nations formed nations of nations, each with more complex social structures (Diamond, 1998). The social level in Figure 8 isn't just one step, as social units can form bigger social units (footnote 22) to get new requirements (Whitworth and Whitworth, 2010).

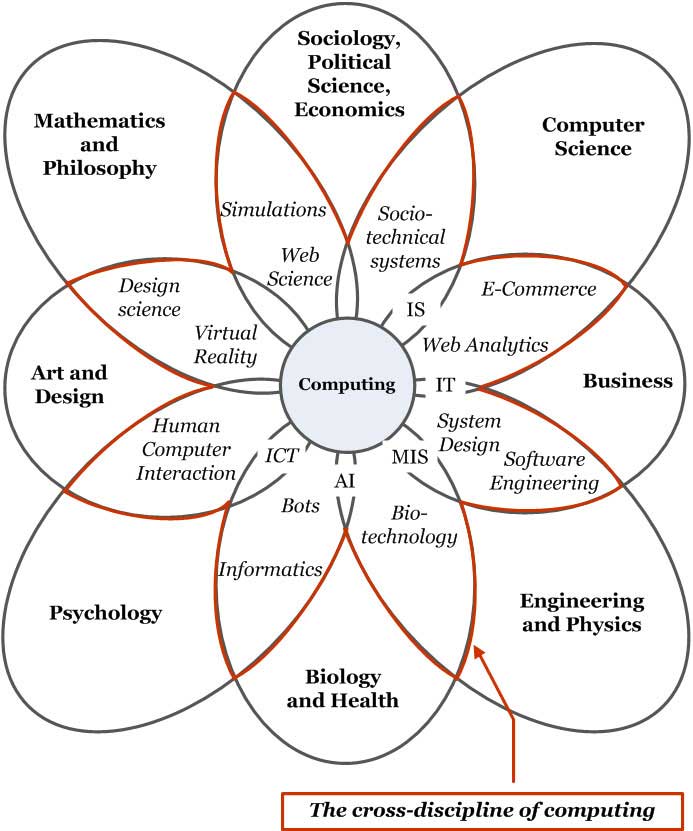

24.1.10 The flower of computing

The evolution of computing involves four main specialties (Figure 9), but pure engineers see only mechanics, pure computer scientists only information, pure psychologists only cognitions and pure sociologists only social structures. So computing as a whole isn't pure, yet this hybrid is the future because performance isn't about purity, as practitioners understand (Raymond, 1999).

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.9: The four stages of computing

The kingdom of computing is a realm divided, as academics specialize to get publications, grants and promotions (Whitworth and Friedman, 2009). Specialties guard their knowledge in journal castles with jargon walls, like medieval fiefdoms, but in doing so hold hostage knowledge that by its nature should be free. This division also disguises and limits the growth of computing. Every day more people use more computers to do more things in more ways, but computing staff rarely reach critical mass, because engineering, computer science, health (footnote 23), business, psychology, mathematics and education all compete for the computing crown (footnote 24). A realm divided is weak, and will get weaker if music, art, journalism, architecture etc. also set up outposts. Computing faculty scatter over the academic landscape like the tribes of Israel, some in engineering, some in computer science, some in health, etc. Yet we are one. Mathematics split up like this would be equally dilute.

The flower of computing is born of many disciplines but belongs to none. It is a new discipline in itself (Figure 10). For it to bear research fruit, its academic parents must set it free. Let us trade knowledge not dominate it. Using different terms, models and theories for the same subject invites confusion. Universities that split computing research into small groups, isolated by discipline boundaries, distance themselves from its multi-disciplinary future. Until computing research becomes one, computing theory will remain as it is now - decades behind computing practice.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.10: The flower of computing

24.1.11 Discussion questions

Research selected questions from the list below. If you are reading this chapter as part of a class - either at university or a commercial course - you can research these questions in pairs and report back to the class, with reasons and examples.

How has computing evolved since it began? Is it just faster machines and better software? What is the role of hardware companies like IBM and Intel in modern computing?

How has the computing business model changed as it evolved? Why does selling software make more money than selling hardware? Can selling knowledge make even more money? What about selling friendships? Can one sell communities?

Is a kitchen table a technology? Is a law a technology? Is an equation a technology? Is a computer program a technology? Is an information technology (IT) system a technology? Is a person an information technology? Is an HCI system (person plus computer) an information technology? What, exactly, isn't a technology?

Is any set of people a community? How do people form a community? Is a socio-technical system (an online community) any set of HCI systems? How do HCI systems form an online community?

Is computer science part of engineering or of mathematics? Is human computer interaction (HCI) part of engineering, computer science or psychology? Is socio-technology part of engineering, computer science, psychology or one of the social sciences? (footnote 25)

In an aircraft, is the pilot a person, a processor, or a physical object? Can one consistently divide the aircraft into human, computer and mechanical parts? How can one see it?

What is the reductionist dream? How did it work out in physics? Does it recognize computer science? How did it challenge psychology? Has it worked out in any discipline?

How much information does a physical book, that is fixed in one way, by definition, have? If we say a book "contains" information, what is assumed? How is a book's information generated? Can the same physical book "contain" different information for different people? Give an example.

If information is physical, how can data compression put the same information in a physically smaller signal? If information is not physical, how does data compression work? Can one encode more than one semantic stream into one physical message? Give an example.

Is a bit a physical "thing"? Can you see or touch a bit? If a signal wire sends a physical "on" value, is that always a bit? If a bit isn't physical, can it exist without physicality? How can a bit require physicality but not itself be physical? What creates information, if it is not the mechanical signal?

Is information concrete? If we can't see information physically, is the study of information a science? Explain. Are cognitions concrete? If we can't see cognitions physically, is the study of cognitions (psychology) a science? Explain. What separates science from imagination if it isn't physicality?

Give three examples of other animal species who sense the world differently from us. If we saw the world as they do, would it change what we do? Explain how seeing a system differently can change how it is designed. Give examples from computing.

If a $1 CD with a $1,000 software application on it is insured, what do you get if it is destroyed? Can you insure something that is not physical? Give current examples.

Is a "mouse error" a hardware, software or HCI problem? Can a mouse's hardware affect its software performance? Can it affect its HCI performance? Can mouse software affect HCI performance? Give examples in each case. If a wireless mouse costs more and is less reliable, how is it better?

Give three examples of a human requirement giving an IT design heuristic. This is HCI. Give three examples of a community requirement giving an IT design heuristic. This is STS.

Explain the difference between a hardware error, a software error, a user error and a community error, with examples. What is the common factor here?

What is an application sandbox? What human requirement does it satisfy? Show an online example.

Distinguish between a personal requirement and community requirement in computing. Relate to how STS and HCI differ and how socio-technology and sociology differ. Why can't sociologists or HCI experts design socio-technical systems?

What in general do people do if their needs aren't met by a physical situation? What do users do if their needs aren't met online? What is the difference? What do citizens of a physical community do if it doesn’t meet their needs? What about an online community? Again, what is the difference? Give specific examples to illustrate.

According to Norman, what is ergonomics? What is the difference between ergonomics and HCI? What is the difference between HCI and STS?

Give examples of: Hardware meeting engineering requirements. Hardware meeting Computer Science requirements. Software meeting CS requirements. Hardware meeting psychology requirements. Software meeting psychology requirements. People meeting psychology requirements. Hardware meeting community requirements. Software meeting community requirements. People meeting community requirements. Communities meeting their requirements. Which are computing design

Why is an iPod so different from TV or video controls? Which is better and why? Why has TV remote design changed so little in decades? If TV and the Internet compete for the hearts and minds of viewers, who will win?

How does an online friend differ from a physical friend? Can friendships transcend physical and electronic interaction architectures? Give examples. How is this possible?

Why do universities spread computing researchers across many disciplines? What is a cross-discipline? What past cross-disciplines became disciplines? Why is computing a cross-discipline?

24.2 Part 2: Design spaces

All my cuts are the best (said by a butcher to a housewife who asked him for the best cuts).

The previous section reviewed computing system levels; this one reviews constituent parts.

24.2.1 The elephant in the room

The beast of computing has regularly defied pundit predictions. Key advances like the cell-phone (Smith et al, 2002) and open-source development (Campbell-Kelly, 2008) weren't predicted by the experts of the day, though the signs were there for all to see. As experts pushed media-rich systems, lean text chat, blogs, texting and wikis took off. Even today, people with rich video-phones still text. Google's simple white screen scooped the search engine field, not Yahoo's multi-media graphics. In gaming, the innovation was social gaming, not virtual reality helmets. Investors in Internet bandwidth lost money when the future wasn't all video.

In computing, practice leading while theory bleeds has a long history. Over thirty years ago, the electronic paperless office was said to make paper "dead" (Toffler, 1980). Yet today, paper is used more than ever before. James Martin saw program generators replacing programmers, but today, we still have a programmer shortage. A “leisure society” was supposed to arise as machines took over our work, but today, we are less leisured than we ever were (Golden and Figart, 2000). The list goes on: email was supposed to be for routine tasks, the Internet was supposed to collapse without central control, video was supposed to replace text, teleconferencing was supposed to replace air travel, AI smart-help was supposed to replace help-desks, and so on.

We get it wrong time and again, because computing is the elephant in our living room. We can't see it because it is too big. In the story of the blind men and the elephant, one grabbed its tail and found it like a rope and bendy, another took a leg and declared it fixed like a pillar, a third felt an ear and thought it like a rug and floppy, while the last seized the trunk, and found it like a pipe but very strong (Sanai, 1968). Each saw a part but none saw the whole. How can one see an elephant by analyzing its toenails? (footnote 26)

24.2.2 Design requirements

To design a system is to find problems early, e.g. a misplaced wall on an architect's plan can be moved by the stroke of a pen, but design needs performance requirements, like efficiency. Requirements engineering analyzes stakeholder needs, to specify what a system must do for them to sign off on the end product. It is basic to system design:

The primary measure of success of a software system is the degree to which it meets the purpose for which it was intended. Broadly speaking, software systems requirements engineering (RE) is the process of discovering that purpose...

-- Nuseibeh and Easterbrook, 2000: p. 1

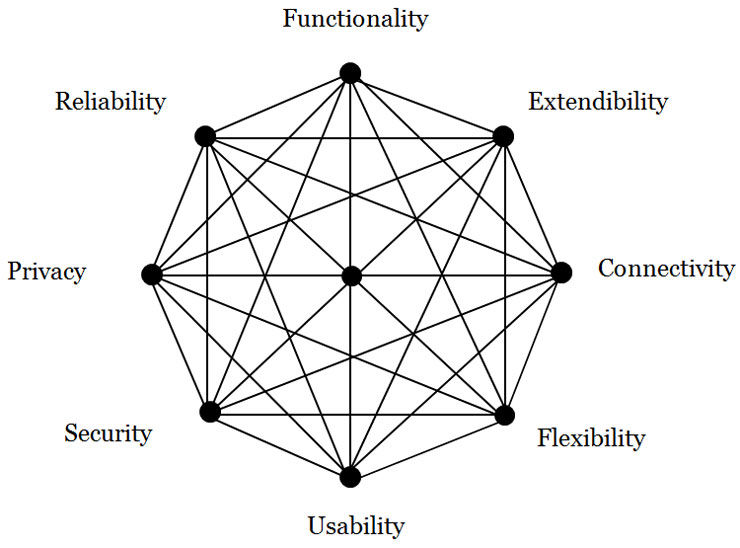

A requirement can be a particular value (e.g. uses SSL), a range of values (e.g. less than $100), or a criterion scale (e.g. is secure). Given a system's requirements, designers can build it, but for computing, the literature can't agree on what they are. One text has usability, repairability, security and reliability (Sommerville, 2004, p. 24) but the ISO 9126-1 quality model has functionality, usability, reliability, efficiency, maintainability and portability (Losavio et al, 2004). Berners-Lee made scalability a World Wide Web criterion (Berners-Lee, 2000) while others stress open standards between systems (Gargaro et al, 1993). Business criteria are cost, quality, reliability, responsiveness and conformance to standards (Alter, 1999), but software architects prefer portability, modifiability and extendibility (de Simone and Kazman, 1995). Others espouse flexibility (Knoll and Jarvenpaa, 1994) and privacy (Regan, 1995). On the issue of what computer systems need to succeed, the literature is at best confused. This gives what developers call the requirements mess (Lindquist, 2005), which has ruined many a software project. It is the problem that agile methods address.
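The three requirement forms named above (a particular value, a range of values, a criterion scale) can be sketched as simple checks. This is a minimal illustration only: the class names, the `candidate` dictionary and the 0-to-1 scoring of the criterion scale are assumptions made for the example, not part of any requirements standard.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass
class ValueRequirement:
    """A particular value, e.g. 'uses SSL'."""
    attribute: str
    required: Any

    def satisfied(self, system: Dict[str, Any]) -> bool:
        return system.get(self.attribute) == self.required


@dataclass
class RangeRequirement:
    """A range of values, e.g. 'costs less than $100'."""
    attribute: str
    upper_bound: float

    def satisfied(self, system: Dict[str, Any]) -> bool:
        return system[self.attribute] < self.upper_bound


@dataclass
class ScaleRequirement:
    """A criterion scale, e.g. 'is secure', scored here from 0 to 1 (assumed)."""
    attribute: str
    minimum_score: float

    def satisfied(self, system: Dict[str, Any]) -> bool:
        return system[self.attribute] >= self.minimum_score


# Hypothetical candidate system and requirement list.
candidate = {"transport": "SSL", "price": 80.0, "security_score": 0.7}
requirements = [
    ValueRequirement("transport", "SSL"),
    RangeRequirement("price", 100.0),
    ScaleRequirement("security_score", 0.9),
]

# Names of the attributes whose requirement the candidate fails.
unmet = [r.attribute for r in requirements if not r.satisfied(candidate)]
print(unmet)
```

A value or range requirement is either met or not, while a scale requirement depends on where the stakeholders set the threshold, which is one reason criteria like "is secure" are harder to agree on.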

In current theories, each specialty sees only itself. Security specialists see security as availability, confidentiality and integrity (OECD, 1996), so to them, reliability is part of security. Reliability specialists see dependability as reliability, safety, security and availability (Laprie and Costes, 1982), so to them security is part of a general reliability concept. Yet both can't be generally true. Similarly, a usability review finds functionality and error tolerance part of usability (Gediga et al, 1999) while a flexibility review finds scalability, robustness and connectivity aspects of flexibility (Knoll and Jarvenpaa, 1994). In academia, each specialty expands to fill the theory space around it.

Yet there is recognition that no specialty is the be all or end all:

The face of security is changing. In the past, systems were often grouped into two broad categories: those that placed security above all other requirements, and those for which security was not a significant concern. But ... pressures ... have forced even the builders of the most security-critical systems to consider security as only one of the many goals that they must achieve.

-- Kienzle and Wulf, 1998: p5

Analyzing performance goals in isolation is giving diminishing returns.

24.2.3 Design spaces

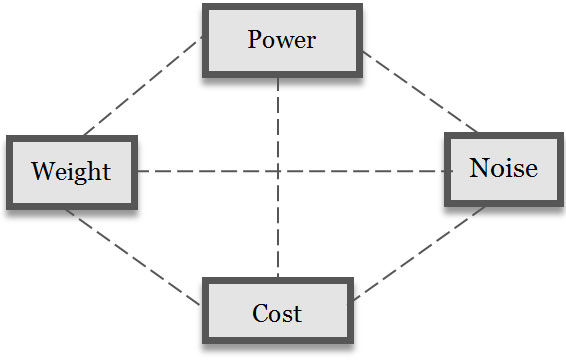

Architect Christopher Alexander observed that vacuum cleaners with powerful engines and more suction were also heavier, noisier and cost more (Alexander, 1964). One performance criterion has a best point, but two criteria, like power and cost, give a best line. The efficient frontier of two performance criteria is the maximum of one for each value of the other (Keeney and Raiffa, 1976). To design a system is to choose a many-valued point in a multi-dimensional design space of many combinations. So there are many "best" points, e.g. a cheap, heavy but powerful vacuum cleaner, or a light, expensive and powerful one (Figure 11). The efficient frontier of a design space is a surface of "best" combinations (footnote 27). Advanced system performance is not a one dimensional ladder to excellence, but a station with many trains to many destinations.

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.11: A vacuum cleaner design space
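The efficient frontier described above can be sketched as a filter that keeps every design not beaten on all criteria by some other design. The vacuum cleaner figures below are invented for illustration, and the three criteria (power, cost, weight) are an assumed simplification of the design space.

```python
from typing import List, NamedTuple


class Design(NamedTuple):
    name: str
    power: float   # higher is better
    cost: float    # lower is better
    weight: float  # lower is better


def dominates(a: Design, b: Design) -> bool:
    """True if a is at least as good as b on every criterion and better on one."""
    at_least_as_good = (
        a.power >= b.power and a.cost <= b.cost and a.weight <= b.weight
    )
    strictly_better = (
        a.power > b.power or a.cost < b.cost or a.weight < b.weight
    )
    return at_least_as_good and strictly_better


def efficient_frontier(designs: List[Design]) -> List[Design]:
    """Keep the designs that no other design dominates."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Hypothetical vacuum cleaner designs.
designs = [
    Design("cheap, heavy, powerful", power=9, cost=100, weight=8),
    Design("light, expensive, powerful", power=9, cost=300, weight=3),
    Design("cheap, light, weak", power=4, cost=90, weight=3),
    Design("expensive, heavy, weak", power=4, cost=300, weight=8),
]

frontier = [d.name for d in efficient_frontier(designs)]
print(frontier)
```

Three of the four designs survive, each "best" for a different buyer; only the expensive, heavy and weak design is excluded, because another design matches or beats it on every criterion.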

Designing in a multi-dimensional space gives many "best" points, so nature has no best animal. Successful life includes flexible viruses, reliable plants, social insects and powerful tigers, with the latter endangered. In evolution, not just the strong are fit, and over-specialization can even lead to extinction. Likewise, computing has no "best". If computer performance were just about processing we would all want supercomputers, but laptops with less power perform better for some (David et al, 2003). Blindly adding software functions gives bloatware (footnote 28), applications full of features that no-one uses.

Design is then the art of reconciling many requirements in a system form, e.g. a quiet, reliable, cheap and powerful vacuum cleaner. It is the innovative synthesis of a performance form in a requirements space (Alexander, 1964). It isn't one dimensional, e.g. Berners-Lee chose HTML for the World Wide Web for its flexibility (across platforms), reliability and usability (easy to learn). An academic conference rejected his WWW proposal because HTML was inferior to SGML (Standard Generalized Markup Language). Specialists saw their specialty, not system performance. Even after the World Wide Web's phenomenal success, their blindness remained:

Despite the Web’s rise, the SGML community was still criticising HTML as an inferior subset ... of SGML

-- Berners-Lee, 2000: p96

What has changed since academia found the World Wide Web "inferior"? Not a lot. If it is any consolation, an equally myopic Microsoft also found it "unprofitable". In system design, a focus on any one criterion gives diminishing returns, whether it is functionality, security (OECD, 1996), extendibility (de Simone and Kazman, 1995), privacy (Regan, 1995), usability (Gediga et al, 1999) or flexibility (Knoll and Jarvenpaa, 1994). Improving one aspect alone can even reduce performance, i.e. "bite back" (Tenner, 1997), e.g. a network so secure that no-one uses it. Advanced system performance does not result from one dimensional design.

24.2.4 Non-functional requirements

In traditional requirements engineering, criteria like usability are quality requirements that affect functional goals but can't stand alone (Chung et al, 1999). For decades, these non-functional requirements (NFRs), or “-ilities”, were considered second class requirements. They defied categorization, except to be non-functional. How exactly they differed from functional goals was never made clear (Rosa et al, 2001), yet most modern systems have more lines of interface, error and network code than functional code, and increasingly fail for "unexpected" non-functional reasons (footnote 29) (Cysneiros and Leite, 2002, p. 699).

The logic is that NFRs like reliability can't exist without functionality, so are subordinate to it. Yet by the same logic, functionality can't exist without reliability, e.g. a car that won't start has no speed function, nor does a car that is stolen or can't be driven. NFRs don't just modify performance, they define it. In nature, functionality isn't the only key to success, e.g. viruses hijack the functionality of other systems. Functionality differs from other system requirements only in being more obvious to us. It is really just one of many requirements. The distinction between functional and non-functional requirements is our bias, like seeing the sun going round the earth because we are on the earth.

24.2.5 Constituent parts

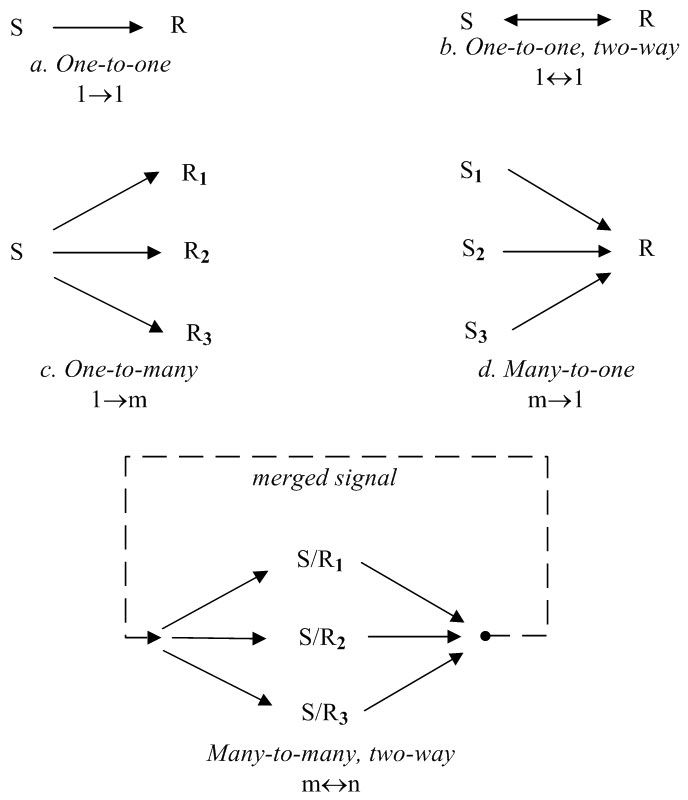

In general systems theory, any system consists of:

Parts, and

Interactions.

But are software parts lines of code, variables or sub-programs? Let a system's elemental parts be those not formed of other parts. A mechanic stripping a car stops at the bolt element, as to decompose it further gives atoms, which are no longer mechanical. Each level has a different elemental part: physics has quantum strings, information has bits, psychology has qualia, and society has citizens (Table 4). Elemental parts then form complex parts as bits form bytes.

Level | Elemental part | Other parts |

Community | Citizen | Friendships, groups, organizations, societies. |

Personal | Qualia | Cognitions, attitudes, beliefs, feelings, theories. |

Informational | Bit | Bytes, records, files, commands, databases. |

Physical | Quantum strings? | Quarks, electrons, nucleons, atoms, molecules. |

Table 24.4: System parts by level

Let a system's constituent parts be those that interact to form the system but are not part of other parts (Esfeld, 1998). So, disconnecting a car entirely gives elemental parts not constituent parts, e.g. a bolt on a wheel isn't a constituent because it is part of the wheel.

To say a body is composed of cells ignores its structure: how elemental parts form constituent parts. Only in system heaps, like a pile of sand, are elemental parts also constituent parts. The body's constituent parts are the digestive system, the respiratory system, etc, not its cells. Just sticking together arbitrary physical parts, like head, arms, and legs, gives the Frankenstein effect (footnote 30) (Tenner, 1997).
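The distinction between elemental and constituent parts can be sketched with a small part tree. In this illustration, elemental parts are taken to be the leaves of the tree and constituent parts the system's direct children; the `Part` class and the specific car components other than the wheel and bolt are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Part:
    name: str
    parts: List["Part"] = field(default_factory=list)


def elemental_parts(system: Part) -> List[str]:
    """Parts not formed of other parts, i.e. the leaves of the part tree."""
    if not system.parts:
        return [system.name]
    found: List[str] = []
    for part in system.parts:
        found.extend(elemental_parts(part))
    return found


def constituent_parts(system: Part) -> List[str]:
    """Parts that form the system directly and are not part of other parts."""
    return [part.name for part in system.parts]


# Hypothetical decomposition of a car.
car = Part("car", [
    Part("wheel", [Part("bolt"), Part("rim"), Part("tyre")]),
    Part("engine", [Part("piston"), Part("spark plug")]),
])

# A heap has no intermediate structure.
heap = Part("sand pile", [Part("grain 1"), Part("grain 2")])

print(constituent_parts(car))   # the structure a designer works with
print(elemental_parts(car))     # what is left after stripping the car down
print(constituent_parts(heap) == elemental_parts(heap))
```

The bolt appears among the car's elemental parts but not its constituent parts, because it belongs to the wheel. Only for the heap do the two lists coincide.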

24.2.6 Holism and specialization

The performance of a system of parts that interact isn't defined by decomposition alone. Even simple parts, like air molecules, can interact strongly to form a chaotic system like the weather (Lorenz, 1963). Gestalt psychologists called the whole being more than its parts holism, as a curve is just a curve but in a face becomes a "smile". Holism is how system parts change by interacting with others. Holistic systems are individualistic, because changing one part, by its interactions, can cascade to change the whole system drastically. People rarely look the same because one gene change can change everything. The brain is also holistic - one thought can change everything you know.

Yet a system's parts needn't be simple. The body began as one cell, a zygote, that divided into all the cells of the body, including liver, skin, bone and brain cells (footnote 31). Likewise in early societies most people did most things, but today we have millions of specialist jobs. A system's specialization (footnote 32) is the degree to which its parts differ in form and action, especially constituent parts.

Holism (complex interactions) and specialization (complex parts) are hallmarks of evolved systems, giving both levels and constituent specializations.

24.2.7 General performance requirements

Requirements engineering aims to define a system’s purposes. If levels and constituent specializations change those purposes, how can requirements engineering succeed? The answer proposed here is to take the view of the system itself, specifying requirements for different levels and constituent specializations. How these are reconciled is then the art of system design.

A system interacts with its environment to perform, i.e. to gain value and avoid loss in order to survive. In Darwinian terms, what doesn't survive fails and what does succeeds. So a system needs a boundary to exist apart from the world and an internal structure to support and manage that existence. It needs effectors to act upon the environment around it and receptors to monitor the world for risks and opportunities.

Constituent | Requirement | Definition |

Boundary | Security | To deny unauthorized entry, misuse or takeover by other entities. |

Boundary | Extendibility | To attach to or use outside elements as system extensions. |

Structure | Flexibility | To adapt system operation to new environments. |

Structure | Reliability | To continue operating despite system part failure. |

Effector | Functionality | To produce a desired change on the environment. |

Effector | Usability | To minimize the resource costs of action. |

Receptor | Connectivity | To open and use communication channels. |

Receptor | Privacy | To limit the release of self information by any channel. |

Table 24.5: System performance requirements by constituent specialty

So as cells evolved they first got a boundary membrane, then organelle and nuclear structures for support and control, then eukaryotic cells evolved flagella to move and protozoa got photo-receptors (Alberts et al, 1994). We also have a skin boundary, metabolic and brain structures, muscle effectors and sense receptors, like the eye. Computers also have a case boundary, a motherboard internal structure, printer or screen effectors and keyboard or mouse receptors. Crossing the four constituent specializations with the two goal options, risk and opportunity, gives eight performance requirements (Table 5). The details are as follows:

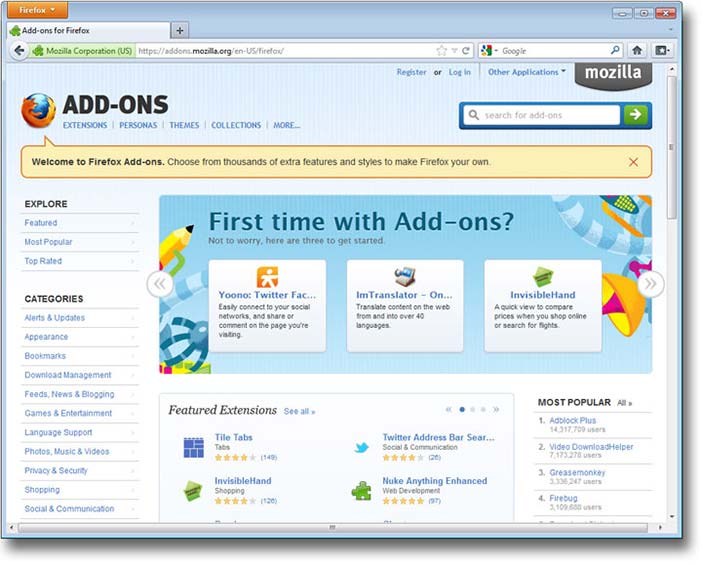

Boundary constituents manage the system boundary. They can be designed to deny outside things entry (security) or to use them (extendibility). In computing, virus protection is security and system add-ons are extendibility (Figure 24.12). In people, the immune system gives biological security and tool-use illustrates extendibility.

Structure constituents manage internal operations. They can be designed to limit internal change to reduce faults (reliability), or to allow internal change to adapt to outside changes (flexibility). In computing, reliability reduces and recovers from error and flexibility is the system preferences that allow customization. In people, reliability is the body fixing a cell "error" that might cause cancer, while the brain learning illustrates flexibility.

Effector constituents manage environment actions, so can be designed to maximize effects (functionality) or minimize resource use (usability). In computing, functionality is the menu functions, while usability is how easy they are to use. In people, functionality gives muscle effectiveness and usability is metabolic efficiency.

Receptor constituents manage signals to and from the environment, so can be designed to open communication channels (connectivity) or close them (privacy). Connected computing can download updates or chat online, while privacy is the power to disconnect or log off. In people, connectivity is conversing and privacy is the legal right to be left alone. In nature, privacy is camouflage, and the military calls it stealth.
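The four constituents and two goal options described above can be laid out as a small lookup table. This is a sketch only: the opportunity and risk labels are read from the paragraph definitions (e.g. "use" versus "deny", "open" versus "close") and are an assumption of this example, as are the identifier names.

```python
from itertools import product
from typing import List

CONSTITUENTS = ["boundary", "structure", "effector", "receptor"]
GOALS = ["opportunity", "risk"]

# Assumed reading: the opportunity requirement gains value from the
# environment, the risk requirement avoids loss.
REQUIREMENT = {
    ("boundary", "opportunity"): "extendibility",
    ("boundary", "risk"): "security",
    ("structure", "opportunity"): "flexibility",
    ("structure", "risk"): "reliability",
    ("effector", "opportunity"): "functionality",
    ("effector", "risk"): "usability",
    ("receptor", "opportunity"): "connectivity",
    ("receptor", "risk"): "privacy",
}

# Every constituent and goal combination is named exactly once.
assert set(REQUIREMENT) == set(product(CONSTITUENTS, GOALS))


def requirements_for(constituent: str) -> List[str]:
    """The pair of requirements that apply to one constituent."""
    return [REQUIREMENT[(constituent, goal)] for goal in GOALS]


for constituent in CONSTITUENTS:
    print(constituent, requirements_for(constituent))
```

Each constituent carries one requirement of each kind, which is where the design tensions between paired requirements such as security and extendibility come from.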

Every system is somehow created, which takes effort, whether for applications that are built or organisms that are born. A system's ability to reproduce is important but outside the current scope, as apart from virus programs, few computer systems do this.

These general system criteria map well to current terms (Table 6). They apply at any level, but as what is exchanged changes, so do the names used:

Hardware systems exchange energy. So “functionality” is power, i.e. hardware with high CPU cycle or disk read-write rates. “Usable” hardware uses less power for the same result, e.g. mobile phones that last longer. Reliable hardware is rugged enough to work if you drop it, and flexible hardware is mobile to still work if you move around, i.e. change environments. Secure hardware blocks physical theft, e.g. by laptop cable locks, and extendible hardware has ports for peripherals to be attached. Connected hardware has wired or wireless links and private hardware is tempest proof i.e. it doesn’t physically leak energy.

Software systems exchange information. Functional software has many ways to process information, while “usable” software uses less CPU processing (“lite” apps). Reliable software avoids errors or recovers from them quickly. Flexible software is operating system platform independent. Secure software can't be corrupted or overwritten. Extendible software can access OS program library calls. Connected software has protocol "handshakes" to open read/write channels. Private software can encrypt information so others can't see it.

HCI systems exchange meaning, including ideas, feelings and intents. In functional HCI the human computer pair is effectual, i.e. meets the task goal. Usable HCI requires less intellectual, affective or conative (footnote 33) effort, i.e. is intuitive. Reliable HCI avoids or recovers from unintended user errors by checks or undo choices — the web Back button is an HCI invention. Flexible HCI lets users change language, font size or privacy preferences, as each person is a new environment to the software. Secure HCI avoids identity theft by user password. Extendible HCI lets users use what others create, e.g. mash-ups and third party add-ons. Connected HCI communicates with others, while privacy includes not getting spammed or being located on a mobile device.

Each level applies the same ideas to a different system view. The community level is covered later.

GSR Criterion | Related Criteria |

Functionality | Effectualness, capability, usefulness, effectiveness, power, utility. |

Usability | Ease of use, simplicity, user friendliness, efficiency, accessibility. |

Extendibility | Openness, interoperability, permeability, compatibility, standards. |

Security | Defense, protection, safety, threat resistance, integrity, inviolable. |

Flexibility | Adaptability, portability, customizability, plasticity, agility, modifiability. |

Reliability | Stability, dependability, robustness, ruggedness, durability, availability. |

Connectivity | Networkability, communicability, interactivity, sociability. |

Privacy | Tempest proof, confidentiality, secrecy, camouflage, stealth, encryption. |

Table 24.6: Related performance criteria

Copyright © Mozilla. All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See section "Exceptions" in the copyright terms below.

Figure 24.12: Mozilla/Firefox add-ons

24.2.8 A general system design space

The above gives the general system design space of Figure 13, where for a particular system:

The area is the overall performance requirements met, i.e. performance in general.

The shape is the requirement weights, defined by the environment.

The lines are design requirement "tensions" (see below).

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.13: A general system performance design space
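If the design space is read as a web (radar) chart with eight equally spaced axes, the "area" of a design can be computed directly. The sketch below assumes that reading; the 0-to-1 scores and the two example designs are invented, and the function name `web_area` is hypothetical.

```python
import math
from typing import Sequence


def web_area(scores: Sequence[float]) -> float:
    """Area of the polygon traced by scores on equally spaced radial axes."""
    n = len(scores)
    wedge = math.sin(2 * math.pi / n)  # sine of the angle between adjacent axes
    # Sum the triangles formed by each pair of neighbouring axes.
    return 0.5 * wedge * sum(scores[i] * scores[(i + 1) % n] for i in range(n))


# Hypothetical scores (0 to 1) in a fixed axis order; both designs total 4.8.
balanced = [0.6] * 8
lopsided = [1.0, 0.2] * 4

print(round(web_area(balanced), 3))
print(round(web_area(lopsided), 3))
```

With the same total score, the balanced design covers more area than the lopsided one, which is one way to picture why pulling a single corner of the web does little for performance in general. The area depends on the order in which the axes are drawn, so it is a rough visual summary rather than a precise measure.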

This space has active requirements that enhance opportunities (footnote 34) and passive ones that reduce risks (footnote 35), where taking opportunities is as important in performance as reducing risk (Pinto, 2002). Criteria weights vary by environment, so security is more important in threat environments and extendibility is better in opportunity environments (Whitworth et al, 2008). These performance criteria are general because they have no inherent contradictions, e.g. a bullet-proof plexi-glass room can be secure but not private, while encrypted files can be private but not secure. Reliability provides services but security denies them (Jonsson, 1998), so a system can be reliable but insecure, unreliable but secure, unreliable and insecure or reliable and secure. Functionality needn’t deny usability (Borenstein and Thyberg, 1991), nor connectivity deny privacy. Cross-cutting requirements (Moreira et al, 2002) can be reconciled by innovative design if they are logically modular, so one can get both.

24.2.9 Design tensions and innovations

A design tension is when satisfying one design requirement denies another. Applying different requirements to the same constituent gives a design tension, e.g. castle walls that protect against attacks but need a gate to receive supplies, or computers impenetrable to virus attacks that need plug-in software hooks. These contrasts are not anomalies, but built into the nature of systems.

Design tensions begin slack for new systems, but increase as performance improves. Eventually, like stretched rubber bands, the system becomes so "tight" that advancing one requirement can easily pull back another, or more than one. In the version 2 paradox, development effort spent improving a successful product can decrease its performance!

To expand a performance web, one can't just pull one corner, e.g. in 1992, Apple CEO Sculley introduced the hand held Newton, claiming that portable computing was the future. We now know he was right, yet in 1998 Apple dropped the line due to poor sales. The Newton’s small screen made data entry hard, i.e. the portability gain was nullified by a usability loss. Only when Palm’s Graffiti language improved handwriting recognition, did the personal digital assistant market revive. Sculley's innovation was only half the answer - the other half was resolving the usability problems created by increasing flexibility. Innovative design must meet specialist requirements and resolve design tensions.

24.2.10 Project development

Constituent | Code | Requirement | Analysis | Testing |

Actions | Application | Functionality | Task | Business |

Actions | Interface | Usability | Usability | User |

Interactions | Authorization | Security | Threat | Penetration |

Interactions | Plug-ins | Extendibility | Standards | Compatibility |

Changes | Error recovery | Reliability | Stress | Load |

Changes | Preferences | Flexibility | Contingency | Situation (Beta) |

Interchanges | Network | Connectivity | Channel | Communication |

Interchanges | Rights | Privacy | Legitimacy (footnote 36) | Community |

Table 24.7: Project development specializations by constituent

The days when programmers could list a system's functions then just code them are gone, if they ever existed. Today, design involves not only many specialties but also their interaction. A system development could involve up to eight specialists, with distinct requirements, analysis and testing (Table 7). Smaller systems might have four (actions, interactions, changes and interchanges), two (opportunities and risks) or just one (performance). Design tensions are reduced by agile methods, where specialists talk to each other and stakeholders, but system development also needs innovators, people to cut across specialist boundaries to resolve cross-cutting design tensions.

24.2.11 Discussion questions

Research selected questions from the list below. If you are reading this chapter as part of a class - either at university or a commercial course - you can research these questions in pairs and report back to the class, with reasons and examples.

What three widespread computing expectations didn't happen? Why not? What three unexpected computing outcomes did happen? Why?

What is a system requirement? How does it relate to system design? How do system requirements relate to performance? Or to system evaluation criteria? How can one specify or measure system performance if there are many factors?

What is the basic idea of general systems theory? Why is it useful? Can a cell, your body, and the earth all be considered systems? Describe Lovelock’s Gaia Hypothesis. How does it link to both General Systems Theory and the recent film Avatar? Is every system contained within another system (environment)?

Does nature have a best species? If nature has no better or worse, how can species evolve to be better? Or if it has a better and worse, why is current life so varied instead of just the “best”? (footnote 37) Does computing have a best system? If it has no better or worse, how can it evolve? If it has a better and worse, why is current computing so varied? Which animal actually is “the best”?

Why did the electronic office increase paper use? Give two good reasons to print an email in an organization. How often do you print an email? When will the use of paper stop increasing?

Why wasn't social gaming predicted? Why are MMORPG human opponents better than computer ones? What condition must an online game satisfy for a community to "mod" it (add scenarios)?

In what way is computing an "elephant"? Why can't it be put into an academic "pigeon hole"? (footnote 38) How can science handle cross-discipline topics?

What is the first step of system design? What are those who define what a system should do called? Why can't designers satisfy every need? Give examples from house design.

Is reliability an aspect of security or is security an aspect of reliability? Can both these things be true? What are reliability and security both aspects of? What decides which is more important?

What is a design space? What is the efficient frontier of a design space? What is a design innovation? Give examples (not a vacuum cleaner).

Why did the SGML academic community find Tim Berners-Lee's WWW proposal of low quality? Why didn't they see the performance potential? Why did Microsoft also find it “of no business value”? How did the WWW eventually become a success? Given that business and academia now use it extensively, why did they reject it initially? What have they learned from this lesson?

Are NFRs like security different from functional requirements? By what logic are they less important? By what logic are they equally critical to performance?

In general systems theory (GST), every system has what two aspects? Why doesn't decomposing a system into simple parts fully explain it? What is left out? Define holism. Why are highly holistic systems also individualistic? What is the Frankenstein effect? Show a "Frankenstein" web site. What is the opposite effect? Why can't “good” system components just be stuck together?

What are the elemental parts of a system? What are its constituent parts? Can elemental parts be constituent parts? What connects elemental and constituent parts? Give examples.

Why are constituent part specializations important in advanced systems? Why do we specialize as left-handers or right-handers? What about the ambidextrous?

If a car is a system, what are its boundary, structure, effector and receptor constituents? Explain its general system requirements, with examples. When might a vehicle's "privacy" be a critical success factor? What about its connectivity?

Give the general system requirements for a browser application. How did its designers meet them? Give three examples of browser requirement tensions. How are they met?

How do mobile phones meet the general system requirements, first as hardware and then as software?

Give examples of usability requirements for hardware, software and HCI. Why does the requirement change by level? What is "usability" on a community level?

Are reliability and security really distinct? Can a system be reliable but insecure, unreliable but secure, unreliable and insecure, or reliable and secure? Give examples. Can a system be functional but not usable, not functional but usable, not functional or usable, or both functional and usable? Give examples.

Performance is taking opportunities and avoiding risks. Yet while mistakes and successes are evident, missed opportunities and mistakes avoided aren't. Explain how a business can fail by missing an opportunity, with WordPerfect vs Word as an example. Explain how a business can succeed by avoiding risks, with air travel as an example. What happens if you only maximize opportunity? What if you only reduce risks? Give examples. How does nature both take opportunities and avoid risks? How should designers manage this?

Describe the opportunity enhancing general system performance requirements, with an IT example of each. When would you give them priority? Describe the risk reducing performance requirements, with an IT example of each. When would you give them priority?

What is the Version 2 paradox? Give an example from your experience, of software that got worse on an update. You can use a game example. Why does this happen? How can designers avoid this?

Define extendibility for any system. Give examples for a desktop computer, a laptop computer and a mobile device. Give examples of software extendibility, for email, word processing and game applications. What is personal extendibility? Or community extendibility?

Why is innovation so hard for advanced systems? What stops a system being secure and open? Or powerful and usable? Or reliable and flexible? Or connected and private? How can such diverse requirements ever be reconciled?

Give two good reasons to have specialists in a large computer project team. What happens if they disagree? Why are cross-disciplinary integrators also needed?

24.3 Part 3: Socio-technical design

“Let the social define the technical”

Social ideas like freedom seem far removed from computer code but computing today is social. That technology designers aren't ready, have no precedent or don't recognize social needs is irrelevant. Like a baby being born, online society is pushing forward, ready or not. And like new parents, socio-technical designers are causing it, whether they want to or not. As the World Wide Web's creator observes:

... technologists cannot simply leave the social and ethical questions to other people, because the technology directly affects these matters

-- Berners-Lee, 2000: p124

The online reality is that how people interact in socio-technical systems depends entirely on the software.

24.3.1 Designing work management

The term socio-technical was first introduced by the Tavistock Institute (footnote 39) in the late 1950s to oppose Taylorism - reducing jobs to efficient elements on assembly lines in mills and factories. Community level needs gave work-place management ideas like (Porra and Hirschheim, 2007):

Congruence. A process must match its objective - democratic results need democratic means.

Minimize control. Give employees clear goals, but let them decide how to achieve them.

Local control. Let those experiencing a problem change the system, not absent managers.

Flexibility. Without "extra" skills to handle change, specialization will precede extinction.

Boundary innovation. Innovate at the boundaries, where work goes between groups.

Transparency. Give information first to those it affects, e.g. give work rates to workers.

Evolution. Work system development is an iterative process that never stops.

Lead by example. Chinese saying: "If the General takes an egg, his soldiers will loot a village." (footnote 40)

Support human needs. Work that lets people learn, choose, feel and belong gives loyal staff.

In computing it became a call for the ethical use of technology. Yet social needs apply to technology design as well as to work management. Technology that mediates social interactions must also satisfy social needs. In the industrial revolution, “dark satanic mills” enslaved people, so technology was the enemy. Yet people ran those factories. It was the rich oppressing the poor, as always, with machines just letting them do it better. Technology is an effect magnifier, i.e. it isn't in itself good or evil. The people of nineteenth century Britain rejected slavery (footnote 41) but embraced car and phone technologies. In today's information revolution we “love” technology. It is on the other side of the class war, as Twitter, Facebook and YouTube support the Arab Spring. Yet the core socio-technical principle is the same:

(footnote 42). Yet America and England somehow got democracy, and now it is unclear why our predecessors ever settled for less. Democracies out-produce autocracies as free people do more and online is no different (Beer and Burrows, 2007). Communities perform by improving social interactions, which happens when citizens do what they should - not what they can.

24.3.2 Social requirements

One can't design socio-technology in a social vacuum. Fortunately, while virtual society is new, people have been socializing for thousands of years. We know that fair communities prosper but corrupt ones don't (Eigen, 2003). Social inventions like laws, fairness, freedom, credit and contracts were bought with blood and tears (Mandelbaum, 2002), so why start anew online? Why reinvent the social wheel in cyber-space (Ridley, 2010)? Why re-learn electronically what we already know physically, if the social level in both cases is the same?

When nuclear technology magnified the physical power of war, humanity had a choice: to destroy itself physically by nuclear holocaust, or not. That we didn't destroy ourselves was a matter of choice, not of technology, which just upped the ante. As the new bottle of information technology fills with the old wine of society, the stakes are raised again. Today’s information revolution vastly increases the power to gather, store and distribute information, for good or ill (Johnson, 2001). We can be hunter-gatherers of the information age or an online civilization (Meyrowitz, 1985). Yet a stone-age society with space-age technology isn’t a good mix.

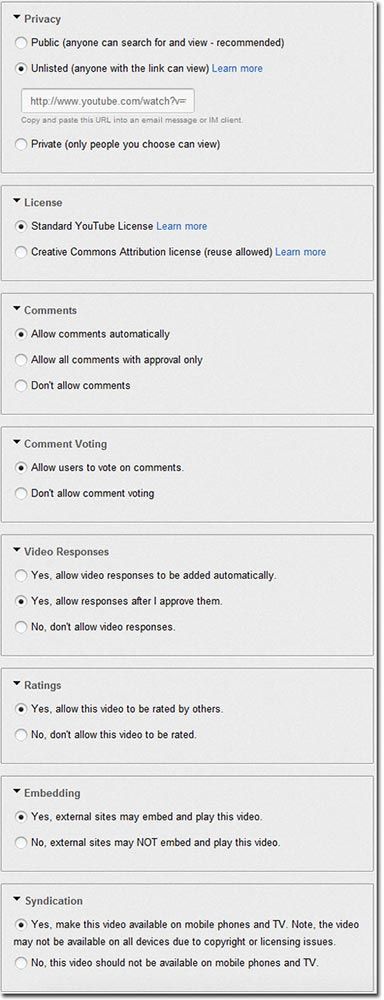

In general, we are “environment blind”. We don’t see social environments not because they are too far away but because they are too close. As a fish is the last to see water, or a bird the air, so we can’t see social environments. Yet if technology is to support civilization, it must specify its requirements. Computing can’t implement what it can’t specify. We live in social environments every day, but struggle to specify them (footnote 43), e.g. a shop-keeper swipes a credit card with a reading device designed to not store data like the credit card number or PIN. It is designed to the social requirement that shopkeepers don't steal from customers, even if they can. Without this, credit would collapse, and a social failure, or depression, can be worse than a natural disaster. In sum, credit card readers support social trust by design.

Likewise, if online systems take and sell customer data like home address and phone for advantage, users will lose trust, and either refuse to register at all, or register with fake data, like "123 MyStreet, MyTown, NJ" (Foreman and Whitworth, 2005). The key to online privacy is not storing data. To say it will never be revealed isn't good enough, as companies can be forced by governments or bribed by cash to reveal data. One can't be forced or bribed to give data one doesn't have. The best way to guarantee online trust is not to store unneeded information in the first place (footnote 44).
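The idea of not storing unneeded data can be sketched in a few lines of code. The following is only an illustration under stated assumptions: the field names, the `REQUIRED_FIELDS` set and the `minimize` function are hypothetical and do not describe any real registration system. It keeps the fields a service needs to operate and drops everything else before anything is saved.

```python
# Hypothetical sketch of data minimization. The field names and the
# REQUIRED_FIELDS set are assumptions made for illustration only.

# Fields this imaginary service actually needs in order to operate.
REQUIRED_FIELDS = frozenset({"username", "email"})


def minimize(submitted: dict) -> dict:
    """Return only the needed fields; anything else is never stored."""
    return {key: value for key, value in submitted.items() if key in REQUIRED_FIELDS}


if __name__ == "__main__":
    form = {
        "username": "alice",
        "email": "alice@example.org",
        "home_address": "123 MyStreet, MyTown, NJ",
        "phone": "555-0100",
    }
    record = minimize(form)
    # The address and phone number are absent from the record to be stored.
    print(sorted(record))
```

A system built this way has nothing to reveal about a user's address or phone number, whoever asks for it.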

24.3.3 The socio-technical gap

Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). See section "Exceptions" in the copyright terms below.

Figure 24.14: The socio-technical gap

Socio-technical design is the application of community requirements to people, software and hardware. Pure technical design gives a socio-technical gap (Figure 14) between what technology supports and what people want (Ackerman, 2000), e.g. designing email to let anyone message anyone without permission gave the spam problem. Filters help on a personal level, but transmitted spam as a system problem has never stopped growing. While inbox spam is constant, due to filters, transmitted spam grew from 20% to 40% in 2002-2003 (Weiss, 2003), to 60-70% in 2004 (Boutin, 2004), to between 86.2% and 86.7% of the 342 billion emails sent in 2006 (MAAWG, 2006; MessageLabs, 2006), to 87.7% in 2009 and 89.1% in 2010 (MessageLabs, 2010). A 2004 prediction that within a decade over 95% of all emails transmitted by the Internet will be spam is coming true (Whitworth and Whitworth, 2004).
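To see what these percentages mean for the network, one can convert a spam fraction into the number of spam messages carried for each legitimate one. The short sketch below does this arithmetic. The function name `spam_per_legitimate` is an assumption made for illustration, and where the text gives a range the upper figure is used.

```python
def spam_per_legitimate(spam_fraction: float) -> float:
    """Spam messages transmitted for each legitimate message."""
    if not 0 <= spam_fraction < 1:
        raise ValueError("spam_fraction must be at least 0 and less than 1")
    return spam_fraction / (1 - spam_fraction)


if __name__ == "__main__":
    # Spam fractions quoted in the text (upper figure where a range is given).
    quoted = [
        ("2003", 0.40),
        ("2004", 0.70),
        ("2006", 0.867),
        ("2010", 0.891),
        ("predicted", 0.95),
    ]
    for label, fraction in quoted:
        print(label, round(spam_per_legitimate(fraction), 1))
```

At the 2010 figure of 89.1%, the Internet carries roughly eight spam messages for every legitimate one, and at 95% it would carry about nineteen.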

Filters address spam as a user problem, but it is really a community problem. Transmitted spam uses Internet processing, bandwidth and storage whether users behind their filter walls see it or not. Only socio-technology can resolve social problems like spam, because in the "spam wars", technology helps both sides, e.g. image spam can bypass text filters, AI can solve captchas (footnote 45), botnets can harvest web site emails, and zombie sources can send emails. So spam isn't going away any time soon (Whitworth and Liu, 2009a).

Aliens visiting our planet might suppose our email system was built for machines, as most of the messages it transmits go from one computer (spammer) to another computer (filter), untouched by human eye. This result is not just bad luck. A communication technology isn't a Pandora's box, unknown until opened, because we built it. Spam happens when we build technologies instead of socio-technologies.

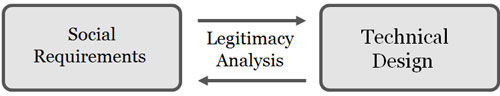

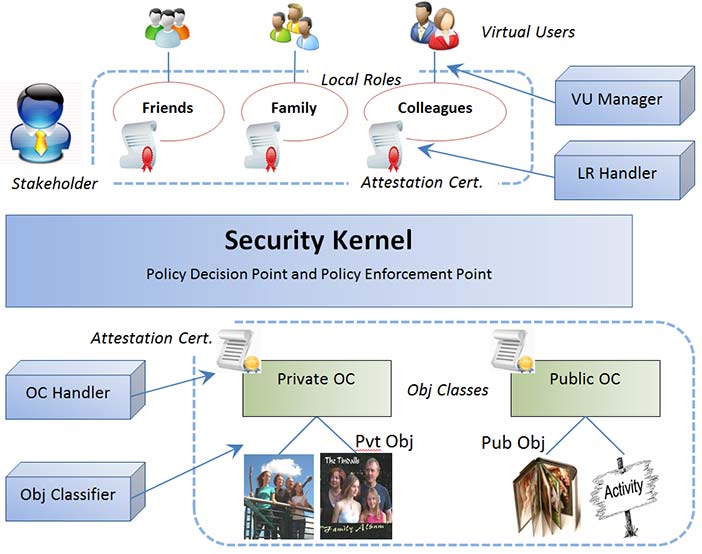

24.3.4 Legitimacy analysis

In politics, a legitimate government is seen as rightful by its citizens, i.e. accepted. In contrast, illegitimate governments need force of arms and propaganda to stay in power. By extension, legitimate interaction is accepted by the parties involved, who freely repeat it, e.g. fair trade. Legitimacy has been specified as: fairness and public good (Whitworth and de Moor, 2003). Physical and online citizens prefer legitimate communities because they perform better socially.

In physical society, legitimacy is maintained by laws, police and prisons, that punish criminals. Legitimacy is the human concept by which judges create new laws and juries decide on never before seen cases. The higher affects lower principle applies here: communities engender human ideas like fairness, which generate informational laws, that are used to govern physical interactions. Communities affect people to create rules to direct acts that benefit the community, i.e. higher level goals drive lower level operations to improve system performance. Doing so online, applying social principles to technical systems, is socio-technical design.

Conversely, over time laws get a "life of their own" and the tail wags the dog, e.g. copyright laws designed to encourage innovators are now just a tool to perpetuate corporate profit (Lessig, 1999) (footnote 46). Unless continuously "re-invented" at the human level, laws inevitably decay. Today's online society is a social evolution as well as a technical one. The social Internet is a move to community goals like service and freedom, so to reduce it to a hawker market place would be its devolution. So let the old ways of business, politics and academia be changed by the Internet, not the other way around.

One can't just “stretch” physical laws into cyberspace (Samuelson, 2003) because they often:

Don't transfer (Burk, 2001), e.g. what is online “trespass”?

Don't apply, e.g. what law applies to online "cookies" (Samuelson, 2003)?

Change too slowly, e.g. laws change in years but code changes in months.

Depend on code (Mitchell, 1995), e.g. anonymity means actors can't be identified.

Have no jurisdiction. U.S. law applies to U.S. soil but cyber-space isn't "in" America.