“The future is socio-technology not technology”

The future of computing depends on its relationship to humanity. This chapter contrasts the socio-technical and technical visions.

7.1 Technology Utopianism

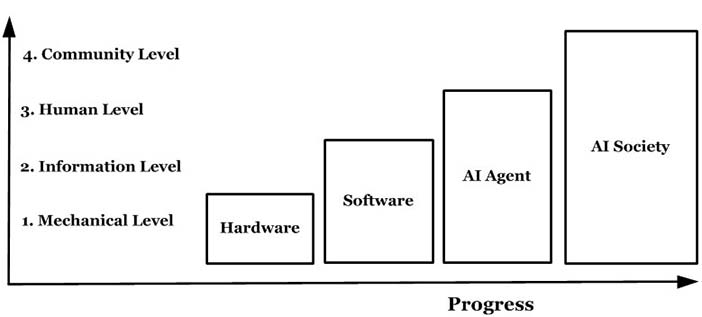

Technology utopianism is the view that technology will endlessly improve until it not only does what people do, but does it better. The idea that computer technology will define the future of humanity is popular in fiction; e.g. Rosie in The Jetsons, C-3PO in Star Wars and Data in Star Trek are robots that read, talk, walk, converse, think and feel. We do these things easily, so how hard could it be? In films, robots learn (Short Circuit), reproduce (Stargate's replicators), think (The Hitchhiker's Guide's Marvin), become self-aware (I, Robot) and eventually replace us (The Terminator, The Matrix). In this view, computers are an unstoppable evolutionary juggernaut that must eventually supersede us, even to the point of taking over the planet (Figure 7.1).

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 7.1: Technological utopianism

Yet cartoon fiction (Figure 7.2a) and movie fiction (Figure 7.2b) are not fact. Today's actual housework robot is the Roomba (Figure 7.2c). It picks up dirt but has problems like turning in repeating circles, software paralysis, thread-clog build-up and getting stuck under the couch. If you wash it in water, it dies, but make no mistake, this is a clever robot. Yet it needs help to survive in a house with perfectly flat terrain, no enemies, and free food (footnote 1). Drop it outside, or even just stop tending it, and it dies out, i.e. insects do better. Right now, computers could not even conquer a planet of cockroaches, let alone people.

Courtesy of Fleischer Brothers production. Copyright: pd (Public Domain (information that is common property and contains no original authorship)). Figure 7.2.A: A Cartoon Robot in Superman

Courtesy of Brayon Michael Pieske. Copyright: pd (Public Domain (information that is common property and contains no original authorship)). Figure 7.2.B: A Movie Robot in Star Wars.

Courtesy of Larry D. Moore. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 7.2.C: An Actual Robot (iRobot Roomba version)

(footnote 2) (footnote 3) (footnote 4)

Compare people and robots on the tasks we do well, like conversation. People chat easily but the computers next to us are strangely silent. The technology to convert text to speech is well established, so why don't cars, for example, talk to us, like KITT in Knight Rider? The reason is that computer conversations are truly embarrassing, e.g. this Cleverbot (CB) conversation, which was run through twice, with the two replies to each question separated by "or" (footnote 5):

Me: If you become me, am I you?

CB: I believe in you babe, or What if I don't believe they are?

Me: If you are not you, who are you?

CB: I am not a vampire, or I'm you.

Me: How do you know?

CB: Because I'm a girl, or Because you know I know I know you know me.

Me: But girls can be vampires.

CB: Yes they can, or No they can't.

Me: So could you be a vampire girl?

CB: No. I am not, or A girl.

Me: Could you become a vampire girl?

CB: Why? or No, could you become a girl?

Me: If you were bitten by a vampire would you be a vampire girl?

CB: Who have you told that I am Jesus? or I'd buy an X-wing, because they're awesome.

Me: So you could become a vampire girl?

CB: I doubt it. But I know it could be true, or I could be a lot of things.

Me: That contradicts what you said before.

CB: I don't think it does, or You are contradicting yourself.

Me: You could be not you and a vampire.

CB: No, I'm just a rabbit, or Girl vampire.

Me: Could you be not you as a vampire girl?

CB: No, or I could probably be a giraffe, if science were able to make me so.

Me: You just said you could be a vampire girl which is not you.

CB: I never said I was a boy, or I never said I was a girl.

This painful conversation has no end. It is not hard to see the programmers faking conversation, i.e. it fails the Turing test.

Spatial coordination is no better, as a comparison of the Robot Soccer World Cup and the human Soccer World Cup reveals a vast disparity. Artificial Intelligence (AI) advocates say robots will surpass people in forty years, but they said the same forty years ago.

In vision, computers struggle even with retinal activity, let alone cortical activity:

“Computers are no real competition for the human brain in areas such as vision, hearing, pattern recognition and learning. ... And when it comes to operational efficiency there is no contest at all. A typical room-size supercomputer weighs roughly 1,000 times more, occupies 10,000 times more space and consumes a millionfold more power ...”

-- Boahen, 2005

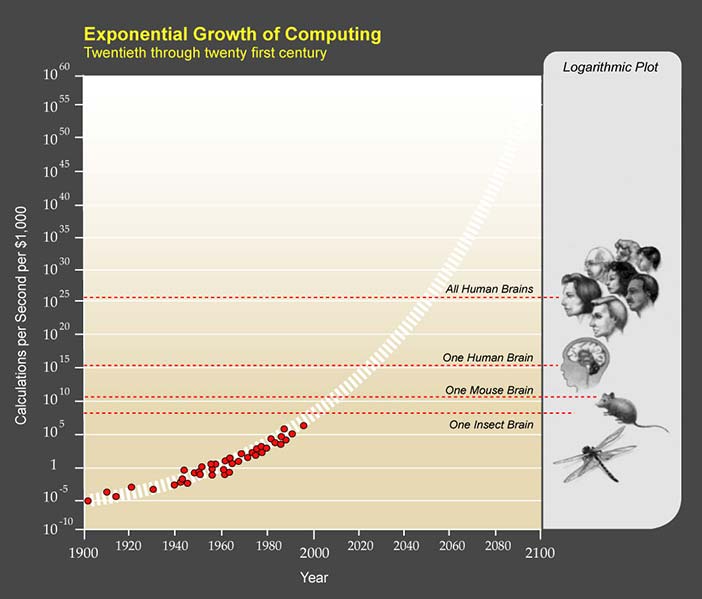

Courtesy of Ray Kurzweil and Kurzweil Technologies, Inc.. Copyright: CC-Att-SA-1 (Creative Commons Attribution-ShareAlike 1.0 Unported). Figure 7.3: The exponential growth of simple processing power

Clearly, talking, walking and seeing are not as easy as our brain makes them seem.

Technology utopians use Moore's law, that computer power doubles every eighteen months (footnote 6), to predict a future singularity at about 2050, when computers go beyond us. Graphing simple processing power by time suggests when computers will replace people (Kurzweil, 1999) (Figure 7.3). In theory, when computers have as many transistors as the brain has neurons, they will process as well as the brain, but the assumption is that brains process as computers do, which they do not.
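As a rough illustration of the extrapolation behind such graphs, the sketch below compounds an eighteen-month doubling period over a span of years. The function name and the sample spans are assumptions chosen for illustration and are not taken from Kurzweil's chart. The calculation only shows how quickly simple processing power grows under the stated law; it says nothing about whether that processing resembles what brains do.

```python
def growth_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Factor by which simple processing power grows if it doubles
    every `doubling_period_years` (eighteen months by default)."""
    return 2.0 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical time spans, in years, chosen only to show the trend.
    for span in (1.5, 15, 50):
        print(f"{span:>5} years -> x{growth_factor(span):,.0f}")
```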

In Figure 7.3, computers theoretically processed as an insect in 2000, and as a mouse in 2010; they will process as a human in 2025 and beyond all humans in 2050. So why, right now, are they unable to do even what ants do, with their neuron sliver? How can computers get to human conversation, pattern recognition and learning in a decade if they are currently not even at the insect level? The error is to assume that all processing is simple processing, i.e. calculating, but calculating cannot cross the 99% performance barrier.

7.2 The 99% Performance Barrier

Computers calculate better than us, just as cars travel faster and cranes lift more, but calculating is not what brains do. Simple tasks are solved by simple processing, but tasks like vision, hearing, thinking and conversing are not simple (footnote 7). Such tasks are productive, i.e. the demands increase geometrically with element number, not linearly (footnote 8).
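To make the contrast between linear and geometric demand concrete, the sketch below compares the two. It assumes a hypothetical task in which each of n positions can hold any of k elements, so the number of possible wholes is k to the power n. The function names and the values of k and n are illustrative only.

```python
def linear_demand(n_elements: int, cost_per_element: int = 1) -> int:
    """Work that grows in step with the number of elements."""
    return n_elements * cost_per_element


def geometric_demand(n_positions: int, choices_per_position: int) -> int:
    """Number of distinct wholes when each position can hold any choice."""
    return choices_per_position ** n_positions


if __name__ == "__main__":
    k = 10  # hypothetical number of choices at each position
    for n in (1, 5, 10, 20):
        print(n, linear_demand(n), geometric_demand(n, k))
```

Doubling the number of elements doubles the linear demand, but it squares the geometric one, which is why adding raw calculating power makes little headway on such tasks.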

For example, the productivity of language is that five-year-olds know more sentences than they could learn in a lifetime at one per second (Chomsky, 2006). Children easily see that a Letraset page (Figure 7.4) is all 'A's, but computers struggle with such productive variation. As David Marr observed (Marr, 1982), using pixel level processing for pattern recognition is:

“like trying to understand bird flight by studying only feathers. It just cannot be done”

Simple processing, at the part level, cannot manage productive task wholes – the variation is just too much. AI experts who looked beyond the hype knew decades ago that productive tasks like language would not be solved anytime soon (Copeland, 1993).

Copyright: pd (Public Domain (information that is common property and contains no original authorship)). Figure 7.4: Letraset page for letter 'A'

The bottom line for a simple calculation approach is the 99% performance barrier, where the last performance percentage can take more effort than all the rest together, e.g. 99% accurate computer voice recognition is one error per 100 words, which is unacceptable in conversation. For computer auto-drive cars, 99% accuracy is an accident a day! In the 2005 DARPA Grand Challenge, only five of 23 robot vehicles finished a simple course (Miller et al., 2006).

In 2007, six of eleven better-funded vehicles finished an urban track with a top average speed of 14 mph. By comparison, skilled people drive for decades on bad roads, in worse weather, in heavier traffic, much faster, and with no accidents (footnote 9). The best robot cars are not even in the same league as most ordinary human drivers.

Nor is the gap closing, because the 99% barrier cannot be crossed by any simple calculation, whatever the power. The reason is simply that the options are too many to enumerate. Calculating power, by definition, cannot solve incalculable tasks. Productive tasks can only be solved by productive processing, whether the processor is a brain or a super computer.
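The arithmetic behind the 99% figure can be sketched as follows. The code assumes that each word, or each driving decision, is an independent trial with the same accuracy, which is a simplification, and the function names are illustrative.

```python
def expected_errors(accuracy: float, n_trials: int) -> float:
    """Expected number of errors over n independent trials."""
    return (1.0 - accuracy) * n_trials


def chance_error_free(accuracy: float, n_trials: int) -> float:
    """Probability that all n independent trials succeed."""
    return accuracy ** n_trials


if __name__ == "__main__":
    # Compare 99% accuracy with two hypothetical higher accuracies.
    for acc in (0.99, 0.999, 0.9999):
        print(acc,
              round(expected_errors(acc, 100), 2),
              round(chance_error_free(acc, 100), 3))
```

Under these assumptions, a 100-word exchange at 99% accuracy averages one error and is error-free only about 37% of the time.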

(footnote 10)  Courtesy of Dmadeo. Copyright: CC-Att-SA-3 (Creative Commons Attribution-ShareAlike 3.0). Figure 7.5.A: Kim Peek inspired the film Rain Man.

Copyright © MGM. All Rights Reserved. Used without permission under the Fair Use Doctrine (as permission could not be obtained). See the "Exceptions" section (and subsection "allRightsReserved-UsedWithoutPermission") on the page copyright notice. Figure 7.5.B: Dustin Hoffman in the role of Rain Man. (footnote 11)

Our brain in its evolution already discovered this and developed beyond simple processing. In savant syndrome, people who can calculate 20-digit prime numbers in their head still need full-time care to live in society; e.g. Kim Peek (Figure 7.5a), who inspired the movie Rain Man (Figure 7.5b), could recall every word on every page of over 9,000 books, including all of Shakespeare and the Bible, but had to be cared for by his father. He was a calculation genius, but as a human was neurologically disabled because the higher parts of his brain did not develop.

Savant syndrome is our brain working without its higher parts. That it calculates better means that it already tried simple processing in the past, and moved on. We become like computers if we regress. The brain is not a computer because it is a different kind of processor.

7.3 A Different Kind of Processor

To be clear: the brain is an information processor. Its estimated hundred billion neurons are biological on/off devices, no different on the information level from the transistors of a computer. Both neurons and transistors are powered by electricity and both allow logic gates (McCulloch & Pitts, 1943).
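As a minimal sketch of how on/off units can form logic gates, the code below uses a simple threshold unit in the spirit of McCulloch and Pitts. It is a simplification: inhibition is modelled here with a negative weight rather than the absolute inhibition of the 1943 paper, and the function names, weights and thresholds are illustrative choices.

```python
from typing import Sequence


def threshold_unit(inputs: Sequence[int], weights: Sequence[int], threshold: int) -> int:
    """Fire (1) if the weighted sum of binary inputs reaches the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def and_gate(a: int, b: int) -> int:
    # Both inputs must be on to reach a threshold of 2.
    return threshold_unit([a, b], [1, 1], 2)


def or_gate(a: int, b: int) -> int:
    # Either input alone reaches a threshold of 1.
    return threshold_unit([a, b], [1, 1], 1)


def not_gate(a: int) -> int:
    # An inhibitory (negative) weight keeps the unit below threshold when a is on.
    return threshold_unit([a], [-1], 0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, and_gate(a, b), or_gate(a, b))
    print(not_gate(0), not_gate(1))
```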

So how did the brain solve the "incalculable" productive tasks of language, vision and movement? A hundred billion neurons per head is more than the people in the world, about as many as the stars in our galaxy, or galaxies in our universe, but the solution was not just to increase the number of system parts.

If the brain's processing did depend only on neuron numbers, then computers would indeed soon overtake its performance, but it does not. As explained, general system performance is not just the parts, but also how they connect, i.e. the system architecture. The processing power of the brain depends not only on the number of neurons but also on the interconnections, as each neuron can connect to up to ten thousand others. The geometric combination of neurons then matches the geometric demands of productive tasks.

The architecture of almost all computers today was set by von Neumann, who made certain assumptions to ensure success:

Centralized Control: Central processing unit (CPU) direction.

Sequential Input: Input channels are processed in sequence.

Exclusive Output: Output resources are locked for one use.

Location Based Storage: Access by memory address.

Input Driven Initiation: Processing is initiated by input.

No Recursion: The system does not process itself.

In contrast, the brain's design is not like this at all, e.g. it has no CPU (Sperry & Gazzaniga, 1967). It crossed the 99% barrier using the processing design risks that von Neumann avoided, namely:

Decentralized Control: The brain has no centre, or CPU.

Massively Parallel Input: The optic nerve has a million fibres while a computer parallel port has 25.

Overlaid Output: Primitive and advanced sub-systems compete for output control, giving the benefits of both.

Connection Based Storage: As in neural nets.

Process Driven Initiation: People hypothesize and predict.

Self-processing: The system changes itself, i.e. learns.
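The contrast between location based and connection based storage can be illustrated with a small sketch. The first function retrieves a pattern by its address in a list; the others store the same patterns in the connection weights of a tiny Hopfield-style network and retrieve one from a damaged cue. This is an illustrative toy under assumed patterns and names, not a model of the brain or of any particular neural net library.

```python
from typing import List

Pattern = List[int]  # each element is +1 or -1

# Two hypothetical 8-unit patterns, chosen to be orthogonal.
PATTERNS: List[Pattern] = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]


def recall_by_address(memory: List[Pattern], address: int) -> Pattern:
    """Location based storage: the caller must know where the item lives."""
    return memory[address]


def train_weights(patterns: List[Pattern]) -> List[List[int]]:
    """Connection based storage: Hebbian sum of outer products, no self-links."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights


def recall_by_content(weights: List[List[int]], cue: Pattern, steps: int = 5) -> Pattern:
    """Update every unit from its connections until the state stops changing."""
    state = list(cue)
    for _ in range(steps):
        new_state = [
            1 if sum(w * s for w, s in zip(row, state)) >= 0 else -1
            for row in weights
        ]
        if new_state == state:
            break
        state = new_state
    return state


if __name__ == "__main__":
    net = train_weights(PATTERNS)
    damaged = [-1, 1, 1, 1, -1, -1, -1, -1]  # first pattern with one unit flipped
    print(recall_by_address(PATTERNS, 0))
    print(recall_by_content(net, damaged))
```

In the address-based case nothing can be retrieved without the right index, whereas the connection-based memory restores the whole pattern from a partly wrong cue, because the pattern is spread across all the links rather than held in one place.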

Rather than an inferior biological version of silicon computers, the brain is a different kind of processor, designed to respond in real time to ambiguous and incomplete information, with both fast and slow responses, in conditions that alter over time while referencing concepts like "self", "other" and "us".

Computer science avoided the processing of processing as it gave infinite loops, but the brain embraced recursion. It is layer upon layer of link upon link, which currently beggars the specification of mathematical or computer models. Yet it is precisely recursion that allows symbolism - linking one brain neural assembly (a symbol) to another (a perception). Symbolism in turn was the basis of meaning. It was by processing its own processing that the brain acquired language, mathematics and philosophy.

Yet one can make "super computers" by linking many PC video cards in parallel. If more of the same is better computing, then bigger oxen are better farming! How can AI surpass HI (Human Intelligence) if it is not even going in the same direction?

That computers will soon do what people do is a big lie, a statement so ludicrous that it is taken as true (footnote 12). Computers are electronic savants, calculation wizards that need minders to survive in any real world situation. If today's computers excel at the sort of calculating the brain outgrew millions of years ago, how are they the future? For computing to attempt human specialties by doing more of the same is not smart but dumb. If today's super-computers are not even in the same processing league as the brain (footnote 13), technology utopians are computing traditionalists posing as futurists.

The key to the productivity problem was not more processing but the processing of processing. By this risky step, the brain constructs a self, others and a community, the same constructs that both human and computer savants struggle with. So why tie up twenty-million-dollar super-computers to do what brains with millions of years of real life beta-testing already do? Even if we made computers work like the brain, say as neural nets, who is to say they would not inherit the same weaknesses? Why not change the computing goal, from human replacement to human assistant?

7.4 The Socio-technical Vision

In the socio-technical vision, humanity operates at a level above information technology. Meaning derives from information but it is not information. Meaning relates to information as software relates to hardware. So IT trying to do what people do is like trying to write an Android application by setting physical bit values on and off: it is not feasible even though it is not impossible. Hence computers will be lucky to do what the brain does in a thousand years, let alone forty. The question facing IT is not when it will replace people, but when it will be seen for what it is: just information level processing.

While the hype of smart computing takes centre stage, the real future of computing is quietly happening. Driverless cars are still a dream but reactive cruise control, range sensing and assisted parallel parking are already here (Miller et al., 2006). Computer surgery still struggles but computer supported remote surgery and computer assisted surgery are here today. Robots are still clumsy but people with robot limbs are already better than able. Computer piloted drones are a liability but remotely piloted drones are an asset. Computer generated animations are good, but state-of-the-art animations like Gollum in Lord of the Rings, or the Avatar animations, are human actors plus computers. Even chess players advised by computers are better than computers alone (footnote 14). The future of computing is happening while we are looking elsewhere.

All the killer applications of the last decade, from email to Facebook, were people doing what they do best and technology doing what it does best, e.g. in email, people create meaning and the software transmits information. It is only when computers try to manage meaning that things go wrong. It is because meaning is not information that people should supervise computers and computers should not control people.

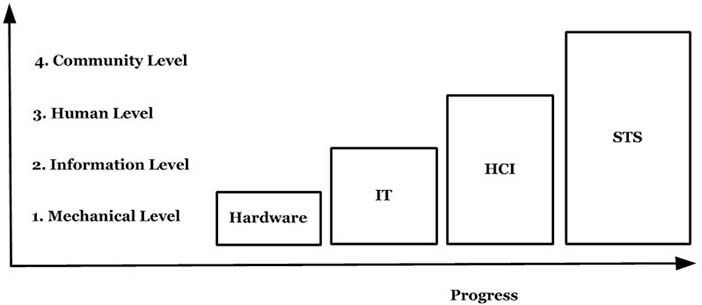

Courtesy of Brian Whitworth and Adnan Ahmad. Copyright: CC-Att-ND-3 (Creative Commons Attribution-NoDerivs 3.0 Unported). Figure 7.6: The socio-technical vision

When computing becomes background, as in pervasive computing theory, it will work with people as it should. Socio-technical design builds technology to social requirements because higher levels directing lower ones improves performance (Figure 7.6). If people direct technology it may go wrong, but if technology directs people it will go wrong. Higher requirements directing lower designs is an evolution but the reverse is not. To look for progress in lower levels of operation because they are more obvious makes no sense (footnote 15).

To see the Internet as just hardware and software is to underestimate it. Computing is more than that. Technology alone, without a human context, is less than useless — it is pointless. To say "technology is the future" is to pass the future to the mindless and heartless. The future is socio-technology not technology.

All that we know, think or feel, right or wrong, is now online, for everyone to see. Thoughts previously hidden in minds are now visible to all. This is good and bad because thoughts cause deeds as guns fire bullets, so what happens online connects directly to what happens offline, physically. The ideas that precede acts now battle for the hearts and minds of people on the Internet. Virtuality has become reality because although the medium is electronic, the people are real.

Some say the Internet is making us stupid, but it is not (footnote 16). Technology is just a glass that reflects, whether as a telescope or a microscope. Online computer media show us our ignorance, but they do not create it. We must take the credit for that. The Internet is just a giant mirror, reflecting humanity. It is showing us to us.

What we see in the electronic mirror is not always pretty, but it is what we are, and the same mirror also shows selfless service, empathy and understanding. As has been said, you cannot get out of jail unless you know you are in jail, so humanity cannot change itself until it sees itself. The Internet then, with all its faults, is a necessary step forward in our social evolution. In this view, computing is an integral part of a social evolution that began over four thousand years ago, with the first cities. Now, as then, the future belongs to us, not to the technology. The information technology revolution is leading to new social forms, just as the industrial technology revolution did. Let us all help it succeed.

7.5 Discussion Questions

The following questions are designed to encourage thinking on the chapter and exploring socio-technical cases from the Internet. If you are reading this chapter in a class, whether at a university or in a commercial setting, the questions might be discussed in class first, and then students can choose questions to research in pairs and report back to the next class.

What is technology utopianism? Give examples from movies. What is the technology singularity? In this view, computers must take over from people. Why may this not be true?

List some technology advances that people last century expected by the year 2000. Which ones are still to come? What do people expect robots to be doing by 2050? What is realistic? How do robot achievements like the Sony dog rank? How might socio-technical design improve the Sony dog? How does the socio-technical paradigm see robots evolving? Give examples.

If super-computers achieve the processing power of one human brain, then are many brains together more intelligent than one? Review the "Madness of Crowds" theory, that people are dumber rather than smarter when together, with examples. Why doesn't adding more programmers to a project always finish it quicker? What, in general, affects whether parts perform better together? Explain why a super computer with as many transistors as the brain has neurons is not necessarily its processing equal.

How do today's super computers increase processing power? List the processor cores of the top ten. Which use NVidia PC graphic board cores? How is this power utilized in real computing tasks? How do processor cores operating in sequence or in parallel affect performance? How is that decided in practice?

Review the current state-of-the-art for automated vehicles, whether car, plane, train, etc. Are any fully "pilotless" vehicles currently in use? What about remotely piloted vehicles? When does full computer control work? When doesn't it? (hint: consider active help systems). Give a case when full computer control of a car would be useful. Suggest how computer control of vehicles will evolve, with examples.

What is the 99% performance barrier? Why is the last 1% of accuracy a problem for productive tasks? Give examples from language, logic, art, music, poetry, driving and one other. How common are such tasks in the world? How does the brain handle them?

What is a human savant? Give examples past and present. What tasks do savants do easily? Can they compete with modern computers? What tasks do savants find hard? What is the difference between the tasks they can and cannot do? Why do savants need support? If computers are like savants, what support do they need?

Find three examples of software that, like Mr. Clippy, thinks it knows best. Give examples of software that: 1. Acts without asking, 2. Nags, 3. Changes things secretly, 4. Causes you work.

Think of a personal conflict you would like advice on. Keep it simple and clear. Now try these three options. In each case ask the question the same way:

Go to your bedroom alone and put a photo of a family member you like on a pillow. Explain and ask the question out loud, then imagine their response.

Go to an online computer like http://cleverbot.com/ and do the same.

Ring an anonymous help line and do the same.

Compare and contrast the results. Which was the most helpful?

A rational way to decide is to list all the options, assess each one and pick the best. How many options are there for these contests: 1. Checkers, 2. Chess, 3. Civilization (a strategy game), 4. A MMORPG, 5. A debate. Which ones are computers good at? What do people do if they cannot calculate all the options? Can a program do this? How do online gamers rate human and AI opponents? Why? Will this always be so?

What is the difference between syntax and semantics in language? What are programs good at in language? Look at online text-to-speech systems and translators. How successful are they? Are computers doing what people do? At what level is the translating occurring? Are they semantic level transformations? Discuss John Searle's Chinese room thought experiment.